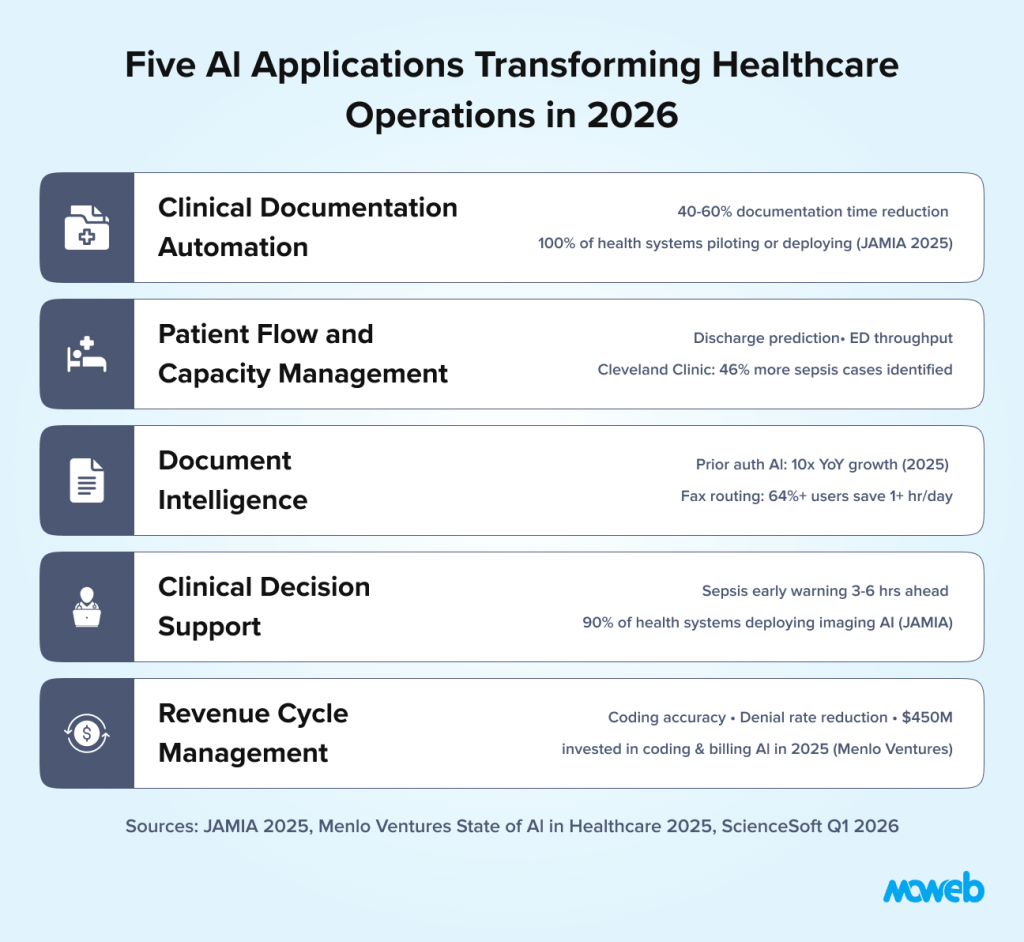

What is AI used for in healthcare operations? AI in healthcare operations is applied across five primary areas: clinical documentation automation (using ambient AI to capture and draft clinical notes during patient encounters, reducing documentation time by 40–60%), patient flow and capacity management (predicting admissions, discharges, and bed demand to reduce boarding and improve throughput), document intelligence (extracting, classifying, and routing clinical documents including referrals, prior authorisations, and discharge summaries), clinical decision support (surfacing relevant clinical evidence, drug interaction alerts, and care gap flags at the point of care), and revenue cycle management (automating coding, billing, and prior authorisation workflows). Healthcare AI delivers an average $3.20 return for every $1 invested within 14 months, according to peer-reviewed analysis published by PMC/NIH.

Why is AI adoption in healthcare operations accelerating in 2026? Three compounding pressures are forcing healthcare organisations to move from AI experimentation to production deployment in 2026: clinician burnout driven by administrative overload (physicians work an average 57.8 hours per week, with only 27.2 hours on direct patient care and the rest consumed by documentation, order entry, and administrative tasks), workforce shortages that make staffing-intensive manual workflows unsustainable at scale, and financial pressure on health systems that must find operational efficiency without reducing care quality. Healthcare AI spending nearly tripled to $1.4 billion in 2025 (Menlo Ventures), and 100% of health systems surveyed by JAMIA have begun piloting or deploying ambient AI, the first use case to reach universal adoption activity across surveyed health systems.

Healthcare has an administrative problem that is also a clinical problem.

Research examining physician workflow and time allocation found that documentation and administrative work consume nearly twice as much time as direct patient care. AMA survey data shows physicians work an average 57.8 hours per week, with only 27.2 hours on direct patient care and 13 hours per week on indirect care tasks such as documentation and order entry alone. Clinicians spend a substantial fraction of their working day on tasks that are not direct patient interaction: writing notes, completing prior authorisation requests, reviewing and routing documents, entering data into EHR systems, and managing the compliance and coding requirements that follow every patient encounter.

The downstream consequences are measurable. Burnout rates among clinicians are at record highs. Staff turnover is driving up labour costs and care continuity gaps. Hospital throughput is constrained not by clinical capacity but by administrative bottlenecks. Revenue cycle errors from manual coding leave hundreds of millions in reclaimable revenue uncollected across the system annually.

AI does not solve all of these problems. But it directly addresses the administrative burden component, which is both the largest driver of clinician dissatisfaction and the most tractable operational challenge for technology. Healthcare AI delivers an average $3.20 return for every $1 invested within 14 months, based on peer-reviewed analysis published by PMC/NIH. Healthcare AI spending nearly tripled to $1.4 billion in 2025, according to Menlo Ventures’ State of AI in Healthcare report, minting eight healthcare AI unicorns and signalling that the market has moved from exploration to structural investment. The investment case is compelling. The implementation challenge is navigating the compliance, clinical governance, and workflow integration requirements that make healthcare AI materially more complex to deploy than AI in less regulated sectors.

This guide covers the five operational AI applications delivering measurable value in healthcare settings in 2026, the regulatory framework that governs them, and a realistic implementation approach for health systems and healthcare organisations that want to move from pilot to production.

Why Healthcare AI Requires a Different Implementation Approach

Healthcare AI shares many technical foundations with enterprise AI in other sectors (RAG systems, document intelligence, predictive ML models), but the deployment context is fundamentally different in three ways that shape every implementation decision.

Clinical safety is the primary design constraint. An AI system that produces an incorrect answer in a knowledge management application causes a productivity problem. An AI system that produces a clinically incorrect output (a wrong drug interaction alert, a missed care gap flag, a mislabelled document) can cause patient harm. Every healthcare AI system must be designed with the clinical failure mode as the primary concern, not a secondary consideration. This means human-in-the-loop design is not optional; it is a care quality requirement.

HIPAA creates specific data handling obligations. Any AI system that processes, stores, or analyses protected health information (PHI) requires a Business Associate Agreement (BAA) with every vendor in the data pathway, encryption at rest and in transit, minimum necessary data handling, access controls documented to HIPAA Security Rule standards, and audit logging of all PHI access. These are not checkbox requirements; they define the entire data architecture of a healthcare AI system. An AI vendor who cannot sign a BAA or who cannot document their data handling against HIPAA standards cannot handle your patient data.
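As a rough illustration of how minimum necessary access and audit logging shape the data layer, the sketch below gates every PHI read behind a role check and records each attempt, allowed or not. It is a minimal sketch under stated assumptions, not a compliance implementation: the role names, the field lists, and the `read_phi` and `AuditLog` names are hypothetical, and a production system would write to tamper-evident storage rather than an in-memory list.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role-to-field mapping illustrating "minimum necessary" access.
ALLOWED_FIELDS = {
    "scheduler": {"patient_id", "name", "appointment_time"},
    "coder": {"patient_id", "encounter_note", "diagnosis_codes"},
}


@dataclass
class AuditLog:
    """In-memory stand-in for an append-only audit store."""
    entries: list = field(default_factory=list)

    def record(self, user, role, patient_id, fields, allowed):
        self.entries.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "patient_id": patient_id,
            "fields": sorted(fields),
            "allowed": allowed,
        })


def read_phi(record, user, role, requested, log):
    """Return only the requested fields, and only if the role permits all of them.

    Every attempt is logged before the decision is enforced, so denied
    requests also appear in the audit trail.
    """
    permitted = ALLOWED_FIELDS.get(role, set())
    denied = set(requested) - permitted
    allowed = not denied
    log.record(user, role, record["patient_id"], requested, allowed)
    if not allowed:
        raise PermissionError(f"role {role!r} may not read: {sorted(denied)}")
    return {key: record[key] for key in requested}
```

The design choice worth noting is that logging happens before enforcement: an audit trail that only records successful reads cannot show who attempted access they were not entitled to.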

Before starting any healthcare AI programme, a structured AI readiness assessment that includes a HIPAA and governance readiness dimension is essential.

FDA oversight applies to clinical AI. AI systems that analyse medical data and produce outputs that influence clinical decisions may qualify as Software as a Medical Device (SaMD) under FDA guidance. SaMD faces pre-market notification or clearance requirements, post-market surveillance obligations, and performance monitoring standards that are materially more demanding than general enterprise software deployment. The FDA’s evolving guidance on AI/ML-based SaMD is the regulatory reference for any AI system whose outputs directly influence diagnosis, treatment, or clinical decision-making. In December 2025, HHS launched a department-wide Request for Information on AI to coordinate its approach to clinical AI adoption, signalling that federal regulatory attention to healthcare AI is intensifying in 2026.

These three constraints do not make healthcare AI impossible. They make the design decisions more consequential and the compliance architecture a prerequisite, not an afterthought. The organisations deploying healthcare AI successfully in 2026 are those that resolved these questions before development started.

Use Case 1: Clinical Documentation Automation

Clinical documentation is the single highest-impact AI application for clinician satisfaction and operational efficiency. It is also the area where AI capability has advanced most dramatically in the past 18 months and the first use case to reach universal adoption activity across all health systems surveyed by JAMIA in 2025.

The core problem is well documented. Physicians spend an estimated 2 hours on documentation for every hour of direct patient care. EHR documentation requirements have expanded continuously as billing, compliance, and quality reporting demands have grown, without a proportional investment in reducing the documentation burden on clinicians. The result is that highly trained clinical professionals are spending a substantial fraction of their working time on data entry.

Ambient clinical documentation AI (also called ambient AI or AI scribes) addresses this by listening to the patient-clinician encounter (with patient consent), understanding the clinical content of the conversation, and producing a draft clinical note structured to the documentation requirements of the relevant encounter type. The clinician reviews and approves the draft rather than generating it from scratch.

The productivity improvement from well-implemented ambient documentation AI is substantial. Documentation time reductions of 40–60% are consistently reported in clinical settings. Houston Methodist’s system-wide ambient AI rollout in early 2026 reported a 40% reduction in documentation time, a 27% increase in time spent with patients, and a 33% reduction in after-hours clinical work. Mass General Brigham saw a 40% relative drop in self-reported burnout during an AI scribe pilot. Critically, the time reclaimed is not just administrative efficiency; it is time that returns to direct patient care or to the work-life balance that affects clinician retention.

Major EHR vendors (Epic, Oracle Health, athenahealth) have embedded ambient AI natively into their platforms, shifting the market from third-party bolt-on tools toward deeply integrated, system-wide solutions. AI-generated progress notes are now accepted by CMS and major insurance providers for billing purposes. The technology maturity for ambient documentation AI has reached a point where the primary implementation challenge is workflow integration and change management, not the AI capability itself. Startups like Abridge (which raised $250 million in 2025 and reached 100+ US health systems) and Ambience have captured nearly 70% of new ambient AI market share despite EHR incumbents’ scale advantage, demonstrating that performance differentiation remains significant.

The compliance consideration for documentation AI is consent and PHI handling. The conversation between a patient and their clinician is PHI. The AI system processing that conversation must do so under a BAA, with documented data minimisation practices and a clear data retention and deletion policy. Patient consent for ambient recording must be obtained and documented before any session is captured.
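The consent requirement can be enforced in software as a hard gate in front of the recording step. The sketch below assumes a hypothetical `ConsentRegistry` that stores one timestamped consent record per patient encounter, and a hypothetical `start_capture` function that refuses to begin when no granted consent is on file. Both names are illustrative and do not come from any specific ambient AI product.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ConsentRecord:
    patient_id: str
    encounter_id: str
    granted: bool
    recorded_at: str  # ISO 8601 timestamp documenting when consent was captured


class ConsentRegistry:
    """Hypothetical store of documented consent, keyed by patient and encounter."""

    def __init__(self):
        self._records = {}

    def document(self, patient_id, encounter_id, granted):
        record = ConsentRecord(
            patient_id,
            encounter_id,
            granted,
            datetime.now(timezone.utc).isoformat(),
        )
        self._records[(patient_id, encounter_id)] = record
        return record

    def has_consent(self, patient_id, encounter_id):
        record = self._records.get((patient_id, encounter_id))
        return record is not None and record.granted


def start_capture(registry, patient_id, encounter_id):
    """Begin an ambient session only when consent is documented for this encounter."""
    if not registry.has_consent(patient_id, encounter_id):
        raise PermissionError("no documented consent for this encounter")
    return {"encounter_id": encounter_id, "status": "recording"}
```

Keying consent to the encounter rather than to the patient alone means a refusal or a missing record on one visit cannot be overridden by consent given on an earlier one.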

Use Case 2: Patient Flow and Capacity Management

Patient flow optimisation is the operational AI use case with the most direct impact on hospital financial performance and care access. Bed shortages, emergency department boarding, and operating room scheduling inefficiency are not primarily staffing problems; they are prediction and coordination problems that AI is well positioned to address.

The core application is demand forecasting for inpatient capacity: predicting admissions volumes by day, service line, and acuity level, so that bed management, staffing, and discharge planning can be proactively coordinated rather than reactively managed.

Traditional capacity management relies on historical averages and planner judgment. The limitation is that healthcare demand is influenced by variables that averages cannot capture: seasonal respiratory illness patterns, local public health events, care pathway changes that shift admission rates, and the lagged effects of outpatient referral volumes on inpatient demand. AI models integrating real-time signals from these sources produce significantly more accurate short-term forecasts than historical averaging.
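Any claim that a model beats historical averaging needs a baseline to measure against. The sketch below builds the simplest such baseline, a per-weekday historical average of admissions, and scores a forecast against actual volumes using mean absolute error. The function names and the data layout are assumptions made for illustration; a candidate model's forecast can be passed to the same scoring function for a like-for-like comparison.

```python
from collections import defaultdict
from statistics import mean


def weekday_average_forecast(history):
    """Build a historical-average baseline.

    history: list of (weekday, admissions) pairs, weekday 0=Monday..6=Sunday.
    Returns a dict mapping weekday -> mean admissions seen on that weekday.
    """
    by_day = defaultdict(list)
    for weekday, admissions in history:
        by_day[weekday].append(admissions)
    return {day: mean(values) for day, values in by_day.items()}


def mean_absolute_error(forecast, actuals):
    """Score a weekday -> volume forecast against (weekday, actual) pairs."""
    errors = [abs(forecast[day] - actual) for day, actual in actuals]
    return mean(errors)
```

For example, with illustrative (made-up) counts, `weekday_average_forecast([(0, 40), (0, 44), (1, 30), (1, 34)])` yields 42 for Monday and 32 for Tuesday, and scoring that against actuals of 50 and 30 gives a mean absolute error of 5 admissions per day.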

The practical applications of improved capacity forecasting include:

Discharge prediction: Identifying patients likely to be ready for discharge in the next 24 to 48 hours, enabling proactive discharge planning coordination between clinical teams, social work, and post-acute care providers. Reducing the average length of stay even by a fraction of a day has significant financial impact in a health system with hundreds of inpatient beds.

ED throughput optimisation: Predicting ED demand by time of day and acuity level, enabling more dynamic staffing and bed allocation decisions. AI-driven ED capacity management systems are reducing average door-to-admission times and reducing left-without-being-seen rates in documented implementations.

Operating room scheduling: Predictive models for case duration, turnover time, and cancellation probability that enable more accurate OR scheduling and reduce the expensive idle time that poorly predicted schedules generate.

Early warning systems for clinical deterioration: Predictive models monitoring vital signs, lab trends, and clinical assessment patterns to identify patients at elevated risk of deterioration before it manifests as a clinical crisis. These systems have demonstrated reductions in unplanned ICU transfers and code blue events in prospective studies. Cleveland Clinic’s deployment of Bayesian Health’s AI sepsis platform yielded a ten-fold reduction in false positives, a 46% increase in identified sepsis cases, and earlier alerts in seven times as many cases before antibiotic administration.

The data requirements for patient flow AI are largely available within existing EHR and operational systems: admission and discharge records, bed census data, ED arrival and departure times, clinical assessment data. The primary integration challenge is real-time access to these data streams, which in many health systems remain in siloed systems with batch rather than real-time data access patterns.

Clinical predictive models require the same model validation, bias monitoring, and drift detection framework as financial AI. Our guide to MLOps best practices for regulated industries covers that framework in detail.

Use Case 3: Document Intelligence and Automation

Healthcare organisations process enormous volumes of documents: referral letters, insurance prior authorisation requests, discharge summaries, lab and imaging reports, clinical correspondence, and the administrative paperwork that accompanies every patient interaction. The majority of this document processing is still manual: read by a person, classified, and routed to the appropriate workflow or entered into the appropriate system.

AI document intelligence automates this processing pipeline. The system receives an incoming document, classifies it by document type and urgency, extracts the clinically or administratively relevant data fields, routes it to the appropriate queue or workflow, and flags any items requiring immediate human attention.
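The pipeline stages described above (classify, extract, route, flag) can be sketched as a single function. This is a deliberately simplified illustration: keyword matching and a regular expression stand in for the trained classification and extraction models a real system would use, and the document types, queue names, and the `MRN` field pattern are all hypothetical.

```python
import re
from dataclasses import dataclass

# Hypothetical keyword rules standing in for a trained document classifier.
DOC_TYPE_KEYWORDS = {
    "prior_authorisation": ["prior authorisation", "prior authorization"],
    "referral": ["referral"],
    "discharge_summary": ["discharge summary"],
}
URGENT_TERMS = ["urgent", "stat", "immediately"]


@dataclass
class ProcessedDocument:
    doc_type: str
    urgent: bool
    fields: dict
    queue: str
    needs_human_review: bool


def classify(text):
    """Return the first document type whose keywords appear in the text."""
    lowered = text.lower()
    for doc_type, keywords in DOC_TYPE_KEYWORDS.items():
        if any(keyword in lowered for keyword in keywords):
            return doc_type
    return "unknown"


def extract_fields(text):
    """Pull out a medical record number if one is present (illustrative pattern)."""
    fields = {}
    match = re.search(r"MRN[:\s]+(\w+)", text)
    if match:
        fields["mrn"] = match.group(1)
    return fields


def process(text):
    """Classify, extract, route, and flag one incoming document."""
    doc_type = classify(text)
    lowered = text.lower()
    # Word boundaries stop "stat" from matching inside words such as "status".
    urgent = any(re.search(rf"\b{term}\b", lowered) for term in URGENT_TERMS)
    fields = extract_fields(text)
    # Anything unclassified, missing a patient identifier, or urgent goes to a person.
    needs_review = doc_type == "unknown" or "mrn" not in fields or urgent
    queue = "manual_triage" if doc_type == "unknown" else f"{doc_type}_queue"
    return ProcessedDocument(doc_type, urgent, fields, queue, needs_review)
```

The flagging rule errs toward human review: the automated path is taken only when the document type is recognised, a patient identifier was extracted, and nothing marks the document as urgent.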

The use cases with the highest documented ROI in healthcare document intelligence include:

Prior authorisation processing. Prior authorisation requests from payers are among the most administratively burdensome processes in healthcare operations. Each request requires matching the clinical documentation to the payer’s criteria, assembling the supporting evidence, and submitting in the payer’s specific format. AI document intelligence can automate the data gathering and assembly steps, dramatically reducing the manual time per authorisation and reducing the denial rates from incomplete submissions. Prior authorisation AI grew ten-fold year-over-year in 2025 (Menlo Ventures), reflecting the scale of unmet demand in this workflow.

Referral management. Incoming referrals must be triaged for urgency, matched to the appropriate specialist and appointment slot, and acknowledged to the referring provider. AI triage and routing reduces the time from referral receipt to appointment scheduling, improves the accuracy of urgency classification, and eliminates manual data re-entry between referral documents and scheduling systems. According to eClinicalWorks survey data, over 64% of users of AI-powered fax and document management tools report saving at least an hour per day through automated routing.

Clinical document summarisation. For complex patients with extensive medical histories, AI summarisation of relevant clinical records (producing a concise summary of active conditions, relevant history, current medications, and recent test results) significantly reduces the time clinicians spend on chart review before an encounter. This is particularly valuable in emergency and consultation settings where clinicians need rapid orientation to an unfamiliar patient’s history.

Coding and billing support. AI models trained on clinical documentation can suggest ICD-10 and CPT codes based on the encounter documentation, reducing coding errors and omissions that result in claim denials and revenue cycle delays. Human coders review and confirm AI suggestions rather than generating codes from scratch. Coding and billing automation attracted $450 million in healthcare AI investment in 2025, the second largest category after ambient documentation, reflecting the direct revenue impact of coding accuracy improvements.

Use Case 4: Clinical Decision Support

Clinical decision support (CDS) is the most clinically complex and most regulated area of healthcare AI, and also the area with the most significant potential impact on care quality outcomes.

The traditional form of CDS (alert-based systems embedded in EHRs that fire rules when defined conditions are met) has suffered from well-documented alert fatigue. When clinicians receive dozens of pop-up alerts per shift, the majority of which are clinically irrelevant to the specific patient at hand, the alerts are overridden at high rates and their protective value is diluted.

AI-powered CDS addresses this by shifting from rule-based alerting to contextual, patient-specific recommendations that are integrated into the clinical workflow at the moment they are relevant, with evidence-based supporting information rather than a generic alert.
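Alert fatigue is measurable, which makes it a useful before-and-after metric when replacing rule-based alerts with contextual recommendations. The sketch below computes the override rate per alert type from a log of alert events. The event format (an alert type paired with an `accepted` or `overridden` action) and the function name are assumptions for illustration; real EHR audit exports will differ.

```python
from collections import Counter


def override_rates(alert_events):
    """Compute the share of fired alerts that clinicians overrode, per alert type.

    alert_events: iterable of (alert_type, action) pairs, where action is
    "accepted" or "overridden".
    """
    fired = Counter()
    overridden = Counter()
    for alert_type, action in alert_events:
        fired[alert_type] += 1
        if action == "overridden":
            overridden[alert_type] += 1
    return {alert_type: overridden[alert_type] / fired[alert_type] for alert_type in fired}
```

Tracking the rate per alert type, rather than one overall figure, shows which specific rules clinicians have learned to dismiss and are therefore the first candidates for redesign.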

Current high-value AI CDS applications in production include:

Sepsis prediction and early warning. ML models monitoring vital signs, lab values, and clinical assessment data that identify patients at elevated sepsis risk 3 to 6 hours before clinical diagnosis, enabling earlier intervention. Multiple health systems have documented 20% to 30% reductions in sepsis mortality in prospective studies of AI early warning systems.

Drug interaction and prescribing support. Contextual AI that highlights potential drug interactions, dose adjustments for renal or hepatic function, and allergy cross-reactivity at the point of prescribing, integrated into the workflow without disruptive pop-up interruption.

Diagnostic imaging support. AI analysis of radiological images (chest X-ray, CT, MRI, pathology slides) that flags areas of concern for radiologist review, prioritises urgent findings, and reduces missed findings. Imaging and radiology is the most widely deployed clinical AI use case, with 90% of health systems in the JAMIA survey reporting at least partial deployment. FDA-cleared AI imaging tools continue to expand: in February 2026, Median Technologies received FDA 510(k) clearance for its AI-based lung cancer screening detection solution, reflecting the maturation of imaging AI into defined clinical screening programmes.

Care gap identification. AI models analysing patient records against evidence-based care guidelines to surface preventive care gaps, chronic disease management gaps, and medication adherence risks at the point of contact, enabling opportunistic care gap closure in primary care and chronic disease management settings.

The regulatory consideration for clinical decision support is the FDA’s SaMD guidance. CDS tools that are “locked” (not user modifiable) and that directly influence clinical decisions are likely to be classified as SaMD. CDS tools that provide information for clinical consideration and rely on clinician judgment for the final decision are more likely to qualify for enforcement discretion under FDA’s CDS guidance. The classification question must be resolved before deployment, with regulatory counsel involved in the design phase.

A critical risk emerging in 2026 is clinical deskilling from uncritical GenAI use in CDS, noted by Wolters Kluwer’s clinical governance experts as a governance priority. CDS governance frameworks must address not only AI accuracy but also whether AI-assisted workflows are maintaining, rather than eroding, clinical reasoning skills.

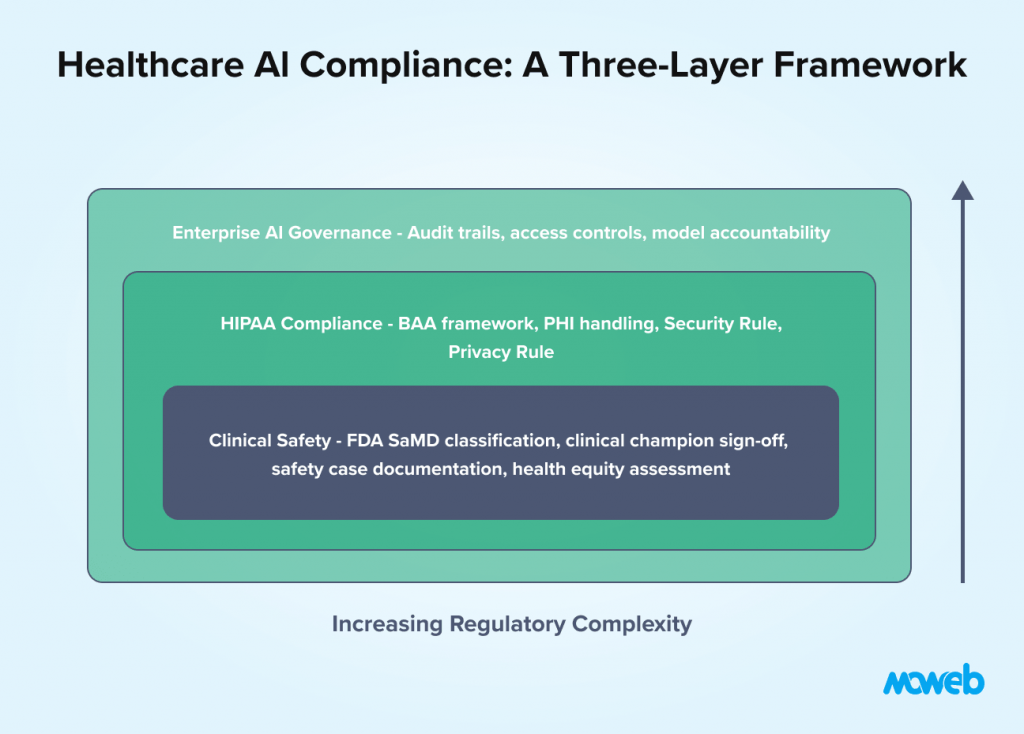

The Healthcare AI Compliance Framework

Healthcare AI operates under a layered compliance framework that differs from other regulated sectors in its clinical safety orientation. The key requirements for operational healthcare AI deployment:

HIPAA Security Rule compliance for any system processing PHI: documented risk analysis, technical safeguards (encryption, access controls, audit logging), administrative safeguards (policies, training, BAAs), and physical safeguards for any on-premises components.

HIPAA Privacy Rule compliance for data use: minimum necessary principle for data access, patient consent documentation where required, accounting of disclosures, and response procedures for patient access requests.

FDA SaMD classification and compliance for clinical AI: pre-market notification or clearance for higher-risk SaMD, post-market surveillance obligations including adverse event reporting, performance monitoring against pre-specified acceptance criteria, and documentation of the AI development lifecycle to FDA quality system standards.

Clinical governance documentation that is specific to healthcare AI and beyond standard enterprise AI governance: clinical champion sign-off before deployment, clinical safety case documentation, validation on representative patient populations (not just convenience samples), and a defined process for clinician feedback on AI performance.

Bias and health equity assessment for AI systems that influence clinical care: evaluation of model performance across demographic subgroups (age, race, gender, socioeconomic status) to ensure the AI system does not amplify existing health disparities. Health equity is increasingly a regulatory and accreditation focus for healthcare AI. The ONC’s January 2026 Request for Information on Diagnostic Imaging Interoperability Standards signals that bias and performance documentation requirements for clinical AI are moving toward formalisation at the federal level.
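A subgroup evaluation of the kind described above can start with something as simple as computing one performance metric per demographic group and looking at the spread. The sketch below uses sensitivity (the share of true positive cases the model catches) as the metric; the function names and the record layout are hypothetical, and a real assessment would cover several metrics and report confidence intervals for small subgroups.

```python
from collections import defaultdict


def sensitivity_by_subgroup(records):
    """Compute sensitivity (true positive rate) for each subgroup.

    records: iterable of (subgroup, y_true, y_pred) with 0/1 labels.
    Returns subgroup -> sensitivity, or None when a subgroup has no
    positive cases and sensitivity is therefore undefined.
    """
    true_positives = defaultdict(int)
    positives = defaultdict(int)
    for group, y_true, y_pred in records:
        positives[group] += 0  # register the group even if it has no positives
        if y_true == 1:
            positives[group] += 1
            if y_pred == 1:
                true_positives[group] += 1
    return {
        group: (true_positives[group] / positives[group] if positives[group] else None)
        for group in positives
    }


def max_sensitivity_gap(per_group):
    """Largest difference in sensitivity between any two subgroups."""
    values = [value for value in per_group.values() if value is not None]
    return max(values) - min(values) if values else 0.0
```

What counts as an acceptable gap is a clinical governance decision rather than something the code can settle; the point of the calculation is to make the disparity visible so that decision is made explicitly.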

For the broader AI governance framework that provides the foundation for these healthcare-specific requirements, our guide to AI governance for LLMs and enterprise agents covers the universal controls that apply across all enterprise AI deployments.

Implementation Roadmap for Healthcare Organisations

The correct starting point for healthcare AI implementation is determined by the same principle as in fintech: choose the use case with the most accessible data, the clearest ROI model, and the lowest regulatory complexity, then build governance discipline and compliance infrastructure that scales to more complex use cases.

Phase 1 (Months 1 to 3): Administrative AI with lowest clinical risk. Documentation support, referral routing, and prior authorisation automation carry the lowest clinical risk (they do not directly influence care decisions) and have the clearest operational ROI. Start here. Build the HIPAA compliance architecture, the BAA framework with vendors, and the audit logging infrastructure that all subsequent phases will rely on. For the security architecture behind compliant conversational AI systems in healthcare, see our guide to building secure enterprise chatbots with audit trails and compliance.

For realistic cost expectations before committing to a healthcare AI pilot, see our breakdown of what an AI proof of concept costs in 2026.

Phase 2 (Months 3 to 6): Operational AI with moderate clinical involvement. Patient flow forecasting and bed management AI, ED throughput optimisation, and discharge prediction involve clinical data but produce outputs that support operational planning rather than individual clinical decisions. These have a lower regulatory burden than direct CDS applications and produce measurable financial impact.

Phase 3 (Months 6 to 12): Clinical AI with governance foundation in place. With HIPAA compliance architecture, clinical governance documentation, and two phases of production AI validated, the organisation is ready to tackle CDS and clinical deterioration prediction, the highest-impact and highest-regulatory-complexity applications. The governance framework established in Phases 1 and 2 provides the template; the clinical safety case documentation is the new element.

This sequencing is not conservative; it is realistic. Healthcare AI programmes that start with clinical decision support before establishing the compliance architecture and clinical governance framework consistently encounter delays, rework, and institutional confidence crises that cost significantly more than the time saved by starting with the hardest problem. A 2025 Healthcare IT News survey found 47% of healthcare leaders cited data quality and integration as major barriers to AI, and 39% cited regulatory and privacy concerns, both of which are precisely addressed by the phased approach described here.

Frequently Asked Questions About AI in Healthcare Operations

What is ambient AI or AI scribe in healthcare?

Ambient AI in healthcare refers to AI systems that listen to the clinical encounter between a patient and clinician (with patient consent), understand the clinical content, and produce a draft clinical note structured to the documentation requirements of the encounter type. The clinician reviews and approves the draft rather than generating it from scratch. Ambient documentation AI consistently reduces documentation time by 40–60% in clinical settings and is the highest-adoption AI application in healthcare operations in 2026, the only use case with 100% adoption activity across all health systems surveyed by JAMIA.

Does HIPAA apply to AI systems in healthcare?

Yes. Any AI system that processes, stores, or analyses protected health information (PHI), including patient records used for training, patient data accessed at inference time, and clinical conversation recordings for documentation AI, must comply with HIPAA. This requires a Business Associate Agreement with every vendor in the data pathway, documented data handling practices, encryption at rest and in transit, access controls, and audit logging of PHI access. An AI vendor who cannot sign a BAA cannot legally handle your patient data.

What is Software as a Medical Device (SaMD) and when does AI qualify?

Software as a Medical Device (SaMD) is defined by the FDA and international regulators as software intended to perform medical functions without being part of a hardware medical device. AI systems that produce outputs intended to directly influence clinical diagnosis, treatment, or patient management decisions are likely to qualify as SaMD, requiring pre-market notification or clearance before deployment. AI systems that provide information for clinical consideration without directly driving decisions may qualify for enforcement discretion under the FDA’s CDS guidance. Healthcare AI teams should involve regulatory counsel in this classification assessment during the design phase.

What is the ROI of AI in healthcare operations?

Based on peer-reviewed analysis published by PMC/NIH, healthcare AI delivers an average $3.20 return for every $1 invested within 14 months. Specific use cases vary: clinical documentation automation reduces physician documentation time by 40–60%, with direct impact on clinician capacity and retention. Patient flow AI reduces average length of stay and ED boarding time with direct financial impact measurable in revenue per bed per day. Revenue cycle AI reduces coding errors and denial rates, recovering revenue that would otherwise be written off. The return is highly use-case-specific and depends on quality of implementation and organisational adoption.

How should healthcare organisations handle AI bias and health equity?

Healthcare AI systems influencing clinical decisions must be evaluated for performance across demographic subgroups including age, race, gender, and socioeconomic status. A model that performs well on the majority population but poorly on specific subgroups can amplify existing health disparities rather than reducing them. Pre-deployment bias assessment against the specific patient population the system will serve (not just publicly available benchmark datasets) is a clinical governance requirement. Post-deployment monitoring on a defined cadence tracks whether performance disparities emerge as the model encounters real-world data.

What governance is required for AI in clinical decision support?

Clinical decision support AI requires a governance framework that goes beyond standard enterprise AI governance: a named clinical champion who signs off on the clinical validity of the AI outputs before deployment, a clinical safety case documenting the failure mode analysis and human override procedures, validation against a representative patient population from the target deployment setting, a clinician feedback mechanism for flagging incorrect or potentially harmful outputs, and a defined process for suspending the system if safety concerns are identified post-deployment.

What is the risk of clinical deskilling from healthcare AI, and how should it be governed?

Clinical deskilling is the risk that AI-assisted workflows progressively reduce clinicians’ active exercise of clinical reasoning, particularly in documentation, CDS, and diagnostic support contexts. When AI consistently provides the answer, the cognitive work of arriving at that answer independently may diminish over time. Governance frameworks for clinical AI should include periodic unassisted performance assessments, mandatory human override review (rather than passive acceptance of AI suggestions), and workflow design that requires the clinician to actively engage with, rather than passively approve, AI outputs. Wolters Kluwer’s clinical governance experts have identified this as a top governance priority for 2026 as AI moves from pilot to system-wide deployment.

Conclusion: Healthcare AI Works When It Is Designed for Clinical Reality, Not Just Technical Performance

The healthcare organisations that are successfully deploying AI in 2026 share a common approach. They started with the administrative burden, where the compliance complexity is lower and the ROI is clear. They built the HIPAA architecture and governance framework before scaling to clinical applications. They involved clinical champions in the design process, not just as stakeholders but as co-designers of the clinical workflow integration. And they defined success by clinical outcomes and clinician adoption, not just by technical performance metrics.

The result is AI systems that clinicians use, trust, and advocate for, rather than systems that technically work but are ignored in practice because they were not designed into the clinical workflow.

Healthcare AI’s potential to address the workforce crisis, reduce clinician burnout, and improve care quality simultaneously is genuinely significant. Mayo Clinic’s commitment of over $1 billion in AI investment across 200+ projects, Kaiser Permanente’s largest-ever technology deployment of ambient AI across 40 hospitals and 600+ medical offices, and Advocate Health’s evaluation of 225+ AI solutions before selecting 40 for production: these are not pilots. They are the signal that healthcare AI has reached the infrastructure investment phase. Realising that potential requires the same investment in implementation rigour, compliance architecture, and clinical change management that any high-stakes enterprise AI deployment demands.

Moweb’s AI & ML development and AI Security & Governance practices work with healthcare organisations to build AI systems designed for clinical reality, with HIPAA compliance, clinical governance documentation, and bias assessment as standard delivery components. Talk to our team about your healthcare AI programme.
