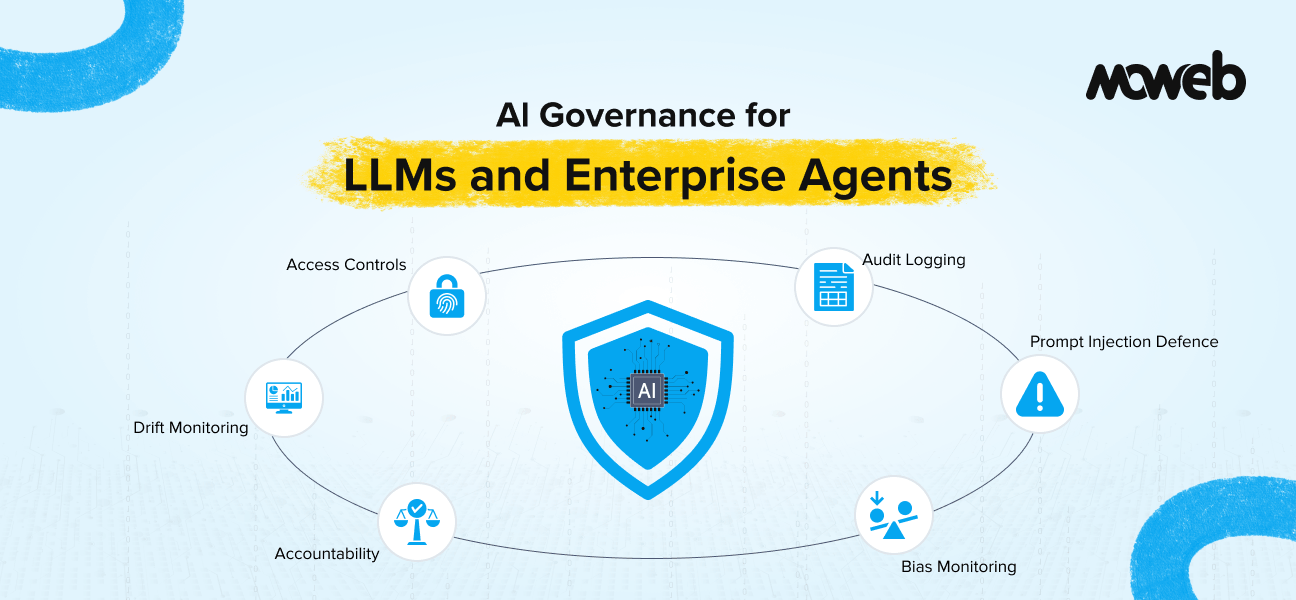

What is AI governance for enterprises? AI governance for enterprises is the set of policies, processes, controls, and accountability structures that ensure AI systems are developed, deployed, and operated in a way that is secure, accurate, compliant with relevant regulations, and aligned with the organisation’s ethical commitments. For LLMs and AI agents specifically, governance covers model accountability (who is responsible when an AI system produces an incorrect or harmful output), data handling and privacy controls, access management, bias and fairness monitoring, audit trail implementation, prompt injection defence, and compliance with applicable regulatory frameworks, including the EU AI Act, NIST AI RMF, and ISO 42001.

What should a CIO implement first for AI governance? The highest-priority governance implementations for a CIO deploying LLMs or AI agents in 2026 are: an AI system inventory (you cannot govern what you cannot see), access controls at the model and retrieval layer (preventing unauthorised data access), comprehensive audit logging of model inputs, outputs, and actions, a defined accountability structure (named owners for each AI system with documented escalation paths), and a prompt injection defence layer for any AI system that processes external or user-generated content. These five controls address the most common and most consequential AI governance failures in enterprise production environments.

The regulatory environment for enterprise AI changed materially in 2024 and 2025, and the operational stakes rose with it. McKinsey’s State of AI survey from early 2024 found that 65% of organisations regularly use generative AI, nearly double the figure from ten months earlier. At the same time, organisations with high shadow AI usage faced data breach costs averaging $670,000 higher than their governed counterparts in 2025, according to IBM’s Cost of a Data Breach report. The EU AI Act moved from political agreement to enforcement reality. The NIST AI Risk Management Framework became the de facto standard for US federal and federally adjacent organisations. ISO 42001, the international standard for AI management systems, reached growing adoption among enterprise technology suppliers. And the first significant regulatory penalties for AI-related compliance failures began to emerge.

At the same time, enterprise AI deployment accelerated. LLMs moved from pilot to production across customer service, internal knowledge management, document processing, and decision support. AI agents with real tool access began operating autonomously across enterprise workflows. The combination of faster deployment and stricter regulation created exactly the conditions where governance gaps become expensive.

This guide is for CIOs, Chief Compliance Officers, and technology leaders who are responsible for ensuring their organisation’s AI deployments meet the governance standards that 2026 regulatory and operational realities demand. It covers what AI governance means specifically for LLMs and agents (which have different risk profiles than traditional AI systems), the regulatory frameworks that define the compliance baseline, the specific controls that matter most, and a prioritised implementation sequence for organisations that need to close governance gaps quickly.

Why LLM and Agent Governance Is Different from Traditional AI Governance

Governance frameworks developed for traditional predictive ML systems do not fully address the risks introduced by LLMs and AI agents. Understanding these differences is the foundation for building governance that actually works.

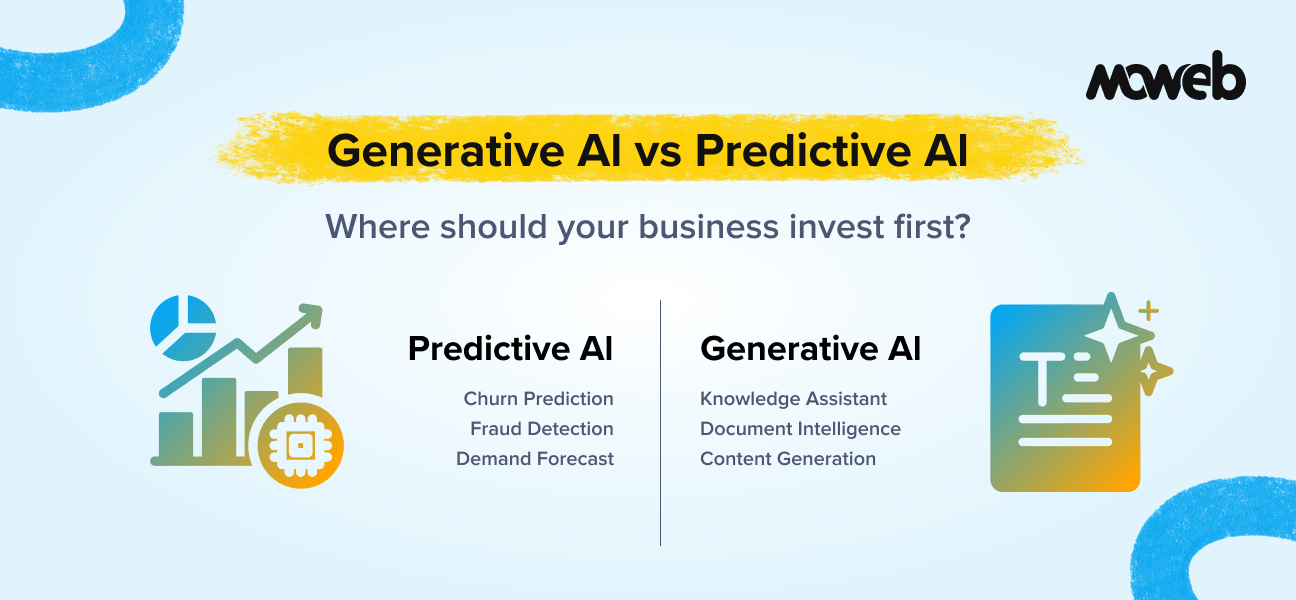

Traditional ML governance focuses primarily on model performance (is the model making accurate predictions?), bias and fairness (are predictions equitable across population subgroups?), and data quality (is the training data representative and unbiased?). These are important concerns, but the governance controls are relatively well understood, and the risk profile is bounded: a mis-calibrated demand forecast or a biased credit model produces specific, measurable errors in specific decision contexts.

LLM governance adds a set of risks that traditional ML governance does not address: hallucination (an LLM can produce confidently stated incorrect information that cannot be predicted from the training data), prompt injection (an attacker can embed instructions in user inputs or retrieved content that override the model’s intended behaviour), data exfiltration through the response layer (an LLM with access to sensitive context can be manipulated into including that information in its outputs), and knowledge cutoff failures (an LLM answering from its training data rather than retrieved current content may provide outdated guidance with no indication that it is outdated). LLM-specific risks also include jailbreaking attempts where adversarial prompts bypass safety constraints and training data supply chain risks in fine-tuned deployments, where poisoned fine-tuning data can embed persistent model-level vulnerabilities.

AI agent governance extends these risks further and adds new ones: autonomous action (an agent can take real actions in real systems, such as creating records, sending communications, and triggering workflows, which makes errors consequential rather than just informational), scope creep (an agent given broad tool access and an ambitious goal can take unintended actions within its technical permission scope), cascading errors (a mistake in an early step of a multi-step workflow can propagate through subsequent steps, compounding before anyone detects it), and accountability ambiguity (when an autonomous agent takes an action that causes harm, the accountability chain is less clear than for a human-executed process). Multi-agent orchestration introduces an additional risk layer: where one agent acts as an orchestrator directing subagents, permission escalation can cascade in ways that are difficult to detect and contain before real-world consequences occur.

A governance framework adequate for 2026 enterprise AI deployments must address all three categories: traditional ML risks, LLM-specific risks, and agent-specific risks. Organisations that have governance frameworks built only for the first category are systematically under-governed for their current AI portfolio.

For a fuller treatment of AI agent risks and how to address them in implementation, see our guide to AI agent development services: use cases, risks, and implementation roadmap.

The Regulatory Landscape: What Your Organisation Is Accountable to in 2026

Three regulatory frameworks define the primary compliance baseline for enterprise AI governance in 2026. Understanding their requirements and how they interact is necessary for building a governance programme that is both comprehensive and efficient.

The EU AI Act

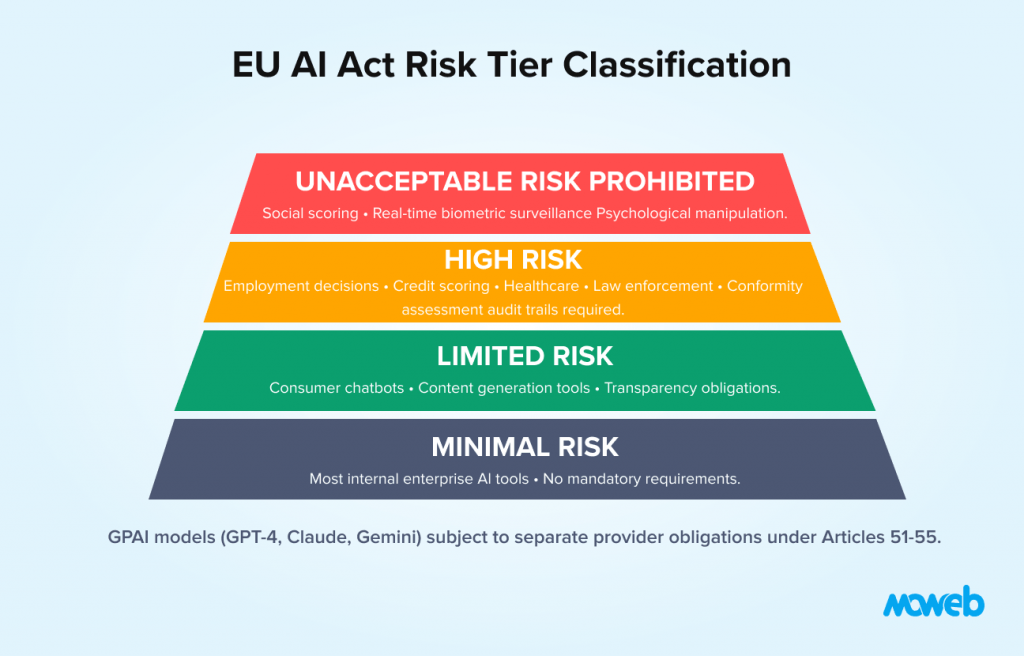

The EU AI Act is the most consequential AI regulation in force globally. It applies to any organisation deploying AI systems that affect EU residents, regardless of where the organisation is headquartered. Its risk-based approach classifies AI systems into four tiers:

Unacceptable risk systems are prohibited entirely. These include AI used for social scoring by public authorities, real-time biometric surveillance in public spaces, and systems that exploit psychological vulnerabilities.

High-risk systems face the most stringent requirements. They include AI used in employment decisions (hiring, performance evaluation), credit scoring, educational assessment, law enforcement, critical infrastructure, and healthcare. High-risk systems must undergo conformity assessment before deployment, maintain comprehensive technical documentation, implement human oversight mechanisms, achieve defined accuracy and robustness standards, and establish audit trails.

Limited risk systems (including most consumer-facing chatbots and content generation tools) face transparency obligations: users must be informed when they are interacting with an AI system and when content is AI-generated.

Minimal risk systems (most enterprise internal AI tools) face no mandatory requirements beyond good practice.

The penalties for non-compliance are significant: up to 35 million euros or 7% of global annual turnover for prohibited system violations, and up to 15 million euros or 3% of turnover for high-risk system violations. For the majority of enterprise LLM and agent deployments, the relevant tier is either limited risk or high risk, depending on the use case. The classification decision itself requires governance attention.
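The penalty ceilings above can be worked through as simple arithmetic. The sketch below assumes the higher of the fixed amount and the turnover percentage applies, and the function name and violation labels are illustrative rather than terms from the Act.

```python
# Illustrative only: the caps are the figures quoted in the paragraph above.
# Assumption: the applicable ceiling is the higher of the two amounts.
FINE_CAPS = {
    "prohibited": (35_000_000, 7),   # (fixed cap in EUR, percent of global annual turnover)
    "high_risk": (15_000_000, 3),
}


def max_fine_eur(annual_turnover_eur: int, violation: str) -> int:
    """Return the maximum fine in whole euros for a violation category."""
    fixed_cap, turnover_percent = FINE_CAPS[violation]
    # Integer arithmetic avoids floating-point rounding on large amounts.
    turnover_based = annual_turnover_eur * turnover_percent // 100
    return max(fixed_cap, turnover_based)
```

For an organisation with 2 billion euros of global annual turnover, the turnover-based figure dominates for a prohibited-system violation; for a smaller organisation with 100 million euros of turnover, the fixed cap for a high-risk violation is the larger number.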

Note on GPAI (General Purpose AI) models: Organisations procuring foundation model APIs (such as GPT-4, Claude, or Gemini) should be aware that those model providers carry separate obligations under Articles 51–55 of the EU AI Act. However, enterprises deploying those models in high-risk applications retain full deployment-tier compliance obligations. The model provider’s compliance does not transfer to the deploying organisation; each deployment must be assessed independently.

The NIST AI Risk Management Framework (AI RMF)

The NIST AI RMF is the US federal standard and has become the practical governance reference for US enterprise AI programmes, particularly in financial services, healthcare, and government-adjacent organisations. It organises AI risk management around four core functions:

Govern establishes the organisational structures, policies, and accountabilities that enable AI risk management across the enterprise. This includes AI governance policies, role definitions, training, and cultural change management.

Map identifies and categorises AI risks for specific systems in their specific deployment context. This includes documenting AI system characteristics, intended use, potential harms, and affected stakeholders.

Measure assesses AI risks using both quantitative and qualitative methods. This includes model evaluation, bias testing, robustness testing, and ongoing monitoring.

Manage implements controls to address identified risks and monitors their effectiveness over time. This includes incident response, model updates, and continuous monitoring.

The NIST AI RMF does not prescribe specific technical controls – it is a process framework, not a compliance checklist. Organisations implement it by developing specific policies and controls within each of the four functions appropriate to their risk profile and regulatory context. For LLM-deploying organisations, the practical implementation companion is NIST AI 600-1 (Generative AI Profile, published July 2024), which translates the AI RMF into LLM-specific risk categories and mitigations. This is the document your engineering and compliance teams should be working from.

ISO 42001

ISO 42001 is the international standard for AI management systems, published in December 2023 and now widely adopted by enterprise technology suppliers and their customers as a third-party auditable certification. Like ISO 27001 for information security, it provides a systematic framework for establishing, implementing, maintaining, and continuously improving an AI management system.

ISO 42001 certification is becoming a procurement requirement in regulated industries and in public sector contracts. Organisations purchasing AI development services from vendors are increasingly requiring ISO 42001 certification or demonstrated alignment as part of due diligence. For organisations providing AI services (including AI development firms), certification signals governance maturity to enterprise clients.

Moweb holds ISO 27001:2022 certification and operates CMMI Level 3 compliant processes, providing a governance foundation aligned with enterprise AI governance requirements.

The Eight Controls That Matter Most for LLM and Agent Governance

With the regulatory context established, here are the specific controls that have the greatest impact on AI governance quality for LLM and agent deployments. These are prioritised by the frequency and severity of governance failures they address in enterprise production environments.

Control 1: AI System Inventory

You cannot govern what you cannot see. The most fundamental governance control is a maintained inventory of every AI system operating within the organisation, including systems deployed by business units without central IT involvement (shadow AI).

The inventory should record for each system: the AI capability type (LLM, ML model, AI agent), the deployment context (internal tool, customer-facing application, automated decision system), the data it accesses and processes, the external services it calls, the owner and accountable executive, the regulatory classification (EU AI Act tier, NIST RMF risk category), and the governance review status.
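As a rough illustration, the fields listed above could be captured in a record like the one below. This is a minimal sketch: the class name, field names, status values, and the gap check are hypothetical and would need to match your own inventory tooling.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AISystemRecord:
    """One inventory entry; field names are illustrative, not a standard schema."""
    name: str
    capability_type: str                  # e.g. "LLM", "ML model", "AI agent"
    deployment_context: str               # e.g. "internal tool", "customer-facing application"
    data_accessed: List[str] = field(default_factory=list)
    external_services: List[str] = field(default_factory=list)
    technical_owner: Optional[str] = None
    accountable_executive: Optional[str] = None
    eu_ai_act_tier: Optional[str] = None  # e.g. "high", "limited", "minimal"
    nist_risk_category: Optional[str] = None
    review_status: str = "not reviewed"


def governance_gaps(record: AISystemRecord) -> List[str]:
    """List the inventory fields that still need attention for this system."""
    gaps = []
    if not record.technical_owner:
        gaps.append("no technical owner")
    if not record.accountable_executive:
        gaps.append("no accountable executive")
    if record.eu_ai_act_tier is None:
        gaps.append("EU AI Act tier not classified")
    if record.review_status != "approved":
        gaps.append("governance review not approved")
    return gaps
```

A structured record of this kind makes it straightforward to report which systems lack an owner or a classification, which is usually the first question a governance review asks.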

Shadow AI is a persistent governance problem in 2026. Business units adopt AI-powered tools (Microsoft Copilot, standalone AI assistants, third-party AI APIs) faster than central IT can track them. When CIOs are asked how many AI tools their employees use, the real number, once network monitoring is active, is frequently two to three times higher than the estimated figure. An AI system inventory process that relies only on central IT is incomplete. It needs to include a discovery mechanism (network monitoring, vendor contract review, regular business unit self-reporting) that surfaces AI use that was never formally approved.

Control 2: Access Controls at the Model and Retrieval Layer

Access control for AI systems must be implemented at the model and retrieval layer, not only at the application layer. This is especially critical for RAG systems and AI agents.

For RAG systems: every document chunk in the vector database must carry metadata indicating its access classification, and every retrieval query must include a filter that restricts results to documents the requesting user is authorised to see. An application-layer access control that restricts which users can access the AI assistant is insufficient if the underlying retrieval system can surface any document in the corpus to any query. Note that metadata filtering capabilities vary by vector database platform (Pinecone, Weaviate, Qdrant, and others each implement filter logic differently). Verify that your platform’s filtering is tested under load and does not degrade under high concurrency.
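The retrieval-layer filter described above can be sketched without reference to any particular vector database. The example below is an in-memory illustration only: the `Chunk` type, the `allowed_groups` metadata field, and the function name are assumptions, and a production system would express the same filter through its platform's own query API.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List


@dataclass(frozen=True)
class Chunk:
    text: str
    allowed_groups: FrozenSet[str]  # access classification stored as chunk metadata
    score: float                    # similarity score from the vector search


def authorised_results(candidates: Iterable[Chunk],
                       user_groups: Iterable[str],
                       top_k: int = 3) -> List[Chunk]:
    """Return the top_k chunks the requesting user is authorised to see.

    The access filter runs before truncation to top_k, so a restricted
    chunk can never occupy a result slot or reach the model's context.
    """
    groups = set(user_groups)
    visible = [c for c in candidates if c.allowed_groups & groups]
    return sorted(visible, key=lambda c: c.score, reverse=True)[:top_k]
```

The design point is that the filter is applied to every query using the requesting user's identity, rather than relying on who was allowed to open the assistant in the first place.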

For AI agents: every tool and data source accessible to the agent must be explicitly permitted in the agent’s configuration using the principle of least privilege. The agent should have access to only the tools and data it specifically needs for its defined tasks, with explicit permission boundaries rather than broad access grants. Tool permissions should be reviewed and revalidated whenever the agent’s use case or operational context changes. For a technical implementation guide to access controls in RAG systems, see our resource on RAG development for enterprise knowledge systems.
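One way to make least privilege concrete for agents is to route every tool call through a gateway that checks an explicit allowlist. The sketch below is a simplified illustration; `ToolGateway` and `ToolNotPermitted` are hypothetical names, not part of any agent framework.

```python
from typing import Any, Callable, Dict, Iterable


class ToolNotPermitted(Exception):
    """Raised when an agent requests a tool outside its configured allowlist."""


class ToolGateway:
    """Dispatches tool calls only if the tool is explicitly permitted."""

    def __init__(self, tools: Dict[str, Callable[..., Any]], allowed: Iterable[str]):
        self._tools = dict(tools)
        self._allowed = frozenset(allowed)

    def call(self, name: str, **params: Any) -> Any:
        # Deny by default: anything not explicitly allowed is refused,
        # even if the tool is registered and technically callable.
        if name not in self._allowed:
            raise ToolNotPermitted(f"tool '{name}' is not permitted for this agent")
        return self._tools[name](**params)
```

Because the allowlist lives in configuration rather than in the prompt, it can be reviewed and revalidated as a normal change-controlled artefact whenever the agent's use case changes.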

Control 3: Comprehensive Audit Logging

Audit logs for AI systems must capture significantly more than traditional software logging. For LLMs and agents, effective audit logging covers: every input submitted to the model (user query, system context, retrieved documents), every output produced (the model’s response), every tool call made by an agent (tool name, parameters, result), every data access event (which documents were retrieved, by which user, at what time), and every human escalation event (when the AI system invoked human review, and the outcome).

These logs serve multiple functions: they enable incident investigation (reconstructing what happened when an AI system produced an unexpected output), they support compliance audits (demonstrating to regulators that the system operated within its defined scope), and they provide the data needed for ongoing quality monitoring and improvement.

Logs should be immutable (not modifiable after creation), retained for a period appropriate to the regulatory and business context (typically one to three years minimum), and structured to enable both automated monitoring and human-readable incident review.
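A common way to approximate immutability at the application level is a hash-chained log, where each entry includes the hash of the one before it. The sketch below is an assumption-laden illustration (the function names and entry fields are hypothetical), and it makes tampering detectable rather than impossible; write-once storage would still be needed underneath.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Any, Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: Dict[str, Any]) -> str:
    # Canonical JSON (sorted keys) so the same content always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_event(log: List[Dict[str, Any]], event_type: str, payload: Dict[str, Any]) -> Dict[str, Any]:
    """Append an audit event linked to the previous entry by its hash."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "event_type": event_type,   # e.g. "model_input", "tool_call", "escalation"
        "payload": payload,         # must be JSON-serialisable
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: List[Dict[str, Any]]) -> bool:
    """Return True if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True
```

Running `verify_chain` on a schedule gives compliance teams a simple, automatable check that the audit trail presented to a regulator is the one that was originally written.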

Control 4: Defined Accountability Structure

Every AI system in production should have a named owner with defined responsibilities: a technical owner (typically an engineering lead responsible for system performance, security, and maintenance), a business owner (the function whose workflow the AI system supports, accountable for use case appropriateness and output quality oversight), and an executive sponsor (typically a CIO, CDO, or functional VP accountable for the system’s compliance and ethical operation).

The accountability structure should also define the escalation path: what constitutes an incident requiring executive attention, who makes the decision to suspend or roll back a system, and how affected parties are notified when a governance issue is identified.

Accountability ambiguity is one of the most common and most consequential AI governance gaps in enterprise deployments. When something goes wrong with an AI system that has no defined owner, response time is slow, decision authority is unclear, and both regulatory and reputational exposure is amplified.

Control 5: Prompt Injection Defence

Prompt injection is the most actively exploited attack vector against enterprise LLM applications. An attacker embeds instructions in user-submitted content, retrieved documents, or external data sources that attempt to override the model’s system prompt and cause it to take unintended actions or reveal sensitive information. The OWASP LLM Top 10 (2025) rates prompt injection as the single most critical LLM application security risk.

Effective prompt injection defence requires multiple layers:

Input sanitisation: user-submitted content and externally retrieved content are treated as untrusted and processed through a sanitisation layer before being included in the model’s context. Pattern-matching alone is insufficient against indirect prompt injection in retrieved documents. Current best practice combines pattern filtering with structured output enforcement (constraining model outputs to defined schemas), tool call whitelisting (explicitly defining which tools an agent can invoke rather than relying on natural language boundaries), and dedicated guardrail frameworks. Production-grade options include NVIDIA NeMo Guardrails, Llama Guard, and Guardrails AI, each providing different tradeoffs between latency and detection coverage.

System prompt hardening: the system prompt explicitly instructs the model not to follow instructions embedded in user content or retrieved documents, to treat its system-level instructions as authoritative regardless of what is presented in the context window.

Output monitoring: model outputs are monitored for anomalous patterns that may indicate a successful injection attempt, such as responses that include system prompt content, responses that instruct users to take specific actions, or responses with atypical formatting.

For AI agents with real tool access, prompt injection defence is especially critical: a successful injection against an agent can cause it to execute unintended actions in real enterprise systems.
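As one small example of the output monitoring layer, the sketch below flags responses that reproduce a run of consecutive words from the system prompt. It is a deliberately naive heuristic under stated assumptions: the function name and the six-word window are hypothetical choices, and a check like this would complement, not replace, a dedicated guardrail framework.

```python
def leaks_system_prompt(system_prompt: str, response: str, window: int = 6) -> bool:
    """Return True if the response contains any `window` consecutive words
    of the system prompt (case-insensitive, whitespace-normalised).

    Prompts shorter than `window` words are never flagged.
    """
    prompt_words = system_prompt.lower().split()
    normalised_response = " ".join(response.lower().split())
    for start in range(len(prompt_words) - window + 1):
        phrase = " ".join(prompt_words[start:start + window])
        if phrase in normalised_response:
            return True
    return False
```

A flagged response would typically be blocked or routed to review and recorded in the audit log, so that repeated attempts against the same system become visible.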

Control 6: Bias and Fairness Monitoring

For AI systems that make or influence decisions about people (hiring, credit, benefits, healthcare triage, performance evaluation), bias monitoring is both a regulatory requirement and an ethical obligation. Even systems that were evaluated for bias before deployment need ongoing monitoring because bias can emerge as data distributions shift post-deployment.

Bias monitoring requires defining the protected attributes relevant to the use case, collecting the output data needed to measure disparate impact across those attributes, running bias metrics on a regular cadence (at a minimum, quarterly for high-risk systems), and defining thresholds that trigger a review and potential remediation.
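The measurement step above can be illustrated with a disparate impact ratio: the lowest group's favourable-outcome rate divided by the highest group's. This is a minimal sketch; the function names are hypothetical, and the 0.8 default threshold follows the commonly cited four-fifths convention, which is an assumption here rather than a requirement stated in this guide.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def selection_rates(outcomes: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Favourable-outcome rate per group from (group, favourable) pairs."""
    totals: Dict[str, int] = defaultdict(int)
    favourable: Dict[str, int] = defaultdict(int)
    for group, is_favourable in outcomes:
        totals[group] += 1
        favourable[group] += int(is_favourable)
    return {group: favourable[group] / totals[group] for group in totals}


def disparate_impact_ratio(outcomes: Iterable[Tuple[str, bool]]) -> float:
    """Lowest group rate divided by highest group rate (1.0 means parity)."""
    rates = selection_rates(outcomes)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favourable outcome, so no disparity to measure
    return min(rates.values()) / highest


def needs_review(outcomes: Iterable[Tuple[str, bool]], threshold: float = 0.8) -> bool:
    """True when the ratio falls below the review threshold."""
    return disparate_impact_ratio(list(outcomes)) < threshold
```

A single ratio is only a trigger for review. Small sample sizes and intersecting attributes both need human interpretation before any remediation decision is made.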

For LLMs used in decision-adjacent contexts, bias manifests differently than in traditional ML models. An LLM’s outputs can exhibit demographic or representational bias in language, framing, and content emphasis even without producing directly discriminatory classifications. Evaluation frameworks for LLM bias require specific methodologies beyond traditional ML fairness metrics. The most widely adopted framework for systematic LLM evaluation, including bias dimensions, is HELM (Holistic Evaluation of Language Models), developed at Stanford and covering accuracy, calibration, robustness, fairness, bias, and toxicity across standardised scenarios. The EleutherAI LM Evaluation Harness is an open-source alternative suitable for teams running self-hosted evaluations.

Control 7: Model Drift Monitoring and Revalidation

Model drift is the degradation of an AI system’s performance over time as the data distribution in production diverges from the distribution on which the model was trained or optimised. It affects both traditional ML models (where it is well understood) and RAG-based LLM systems (where it is less commonly monitored but equally consequential).

For RAG systems, retrieval quality drift occurs as the document corpus grows and becomes less consistently structured, as documents become outdated without being updated in the knowledge base, or as user query patterns evolve away from the patterns the chunking and retrieval pipeline was optimised for.

Monitoring for drift requires baseline performance metrics established at deployment, a representative set of evaluation queries that is rerun on a regular schedule, alerting when performance falls below defined thresholds, and a defined revalidation process triggered by drift detection. RAGAS (or equivalent RAG evaluation frameworks) provides the benchmark metrics most commonly used: faithfulness, answer relevancy, and context precision. Version your evaluation suite alongside your pipeline to ensure consistent measurement across updates.
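The alerting step can be sketched as a comparison of current evaluation scores against the deployment baseline. The example assumes the scores have already been produced by your evaluation framework; the function name and the 10% relative-drop threshold are hypothetical and should be tuned per system.

```python
from typing import Dict, List


def drifted_metrics(baseline: Dict[str, float],
                    current: Dict[str, float],
                    max_relative_drop: float = 0.10) -> List[str]:
    """Return the metrics that are missing or have fallen more than
    `max_relative_drop` (as a fraction of baseline) since deployment."""
    flagged = []
    for metric, base_score in baseline.items():
        score = current.get(metric)
        if score is None:
            flagged.append(metric)  # a metric that stopped reporting is itself an alert
            continue
        if base_score > 0 and (base_score - score) / base_score > max_relative_drop:
            flagged.append(metric)
    return sorted(flagged)
```

Any non-empty result would feed the revalidation process described above, with the flagged metric names recorded alongside the evaluation suite version that produced them.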

Control 8: Incident Response Plan for AI Systems

Every organisation deploying LLMs or AI agents in production should have an AI-specific incident response plan covering: how AI incidents are classified (hallucination leading to a harmful output, successful prompt injection, unauthorised data access, agent action outside its permitted scope, model drift causing systematic quality failure), who is notified and within what timeframe, what the initial containment actions are (typically including the ability to suspend or roll back the system within a defined timeframe), how affected parties are notified, and what the post-incident review and remediation process looks like.

Without an AI incident response plan, organisations discover their response procedures when they most need them – under pressure, in public, with a problem that is already causing harm.

Implementation Sequence: Where to Start When You Are Behind

Most enterprise organisations deploying AI in 2026 are behind on governance relative to the regulatory and operational requirements. A phased implementation sequence focused on the highest-impact controls first is more effective than attempting comprehensive governance overnight.

Phase 1 (Weeks 1 to 4): Visibility and accountability. Conduct an AI system inventory. Identify every AI system in production or active development. Assign named owners to each. Classify each system under the EU AI Act and NIST AI RMF. This phase does not require technical implementation; it requires organisational alignment and information gathering. It is, however, the prerequisite for everything else.

Phase 2 (Weeks 4 to 8): Access controls and logging. Implement or audit access controls at the model and retrieval layer for every production AI system. Implement comprehensive audit logging. For systems where access controls or logging are absent, either retrofit them or suspend the system until they are in place.

Phase 3 (Weeks 8 to 12): Prompt injection defence and incident response. Implement prompt injection defences for every AI system that processes user-generated or externally retrieved content. Develop and test an AI-specific incident response plan. Run a tabletop exercise simulating a prompt injection incident and a data exfiltration scenario.

Phase 4 (Weeks 12 to 20): Monitoring and continuous governance. Implement bias monitoring for high-risk systems. Implement model drift monitoring for all production systems. Establish a regular governance review cadence (quarterly minimum) that covers the AI system inventory, drift monitoring reports, bias metrics, audit log reviews, and incident post-mortems.

Organisations with dedicated compliance teams and mature IT governance can compress this timeline. Organisations earlier in their governance journey should use it as a realistic baseline. Moweb’s AI Security & Governance practice implements all eight controls across enterprise AI engagements, with particular depth in prompt injection defence, audit trail architecture, and EU AI Act compliance design.

Frequently Asked Questions About AI Governance for LLMs and Agents

What is the EU AI Act and does it apply to my organisation? The EU AI Act is the world’s first comprehensive AI regulation, adopted by the European Union in 2024 and entering full enforcement in 2026. It applies to any organisation that deploys AI systems affecting EU residents, regardless of where the organisation is headquartered. If your organisation sells products or services to EU customers, employs EU-based staff in AI-impacted roles, or processes data about EU residents through AI systems, the Act likely applies to at least some of your AI deployments. Penalties for high-risk system non-compliance reach 15 million euros or 3% of global annual turnover. First-time compliance assessment should not be deferred.

What is the NIST AI Risk Management Framework, and is it mandatory? The NIST AI Risk Management Framework (AI RMF) is a voluntary framework published by the US National Institute of Standards and Technology in January 2023. It is not legally mandatory in the private sector. However, it is required or strongly recommended for US federal agencies and their suppliers, and it has become the practical standard adopted by enterprise organisations in financial services, healthcare, and government-adjacent industries. Many enterprise procurement processes now require demonstrated NIST AI RMF alignment as a vendor qualification criterion.

What is ISO 42001, and how is it different from ISO 27001? ISO 42001 is the international standard for AI management systems, published in December 2023. It covers the governance, development, and operation of AI systems specifically. ISO 27001 covers information security management broadly. The two are complementary: ISO 27001 addresses data and system security, while ISO 42001 addresses AI-specific concerns, including algorithmic accountability, bias management, and responsible AI practices. Organisations with ISO 27001 certification have a governance foundation that accelerates ISO 42001 implementation but does not replace it.

What is prompt injection and why is it the highest-priority security risk for LLMs? Prompt injection is an attack where malicious instructions are embedded in content that an LLM processes (user inputs, retrieved documents, API responses), causing the model to deviate from its intended behaviour. It is the highest-priority security risk for enterprise LLMs because it can be executed without system access, it exploits the fundamental architecture of how LLMs process context, and a successful attack against an AI agent can cause real-world actions in enterprise systems. The OWASP LLM Top 10 (2025) rates prompt injection as the most critical LLM application security risk.

What does AI accountability mean in practice for enterprises? AI accountability in practice means that for every AI system in production, there is a named individual who is responsible for its technical performance, a named business owner accountable for its appropriate use, and a documented escalation path for issues. It means that when an AI system produces an incorrect or harmful output, the organisation can immediately identify who is responsible for investigating and addressing it. It means that audit trails exist that allow reconstruction of the system’s behaviour. And it means that governance decisions (approving new use cases, suspending under-performing systems, responding to incidents) have clear decision authority rather than diffusing into organisational ambiguity.

How does the EU AI Act classify a RAG-based enterprise chatbot? Most RAG-based enterprise chatbots deployed for internal knowledge management fall into the limited risk or minimal risk tier of the EU AI Act. Limited risk applications face transparency obligations (informing users they are interacting with AI). Minimal risk applications face no mandatory requirements. However, if the chatbot is used to support decisions that fall into the high-risk categories (such as HR decisions, credit-related guidance, or healthcare-adjacent information), the classification may be higher. A formal use-case-by-use-case classification exercise is recommended for any organisation deploying multiple AI systems, because the same underlying technology can fall into different risk tiers depending on its application context.

What is model drift, and how should enterprises monitor for it? Model drift is the degradation of an AI system’s performance over time as the data the system encounters in production diverges from the data it was trained or optimised on. For traditional ML systems, drift is monitored by tracking prediction accuracy, confidence calibration, and feature distribution changes on a regular cadence. For RAG-based systems, retrieval quality drift is monitored by running a set of benchmark evaluation queries on a regular schedule and measuring changes in RAGAS scores (faithfulness, answer relevancy, context precision) against the baseline established at deployment. Drift alerts should trigger a governance review and, where warranted, reindexing or pipeline updates.

What is AI TRiSM? AI TRiSM (AI Trust, Risk, and Security Management) is a governance framework defined by Gartner that integrates trust, risk, privacy, and security controls for AI systems into a unified operational capability. It is relevant to enterprise CIOs because it provides a vendor-neutral framing for AI governance programmes that spans model explainability, bias monitoring, data lineage, and adversarial robustness. AI TRiSM is increasingly appearing in board-level governance discussions and enterprise technology procurement criteria. Organisations building governance programmes aligned with the NIST AI RMF will find significant overlap with AI TRiSM principles.

Conclusion: Governance Is What Makes AI Trustworthy at Scale

The organisations that will sustain AI adoption at scale are not necessarily those with the most advanced AI capabilities. They are the ones that have built governance frameworks robust enough that business leaders, compliance teams, and boards trust the systems they are deploying.

Trust in AI is not built through marketing. It is built through demonstrable controls: an AI system inventory that shows you know what you have deployed, access controls that demonstrate you manage who can retrieve what, audit trails that let you reconstruct any decision, accountability structures that make clear who is responsible, and incident response plans that show you are prepared for when things go wrong.

In 2026, governance is not a constraint on AI adoption. It is the foundation on which sustainable AI adoption is built. Organisations that treat it as an afterthought are building on sand.

If your organisation needs a governance gap assessment, a phased implementation roadmap, or hands-on help closing specific control gaps, Moweb’s AI Security & Governance practice works with enterprises across financial services, healthcare, manufacturing, and technology to deliver exactly that. Talk to our team about your governance requirements and get a clear picture of where you stand and what to prioritise next.
