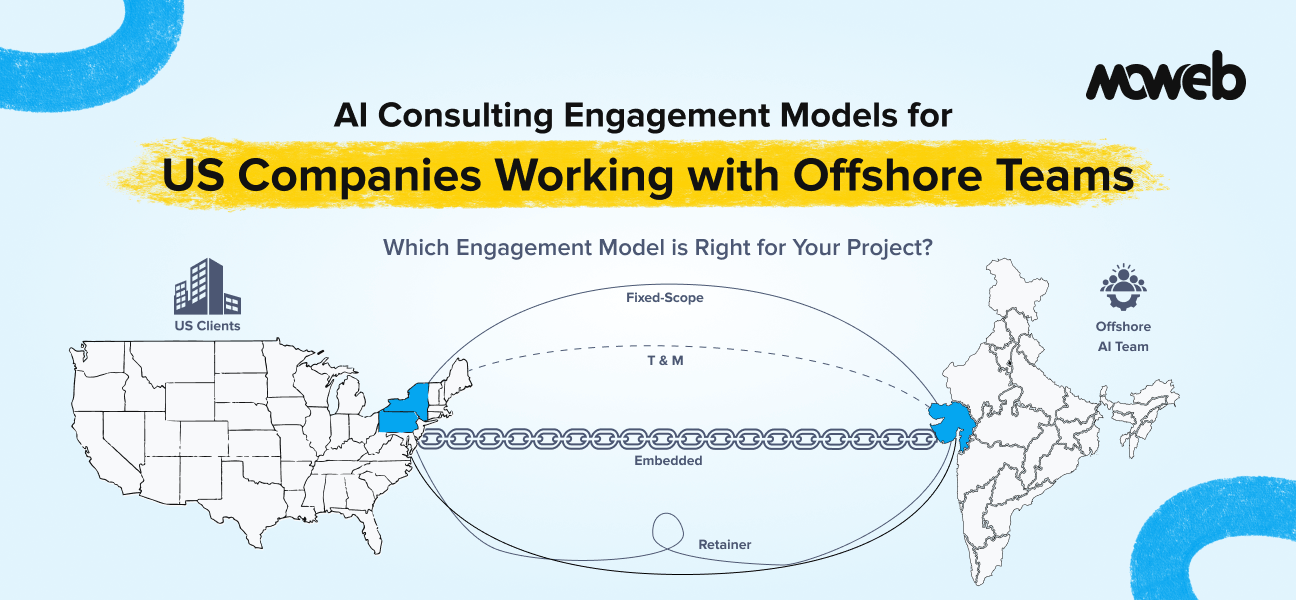

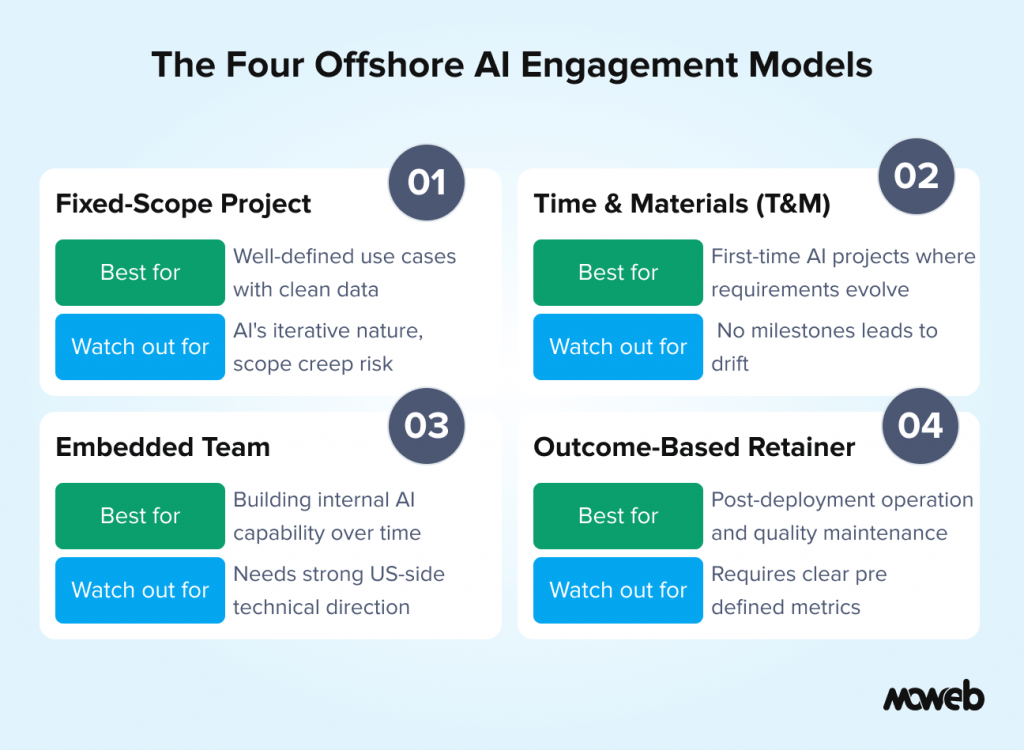

What engagement models work best for US companies working with offshore AI teams? Four engagement models are commonly used, each suited to different project types and organizational contexts. Fixed-scope projects work when requirements are precise and the AI use case is well-defined. Time-and-materials (T&M) engagements work when requirements will evolve as the team learns from real data and user behavior. Embedded team models work when the US organization wants to build internal AI capability alongside delivery. Outcome-based retainers work when the focus is on ongoing system quality and operational improvement rather than discrete project delivery. Most successful long-term offshore AI relationships combine T&M for initial build phases with a support retainer for operations.

What should US companies negotiate with offshore AI vendors? The most important contract terms for US companies working with offshore AI teams are: IP ownership (all code, model configurations, and data pipelines must be explicitly assigned to the US company, not retained by the vendor), data handling obligations (where data is processed and stored, and who has access during the engagement), knowledge transfer requirements (the US company must be able to operate the system independently at engagement end), SLA commitments for post-launch support (response time, uptime, escalation procedures), and change management provisions (how material changes to the AI system are scoped, priced, and approved). These provisions should be reviewed by legal counsel familiar with cross-border software development agreements.

US companies working with offshore AI development teams in 2026 are navigating a more mature but still complex engagement landscape. The days when offshore meant “cheap developers executing pre-defined tasks” are long gone for AI work. Building production AI systems requires genuine domain expertise, architectural judgment, security discipline, and ongoing operational support – none of which can be bought simply by renting hours from a lowest-cost vendor who just added “AI” to their services page. Unlike nearshore teams in Latin America that offer time-zone proximity, offshore AI teams in India and Eastern Europe compete on depth of AI/ML talent and cost efficiency, but they require more structured AI consulting engagement models to succeed.

At the same time, the cost advantage of working with offshore AI teams remains significant. A senior AI engineer in India or Eastern Europe with genuine production experience costs roughly 30% to 50% of the equivalent US-based hire, depending on seniority and specialization. For US mid-market companies with real AI ambitions but constrained AI budgets, offshore delivery done well is a legitimate and frequently compelling route to production AI systems they could not afford to build domestically.

The key phrase is “done well.” And doing it well depends heavily on choosing the right engagement model, structuring the right contractual protections, and managing the relationship with the operational discipline that offshore AI delivery requires.

This guide covers what US companies need to know to make offshore AI consulting engagements productive rather than frustrating.

Why the Engagement Model Matters More for AI Than for Traditional Software

In traditional offshore software development, engagement model mismatches are expensive but recoverable. A fixed-scope project with poorly defined requirements produces rework. A T&M engagement without clear milestones drifts. These are painful but fixable.

In offshore AI development, engagement model mismatches are more consequential for three reasons.

First, AI project requirements are inherently iterative. You rarely know exactly what the model or system needs to do until you have run it on real data and seen where it falls short. A fixed-scope AI engagement with rigid deliverable definitions frequently fails because the deliverables defined before the project started do not accurately reflect what the project needs to produce once real data behaviour is understood. US clients who insist on fixed-scope pricing for AI projects often get exactly what was scoped – which is not necessarily what they needed.

Second, AI quality is harder to assess remotely. A traditional software deliverable can be tested against defined acceptance criteria: does the button work, does the form submit, does the report generate? An AI system’s quality is probabilistic and context-dependent: it is not right or wrong, it is accurate to a greater or lesser degree across a distribution of inputs. Evaluating this requires a structured evaluation framework and meaningful test data, not just a demonstration on clean examples. US clients who accept “it looks good in the demo” as delivery acceptance for an AI engagement frequently discover production quality gaps after the engagement has closed.

Third, AI systems require ongoing operational involvement. Traditional software, once delivered, is relatively static. AI systems degrade without maintenance: models drift, knowledge bases become stale, prompt injection vulnerabilities emerge, and usage patterns reveal failure modes that testing did not anticipate. MLOps infrastructure, including automated retraining pipelines, drift monitoring, and inference logging, must be part of the engagement scope from the start, not retrofitted after go-live. An offshore AI engagement that ends at “go live” with no post-deployment support provisions leaves the US company with a system that will degrade without the technical knowledge to address it.
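As a rough illustration of what drift monitoring can look like, the sketch below compares a baseline sample of model scores with a recent sample using the Population Stability Index (PSI). PSI is one common drift signal and is an assumption here, not a method this guide prescribes; the function name, the bin count, and the 0.2 alert threshold in the usage lines are likewise illustrative.

```python
import math

def population_stability_index(baseline, recent, bins=10):
    """Compare two samples of a numeric signal (e.g. model scores) using PSI."""
    lo = min(min(baseline), min(recent))
    hi = max(max(baseline), max(recent))
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if every value is identical

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            idx = min(int((v - lo) / width), bins - 1)
            counts[idx] += 1
        # A small floor keeps log() defined when a bucket is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    expected = bucket_shares(baseline)
    actual = bucket_shares(recent)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))

# Hypothetical usage: flag the model for review when drift exceeds a chosen threshold.
baseline_scores = [i / 100 for i in range(100)]
recent_scores = [0.5 + i / 200 for i in range(100)]
needs_review = population_stability_index(baseline_scores, recent_scores) > 0.2
```

A check like this would typically run on a schedule against logged inference data, which is why inference logging needs to be in scope from the start.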

Understanding these three dynamics shapes which engagement model is appropriate for each situation.

The Four Engagement Models for US-Offshore AI Delivery

Model 1: Fixed-Scope Project

How it works: The US company and the offshore team define specific deliverables, a timeline, and a fixed price before the project begins. The offshore team delivers the defined scope; the US company pays the agreed price.

When it works for AI: Fixed-scope works for AI when the use case is genuinely well-defined and the data environment is understood before the project starts. The best candidates are: RAG system builds where the document corpus is clean and accessible, the user queries are well-understood, and the quality thresholds are pre-defined; ML model builds where labelled historical data exists and the prediction target is precisely specified; or second or third AI deployments at organisations that have done this before and have the evaluation frameworks in place to define deliverables precisely.

When it fails for AI: Fixed-scope fails for AI when requirements are defined at the aspirational level (“we want an AI that improves customer satisfaction”) rather than the specific level (“we want a RAG system that answers 90% of our top 50 customer query types with accuracy above 85% as measured by RAGAS”). It also fails when data quality is unknown at the time of scoping, which is the case for the majority of first-time AI projects.
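A specific requirement of this kind can be written down as an executable check. The sketch below assumes the per-query-type scores have already been produced by whichever evaluation framework the parties agreed on; it does not call RAGAS itself, and the function name and default thresholds are illustrative.

```python
def meets_scoped_target(scores_by_query_type, min_score=0.85, min_share=0.90):
    """Return (passed, share) for a 'share of query types above a score' target."""
    if not scores_by_query_type:
        raise ValueError("no evaluation scores supplied")
    passing = sum(1 for s in scores_by_query_type.values() if s > min_score)
    share = passing / len(scores_by_query_type)
    return share >= min_share, share

# Hypothetical evaluation results for 50 query types: 45 above the bar, 5 below.
scores = {f"query_type_{i}": 0.91 for i in range(45)}
scores.update({f"query_type_{i}": 0.60 for i in range(45, 50)})
passed, share = meets_scoped_target(scores)
```

An aspirational requirement such as "improves customer satisfaction" cannot be expressed this way, which is a quick test of whether a scope is ready for fixed pricing.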

Key contract provisions: Definition of done must include specific, measurable quality metrics (not just functional delivery). Data quality assumptions must be explicitly stated with change order provisions if actual data quality requires additional remediation work. Intellectual property assignment must explicitly transfer all deliverables to the US company.

Model 2: Time-and-Materials (T&M)

How it works: The US company pays for the offshore team’s time at defined daily or weekly rates. Scope evolves as the project progresses; cost is variable.

When it works for AI: T&M is the natural fit for AI projects where requirements will evolve, which is most first-time AI deployments at US mid-market companies. The iterative nature of AI development (data quality discovery, model evaluation, prompt optimisation, retrieval tuning) means the actual work required cannot be precisely scoped upfront without meaningful data access.

T&M also works well when the US company wants a strong influence over technical decisions throughout the engagement, not just at delivery. With T&M, the US stakeholder is an active participant in the project rather than a deliverable recipient at the end.

When it fails for AI: T&M fails when there is no governance over scope: when the project drifts without clear milestones, when the offshore team bills hours on exploratory work without producing progress toward defined outcomes, or when the US company’s internal decision-making delays add time to the engagement without accountability.

Key contract provisions: Despite the variable nature of T&M, milestone-based checkpoints should be defined at the start: specific deliverables that mark the end of each phase (data assessment complete, architecture reviewed and approved, evaluation baseline established, production deployment complete). Budget caps per phase provide cost predictability without the rigidity of a fixed scope. Weekly status reporting with specific metrics is non-negotiable. Consider starting with a paid 2-week discovery or pilot sprint ($3,000–$8,000) before committing to the full T&M engagement. This lets you validate the vendor’s technical capability and communication quality with minimal risk.
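The milestone gates and per-phase budget caps described above can be tracked with very little machinery. This is a minimal sketch; the `Phase` class, its field names, and the dollar figures are hypothetical and not taken from any particular contract.

```python
from dataclasses import dataclass

@dataclass
class Phase:
    name: str
    budget_cap: float                  # agreed maximum spend for the phase, in USD
    spent: float = 0.0
    deliverable_accepted: bool = False

def log_spend(phase, amount):
    """Record billed spend, refusing anything that would exceed the phase cap."""
    if phase.spent + amount > phase.budget_cap:
        raise ValueError(f"{phase.name}: spend would exceed cap, raise a change request")
    phase.spent += amount

def next_phase_allowed(phase):
    """A later phase starts only once this phase's deliverable is accepted."""
    return phase.deliverable_accepted

# Hypothetical usage for the first milestone.
data_assessment = Phase("data assessment", budget_cap=5000.0)
log_spend(data_assessment, 3000.0)
```

The point of the cap is that exceeding it forces an explicit conversation rather than a silent overrun.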

Model 3: Embedded Team Model

How it works: The offshore team embeds within the US company’s development process, working as an extension of the internal team: in the same project management tools, following the same engineering standards, participating in the same sprint ceremonies, and with direct reporting relationships to the US technical lead.

When it works for AI: The embedded model is the best choice when the US company wants to build internal AI capability over time rather than simply receive a delivered product. The embedded model transfers knowledge organically through daily collaboration rather than through a formal handover at the end of a project. It also works well for ongoing AI product development where the system is continuously evolving rather than being built once and handed over.

When it fails for AI: The embedded model fails when the US company does not have the internal technical leadership to direct the offshore team effectively. An embedded offshore AI team without strong US-side technical direction tends to execute tasks rather than exercise architectural judgment, producing technically functional work that does not add up to a strategically coherent system.

Key contract provisions: The US company must retain full IP ownership of all work produced within the embedded arrangement. Engineering standards, code review processes, and deployment approval procedures must be explicitly agreed upon and documented. Exit provisions must address knowledge continuity: what happens to institutional knowledge if the offshore team members rotate?

Model 4: Outcome-Based Retainer

How it works: The US company pays a monthly retainer for defined operational outcomes: system uptime, response quality above defined thresholds, a specified volume of monitored incidents addressed within defined SLAs, and a specified volume of enhancement work delivered per month.

When it works for AI: The outcome-based retainer is the ideal model for post-deployment AI system operations. Once a system is in production, the value delivered is operational: the system works, quality is maintained, the knowledge base is current, and issues are addressed quickly. Paying per hour for this work misaligns incentives – the vendor earns more when incidents occur than when they are prevented. An outcome-based retainer aligns the offshore team’s incentives with the US company’s operational goals.

When it fails for AI: Outcome-based retainers require clearly defined metrics that both parties trust. If the quality metrics are vague or disputed, the model becomes contentious. They also require enough operational history to set reasonable baseline expectations – they are not appropriate for new system launches where performance baselines have not been established.
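To make "clearly defined metrics that both parties trust" concrete, a monthly retainer report can be computed from raw operational data that both sides can inspect. The sketch below is illustrative only: the function name, the 99.5% uptime target, the 4-hour resolution SLA, and the 95% share target are all assumptions rather than recommended values.

```python
def retainer_report(downtime_minutes, incident_resolution_hours, days_in_month=30,
                    uptime_target=99.5, sla_hours=4.0, sla_share_target=0.95):
    """Summarise a month of operations against agreed retainer targets."""
    total_minutes = days_in_month * 24 * 60
    uptime_pct = 100.0 * (total_minutes - downtime_minutes) / total_minutes
    if incident_resolution_hours:
        within = sum(1 for h in incident_resolution_hours if h <= sla_hours)
        sla_share = within / len(incident_resolution_hours)
    else:
        sla_share = 1.0  # no incidents means nothing breached the SLA
    return {
        "uptime_pct": uptime_pct,
        "sla_share": sla_share,
        "targets_met": uptime_pct >= uptime_target and sla_share >= sla_share_target,
    }

# Hypothetical month: one hour of downtime, four incidents, one resolved late.
report = retainer_report(downtime_minutes=60, incident_resolution_hours=[1.0, 2.0, 3.0, 5.0])
```

Setting sensible targets requires a few months of history, which is why this model suits established systems rather than new launches.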

Managing the Offshore AI Relationship: What US Companies Get Wrong

Beyond engagement model selection, how US companies manage their offshore AI relationships significantly affects outcomes. These are the patterns that consistently cause problems.

Treating offshore AI engineers as ticket executors rather than partners. The best offshore AI engineers are not order-takers. They hold well-formed views on architecture, tooling, and quality trade-offs. US companies whose offshore team members execute instructions without feeling empowered to push back on bad ideas tend to get what they ask for rather than what they need. The most productive offshore AI relationships treat the offshore team as a genuine technical partner that is expected to contribute to design decisions, not just to execute them.

Treating sprint demos as acceptance. AI quality cannot be assessed in a live demo. Acceptance criteria for AI work must be metric-based: RAGAS scores for RAG systems, precision and recall for ML models, and task completion rates for AI agents. US companies that accept demo quality as delivery quality discover production gaps after the engagement has closed and the leverage has gone.
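For a binary ML classifier, a metric-based acceptance gate can be as simple as the sketch below. It computes precision and recall on a held-out labelled test set and compares them with thresholds agreed in the contract; the function names and the 0.80 and 0.75 thresholds are assumptions for illustration.

```python
def precision_recall(y_true, y_pred):
    """Precision and recall for binary labels, where 1 is the positive class."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall

def accept_delivery(y_true, y_pred, min_precision=0.80, min_recall=0.75):
    """Accept only if both agreed thresholds are met on the held-out test set."""
    precision, recall = precision_recall(y_true, y_pred)
    return precision >= min_precision and recall >= min_recall

# Hypothetical held-out labels and model predictions.
labels = [1, 1, 1, 1, 0, 0, 0, 0]
predictions = [1, 1, 1, 0, 1, 0, 0, 0]
accepted = accept_delivery(labels, predictions)
```

The test set should be held by the US company and reflect production inputs, since a gate run on the vendor's own clean examples is just a demo in another form.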

Underinvesting in data preparation before the engagement starts. Data quality problems discovered mid-engagement create scope change conversations that are expensive and relationship-straining. US companies that conduct an honest data quality assessment and provide the offshore team with clean, representative, accessible data from day one have significantly smoother engagement trajectories.

Not planning for knowledge transfer. The end of an offshore AI engagement is a knowledge transfer event that needs to be planned, not assumed. What documentation will be produced? What training will the internal team receive? What is the post-engagement support model? US companies that do not negotiate these provisions explicitly frequently end the engagement with a working system but no ability to maintain or evolve it.

What US Companies Should Expect from Moweb as an Offshore AI Partner

Moweb operates from offices in Ahmedabad, India, and Secaucus, New Jersey, which means we operate in both Indian Standard Time and US Eastern/Central/Pacific time zones simultaneously. Our New Jersey presence means US clients have a domestic point of contact for engagement management, compliance conversations, and stakeholder alignment, while our India engineering team provides the depth and cost structure of offshore delivery.

Our standard engagement approach for US clients:

- Discovery phase (weeks 1-2): We conduct an AI readiness assessment covering data quality, infrastructure, use case clarity, and governance requirements before any build commitment. US clients know exactly what they are building and what it will cost before the main engagement begins.

- Transparent T&M with milestone gates: We work T&M for AI builds with clearly defined milestone deliverables and budget caps per phase. You are never surprised by cost because we communicate scope changes as they emerge, not after.

- Metric-based delivery acceptance: We define quality metrics in the discovery phase and deliver against them. “It works” is not our acceptance standard. RAGAS scores, precision/recall, task completion rates, and access control validation are.

- Knowledge transfer by design: Every engagement includes architecture documentation, operational runbooks, and internal team training sessions. Our goal is that your team can operate the system independently. We want a long-term relationship built on value, not dependency.

- US-accessible support: Post-deployment support is available via our New Jersey office during US business hours and from our India team for extended coverage. US clients do not manage time zone gaps alone.

Frequently Asked Questions About Offshore AI Consulting Engagements for US Companies

Is working with an offshore AI team as reliable as a US-based team? Reliability in an offshore AI engagement is a function of the vendor’s experience, governance practices, and engagement model – not geography. An offshore AI team with genuine production experience, a structured delivery methodology, and clear quality metrics is more reliable than a US-based team that is new to AI development. The key is evaluating vendors on production track record and delivery methodology rather than location.

How do we manage time zone differences with an offshore AI team? The most effective approach is structured overlap windows: defined daily hours when US and offshore team members are simultaneously available for real-time collaboration (typically 2 to 4 hours covering late morning US Eastern and evening India time). Outside overlap windows, asynchronous communication handles routine updates and progress reporting. The key investment is in documentation discipline: decisions, blockers, and design choices must be written down, not assumed to be remembered from verbal conversations.
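Because the US observes daylight saving time and India does not, the India-side hours of a fixed US Eastern overlap window shift by an hour twice a year. The sketch below uses Python's standard `zoneinfo` module (which relies on a time zone database being available on the system) to translate a window into India local time; the function name and the 9:30 to 12:30 window are illustrative choices.

```python
from datetime import date, datetime, time
from zoneinfo import ZoneInfo

US_EASTERN = ZoneInfo("America/New_York")
INDIA = ZoneInfo("Asia/Kolkata")

def overlap_in_india_time(day, start=time(9, 30), end=time(12, 30)):
    """Translate a US Eastern overlap window on `day` into India local times."""
    start_et = datetime.combine(day, start, tzinfo=US_EASTERN)
    end_et = datetime.combine(day, end, tzinfo=US_EASTERN)
    return start_et.astimezone(INDIA), end_et.astimezone(INDIA)

# The same US window lands an hour later in India during US winter time.
winter_start, winter_end = overlap_in_india_time(date(2026, 1, 15))
summer_start, summer_end = overlap_in_india_time(date(2026, 7, 15))
```

Publishing the window in both local times, and updating it at each US clock change, avoids the recurring confusion over when the shared hours actually are.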

What IP protections should US companies require in an offshore AI contract? At minimum: explicit assignment of all intellectual property (including code, model configurations, prompt templates, data pipelines, and documentation) to the US company; no retention or reuse rights for the offshore vendor on any client-specific work; data handling obligations specifying where US company data is processed and stored; and a clear definition of what constitutes work product versus vendor tooling that predated the engagement.

How should US companies handle data security when working with offshore AI teams? Data security in offshore AI engagements requires: a Data Processing Agreement specifying how the offshore team accesses, processes, and stores US company data; access controls that limit offshore team members to the minimum data required for their specific tasks; audit logging of all data access during the engagement; and clear data deletion obligations at engagement end. For regulated data (HIPAA, CCPA, financial PII), additional contractual protections and potentially additional technical controls (data anonymisation before sharing, processing within US-only infrastructure) may be required.
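One technical control that can be applied before data leaves US infrastructure is replacing direct identifiers with keyed hashes. The sketch below is a simple pseudonymisation step under stated assumptions: the function name, field names, and 16-character truncation are hypothetical, and keyed hashing on its own should not be assumed to meet HIPAA de-identification or any other regulatory standard.

```python
import hashlib
import hmac

def pseudonymise(record, pii_fields, secret_key):
    """Replace PII fields with keyed hashes so records stay joinable but unreadable."""
    cleaned = dict(record)  # copy so the original record is left untouched
    for field in pii_fields:
        if field in cleaned and cleaned[field] is not None:
            digest = hmac.new(secret_key, str(cleaned[field]).encode("utf-8"), hashlib.sha256)
            cleaned[field] = digest.hexdigest()[:16]
    return cleaned

# Hypothetical usage: the key stays with the US company and is never shared.
key = b"kept-on-us-infrastructure"
row = {"email": "jane@example.com", "plan": "enterprise", "tickets": 4}
shared_row = pseudonymise(row, pii_fields=["email"], secret_key=key)
```

Using a keyed hash rather than a plain one means the offshore team cannot reverse values by hashing guessed inputs, while the same customer still maps to the same token across records.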

What is a reasonable daily rate for an offshore AI engineer in 2026? Rates vary by location, seniority, and specialisation. Senior AI engineers with RAG, ML, and agent deployment experience at established Indian development firms typically charge roughly $35 to $65 per hour in 2026, or about $280 to $520 per person per 8-hour day. Mid-level AI engineers with one to three years of production experience charge roughly $20 to $40 per hour, or about $160 to $320 per person per day. These rates are roughly 30% to 50% of equivalent US market rates, making offshore delivery genuinely compelling for cost-sensitive US mid-market companies. Be cautious of rates significantly below these ranges – they typically reflect inexperienced engineers being presented as AI specialists.

How do we evaluate an offshore AI vendor’s genuine experience vs claimed experience? Ask for: a specific production deployment they can walk you through technically (what LLM, what vector database, what chunking strategy, what evaluation framework); a reference client in your industry or use case area you can speak with directly; and the specific engineers who would work on your project – not the senior architects who present in sales conversations. The ability to answer “what went wrong on a past project and how you fixed it” confidently is one of the most reliable signals of genuine production experience.

Conclusion: The Right Offshore AI Engagement Creates Lasting Capability

The US companies that have the most productive offshore AI relationships in 2026 treat them as capability partnerships, not cost arbitrage arrangements. They choose engagement models that match their actual project context, invest in the data quality work that makes delivery smooth, define quality metrics before accepting delivery, and plan knowledge transfer as a first-class deliverable rather than a nice-to-have.

The offshore AI vendors worth working with are the ones who operate the same way: who challenge underspecified requirements, who communicate scope changes as they emerge, who define and deliver against quality metrics, and whose goal is that you can operate independently after the engagement ends. Moweb’s AI Strategy & Consulting and Generative AI & LLM development teams operate exactly this way for US clients. We are based in New Jersey and Ahmedabad, we work in your time zones, and we build AI systems you can trust and operate. If you are a US company planning an AI project and want to understand what working with Moweb actually looks like, let’s have that conversation.
