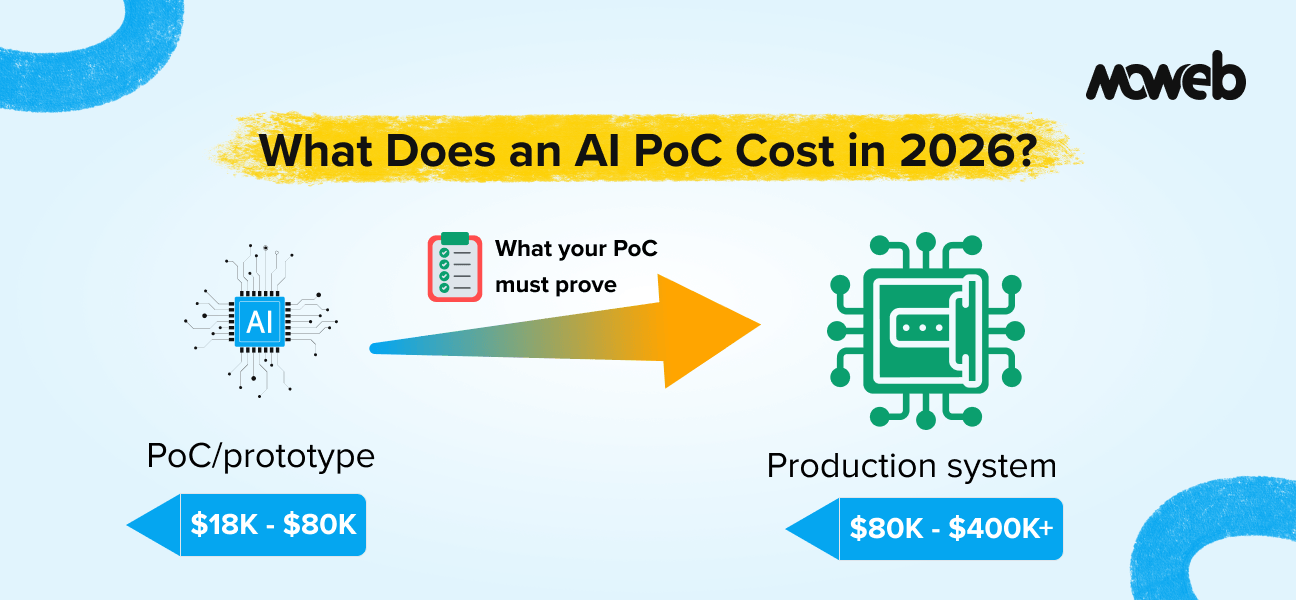

What does an AI proof of concept cost in 2026? An AI proof of concept for an enterprise use case typically costs between $15,000 and $80,000 in 2026, depending on the type of AI capability being demonstrated, the complexity of the data environment, the number of integrations required, and the level of governance and security work included. A focused RAG PoC on a clean, single-source document corpus sits toward the lower end. An AI agent PoC requiring multiple enterprise system integrations, access control design, and adversarial testing sits toward the higher end. A PoC that costs significantly less than $15,000 is almost certainly too narrowly scoped to produce meaningful production-readiness evidence.

What should an AI PoC actually deliver? A well-scoped AI PoC should deliver: a working system demonstrating the core AI capability on representative real data (not synthetic data), a documented evaluation of output quality against defined metrics, an architecture recommendation for production scaling, an honest assessment of risks and limitations, and a clear cost and timeline estimate for a production deployment. A PoC that only produces a demo on clean test data without these outputs has not delivered what an enterprise needs to make an informed go/no-go decision.

What percentage of AI PoCs fail to reach production? According to Gartner, at least 30% of generative AI projects are abandoned after the proof-of-concept stage, primarily due to poor data quality, unclear business value, and escalating costs. BCG research finds that the average enterprise scraps 46% of its AI PoCs before they reach production. A well-scoped PoC with pre-defined acceptance criteria and real data can significantly reduce this failure rate.

“Can we just do a quick PoC first?” is one of the most common questions in enterprise AI planning conversations. Yet the true cost of an AI proof of concept, and what a PoC should actually deliver, remain among the most misunderstood aspects of that planning.

The idea behind a proof of concept is sound: before committing a full production budget, validate that the AI approach works for your specific use case on your actual data. Test the architecture. Surface the problems early. Get real evidence before the large investment.

The execution, however, varies enormously. Some AI PoCs are genuinely useful: they test the right things, produce measurable quality evidence, reveal the real technical challenges, and give leadership the information they need to make an informed decision. Others are elaborate demos that work on carefully selected test data, miss the hard problems entirely, and leave organisations with a false sense of confidence heading into production.

The stakes are significant. According to Gartner, at least 30% of generative AI projects are abandoned after the proof-of-concept stage, and BCG data shows that the average enterprise scraps 46% of AI PoCs before production. The primary causes are not technical: they are poor data quality, unclear success criteria, and PoC scopes that do not test what actually matters for production. Understanding AI proof of concept cost is therefore inseparable from understanding what a PoC must prove.

The cost of a PoC tells you something about which type you are getting. But price alone is not enough. You also need to understand what a PoC should include, what makes costs go up and down, and how to evaluate whether a proposal is scoped to produce genuine evidence or a polished presentation.

This guide gives you honest cost ranges for the most common enterprise AI PoC types in 2026, an explanation of what drives those costs, and the questions you need to ask to determine whether a PoC quote is realistic and worth the investment.

What an AI PoC Is (and What It Is Not)

Before getting into costs, it is worth being precise about what a PoC should actually do in an enterprise AI context.

A proof of concept is not a prototype. A prototype is a simplified version of a product built to explore design and user experience. A PoC is a technical validation that a specific AI approach works reliably enough, on your specific data and within your specific constraints, to justify building a full production system. A pilot extends the PoC into a limited live deployment with real users: still not full production, but beyond pure technical validation. This guide focuses on the PoC stage, before pilot deployment begins.

The distinction between a prototype and a PoC matters because the two have very different success criteria and very different cost profiles. A prototype can be built quickly on synthetic or idealised data by a small team in a few weeks. A genuine PoC requires real data (or at least a representative sample of real data), real system integration (or at least a realistic simulation of integration complexity), and an evaluation process that measures performance against defined quality thresholds.

A PoC that is scoped like a prototype will cost less. It will also tell you much less about whether your production system will actually work.

There are some things a PoC genuinely cannot tell you, and being honest about this upfront saves difficult conversations later:

- It cannot tell you exactly how the system will behave at full production scale, because it is not running at production scale.

- It cannot validate user adoption or workflow integration, because it is not deployed to real users in a real workflow.

- It cannot give you production-grade security and compliance evidence, because the governance framework is not fully implemented.

What a good PoC can tell you: whether the core AI capability produces outputs of sufficient quality on real representative data; what the primary technical challenges are and how addressable they are; what a realistic production architecture looks like; and what a credible production cost and timeline estimate is.

AI Proof of Concept Cost by Type: 2026 Benchmark

The following cost ranges reflect current market rates in 2026 for enterprise AI PoC engagements. They assume a vendor with genuine enterprise AI experience (ISO 27001 certified, CMMI Level 3 or equivalent, with a verifiable production deployment track record) working on real enterprise data with representative integration complexity.

These are not the cheapest rates available. They are the rates at which you can expect a PoC that produces genuine production-readiness evidence rather than a demo.

RAG and Knowledge Management PoC

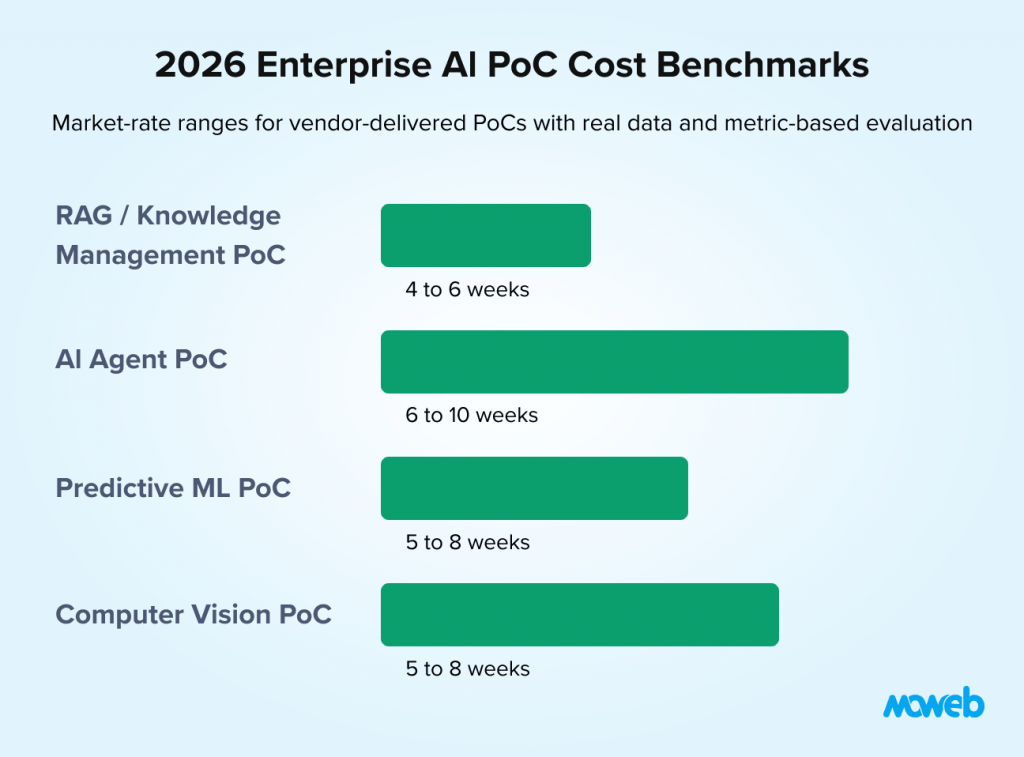

Typical cost range: $18,000 to $45,000. Typical duration: 4 to 6 weeks

A RAG PoC demonstrates that a retrieval-augmented generation system can answer accurately from a defined corpus of your organisation’s documents. The scope typically includes: ingestion and indexing of a representative document sample (500 to 5,000 documents), chunking and embedding pipeline setup, vector database configuration (commonly Pinecone, Qdrant, Weaviate, or pgvector for PostgreSQL-native deployments), a retrieval pipeline with basic hybrid search, LLM integration with a defined system prompt, and evaluation on a set of test queries using RAGAS, a widely used open-source framework for measuring RAG quality.
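As a rough illustration of the chunking step, the sketch below splits text into fixed-size windows that overlap, so text near a boundary appears in both neighbouring chunks. It is a minimal Python sketch under simplifying assumptions: it counts characters rather than tokens, ignores document structure such as headings and tables, and its `chunk_size` and `overlap` defaults are illustrative, not tuned values.

```python
def chunk_text(text: str, chunk_size: int = 800, overlap: int = 100) -> list[str]:
    """Split text into fixed-size character windows that overlap.

    chunk_size and overlap are illustrative defaults (an assumption),
    not recommended values for any particular corpus.
    """
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    step = chunk_size - overlap  # how far each window advances
    chunks = []
    for start in range(0, len(text), step):
        chunk = text[start:start + chunk_size]
        if chunk.strip():  # skip whitespace-only windows
            chunks.append(chunk)
        if start + chunk_size >= len(text):
            break  # this window already reached the end of the text
    return chunks
```

For example, `chunk_text("abcdefghij", chunk_size=4, overlap=1)` returns `["abcd", "defg", "ghij"]`. Real pipelines usually chunk by tokens or by document sections, and custom chunking for complex formats is one of the items that moves a RAG PoC toward the higher end of the range.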

Cost drivers that push this toward the higher end of the range include: complex document types requiring OCR or table-aware extraction; multiple source systems requiring separate ingestion pipelines; access control requirements (role-based document filtering at retrieval time); highly regulated data environments requiring additional security controls; and multilingual document corpora requiring specialised embedding models.
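Role-based document filtering at retrieval time means each indexed chunk carries the roles allowed to read its source document, and anything the user is not entitled to see is removed before it can reach the LLM prompt. A minimal Python sketch, assuming a hypothetical `Chunk` record whose `allowed_roles` field is populated at ingestion:

```python
from dataclasses import dataclass, field


@dataclass
class Chunk:
    text: str
    score: float  # similarity score returned by the vector search
    allowed_roles: set[str] = field(default_factory=set)  # hypothetical ACL metadata


def filter_by_role(candidates: list[Chunk], user_roles: set[str], top_k: int = 5) -> list[Chunk]:
    """Drop chunks the user may not read, then keep the top_k by score."""
    permitted = [c for c in candidates if c.allowed_roles & user_roles]
    return sorted(permitted, key=lambda c: c.score, reverse=True)[:top_k]
```

Filtering after the search, as here, can leave fewer than `top_k` results when most matches are restricted. The vector databases named above all offer some form of metadata filtering inside the query itself (for pgvector, an ordinary SQL `WHERE` clause), which is usually the better production design.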

A RAG PoC at the lower end of the range ($18,000 to $22,000) typically covers a single document type from a single source, a managed vector database service, basic retrieval without reranking, and standard RAGAS evaluation. It is appropriate for teams that want to validate the core concept before investing in the full architecture.

A RAG PoC at the higher end ($35,000 to $45,000) typically covers multiple document types and sources, custom chunking for complex formats, hybrid search with reranking, access control implementation, and a comprehensive evaluation report with production architecture recommendations.
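Hybrid search combines a keyword ranking (such as BM25) with a vector-similarity ranking, and the two lists have to be merged somehow. One simple, widely used option, which this guide does not prescribe, is reciprocal rank fusion (RRF): each document scores the sum of 1 / (k + rank) across the lists it appears in. A minimal Python sketch, with `k = 60` as the commonly cited default:

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of document IDs into one fused ranking.

    Each document scores sum(1 / (k + rank)) over the lists it appears in,
    where rank is 1-based. Documents missing from a list get nothing from it.
    """
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by document ID for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))
```

With a keyword ranking of `["a", "b", "c"]` and a vector ranking of `["b", "c", "d"]`, the fused order is `["b", "c", "a", "d"]`: documents found by both retrievers rise to the top. In the higher-end scope, a reranker would then re-score the fused top results with a more expensive model.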

AI Agent PoC

Typical cost range: $30,000 to $70,000. Typical duration: 6 to 10 weeks

An AI agent PoC demonstrates that an autonomous agent can reliably execute a defined multi-step workflow using real enterprise tool integrations. The scope typically includes: use case definition and task decomposition, orchestration framework setup (LangGraph, AutoGen, or CrewAI), tool integration for 3 to 5 enterprise systems (using Model Context Protocol, or MCP, servers where available and custom integrations where not), human-in-the-loop checkpoint design, basic adversarial testing for prompt injection and unintended tool use, and performance measurement against defined task completion criteria.
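Human-in-the-loop checkpoint design usually comes down to deciding which tool calls an agent may make on its own and which must pause for a person. The framework-agnostic Python sketch below illustrates the idea; it is not the API of LangGraph, AutoGen, or CrewAI, and the tool names and the `REQUIRES_APPROVAL` policy are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class ToolCall:
    tool: str
    args: dict[str, Any] = field(default_factory=dict)


# Hypothetical policy: tools with external side effects need human sign-off.
REQUIRES_APPROVAL = {"send_email", "update_crm_record", "close_ticket"}


def execute_with_checkpoint(
    call: ToolCall,
    tools: dict[str, Callable[..., Any]],
    approve: Callable[[ToolCall], bool],
) -> dict[str, Any]:
    """Run one tool call, pausing for human approval where policy requires it."""
    if call.tool not in tools:
        # Refuse tool names the agent was never given (e.g. after prompt injection).
        return {"status": "rejected", "reason": f"unknown tool {call.tool!r}"}
    if call.tool in REQUIRES_APPROVAL and not approve(call):
        return {"status": "declined", "reason": "human reviewer did not approve"}
    return {"status": "ok", "result": tools[call.tool](**call.args)}
```

In a PoC, the `approve` callback might be a reviewer queue or simply a console prompt; counting how often it fires also yields the human escalation frequency that belongs in the evaluation report. Logging every `rejected` result is a cheap way to surface unintended tool use during adversarial testing.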

AI agent PoCs are more expensive than RAG PoCs primarily because of tool integration complexity. Connecting to real enterprise systems (CRM, ticketing, databases, email) requires authentication setup, API testing, error handling, and integration-specific prompt engineering that takes meaningful time even for experienced teams. Each additional tool integration adds cost.

Cost drivers that push agent PoCs toward the higher end include: legacy system integrations requiring custom wrappers (no available MCP server); high-security environments requiring additional access control layers; multi-agent architectures (more than one agent in the workflow); complex approval and escalation workflows; and organisations that require a detailed governance and safety assessment report as part of the PoC output.

A minimal viable agent PoC ($30,000 to $38,000) typically demonstrates one well-defined workflow with 3 to 4 tool integrations using available MCP servers, basic human-in-the-loop design, and task completion testing on representative scenarios.

A comprehensive agent PoC ($50,000 to $70,000) typically covers a more complex workflow, 5 to 6 tool integrations (including at least one custom integration), adversarial safety testing, access control implementation, and a detailed production architecture and governance recommendation.

Predictive Machine Learning PoC

Typical cost range: $20,000 to $55,000. Typical duration: 5 to 8 weeks

A predictive ML PoC demonstrates that a machine learning model can achieve meaningful predictive accuracy on a defined business problem using your organisation’s data. The scope typically includes: data quality assessment and feature engineering, model selection and training (baseline plus 2 to 3 model architectures), cross-validation and performance evaluation, feature importance analysis, and a production deployment recommendation.
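Cross-validation means training and scoring the model on several different train/test splits, so the reported accuracy does not hinge on one lucky split. The sketch below generates k-fold splits in plain Python to show the mechanics; in practice, libraries such as scikit-learn provide `KFold` and `StratifiedKFold` for this, and time-ordered data usually calls for time-based splits instead. The `seed` parameter is an assumption added for reproducibility.

```python
import random


def k_fold_indices(
    n_samples: int, k: int = 5, seed: int = 0
) -> list[tuple[list[int], list[int]]]:
    """Return k (train_indices, test_indices) pairs; each sample is tested exactly once."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)  # shuffle once, reproducibly
    folds = [indices[i::k] for i in range(k)]  # k folds of near-equal size
    splits = []
    for i, test in enumerate(folds):
        # Every index not in the held-out fold goes into training.
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        splits.append((train, test))
    return splits
```

Each `(train, test)` pair trains one model and scores it once; the PoC report would then quote the mean and spread of the scores across folds rather than a single number.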

The biggest cost variable in ML PoCs is data quality. If your data requires significant cleaning, normalisation, and feature engineering before it is usable, this work dominates the PoC timeline and cost. Vendors who quote ML PoCs without first assessing your data quality are either not being honest about scope or are planning to deliver a PoC on preprocessed data that does not reflect your actual production environment.

Cost drivers that push ML PoCs higher include: poor data quality requiring substantial preprocessing; high-cardinality categorical features requiring specialist feature engineering; imbalanced datasets requiring specific sampling strategies; regulated use cases requiring model explainability documentation (SHAP values, feature importance reports); and real-time inference requirements that need to be validated at the PoC stage.

Computer Vision and Multimodal AI PoC

Typical cost range: $25,000 to $65,000. Typical duration: 5 to 8 weeks

A computer vision PoC demonstrates that an image or video-based AI system can achieve defined accuracy on a classification, detection, segmentation, or analysis task using your organisation’s image data. The scope typically includes: dataset curation and labelling (or review if labels exist), model selection (fine-tuned foundation model vs. custom architecture), training and evaluation, performance benchmarking against defined thresholds, and deployment pathway recommendation.

The largest cost variable in computer vision PoCs is data labelling. If your image data lacks quality annotations, labelling is a significant additional effort. If it has existing labels, the PoC timeline compresses considerably. Always clarify labelling requirements before accepting a computer vision PoC quote. For PoCs that combine vision with language models (e.g., document understanding or image-to-text pipelines), expect costs toward the higher end of this range, as they require both a vision model and an LLM integration layer.

What Drives AI PoC Costs Up (and What Keeps Them Down)

Understanding the cost drivers helps you scope a PoC intelligently rather than accepting whatever scope a vendor proposes.

Factors that increase PoC cost:

Real data complexity. If your data requires OCR, special parsing, deduplication, cleaning, or complex transformation before it can be used, this work must happen in the PoC. Vendors who propose skipping this to keep costs down are proposing a PoC on artificial data, which does not validate the real system.

Integration depth. Every additional enterprise system the PoC connects to adds authentication setup, testing, and integration-specific work. A PoC that needs to connect to CRM, ERP, email, and a document repository is more expensive than one that reads from a single document store.

Security and access control requirements. Regulated industries (financial services, healthcare, government) typically require the PoC to demonstrate that data is handled securely and that access controls are implemented correctly. This adds meaningful engineering and documentation work.

Evaluation rigour. A PoC evaluated against defined quality metrics (RAGAS scores for RAG; task completion rate and error rate for agents; precision and recall for ML) costs more than one evaluated informally. But informal evaluation is a leading reason PoCs fail to translate into confident production decisions.

Governance and AI risk documentation. In regulated industries, a PoC may need to demonstrate alignment with AI governance frameworks. This adds documentation and assessment work beyond the technical build.
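To show what the metric-based evaluation described under evaluation rigour looks like for an ML PoC, here is a minimal sketch that computes confusion-matrix counts, precision, recall, and F1 for a binary classifier. It assumes labels are encoded as 0 and 1, with 1 as the positive class; multi-class problems need per-class versions of the same calculation.

```python
def binary_metrics(y_true: list[int], y_pred: list[int]) -> dict[str, float]:
    """Confusion-matrix counts plus precision, recall and F1 for 0/1 labels."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must be the same length")
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # true negatives
    # Guard against division by zero when a class is never predicted or present.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"tp": tp, "fp": fp, "fn": fn, "tn": tn,
            "precision": precision, "recall": recall, "f1": f1}
```

For `y_true = [1, 1, 1, 0, 0, 0, 1, 0]` and `y_pred = [1, 1, 0, 0, 1, 0, 1, 0]`, the counts are 3 true positives, 1 false positive, 1 false negative, and 3 true negatives, giving precision, recall, and F1 of 0.75 each.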

Factors that keep PoC costs down without compromising value:

A clearly scoped use case. The more precisely defined the problem, the faster (and cheaper) the PoC. A PoC that starts with “explore whether AI could help our operations team” will spend the first two weeks defining the problem. A PoC that starts with “validate that a RAG system can answer compliance policy questions from our 3,000-document policy repository with 90% accuracy” can start building immediately.

Clean, accessible data. If your data is already in a consistent format, accessible via a documented API or export, and representative of the production environment, it dramatically reduces the PoC preparation time.

Available MCP servers for required integrations. For agent PoCs, using existing MCP servers (Salesforce, Jira, GitHub, Slack, Google Drive) instead of building custom integrations can reduce integration work from weeks to days.

Internal technical involvement. Having a technically capable internal team member involved in the PoC (not just as a stakeholder, but as a working participant) reduces the vendor’s discovery overhead and accelerates decision-making on data access, system access, and evaluation criteria.

Questions to Ask a Vendor Before Signing a PoC Agreement


Before committing to any AI PoC proposal, ask the vendor to answer each of the following explicitly:

- What data will the PoC run on: our actual production data, a representative sample, or synthetic data? If not our actual data, why not, and what does that mean for the validity of the results?

- What evaluation metrics will be used, and what are the acceptance thresholds? If there are no pre-defined metrics, how will we determine whether the PoC succeeded?

- Which enterprise system integrations will be real and which will be simulated? For any simulated integration, what does that mean for the risk of the actual production integration?

- What level of engineering experience will the team working on this PoC have, and is it the same team that handled the sales conversation?

- Which deliverables are specifically included? Are a production architecture recommendation and a risk assessment included in the fixed price, or are they extra?

- What is your estimate for the production build cost based on what you now know about our environment, and what are the top three assumptions that could change that estimate?

A vendor who cannot answer all six questions clearly before signing is not ready to deliver a well-scoped PoC.

What You Should Get at the End of a PoC

This is where the most significant variation between vendors occurs. Make sure you know what deliverables are included before signing a PoC agreement.

At the end of a well-scoped enterprise AI PoC, you should receive:

A working system on real representative data. Not a demo on clean test data. A system processing the same types, formats, and quality of data that the production system will encounter.

A documented quality evaluation report. Specific metrics showing how the system performed against defined thresholds. For a RAG PoC, this means RAGAS scores. For an ML PoC, this means precision, recall, F1, and a confusion matrix. For an agent PoC, this means task completion rate, error rate, and human escalation frequency. “It worked well in testing” is not an evaluation report.

A production architecture recommendation. A documented recommendation for how to build the production system, including technology choices, scale considerations, integration architecture, and security design. This should be an honest assessment, including where the PoC revealed limitations that need to be addressed in production.

A production cost and timeline estimate. Based on what was learned during the PoC, what would it cost, and how long would it take to build and deploy a production-ready system? This estimate should be more accurate than a pre-PoC estimate because it is grounded in actual experience with your data and systems.

Risk and limitation documentation. What did not work as expected? What assumptions in the initial scope turned out to be incorrect? What are the known failure modes of the demonstrated system, and how will they be addressed in production?

If a vendor’s PoC proposal does not include all of these outputs, ask explicitly why each one is missing and what their alternative approach is. The absence of an evaluation report, a production recommendation, or a risk assessment is not a minor gap. It means the PoC is scoped to produce a demo, not evidence.
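For an agent PoC, the three headline numbers in the quality evaluation report can be computed from a labelled log of test scenarios. A minimal Python sketch, assuming each run has already been judged against the pre-defined task completion criteria; the `AgentRun` record and its field names are hypothetical:

```python
from dataclasses import dataclass


@dataclass
class AgentRun:
    completed: bool  # finished the task to the agreed completion criteria
    errored: bool    # at least one step failed (bad tool call, exception, wrong output)
    escalated: bool  # the task was handed to a human


def agent_metrics(runs: list[AgentRun]) -> dict[str, float]:
    """Share of test scenarios that completed, errored, or escalated."""
    if not runs:
        raise ValueError("need at least one run")
    n = len(runs)
    return {
        "task_completion_rate": sum(r.completed for r in runs) / n,
        "error_rate": sum(r.errored for r in runs) / n,
        "escalation_rate": sum(r.escalated for r in runs) / n,
    }
```

Three completed runs and one failed run that was escalated give a task completion rate of 0.75, an error rate of 0.25, and an escalation rate of 0.25. The hard part is not the arithmetic but agreeing, before the PoC starts, what counts as "completed".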

The PoC-to-Production Cost Journey

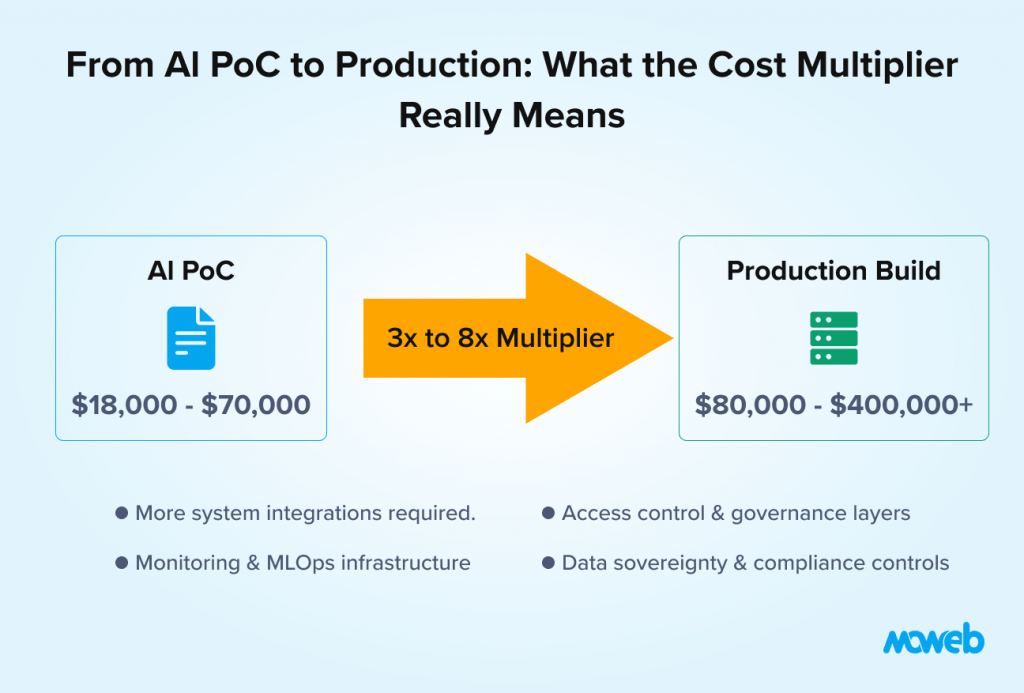

One of the most common surprises in enterprise AI investment planning is the gap between PoC cost and production cost. Understanding this relationship upfront prevents budget shocks later.

As a general rule, and consistent with findings from industry analysts including Gartner and BCG, production build cost runs 3 to 8 times the PoC cost for the same use case, depending on how many production requirements the PoC did not validate.

The multiplier is higher when:

- The PoC used a managed vector database, but production requires a self-hosted deployment with data sovereignty controls

- The PoC demonstrated the core AI capability, but production requires integrations with 8 enterprise systems rather than the 3 in the PoC

- The PoC operated without access controls, and production requires role-based data access across 5 user categories

- The PoC was not evaluated against SLA requirements, and production requires 99.9% uptime with sub-500ms response time

- The PoC did not include a monitoring and model operations layer that production needs for ongoing quality assurance

A responsible vendor will flag these cost drivers at the PoC stage, not after you have approved the production build. A production cost estimate significantly lower than 3x the PoC cost should prompt you to ask specifically what is out of scope.
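The 3x to 8x rule of thumb is simple enough to apply as a quick sanity check on a production quote. A minimal Python sketch; the multipliers are the ones stated in this guide, and the example figures are hypothetical:

```python
def check_production_quote(
    poc_cost: float,
    production_quote: float,
    low_multiplier: float = 3.0,
    high_multiplier: float = 8.0,
) -> str:
    """Compare a production quote against the 3x to 8x PoC-cost rule of thumb."""
    low, high = poc_cost * low_multiplier, poc_cost * high_multiplier
    if production_quote < low:
        return f"below {low:,.0f}: ask specifically what is out of scope"
    if production_quote > high:
        return f"above {high:,.0f}: check which production requirements drive it"
    return f"within the typical {low:,.0f} to {high:,.0f} range"
```

For a $40,000 PoC, the typical production range is $120,000 to $320,000, so a $90,000 production quote would be flagged for a scope conversation.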

For a broader view of total AI software development cost across the full project lifecycle, our guide on how much AI software development costs covers the full picture.

Frequently Asked Questions About AI PoC Costs

Why does an AI PoC cost more than a traditional software prototype? An AI PoC must be run on real representative data to produce meaningful evidence. Data preparation, ingestion pipelines, and embedding or training processes take time regardless of scale. Additionally, AI PoCs require a proper evaluation phase measuring output quality against defined metrics, which has no equivalent in traditional software prototyping. A prototype validates that software can be built. An AI PoC validates that the AI system produces accurate enough outputs on your data to be worth building into production.

Is it worth doing an AI PoC, or should we just build the production system? For most enterprise AI projects above a certain complexity level, a PoC is worth the investment. The cost of discovering fundamental data quality problems, integration complexity, or output quality limitations in a PoC is significantly lower than discovering them three months into a full production build. The exception is use cases where the scope is very well defined, the data is high quality and well understood, and a closely comparable deployment has already been done. In those cases, skipping the PoC and going straight to a production build with clear milestone-based acceptance criteria can be justified.

What is the difference between a PoC and an MVP for an AI project? A PoC (Proof of Concept) validates that the AI approach works technically on your data. An MVP (Minimum Viable Product) is a deployable product with real users that validates the business value and user experience. A PoC precedes an MVP in most enterprise AI projects. Some organisations compress or skip the PoC stage and go directly to an MVP, which is viable if data quality is well understood and the technical approach is low-risk. For novel AI use cases or complex data environments, a PoC before an MVP reduces the risk of building an MVP on a technically flawed foundation.

What is the difference between an AI PoC and an AI pilot? A PoC (Proof of Concept) is a controlled technical test that validates whether a specific AI approach works on your data, typically without real end users. A pilot is a limited live deployment with real users in a real workflow, after the PoC has confirmed technical feasibility. The PoC answers “Can this work?” The pilot answers “Does this deliver value in practice?” Most enterprise AI programmes follow a PoC → Pilot → Production sequence, though organisations with strong existing AI expertise sometimes combine the PoC and pilot phases.

Can we do an AI PoC with our internal team rather than a vendor? Yes, if your internal team has the relevant AI engineering skills and sufficient capacity. The cases where using an internal team for a PoC makes most sense are when the use case is well within your team’s existing expertise, when speed is not a priority, and when the primary goal is skill building alongside validation. The cases where using an experienced external vendor makes more sense are when the PoC needs to move quickly, when the AI capability is newer than your team’s current skills, or when you want an independent evaluation of the approach rather than one produced by the team that will build the production system.

What should we do if a vendor quotes significantly below the ranges in this article? Ask specifically what is different about their scope. Common reasons for significantly lower PoC quotes include: the PoC will use synthetic or pre-cleaned data rather than your actual data; the evaluation will be informal rather than metric-based; integrations will be simulated rather than real; the PoC will be delivered by more junior engineers than those described in the sales conversation; or the vendor is underquoting to win the engagement and plans to recover margin on the production build. None of these are automatically disqualifying, but they are all worth understanding explicitly before signing.

How do we know if a PoC result is good enough to justify a production build? Define the acceptance criteria before the PoC starts, not after it ends. For a RAG PoC, this might be “RAGAS faithfulness score above 0.85 and answer relevance above 0.80 on a test set of 50 representative queries.” For an ML PoC, it might be “precision above 0.90 and recall above 0.85 on the holdout test set.” For an agent PoC, it might be “task completion rate above 80% with zero data security incidents in adversarial testing.” If the PoC meets the pre-defined criteria, the decision to proceed is straightforward. If it does not, you have valuable information about what needs to change before production commitment.
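Writing the acceptance criteria down as data before the PoC starts makes the go/no-go check mechanical. The Python sketch below encodes the example RAG thresholds from this answer; the metric keys are labels chosen for illustration, and the scores are assumed to come from whatever evaluation run (for example, RAGAS) the PoC uses. It does not call RAGAS itself.

```python
import operator
from typing import Callable

Criterion = tuple[Callable[[float, float], bool], float]

# Agreed before the PoC starts; thresholds mirror the RAG example above.
ACCEPTANCE_CRITERIA: dict[str, Criterion] = {
    "faithfulness": (operator.gt, 0.85),
    "answer_relevance": (operator.gt, 0.80),
}


def evaluate_poc(
    results: dict[str, float],
    criteria: dict[str, Criterion] = ACCEPTANCE_CRITERIA,
) -> tuple[bool, list[str]]:
    """Return (passed, failures) for measured results against fixed criteria."""
    failures = []
    for metric, (compare, threshold) in criteria.items():
        if metric not in results:
            failures.append(f"{metric}: not measured")  # a missing metric counts as a fail
        elif not compare(results[metric], threshold):
            failures.append(f"{metric}: {results[metric]:.2f} does not meet {threshold}")
    return not failures, failures
```

An agent criterion such as "zero data security incidents" fits the same shape as `(operator.eq, 0)`.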

What ongoing costs should we budget for after the PoC? Beyond the production build cost (typically 3 to 8 times the PoC cost), ongoing operational costs include: LLM API usage fees (typically $500 to $5,000 per month depending on query volume), vector database hosting (typically $200 to $2,000 per month for managed services), monitoring and observability tooling ($100 to $500 per month), and engineering time for model maintenance, re-indexing, and prompt optimisation (typically 0.25 to 0.5 FTE per deployed system). These should be included in any business case built on PoC results.
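Adding those ranges up gives a first-pass annual run-rate for the business case. A minimal Python sketch using the monthly ranges quoted above; the fully loaded annual cost of one engineer (`fte_cost_per_year`) is an input you must supply, and the $150,000 used in the example is a hypothetical figure, not a benchmark.

```python
# Monthly (low, high) ranges in USD, as quoted in this answer.
MONTHLY_RANGES = {
    "llm_api_usage": (500, 5_000),
    "vector_db_hosting": (200, 2_000),
    "monitoring": (100, 500),
}
FTE_RANGE = (0.25, 0.5)  # engineering effort per deployed system


def annual_run_cost(fte_cost_per_year: float) -> tuple[float, float]:
    """Low and high annual operating cost for one deployed system."""
    monthly_low = sum(low for low, _ in MONTHLY_RANGES.values())
    monthly_high = sum(high for _, high in MONTHLY_RANGES.values())
    return (
        monthly_low * 12 + FTE_RANGE[0] * fte_cost_per_year,
        monthly_high * 12 + FTE_RANGE[1] * fte_cost_per_year,
    )
```

The tooling alone comes to $800 to $7,500 per month, or $9,600 to $90,000 per year; with the hypothetical $150,000 engineer cost, `annual_run_cost(150_000)` returns `(47100.0, 165000.0)`.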

Conclusion: A PoC Is Only Worth What It Proves

The question of AI PoC cost is really a question of what you want the PoC to prove. A PoC built on clean test data with informal evaluation proves that an AI system can be made to look good. A PoC built on real data with metric-based evaluation, genuine system integration, and an honest risk assessment proves that an AI system can actually work in your environment.

The first type costs less. The second type tells you something worth knowing.

When evaluating a PoC proposal, the cost is one signal. The scope, the data requirements, the evaluation methodology, and the deliverables are more important signals. A well-scoped PoC at the right price range, with defined quality thresholds and honest deliverables, is one of the best investments an enterprise can make before committing to a full AI deployment.

Moweb’s AI Strategy & Consulting team designs and delivers enterprise AI PoCs with metric-based evaluation, real data integration, and production architecture recommendations as standard outputs. If you are planning a PoC and want to talk through scope and realistic cost expectations, get in touch here.
