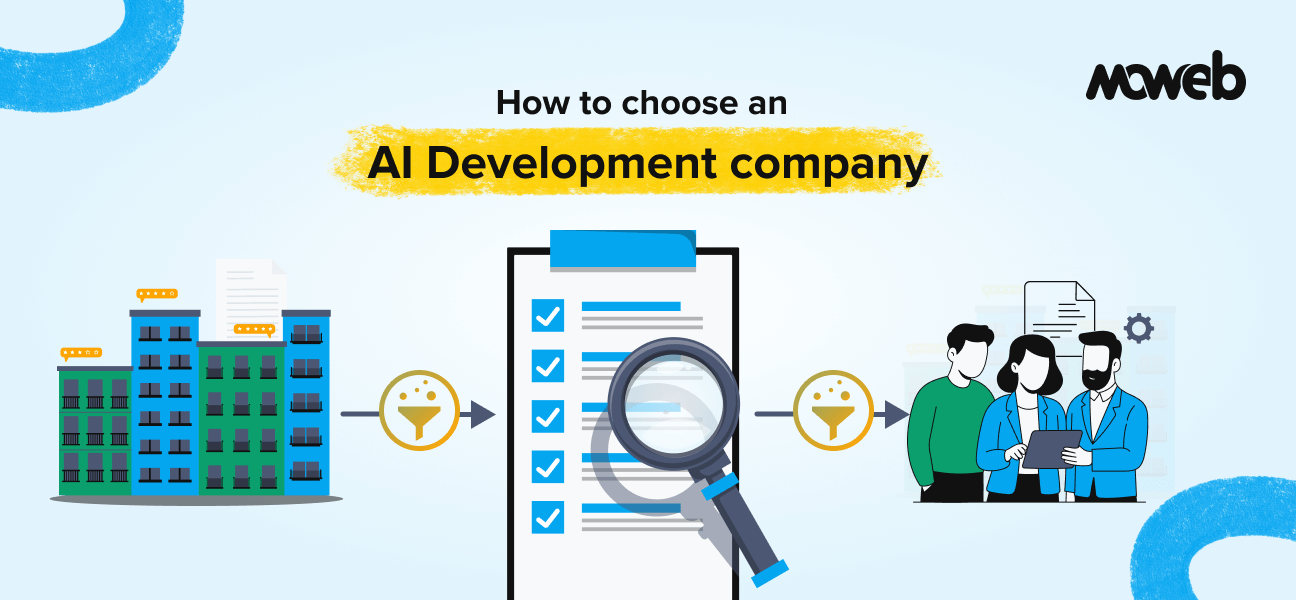

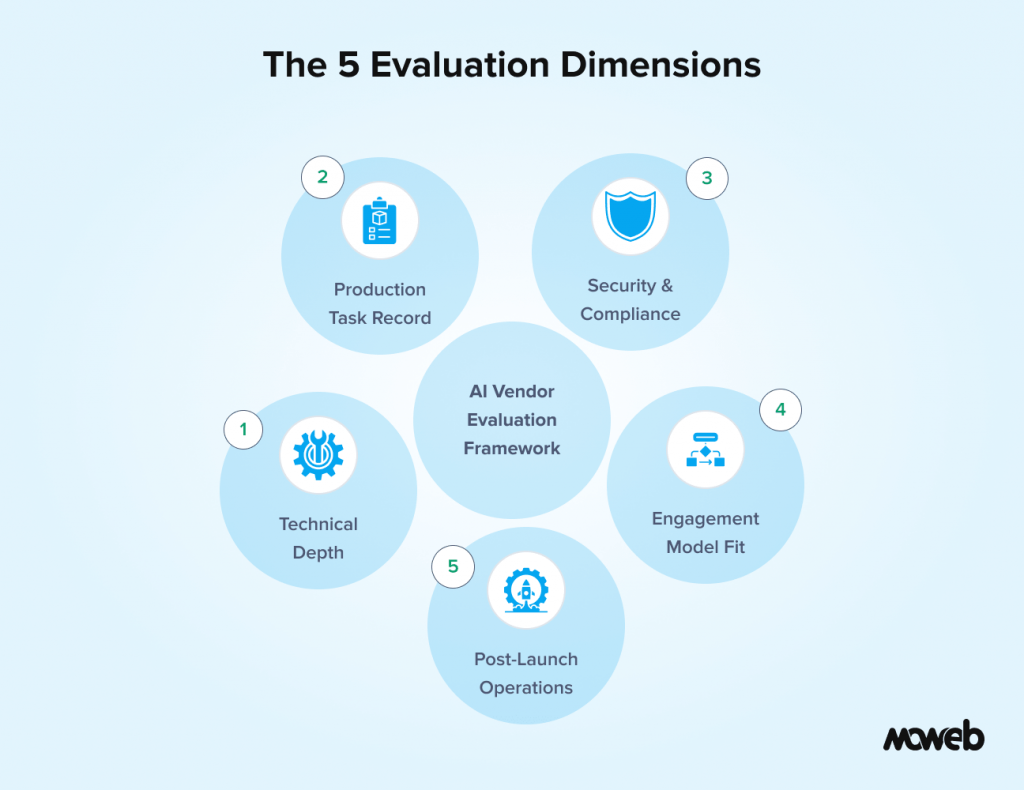

What should enterprises look for when choosing an AI development company? Enterprises should evaluate AI development companies across five dimensions: demonstrated technical depth in the specific AI capabilities required (not just general software experience), verifiable production delivery track record with measurable outcomes, security and compliance credentials relevant to the industry, a clear engagement model that fits the organisation’s internal capacity, and cultural alignment on communication style and long-term partnership expectations.

What are the biggest mistakes enterprises make when selecting an AI vendor? The most common mistakes are selecting a company based on a polished demo rather than evidence of production deployments, underestimating the importance of post-launch support and model monitoring, not verifying security certifications independently, failing to assess whether the vendor understands the specific regulatory context of the industry, and choosing based on lowest cost without accounting for the total cost of a failed or poorly executed project.

The AI development vendor market has never been more crowded or more confusing. Every software agency now claims AI expertise. Every proposal includes terms such as “machine learning,” “LLM,” and “generative AI.” Many of them mean something quite different by those terms than what your organisation actually needs.

For enterprise teams, the stakes of getting this decision wrong are high. A poorly executed AI project does not just waste budget. It erodes internal trust in AI as a capability, creates technical debt that is expensive to unwind, and in regulated industries can create genuine compliance exposure.

This guide gives you a practical framework for evaluating AI development companies specifically for enterprise engagements. It covers what to look for, what questions to ask, which red flags to take seriously, and how to structure the selection process to improve your chances of a successful outcome.

One important note before we start: this guide intentionally covers general enterprise AI engagements rather than AI agent development. If you are evaluating companies for an AI agent implementation, our guide on how to choose the best AI agent development company covers that narrower decision in detail.

Why This Decision Is Harder Than It Looks

Five years ago, choosing a software development partner was already complex. You evaluated portfolios, checked references, assessed team size and technical stack alignment, and made a judgment call on communication style and cultural fit.

AI development adds several layers of difficulty to that process.

First, the technical landscape moves faster than the vendor market can keep up with. A company that had genuine expertise in predictive machine learning two years ago may have minimal real experience with LLMs, RAG systems, or agentic AI today, even if their marketing says otherwise. The skills are related, but not the same, and the production deployment patterns are quite different.

Second, AI projects fail in ways that are harder to detect during evaluation. A traditional software project that is behind schedule shows obvious signs: missed milestones, incomplete features. An AI project can appear to work in demos while producing outputs that are unreliable, biased, or non-compliant in ways that only surface under real production conditions. By the time problems become visible, the vendor may be long gone.

Third, the post-launch requirements for AI systems are more demanding than for traditional software. Models drift. Retrieval quality degrades as data volumes grow. Security vulnerabilities in AI systems are more subtle and harder to audit. Choosing a vendor without solid post-launch capabilities locks you into either a fragile system or a painful transition.

Understanding these dynamics shapes what you should be looking for during evaluation.

The Five Evaluation Dimensions That Actually Matter

Most vendor evaluation frameworks for technology projects cover the basics: portfolio, team size, pricing, and communication. For enterprise AI projects, five dimensions go deeper.

1. Technical Depth in the Specific Capability You Need

“AI experience” is not a meaningful differentiator. What matters is demonstrated depth in the specific AI capability your project requires. A company that has built ten predictive analytics models is not necessarily equipped to build a production RAG system or a multi-agent automation pipeline.

When reviewing a vendor’s capabilities, look for:

- Evidence of production deployments (not just proofs of concept) in the specific AI capability area: RAG and LLM development, ML and MLOps, AI agents and automation, data engineering for AI, or AI security and governance

- Named tools and frameworks they work with, not just category labels. “We work with LLMs” is not informative. “We have deployed RAG systems using LlamaIndex, Qdrant, and Cohere Rerank in regulated financial services environments” is.

- Published technical content (blog posts, documentation, case studies) that demonstrates genuine understanding. A company whose technical team writes accurately about hybrid search, reranking, and access control architecture is showing you evidence that it knows what it is doing. One whose website offers only marketing language gives you no such evidence.

- Team composition. Who specifically would work on your project? Are these people with AI engineering backgrounds, or primarily web developers who have added “AI” to their service list?

2. Verifiable Production Track Record

Case studies are a starting point, not an endpoint. The question is whether you can verify them and whether the outcomes described are genuinely relevant to your project.

When reviewing case studies:

- Look for specific, measurable outcomes: “reduced document processing time by 60%,” “improved retrieval accuracy from 71% to 94% after introducing reranking,” or “deployed a RAG system handling 15,000 queries per day with 99.8% uptime.” Vague statements like “significantly improved efficiency” tell you nothing.

- Ask whether you can speak directly to a client in a comparable industry or with a comparable use case. A vendor who is proud of their work will facilitate this without hesitation. One who consistently deflects reference requests is giving you a signal worth taking seriously.

- Check third-party platforms. Clutch, GoodFirms, and G2 reviews are not perfect, but a pattern of consistent feedback across multiple independent reviewers is more reliable than testimonials selected by the vendor.

- Look for recency. AI capabilities that were impressive in 2022 may be baseline expectations now. Case studies that are more than two years old should be treated as historical context, not current capability evidence.

3. Security, Compliance, and Data Governance Credentials

For enterprise AI projects, security and compliance are not add-ons to evaluate at the end of the process. They should be evaluated as a baseline requirement that either qualifies or disqualifies a vendor before deeper evaluation begins.

Depending on your industry, the minimum credible certifications to expect from a vendor handling your data as part of an AI engagement include:

- ISO 27001 covers information security management and is the international standard most enterprise procurement teams treat as a baseline requirement.

- CMMI Level 3 or equivalent process maturity certification signals that the vendor’s engineering and delivery processes meet an audited standard, not just a self-reported claim.

- SOC 2 Type II (particularly relevant for US-based or US-market enterprise clients) is an independent auditor’s attestation that the vendor’s controls operated effectively over a review period against the Trust Services Criteria in scope, typically security, availability, and confidentiality.

- Industry-specific certifications and compliance alignment (HIPAA for healthcare, FCA compliance awareness for UK financial services, GDPR-aware data handling for EU-scope projects) should be explicitly discussed, not assumed.

Beyond certifications, ask specifically how the vendor handles your data during the engagement. Where are models trained? Where are embeddings stored? Who has access to production data? How is data deleted at the end of the engagement? A vendor who answers these questions clearly and specifically, without needing to go away and check, is likely operating with appropriate data governance discipline.

4. Engagement Model Compatibility

The right vendor for your organisation is not just the one with the best technical capability. It is the one whose engagement model fits your internal capacity, governance structure, and risk tolerance.

Enterprise AI projects can be structured in several ways, each with different implications:

Fixed-scope project delivery works well when requirements are clear, the use case is well-defined, and your internal team has sufficient AI knowledge to specify and accept deliverables. It provides cost certainty but can become adversarial if scope changes emerge mid-project, which is common in AI work where production behaviour is difficult to fully specify upfront.

Time-and-materials (T&M) engagement provides flexibility to iterate as the project evolves, which is often more realistic for AI work. The risk is cost uncertainty if not carefully managed with milestone-based checkpoints and regular scope reviews.

The embedded team model places the vendor’s engineers within your organisation’s development process, working alongside your own team. This works well when you want to build internal capability over time and can accommodate the coordination overhead.

The outcome-based or retainer model ties vendor engagement to defined operational outcomes or ongoing system health. It is less common, but increasingly available from mature AI vendors who are confident in their own delivery quality.

Understanding your own organisation’s procurement preferences, budget structure, and internal oversight capacity should drive which engagement model you prioritise, and you should be cautious of vendors who push hard for a model that does not fit your situation.

5. Post-Launch Support and Model Operations

An AI system is not a static piece of software that you can deploy and forget. Models drift as data distributions change. RAG retrieval quality can degrade as document corpora grow and become inconsistent. Security vulnerabilities are discovered and need patching. Usage patterns evolve and reveal edge cases that were not tested during development.

The question of what happens after go-live is one of the most important and most overlooked in enterprise AI vendor selection. Ask specifically:

- What does ongoing monitoring look like? Who is responsible for detecting and responding to output quality degradation?

- What is the SLA for production incidents? What constitutes a P1 issue, and what is the response time commitment?

- How are model updates and re-indexing handled? Who initiates them, and what is the approval process?

- What happens at the end of the engagement? Is there a clear knowledge transfer process? Is the system documented well enough for your internal team to operate and modify?

A vendor who has not thought carefully about these questions has probably not operated AI systems in enterprise production environments for very long.
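To make the monitoring question concrete, here is a minimal sketch of one common drift check, the population stability index (PSI), which compares the distribution of a numeric signal at launch with its distribution today. The signal (for example, retrieval similarity scores), the function names, and the 0.10 / 0.25 thresholds are illustrative assumptions: the thresholds are a widely used rule of thumb, not a standard, and a real vendor's monitoring stack may look quite different.

```python
import math
from collections import Counter


def population_stability_index(baseline, current, bins=10):
    """PSI between a baseline sample and a current sample of one numeric signal."""
    lo, hi = min(baseline), max(baseline)
    # Equal-width buckets over the baseline range; fall back to width 1.0
    # if every baseline value is identical.
    width = (hi - lo) / bins or 1.0

    def bucket_shares(values):
        # Values outside the baseline range are clamped into the edge buckets.
        counts = Counter(
            min(max(int((v - lo) / width), 0), bins - 1) for v in values
        )
        # Floor each share at a small epsilon so the logarithm is always defined.
        return [max(counts[b] / len(values), 1e-6) for b in range(bins)]

    base, cur = bucket_shares(baseline), bucket_shares(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))


def drift_status(psi, warn=0.10, alert=0.25):
    """Map a PSI value to a status label (thresholds are assumed rules of thumb)."""
    if psi >= alert:
        return "alert"
    if psi >= warn:
        return "warn"
    return "stable"
```

A useful follow-up when a vendor describes their monitoring: ask which signals they track this way, how often the comparison runs, and who is paged when the status changes.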

Red Flags to Take Seriously During Evaluation

Experienced enterprise technology buyers develop an instinct for vendor red flags over time. In AI vendor evaluation specifically, these patterns consistently signal risk:

Demo performance that does not translate to production guarantees. If a vendor is willing to demo an impressive prototype but hedges significantly when you ask about production accuracy, latency commitments, and monitoring, the demo is probably more polished than their delivery would be.

Inability to discuss technical trade-offs honestly. Confident, experienced AI engineers will tell you what their recommended approach cannot do as readily as what it can. A vendor who only tells you what their solution does well and cannot articulate the trade-offs or failure modes is either inexperienced or not being straight with you.

Vagueness about who actually does the work. Some vendors present senior architects in sales conversations but deliver projects using junior engineers or offshore subcontractors who were never introduced. Ask explicitly: who will be on the delivery team, what are their individual backgrounds, and can you meet them before signing?

No governance or security framework conversation. In 2026, any serious AI vendor should proactively raise data governance, access control, and AI security as part of their discovery process. If you get to a proposal stage without those topics being raised, raise them yourself and pay close attention to how comfortable the response is.

References that are only reachable through the vendor. A vendor who insists on pre-screening all reference conversations, or who can only provide references via email rather than direct calls, is managing what you can find out. Independent references on Clutch or direct referrals from your professional network are more reliable.

Proposals that promise too much, too fast. Realistic AI project scoping is hard. A proposal that promises a production-ready, fully integrated enterprise AI system in six weeks at a surprisingly low price point has either scoped something much simpler than what you described, or is underestimating the work in a way that will surface as cost overruns and quality issues later.

The Evaluation Process: A Practical Timeline

Here is a structured approach to the vendor selection process that enterprise teams can adapt to their own procurement requirements:

Weeks 1 to 2: Define and document your requirements. Before engaging vendors, create a clear requirements document covering the AI capability needed, the data environment (types, volumes, access controls, sensitivities), the expected output quality standards, the compliance requirements, the internal technical resources available for collaboration, and the timeline and budget envelope. Vendors cannot give you meaningful proposals without this information, and proposals built without it will not be comparable to each other.

Weeks 3 to 4: Research and longlist. Identify 6 to 10 potential vendors through a combination of direct referrals from your professional network, third-party platforms like Clutch and GoodFirms, LinkedIn research, and inbound approaches. Filter to a longlist of 4 to 6 based on public portfolio relevance, certifications, and geography/timezone compatibility.

Weeks 5 to 6: Issue an RFP or structured brief. Send the same requirements document to all longlist vendors. Ask for a technical approach overview, relevant case studies with outcomes, the proposed team composition, the engagement model, the pricing structure, and security/compliance credentials. Standardising the brief makes responses comparable and saves time.

Weeks 7 to 8: Evaluate proposals and shortlist. Score proposals against the five dimensions covered in this guide. Shortlist 2 to 3 vendors for deeper evaluation. At this stage, request reference conversations with clients from comparable industries and reach out directly rather than through the vendor, where possible.

Weeks 9 to 10: Technical deep-dive and team interview. For shortlisted vendors, conduct a structured technical interview with the engineers who will actually deliver the project (not just the sales team). Present a real scenario from your use case and ask them to walk through their technical approach, including what they would not do and why. Evaluate how they handle uncertainty and disagreement.

Week 11: Commercial and legal negotiation. Before final selection, review the contract carefully for IP ownership clauses, data handling and deletion terms, liability limitations, and exit provisions. Ensure the SLA commitments for post-launch support match your operational requirements.

Week 12: Decision and onboarding. Select your vendor and begin a structured onboarding process that includes a joint discovery phase before any development starts.
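The scoring step in weeks 7 to 8 can be sketched as a small weighted matrix. This is a minimal illustration, not a prescribed method: the weights, the 1-to-5 scale, the gate value, and all names are hypothetical and should be replaced with your own priorities. It treats security and compliance as a gate first, in line with the guidance above that it should qualify or disqualify a vendor before deeper evaluation.

```python
from dataclasses import dataclass

# Hypothetical weights for the five dimensions; they must sum to 1.0.
WEIGHTS = {
    "technical_depth": 0.25,
    "track_record": 0.25,
    "security_compliance": 0.20,
    "engagement_model": 0.10,
    "post_launch_support": 0.20,
}

# Assumed gate: a vendor scoring below this on security is disqualified.
SECURITY_GATE = 3


@dataclass
class Proposal:
    vendor: str
    scores: dict  # dimension name -> score on a 1-to-5 scale


def weighted_score(proposal):
    """Return the weighted score, or None if the security gate is not met."""
    if proposal.scores["security_compliance"] < SECURITY_GATE:
        return None
    total = sum(WEIGHTS[dim] * proposal.scores[dim] for dim in WEIGHTS)
    return round(total, 2)


def shortlist(proposals, size=3):
    """Rank qualified vendors by weighted score, highest first."""
    scored = [(weighted_score(p), p.vendor) for p in proposals]
    qualified = [(score, vendor) for score, vendor in scored if score is not None]
    qualified.sort(key=lambda pair: pair[0], reverse=True)
    return [vendor for _, vendor in qualified[:size]]
```

Agreeing the weights before proposals arrive, and having each evaluator score independently, keeps the comparison from being bent toward a favourite after the fact.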

Questions to Ask Every Vendor You Evaluate Seriously

These questions separate vendors who have genuine enterprise AI delivery experience from those who do not:

- “Walk me through a RAG or LLM project you delivered to production. What was your chunking strategy and why? What retrieval issues did you encounter and how did you resolve them?”

- “How do you implement access control in AI systems where different user roles should retrieve different data?”

- “What evaluation metrics do you use to verify RAG system quality before and after deployment? Can you show us a sample evaluation report?”

- “What has gone wrong in a past AI project, and how did you handle it?”

- “Who specifically from your team would be working on our project day-to-day, and can we see their profiles and speak with them before signing?”

- “What does your data handling process look like during development? Where is client data processed and stored?”

- “How do you handle model drift and output quality monitoring after go-live?”

The answers to these questions tell you far more than a portfolio review or a demo.
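When you ask for a sample evaluation report, it helps to know what a basic one contains. The sketch below computes two standard retrieval metrics, recall@k and mean reciprocal rank (MRR), over a labelled test set. The data layout and function names are assumptions for illustration; a vendor's real report would normally also cover answer quality, not just retrieval.

```python
def recall_at_k(retrieved, relevant, k):
    """Fraction of the relevant documents that appear in the top k results."""
    if not relevant:
        return 0.0
    hits = len(set(retrieved[:k]) & set(relevant))
    return hits / len(relevant)


def reciprocal_rank(retrieved, relevant):
    """1 / rank of the first relevant result, or 0.0 if none was retrieved."""
    for rank, doc_id in enumerate(retrieved, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0


def evaluate(run, k=5):
    """Average both metrics over a test set.

    `run` is a list of (retrieved_ids, relevant_ids) pairs, one per test
    query, where retrieved_ids is ordered best-first.
    """
    n = len(run)
    return {
        f"recall@{k}": sum(recall_at_k(r, rel, k) for r, rel in run) / n,
        "mrr": sum(reciprocal_rank(r, rel) for r, rel in run) / n,
    }
```

A vendor with production experience should be able to say how the test queries and relevance labels were built, and to show the same metrics before and after a change such as introducing reranking.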

Frequently Asked Questions About Choosing an AI Development Company

How is choosing an AI development company different from choosing a traditional software development vendor? AI projects require additional evaluation of the specific AI capability area (LLMs, ML, agents, RAG), post-launch model operations capability, and data governance practices that are not typical requirements for traditional software vendors. AI systems also fail in less visible ways than traditional software, making production track record and evaluation methodology more important than demo performance.

How much should an enterprise AI project cost? Project cost varies enormously depending on scope, data complexity, integration requirements, and the vendor’s location and seniority mix. A focused proof-of-concept can range from $15,000 to $60,000. A production-grade enterprise AI system with data engineering, retrieval pipeline, LLM integration, access control, and monitoring typically ranges from $80,000 to $400,000 or more for a first deployment. Ongoing maintenance and model operations add to this. Cost should not be the primary selection criterion: a poorly executed AI project at half the price costs significantly more in rework, delay, and organisational trust than a well-executed one at full price.

Should we work with a large consulting firm or a specialist AI development company? Both have trade-offs. Large consulting firms bring governance frameworks, industry knowledge, and enterprise-grade account management, but often deliver AI work through less experienced subteams at a significant margin. Specialist AI development companies tend to have deeper hands-on engineering expertise and faster execution, but may have less depth in change management, enterprise architecture integration, and industry-specific regulatory knowledge. Many successful enterprise AI projects combine both: a specialist company for core AI engineering and a consulting partner for organisational change management.

What certifications should an AI development company have? At a minimum for enterprise work: ISO 27001 for information security management; CMMI Level 3 or equivalent for process maturity; SOC 2 Type II for US-market clients; and industry-specific compliance awareness (HIPAA, FCA, GDPR) relevant to your regulatory context. These should be independently verifiable, not just claimed on a website.

How long should an enterprise AI project take? A proof-of-concept on a single well-defined use case: 4 to 8 weeks. A production-ready first deployment with proper data engineering, retrieval pipeline, access control, and monitoring: 12 to 20 weeks. Full-scale enterprise deployment with integrations across multiple systems, change management, and governance framework: 6 to 18 months as an ongoing programme. Be cautious of vendors who significantly undercut these ranges without a compelling explanation of what they have scoped differently.

What should be in the contract for an enterprise AI engagement? The contract should explicitly cover: IP ownership of all code, models, and data produced during the engagement; data handling, storage, and deletion obligations; accuracy and performance warranties or SLA commitments for post-launch operations; exit provisions including knowledge transfer requirements; liability limitations and indemnification for IP infringement or data breaches; and governance of any use of your data for model training or benchmark testing. These terms are not standard in all AI vendor contracts and should be explicitly negotiated.

How do we avoid vendor lock-in in an AI project? Specify from the start that all code must be delivered in a portable form that your team can maintain and modify. Require documentation of all architecture decisions and model configuration. Avoid vendor-proprietary tools where open-source alternatives exist. Ensure your team has access to all infrastructure accounts and service subscriptions from day one, not just at project end. And build internal capability through embedded team models or knowledge transfer sessions so your organisation is not operationally dependent on the vendor for ongoing support.

Conclusion: The Right Vendor Is the One You Can Trust with Your Data and Your Reputation

Choosing an AI development company for an enterprise project is ultimately a trust decision. You are entrusting a vendor with sensitive data, significant budget, and the credibility of your organisation’s AI initiatives.

The evaluation framework in this guide is designed to help you make that trust decision based on evidence rather than persuasion. Technical depth verified through specific questions, not portfolio slides. Production track record verified through independent references, not selected testimonials. Security credentials verified through documentation, not website claims.

At Moweb, we hold ISO 27001:2022 and CMMI Level 3 certifications, have delivered 900+ projects across enterprises and SMEs globally, and operate dedicated practices across the full AI stack: from AI Strategy & Consulting and Generative AI & LLM development through to AI Security & Governance and AI Agents & Intelligent Automation.

We are happy to answer every question in this guide about our own work. Start the conversation here.
