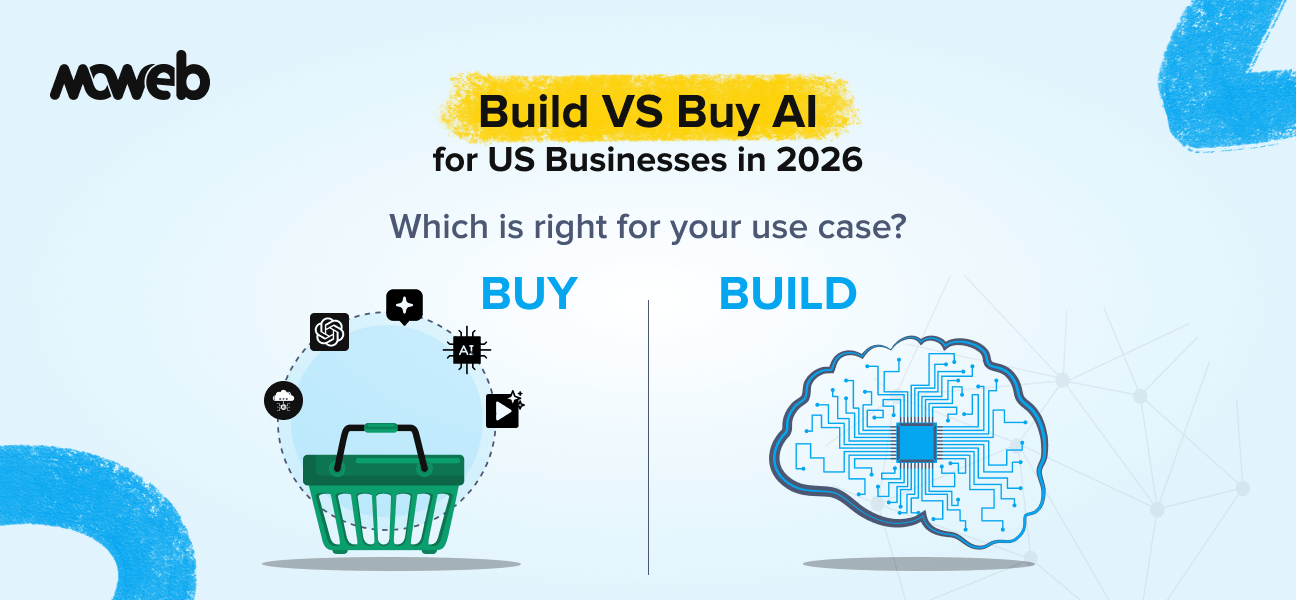

Should US businesses build or buy AI in 2026? The build vs buy decision for AI in US businesses in 2026 is not binary – most successful enterprise AI deployments combine both. The practical decision framework is: buy AI capabilities that are generic, commodity, or available as best-in-class off-the-shelf products (copilots, standard document processing, commodity analytics); build AI systems where the use case is specific to your operations, your data gives you a competitive advantage, your compliance requirements cannot be met by off-the-shelf products, or you need to own and control the full system for governance, security, or sovereignty reasons.

What is the total cost difference between building and buying AI? Buying AI (SaaS tools, API-based LLM access, pre-built copilots) typically has a lower upfront cost but meaningful ongoing subscription and API costs that scale with usage. Building custom AI (RAG systems, ML models, AI agents built on your data) has a higher upfront build cost ($80,000 to $180,000 for a first production system with a qualified implementation partner, and up to $400,000 for larger mid-market deployments) but lower ongoing per-query costs at scale and full control over the system’s capabilities and data handling. For US businesses handling sensitive data, operating in regulated industries, or with use cases that generic tools cannot address, the total cost of ownership over three years often favours building custom systems despite the higher initial investment.

The build vs buy AI decision for US businesses in 2026 is one of the most consequential choices a technology leader can make – and one of the most misunderstood. Your data is the moat. A bought AI product runs on the same model as your competitor’s. A built one runs on yours. That single distinction drives most of the strategic logic in what follows.

AI is different from conventional enterprise software. Not because buying is wrong – there are AI tools worth buying and using broadly – but because the nature of competitive advantage in AI is fundamentally tied to data: your data, your processes, your domain knowledge. Your CRM built on Salesforce and a competitor’s CRM built on Salesforce may look different at the interface level, but they are essentially the same capability. A demand forecasting model built on your ten years of supply chain data, tuned to your specific product categories and supplier patterns, is a genuinely different capability from a generic demand forecasting tool. The data is the moat.

This guide gives US business leaders a practical enterprise AI decision framework for making the build vs buy decision intelligently – not as an ideological position but as a context-specific analysis of where each approach creates more value. It also covers a third path that most competitors in this space are starting to adopt: buying the foundation and building the edge.

The Landscape of AI Products Available to Buy in 2026

Before evaluating the build vs buy decision, it is worth mapping the current landscape of AI products available to US businesses, because the range and quality of buyable options have changed dramatically in the past two years.

AI-embedded SaaS tools are the dominant category by user count. Microsoft Copilot, Salesforce Einstein, HubSpot AI, Notion AI, Slack AI, and hundreds of similar products embed AI capabilities directly into software your teams already use. These products require no separate AI infrastructure, deploy at the speed of a SaaS onboarding, and are billed through your existing vendor relationships. Their limitation is that they operate only on the data within their respective platforms and are optimised for generic use cases rather than your specific operational context.

Standalone AI productivity tools such as ChatGPT Teams, Claude for Business, and Gemini for Workspace are general-purpose AI assistants your teams can use for drafting, research, summarization, and analysis. They offer high immediate productivity value but low specificity to your operations, and they have no connection to your internal data systems unless you build that connection separately.

AI APIs for developers (OpenAI API, Anthropic API, Google Gemini API, AWS Bedrock) provide access to foundational large language models (LLMs): AI models trained on vast text corpora that can understand and generate language, and that your engineering team can build applications on top of. This is the buy option that sits closest to building: you are buying the model capability, but building the application, integration, and data layer on top of it. Most enterprise AI system development in 2026 uses this approach – buying the underlying model while building the system architecture around it.

Vertical AI SaaS is the fastest-growing category: AI products purpose-built for specific industries (AI for legal review, AI for medical coding, AI for financial compliance, AI for HR screening). These products have the advantage of domain-specific training and pre-built compliance controls. Their limitation is that they serve the generic version of their industry use case, not your organisation’s specific version of it.

Understanding where each of these categories fits your use cases is the first step in the build vs buy analysis.

The Four Questions That Drive the Build vs Buy Decision

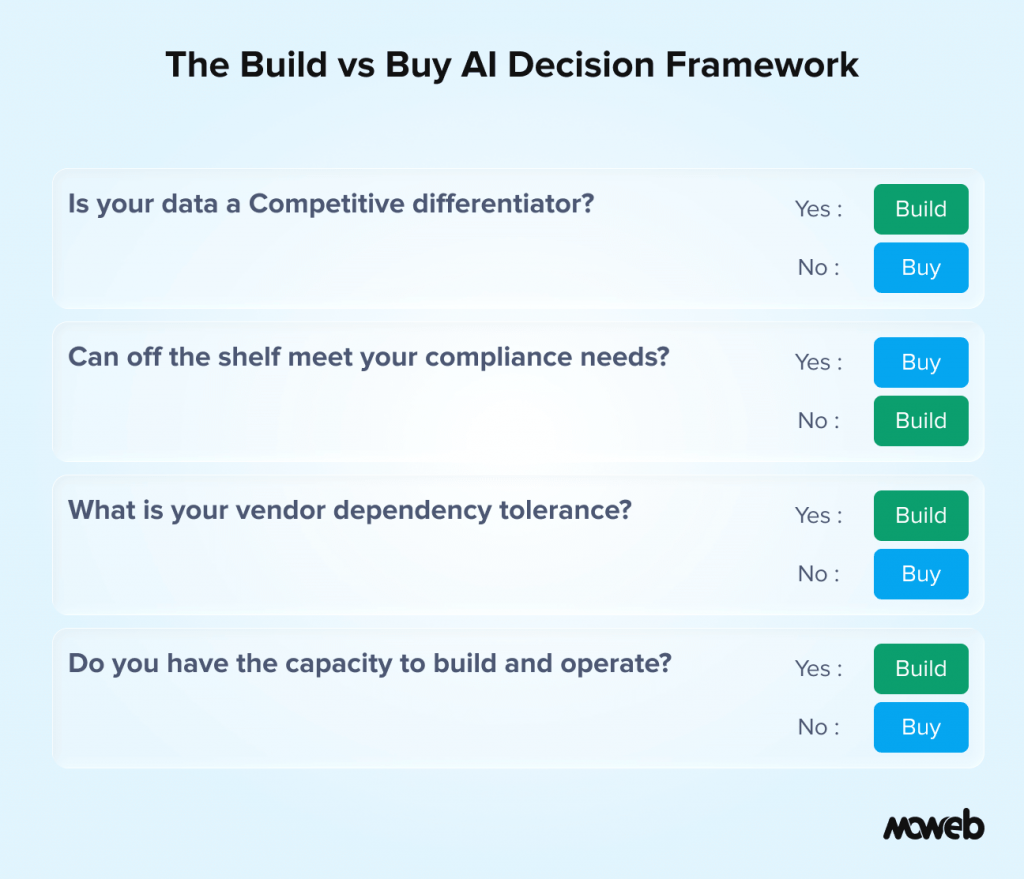

Rather than prescribing a universal answer, this framework gives US business leaders four questions to apply to each specific AI use case they are evaluating.

Question 1: Is Your Data a Competitive Differentiator for This Use Case?

If the AI system’s value comes primarily from understanding and reasoning over data that is specific to your organisation – your historical transactions, your customer relationships, your proprietary documents, your operational patterns – then building (or customising) is almost always the right choice.

The generic AI product does not have your data, cannot be trained on your data in a way you control, and cannot produce outputs that reflect the specific nuances of your business context. A demand forecast built on your supply chain data will outperform a generic demand forecasting tool for your specific products, suppliers, and markets. A customer support AI built on your specific policy documents and product knowledge will outperform a generic support chatbot for your specific customer queries.

Conversely, if the AI system’s value is independent of your specific data – you just need a good English-language grammar checker, a standard code completion tool, or a meeting summary generator – then a bought product will serve you well without the cost and complexity of building.

Question 2: Can an Off-the-Shelf Product Meet Your Compliance Requirements?

US businesses in regulated industries (financial services, healthcare, insurance, pharmaceuticals, government contracting) face compliance requirements that generic AI products frequently cannot meet out of the box; the most common are listed below. For a comprehensive look at what a full AI governance framework covers, see our guide to AI governance for LLMs and enterprise agents.

- Data residency requirements may prohibit sending certain data to offshore processing infrastructure

- Regulatory audit trail requirements may demand logging capabilities that off-the-shelf tools do not provide

- Industry-specific security certifications (HIPAA, FedRAMP, SOC 2 Type II) may be required before a tool can handle regulated data

- Model explainability requirements may mandate the ability to explain specific AI-influenced decisions in terms that regulators and affected parties can understand

When a generic AI product cannot meet these requirements without significant customisation, the total cost of customising it often exceeds the cost of building a purpose-designed system with the compliance controls built in from the start.
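
The four requirement types above can be checked against a vendor's stated offering before any customisation is costed. The following is a minimal sketch, not a compliance tool: the `Requirements` and `VendorOffer` schemas, their field names, and the example values are all hypothetical, and a real assessment would rest on the vendor's contracts and audit reports.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Requirements:
    """What the use case demands (hypothetical schema)."""
    certifications: frozenset
    allowed_regions: frozenset  # empty set means no residency restriction
    needs_audit_log: bool
    needs_explanations: bool


@dataclass(frozen=True)
class VendorOffer:
    """What the off-the-shelf product states it provides (hypothetical schema)."""
    certifications: frozenset
    processing_regions: frozenset
    has_audit_log: bool
    has_explanations: bool


def compliance_gaps(required: Requirements, offer: VendorOffer) -> list:
    """Return a readable list of requirements the product does not meet."""
    gaps = []
    for cert in sorted(required.certifications - offer.certifications):
        gaps.append(f"missing certification: {cert}")
    # Data residency: every region the vendor processes in must be allowed.
    if required.allowed_regions:
        for region in sorted(offer.processing_regions - required.allowed_regions):
            gaps.append(f"processes data in disallowed region: {region}")
    if required.needs_audit_log and not offer.has_audit_log:
        gaps.append("no audit trail logging")
    if required.needs_explanations and not offer.has_explanations:
        gaps.append("no decision-level explainability")
    return gaps


# Illustrative values only.
required = Requirements(
    certifications=frozenset({"HIPAA", "SOC 2 Type II"}),
    allowed_regions=frozenset({"US"}),
    needs_audit_log=True,
    needs_explanations=True,
)
offer = VendorOffer(
    certifications=frozenset({"SOC 2 Type II"}),
    processing_regions=frozenset({"US", "EU"}),
    has_audit_log=True,
    has_explanations=False,
)
gaps = compliance_gaps(required, offer)
```

An empty list suggests the product is worth a closer look; each entry in a non-empty list is a customisation cost to estimate before comparing against a purpose-built system.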

Question 3: What Is Your Vendor Dependency Tolerance?

Buying AI products creates vendor dependencies that have specific risk profiles in 2026:

Model change risk. LLM providers update their models regularly. An application built on GPT-5.5 may produce different outputs after an undisclosed model update, introducing quality variability that is outside your control. For use cases where output consistency is important, this is a material risk.

Pricing risk. API pricing for AI products changed significantly between 2023 and 2026. For applications that process high volumes of queries, pricing changes can materially affect the unit economics. Building on owned infrastructure gives you predictable costs at scale.

Data handling risk. When you send your data to an AI API, you are operating under the provider’s terms of service for data handling. Most enterprise API tiers exclude your data from model training, but you are still trusting a third party with data that may be sensitive, proprietary, or regulated. For certain data classifications, this is not acceptable regardless of the contractual protections offered.

Model drift risk. AI model drift refers to gradual degradation in output quality over time as the real-world data distribution shifts away from what the model was trained on. With bought products, you have limited ability to detect or correct drift. With a built system, you own the monitoring and can retrain or recalibrate.

Capability risk. An AI product that meets your needs today may not prioritise the features you need as you grow. Building custom means your roadmap is your own.

The counterargument is that managing your own AI infrastructure has its own risks: the cost of maintaining ML systems, the need for ongoing engineering support, and the challenge of staying current with a rapidly evolving technology landscape. Neither dependency is cost-free.
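
Both model change risk and model drift risk come down to noticing when output quality moves. A minimal sketch of that check follows, assuming you already score a sample of answers between 0 and 1 (by human review or against a fixed set of reference questions). The function name, the 0.05 threshold, and the sample scores are illustrative assumptions, not a recommended standard.

```python
from statistics import mean


def quality_alert(baseline_scores, recent_scores, max_drop=0.05):
    """Return True when mean quality has fallen by more than max_drop.

    Scores are per-answer quality ratings in [0, 1] from whatever
    evaluation process is already in place.
    """
    if not baseline_scores or not recent_scores:
        raise ValueError("both score lists must be non-empty")
    return mean(baseline_scores) - mean(recent_scores) > max_drop


# Illustrative scores: the same reference questions, before and after
# a provider-side model update.
before_update = [0.90, 0.92, 0.88, 0.90]
after_update = [0.80, 0.82, 0.85, 0.81]
stable_week = [0.89, 0.90, 0.91, 0.88]

needs_review = quality_alert(before_update, after_update)
still_fine = quality_alert(before_update, stable_week)
```

Running the same reference questions on a schedule turns an undisclosed model update from a surprise into an alert, whether the model is bought or built.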

Question 4: What Is Your Internal Capability to Build and Operate?

Build options require engineering capability to build, operate, and maintain. US businesses without a capable internal engineering team or without a reliable AI implementation partner who can provide post-deployment support are buying a liability, not an asset, when they choose to build.

The honest assessment of internal capability should cover: who will build the system (internal team or implementation partner), who will operate it after deployment (internal team, the implementation partner on retainer, or a managed service), who will monitor quality and handle model drift, and who will make architectural decisions as the system evolves.

Build makes sense when you have answered these questions with specific names and clear plans. It does not make sense when the answer to most of them is “we will figure that out later.”
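
The four questions can be recorded per use case so that the answers are explicit and comparable across a portfolio. The sketch below is one illustrative way to do that. The scoring rule (two or more reasons to build means build, one means hybrid, and Question 4 acts as a gate) is an assumption made for the example, not a rule stated in this framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    data_is_differentiator: bool          # Question 1
    off_the_shelf_meets_compliance: bool  # Question 2
    tolerates_vendor_dependency: bool     # Question 3
    can_build_and_operate: bool           # Question 4


def recommend(use_case: UseCase) -> str:
    """Map the four answers to a starting recommendation (illustrative rule)."""
    reasons_to_build = sum([
        use_case.data_is_differentiator,
        not use_case.off_the_shelf_meets_compliance,
        not use_case.tolerates_vendor_dependency,
    ])
    if reasons_to_build == 0:
        return "buy"
    if not use_case.can_build_and_operate:
        # Question 4 is a gate: without named owners, building is a liability.
        return "build blocked: no capability to build and operate"
    return "build" if reasons_to_build >= 2 else "hybrid"


# Illustrative answers only.
summariser = UseCase("meeting summariser", False, True, True, False)
forecast = UseCase("demand forecast", True, True, False, True)
support = UseCase("support assistant", True, True, True, True)

results = {uc.name: recommend(uc) for uc in (summariser, forecast, support)}
```

The value is less in the rule itself than in forcing each use case to carry four explicit answers that someone can challenge.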

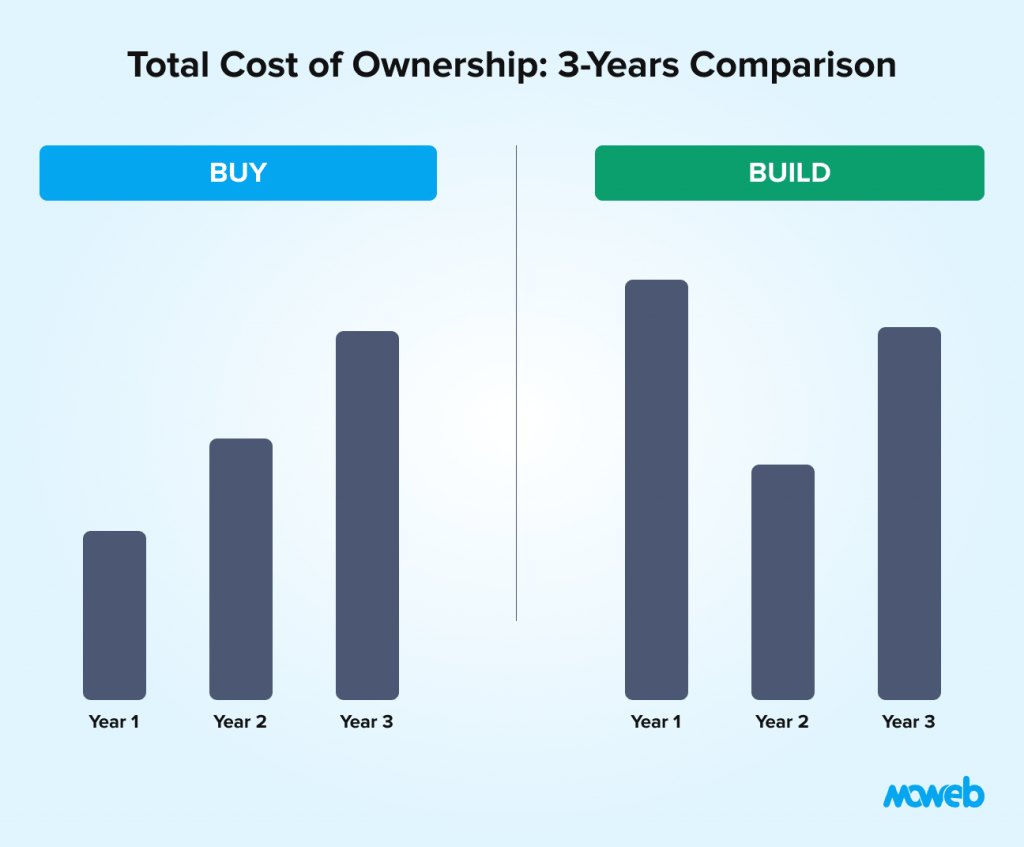

The Real Cost Comparison: Buy vs Build at US Mid-Market Scale

Cost comparisons between buy and build are frequently oversimplified. Here is a more honest accounting across a three-year horizon for a typical US mid-market AI use case – a customer-facing knowledge assistant handling 5,000 queries per day. According to S&P Global research, 42% of companies that launched AI initiatives in 2025 failed to complete them, largely due to an underestimated total cost of ownership. Getting the numbers right before you start matters:

Buy Option: Microsoft Copilot for Customer Service or similar enterprise AI SaaS

Year 1 costs:

- SaaS licensing: approximately $30 to $60 per user per month for 50 relevant staff = $18,000 to $36,000 annually

- Implementation and configuration: $15,000 to $40,000 (vendor professional services or internal time)

- Training and change management: $5,000 to $15,000

Year 1 total: approximately $38,000 to $91,000

Years 2-3: SaaS licensing continues at the same or increasing rate. Customisation work is limited by product capabilities.

Three-year total estimate: $80,000 to $200,000

Limitations: Content is restricted to what the vendor’s product can access and index. Compliance controls are limited to what the vendor provides. Output quality reflects the vendor’s product roadmap decisions. Data is handled on vendor infrastructure.

Build Option: Custom RAG-based knowledge assistant on your data

Year 1 costs:

- Initial build (data preparation, RAG pipeline, access controls, monitoring, testing): $80,000 to $180,000 with a qualified AI implementation partner (up to $400,000 for larger mid-market deployments)

- LLM API costs at 5,000 queries/day, average 2,000 tokens per query: approximately $2,000 to $5,000 per month depending on model tier (frontier models sit at the high end; efficient models like GPT-4o mini cost substantially less) = $24,000 to $60,000 annually

- Vector database hosting: $500 to $2,000 per month = $6,000 to $24,000 annually

- Monitoring and maintenance: $10,000 to $25,000 annually

Year 1 total: approximately $120,000 to $289,000
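
The LLM API line item is the one most sensitive to model choice, so it helps to see what token volume it implies. A short worked calculation follows, assuming a flat 30-day month and a single blended price across input and output tokens (both simplifications; real provider pricing usually separates the two).

```python
QUERIES_PER_DAY = 5_000
TOKENS_PER_QUERY = 2_000
DAYS_PER_MONTH = 30  # assumption: flat 30-day month


def monthly_tokens(queries_per_day=QUERIES_PER_DAY,
                   tokens_per_query=TOKENS_PER_QUERY,
                   days=DAYS_PER_MONTH):
    """Total tokens processed per month at the stated volume."""
    return queries_per_day * tokens_per_query * days


def implied_price_per_million(monthly_cost_usd, tokens):
    """Blended USD price per million tokens implied by a monthly bill."""
    return monthly_cost_usd / (tokens / 1_000_000)


tokens = monthly_tokens()
low = implied_price_per_million(2_000, tokens)
high = implied_price_per_million(5_000, tokens)

print(f"{tokens:,} tokens/month")
# 300,000,000 tokens/month
print(f"implied blended price: ${low:.2f} to ${high:.2f} per million tokens")
# implied blended price: $6.67 to $16.67 per million tokens
```

Comparing that implied range against a provider's current price list is a quick way to see which model tier the estimate corresponds to.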

Years 2-3: Ongoing API, hosting, and maintenance costs without the initial build expense. If operated by internal staff, factor 0.5–1 FTE of AI engineering time. If operated via implementation partner retainer, add $30,000–$80,000 per year for managed operations.

Three-year total estimate: $175,000 to $430,000

The three-year cost comparison is closer than it first appears – and the build option gives you significantly more capability, control, and compliance assurance for use cases where those factors matter. For use cases where they do not matter, buy wins on simplicity and speed.
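
The comparison can be re-derived from the line items listed for each option. The sketch below assumes run costs stay flat in years 2 and 3 and leaves out the optional FTE or retainer cost; the function name and the flat-cost assumption are illustrative. A flat sum will not reproduce the rounded three-year estimates quoted above exactly, since those reflect assumptions about how rates change after year 1.

```python
def three_year_total(year_one, annual_run, years=3):
    """Year-one cost plus a flat annual run cost for each remaining year."""
    return year_one + annual_run * (years - 1)


# (low, high) ranges in USD, taken from the line items above.
buy_year_one = (38_000, 91_000)
buy_run = (18_000, 36_000)  # licensing only
build_year_one = (120_000, 289_000)
build_run = (
    24_000 + 6_000 + 10_000,   # API + vector database + maintenance, low end
    60_000 + 24_000 + 25_000,  # same items, high end
)

buy_total = tuple(
    three_year_total(y, r) for y, r in zip(buy_year_one, buy_run)
)
build_total = tuple(
    three_year_total(y, r) for y, r in zip(build_year_one, build_run)
)

print(f"buy:   ${buy_total[0]:,} to ${buy_total[1]:,}")
print(f"build: ${build_total[0]:,} to ${build_total[1]:,}")
```

Swapping in your own seat count, query volume, and operating model is more useful than relying on any published range.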

The Third Path: Buying the Foundation, Building the Edge

In 2026, the dominant enterprise AI reality is neither pure buy nor pure build. According to MIT research, companies that adopted a hybrid buy-and-build approach to AI achieved successful deployment rates nearly twice those of organisations that committed to pure build strategies. The pattern is: buy the foundation, build the differentiation layer.

This hybrid model looks like this in practice: a US mid-market manufacturer buys GPT-4o or Claude via API (the foundation model – bought) and builds a custom inventory forecasting agent on top of it that ingests their specific ERP data, applies their supplier lead time rules, and integrates with their procurement workflow (the differentiation layer – built). They are not training a model from scratch. They are not locked into a vendor’s product roadmap. They own the system that creates competitive value while paying for the commodity intelligence layer.

What to buy in a hybrid model: the foundational LLM capability (via API), the hosting infrastructure (AWS, Azure, GCP), the observability tooling, and commodity workflow components. What to build: the data pipeline connecting your proprietary data sources to the model, the business logic layer that encodes your specific rules and processes, the compliance and audit controls specific to your regulatory environment, and the user experience layer tuned to your workflows.
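
The split between bought and built layers can be sketched in a few lines. In the example below the bought layer is any function that turns a prompt into text, and the built layer holds the business rule and the audit trail. Everything here is hypothetical: `stub_model` stands in for a real provider SDK call so the sketch runs offline, and the class, the lead-time rule, and the field names are invented for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, Dict, List

# Bought layer: anything that maps a prompt to text.
ModelFn = Callable[[str], str]


def stub_model(prompt: str) -> str:
    """Offline stand-in for a provider API call (hypothetical)."""
    return f"[model reply to {len(prompt)} chars of prompt]"


@dataclass
class ForecastAgent:
    """Built layer: business rules and audit trail (hypothetical design)."""
    model: ModelFn
    supplier_lead_days: Dict[str, int]
    audit_log: List[dict] = field(default_factory=list)

    def reorder_advice(self, sku: str, supplier: str,
                       stock: int, daily_demand: int) -> str:
        # Company-owned rule: cover demand across the supplier's lead time.
        lead = self.supplier_lead_days[supplier]
        shortfall = max(0, daily_demand * lead - stock)
        prompt = (
            f"SKU {sku}: stock {stock}, daily demand {daily_demand}, "
            f"lead time {lead} days, shortfall {shortfall}. "
            "Draft a reorder note."
        )
        reply = self.model(prompt)
        # Company-owned audit trail: every model call is recorded.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "sku": sku,
            "shortfall": shortfall,
            "prompt": prompt,
            "reply": reply,
        })
        return reply


agent = ForecastAgent(model=stub_model, supplier_lead_days={"acme": 14})
note = agent.reorder_advice("A-100", "acme", stock=200, daily_demand=30)
```

Because the agent depends only on the `ModelFn` signature, changing providers means passing a different function, while the rules and the audit log stay in your hands.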

The hybrid path is now how most successful mid-market AI deployments in 2026 are structured. If you are planning an AI initiative and the conversation is still framed as a binary choice, that framing is already behind where the market has moved.

When Buy Is Clearly Right

There are scenarios where buying is the clear answer and building would be a waste of resources:

Generic productivity use cases. If you need a meeting summariser, a writing assistant, or a code completion tool, bought products like Microsoft Copilot, ChatGPT Teams, or GitHub Copilot deliver high value immediately at low cost. Building custom alternatives for these use cases is rarely justified.

Best-in-class vertical tools. If a best-in-class AI product exists for your specific industry use case – a leading legal review AI, a medical coding AI with deep regulatory validation, a financial compliance monitoring AI – and it meets your compliance requirements, buying it is usually faster and cheaper than building a comparable system.

Fast-moving capabilities. In areas where AI capabilities are evolving so rapidly that any system you build today will be obsolete in 12 months (certain generative content applications, rapidly evolving computer vision capabilities), buying gives you access to a vendor’s continuous investment without the overhead of maintaining your own system through rapid capability changes.

Limited engineering capacity. If your organisation does not have and cannot acquire the engineering capability to build and maintain a production AI system, buying a well-supported product is the responsible choice.

When Build Is Clearly Right

Conversely, there are scenarios where building custom is the clear answer:

Proprietary data advantage. If your operational data is your competitive moat – your unique transaction history, your proprietary customer relationships, your domain-specific documents – and the AI use case depends on that data, building is almost always the right answer.

Regulated data that cannot leave your environment. For US healthcare organisations handling PHI, financial services companies with customer data under specific state regulations (CCPA, NYDFS Cybersecurity Regulation), or government contractors with FedRAMP requirements, the data handling constraints may make sending data to a third-party AI product contractually or legally unacceptable. See the NIST AI Risk Management Framework for the federal standard on AI governance controls.

Complex multi-system integration. If the AI system needs to connect to five or six internal systems, execute multi-step workflows, and take actions in your enterprise systems, off-the-shelf products will not provide the integration depth required. You need a custom-built system using APIs and integration patterns specific to your technology stack.

Long-term strategic capability. If AI is a core part of your product or operational competitive strategy – not just a productivity tool but a genuine capability differentiator – owning the system architecture, the data pipeline, and the model infrastructure gives you the control to iterate, improve, and evolve in ways that vendor products cannot match.

Frequently Asked Questions About Build vs Buy AI for US Businesses

Is it cheaper to build or buy AI for a US mid-market company? It depends on the use case, the time horizon, and what “cheaper” includes. Over a three-year horizon for a customer-facing knowledge assistant handling 5,000 queries per day, the total costs of a SaaS solution and a custom-built system are closer than most people expect – roughly $80,000 to $200,000 for buy versus $175,000 to $430,000 for build. The build option costs more but delivers significantly more control, compliance, and capability for use cases where those factors matter. For generic productivity use cases, buying is almost always cheaper and faster. Time-to-value is also a meaningful factor: bought products typically deliver value in weeks versus 3–6 months for a production build.

Can we start with a bought product and build later? Yes, and this is a common and sensible approach. Many US mid-market companies use bought AI productivity tools (Copilot, ChatGPT Teams) for immediate employee productivity gains while building custom AI systems for the operational use cases where proprietary data and deep integration matter. The two approaches coexist and serve different purposes. The challenge is ensuring that the decision to build is made proactively based on strategic analysis, not reactively because the bought product failed to meet requirements.

What happens to our data when we use a bought AI product? For consumer-facing products like the free tier of ChatGPT, your data may be used for model training. For enterprise API tiers and business subscriptions from major providers (OpenAI, Anthropic, Google, Microsoft), data is typically explicitly excluded from training and processed only for your query. You should verify the data handling terms for any AI product handling regulated, sensitive, or commercially confidential data. For the highest-sensitivity data classifications, the only fully safe option is a self-hosted or fully managed private deployment.

Does choosing a bought AI product create lock-in? Yes, and the nature of the lock-in varies by product type. SaaS AI tools create dependency on the vendor’s continued existence, pricing decisions, and product roadmap. API-based products create dependency on the provider’s model updates and pricing. The counterargument is that building custom also creates lock-in to the architecture decisions, the implementation partner, and the infrastructure choices made at build time. No approach is lock-in-free; the question is which dependencies are more manageable given your specific strategic context.

How should US businesses evaluate AI vendors for a buy decision? Evaluate on five dimensions: data handling and security (where is your data processed, stored, and retained?), compliance certifications relevant to your industry (SOC 2 Type II, HIPAA, FedRAMP), contractual data rights (who owns data derived from your inputs?), model stability and change management (how are model updates communicated and managed?), and exit provisions (can you export your data and configurations if you switch vendors?). A vendor who cannot answer all five questions clearly should not handle your sensitive business data.
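
The five dimensions work well as a simple pass/fail checklist during procurement. A minimal sketch follows, assuming vendor answers are collected as free text; the dimension keys, the function names, and the rule that any non-empty answer counts as "clear" are simplifications made for the example.

```python
DIMENSIONS = (
    "data_handling_and_security",
    "compliance_certifications",
    "contractual_data_rights",
    "model_change_management",
    "exit_provisions",
)


def unanswered_dimensions(vendor_answers: dict) -> list:
    """Return the dimensions with no answer or a blank answer."""
    return [
        d for d in DIMENSIONS
        if not str(vendor_answers.get(d) or "").strip()
    ]


def may_handle_sensitive_data(vendor_answers: dict) -> bool:
    """A vendor passes only when all five dimensions are answered."""
    return not unanswered_dimensions(vendor_answers)


# Illustrative answers from a hypothetical vendor questionnaire.
answers = {
    "data_handling_and_security": "Processed and stored in US regions only",
    "compliance_certifications": "SOC 2 Type II report available under NDA",
    "contractual_data_rights": "Customer owns inputs and derived outputs",
    "exit_provisions": "   ",
}
missing = unanswered_dimensions(answers)
```

In practice a reviewer would judge the quality of each answer, not just its presence, but even this crude gate catches vendors who skip a dimension entirely.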

When should a US business work with an implementation partner for a build decision? When your internal engineering team does not have production AI deployment experience. Building a demo or a PoC is within the reach of most engineering teams. Building a production AI system with proper access controls, audit trails, monitoring, and governance is a different discipline. An implementation partner with a genuine production track record in your industry and use case type reduces delivery risk significantly. Look for a partner who will transfer knowledge to your team rather than create a long-term dependency. Moweb operates this way: our engagements include architecture documentation, operational handover, and team capability building so your organisation can operate independently.

Conclusion: Build Where Data Is Your Advantage, Buy Where It Is Not

The build vs buy decision in enterprise AI is not a philosophical position. It is a use-case-specific analysis of where your data creates competitive advantage, where your compliance requirements demand control, and where your engineering capacity can support ownership.

The US businesses that are getting this decision right in 2026 are not those that have adopted a blanket policy (always build, always buy). They are those applying the four-question framework to each use case: Is our data a differentiator? Can a bought product meet our compliance requirements? What is our vendor dependency tolerance? Do we have the capability to build and operate? For the use cases where building custom is the right answer, Moweb’s Generative AI & LLM development and AI Agents & Intelligent Automation practices deliver production systems with the security, compliance, and operational quality that US mid-market enterprises need. Our New Jersey office means we operate in your time zones. Talk to us about your specific use case.
