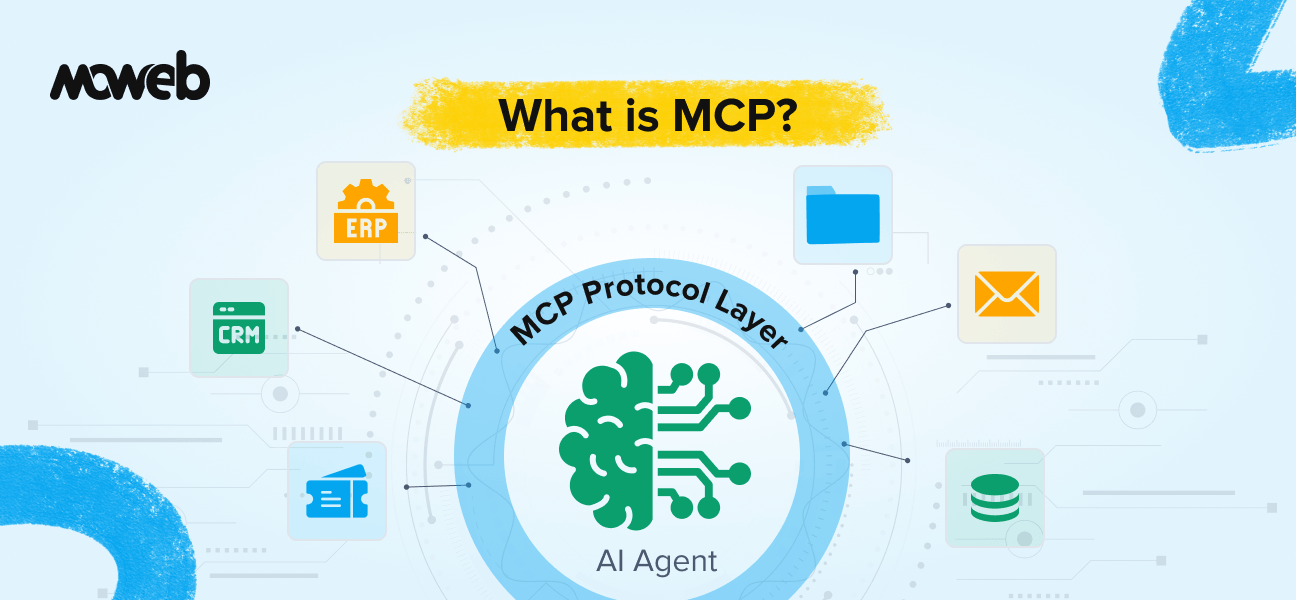

What is MCP (Model Context Protocol)? Model Context Protocol (MCP) is an open standard introduced by Anthropic in late 2024 that defines a universal way for AI models and agents to connect to external tools, data sources, and services. Instead of building a custom integration for every tool an AI agent needs to use, developers implement MCP once and the agent can connect to any MCP-compatible tool or data source without additional integration work.

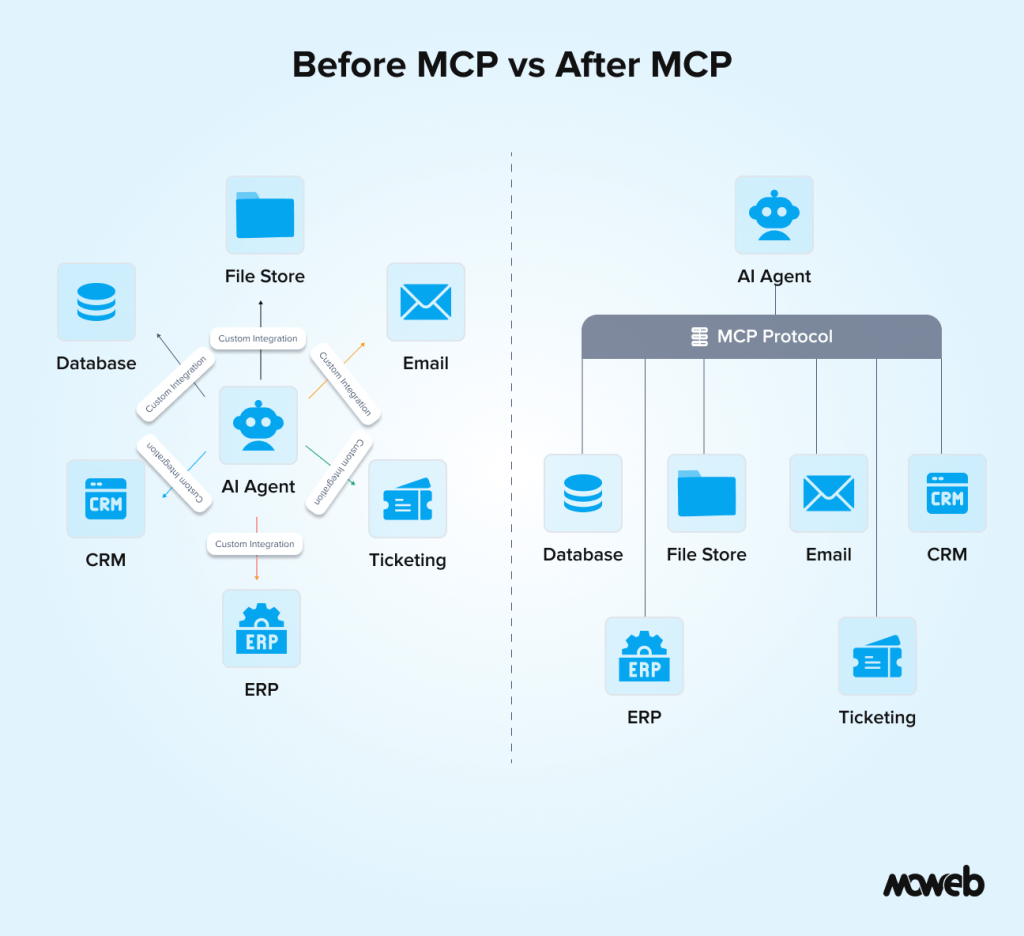

Why does MCP matter for enterprise AI agents? Before MCP, connecting an AI agent to enterprise systems like CRMs, databases, ticketing systems, or internal APIs required a separate bespoke integration for each system. This made agentic AI expensive to build and difficult to maintain. MCP standardises this connection layer, making it significantly faster and more reliable to build enterprise AI agents that can genuinely act across an organisation’s full tool ecosystem rather than a handful of manually integrated services.

For the past two years, the enterprise AI conversation has been dominated by a single question: how do we get AI to actually do things, not just answer questions?

Chatbots that answer from documents are useful. But the real value organisations are chasing is AI that can complete tasks: pull a report from the CRM, update a ticket in Jira, query a database, trigger a workflow, write and send a summary, check inventory, and flag an anomaly, all within a single conversation or automated pipeline.

This is what AI agents promise. And for a long time, the gap between the promise and the production reality was enormous, partly because the technical infrastructure for connecting an AI model to enterprise tools was fragmented, bespoke, and brittle.

Model Context Protocol (MCP) is the standardisation layer that changes this. It is one of the most significant infrastructure developments in enterprise AI over the past twelve months, and it is still not well understood outside of engineering teams building agentic systems. This guide explains what it is, how it works, why it matters specifically for enterprise deployments, and what you need to know if your organisation is building or evaluating AI agent systems.

The Problem MCP Was Built to Solve

To understand why MCP matters, you need to understand what building an enterprise AI agent looked like before it existed.

An AI agent needs access to tools and data to be useful. A sales agent needs to query the CRM, check availability in the inventory system, and send emails. A finance agent needs to pull data from multiple reporting systems, query a document repository, and write outputs to a shared drive. A support agent needs to read ticket histories, query a knowledge base, update records, and trigger escalation workflows.

Each of these connections required a custom integration. A developer would write a function that wrapped the CRM’s API, another function that wrapped the inventory system, another for email, and so on. These functions would be described to the LLM as “tools” in a format specific to whichever LLM provider was being used. If you switched LLM providers, you had to rewrite the tool descriptions. If a third-party service updated its API, you had to update your integration. If you wanted to add a new tool, you started from scratch.

The result was that building an AI agent with access to even five or six enterprise systems was a significant engineering project. Maintaining that agent over time, as systems changed and LLM providers updated their APIs, was an ongoing operational burden. And the integrations were rarely reusable: work done for one agent could not easily be transferred to another.

This is the fragmentation problem MCP was designed to solve.

What MCP Is: A Clear Explanation

Model Context Protocol is an open standard that defines a universal communication layer between AI models and external tools or data sources. Think of it as the USB standard for AI agent integrations.

Before USB, every peripheral device used a different connector. Printers had one port type, keyboards had another, and mice had another. Every device required a specific cable and driver. USB created a single universal interface: one connector type, one protocol, and any device that implemented it would work with any computer that implemented it.

MCP does the same thing for AI agents. An enterprise system (a CRM, a database, a file store, a ticketing system, an API) that is exposed through an MCP server becomes accessible to any AI agent that implements an MCP client, without bespoke integration code for each pairing. The protocol defines exactly how the AI model requests context from the tool, how the tool responds, and how results are passed back into the model’s reasoning process. Another way to picture it is a staffing agency for enterprise tool access: you define the role once (the MCP server), and any qualified agent (any MCP client) can fill it without retraining.
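To make that request/response flow concrete, here is a minimal sketch of the JSON-RPC messages an MCP client sends when it connects to a server and calls one tool. It follows the lifecycle described in the MCP specification: `initialize`, then the `notifications/initialized` notification, then `tools/list` and `tools/call`. The client name, the `search_contacts` tool and its arguments are hypothetical. The protocol version string is also an assumption, because a real client negotiates the version with the server during `initialize`.

```python
import json

PROTOCOL_VERSION = "2025-03-26"  # assumed spec revision; negotiated during initialize

def request(msg_id, method, params=None):
    """Build a JSON-RPC 2.0 request (the server replies with the same id)."""
    msg = {"jsonrpc": "2.0", "id": msg_id, "method": method}
    if params is not None:
        msg["params"] = params
    return msg

def notification(method, params=None):
    """Build a JSON-RPC 2.0 notification (no id, so no reply is expected)."""
    msg = {"jsonrpc": "2.0", "method": method}
    if params is not None:
        msg["params"] = params
    return msg

def connect_and_call(tool_name, arguments):
    """Return, in order, the messages a client sends to call one tool."""
    return [
        # 1. Handshake: agree on protocol version and capabilities.
        request(1, "initialize", {
            "protocolVersion": PROTOCOL_VERSION,
            "capabilities": {},
            "clientInfo": {"name": "example-agent", "version": "0.1.0"},  # hypothetical
        }),
        # 2. Tell the server the client is ready for normal operation.
        notification("notifications/initialized"),
        # 3. Discover which tools the server exposes.
        request(2, "tools/list"),
        # 4. Invoke one tool with the arguments the model chose.
        request(3, "tools/call", {"name": tool_name, "arguments": arguments}),
    ]

if __name__ == "__main__":
    for msg in connect_and_call("search_contacts", {"company": "Acme"}):
        print(json.dumps(msg))
```

Notice that the model never talks to the CRM directly. It only picks a tool name and arguments, and the client turns that choice into a protocol message.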

MCP was introduced by Anthropic in November 2024. As of April 2026, it has been adopted across the major AI development ecosystem. OpenAI added MCP support in April 2025, Microsoft integrated it into Copilot Studio in July 2025 and into Azure AI Agent Service, AWS Bedrock added support in November 2025, and all major providers were on board by March 2026. The ecosystem has grown to over 5,800 MCP servers covering developer tools, business applications, web and search, and AI automation, with 97 million monthly SDK downloads as of March 2026. The specification is open-source and available for any organisation to implement.

The Three Core Components of MCP

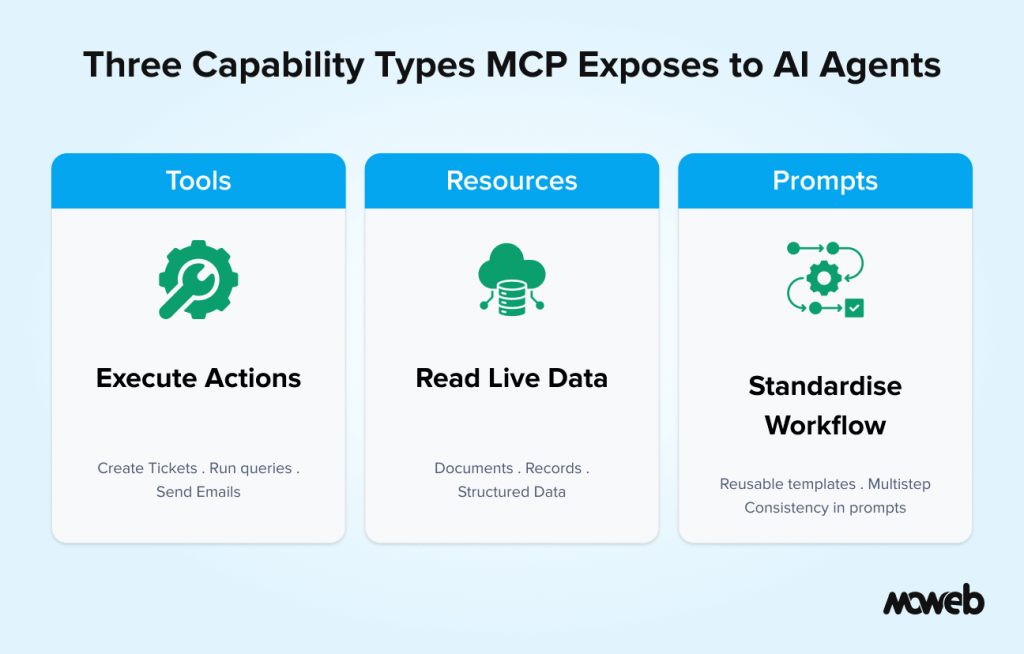

MCP defines three types of capabilities that a server can expose to an AI model. Understanding these helps clarify what MCP actually enables versus what it does not.

Tools are executable functions that the AI agent can call to take an action or retrieve real-time information. A tool might be “search the CRM for contacts matching these criteria,” “create a new support ticket,” “send an email,” or “run this SQL query against the reporting database.” Tools are the action layer of MCP. When an AI agent calls a tool, it is executing a real operation in an external system.
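As a rough illustration of the tool side, here is a stdlib-only sketch of how a server might answer `tools/list` and `tools/call`. The result shapes follow the MCP specification: a JSON Schema `inputSchema`, a `content` list of text items, and an `isError` flag. The `search_contacts` tool and its in-memory data are hypothetical stand-ins for a real CRM query. In practice a team would normally build on an official MCP SDK rather than hand-rolling the dispatch.

```python
import json

# Hypothetical in-memory stand-in for a CRM.
CONTACTS = [
    {"name": "Dana Lee", "company": "Acme"},
    {"name": "Raj Patel", "company": "Globex"},
]

# Tool definitions advertised to clients via tools/list.
TOOLS = [{
    "name": "search_contacts",
    "description": "Search CRM contacts by company name.",
    "inputSchema": {
        "type": "object",
        "properties": {"company": {"type": "string"}},
        "required": ["company"],
    },
}]

def search_contacts(company):
    return [c for c in CONTACTS if c["company"].lower() == company.lower()]

def _ok(msg, result):
    return {"jsonrpc": "2.0", "id": msg["id"], "result": result}

def _err(msg, code, message):
    return {"jsonrpc": "2.0", "id": msg["id"], "error": {"code": code, "message": message}}

def handle(msg):
    """Dispatch one JSON-RPC request to the matching tools/* handler."""
    method, params = msg["method"], msg.get("params", {})
    if method == "tools/list":
        return _ok(msg, {"tools": TOOLS})
    if method == "tools/call":
        if params.get("name") != "search_contacts":
            # -32602 "Invalid params": the request named a tool we do not expose.
            return _err(msg, -32602, f"Unknown tool: {params.get('name')}")
        matches = search_contacts(params["arguments"]["company"])
        # Tool results come back as content items the model can read.
        return _ok(msg, {"content": [{"type": "text", "text": json.dumps(matches)}],
                         "isError": False})
    # -32601 is the JSON-RPC 2.0 "Method not found" error code.
    return _err(msg, -32601, f"Method not found: {method}")
```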

Resources are data that the AI model can read to inform its responses. Unlike tools (which execute operations), resources are static or semi-static content exposed to the model as context. A resource might be a specific document, a configuration file, a record from a database, or a structured data object. Resources are the equivalent of giving the model access to a piece of context it could not have generated itself.
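The next sketch, again stdlib-only, shows the result shapes for `resources/list` and `resources/read` as described in the MCP specification. Listing returns metadata only, and reading returns the content addressed by a URI. The `docs://` URI scheme and the policy document are hypothetical.

```python
# Hypothetical read-only documents, keyed by URI.
RESOURCES = {
    "docs://policies/travel": {
        "name": "Travel policy",
        "mimeType": "text/markdown",
        "text": "# Travel policy\nEconomy class for flights under 6 hours.",
    },
}

def list_resources():
    """Shape of a resources/list result: metadata only, no content."""
    return {"resources": [
        {"uri": uri, "name": r["name"], "mimeType": r["mimeType"]}
        for uri, r in RESOURCES.items()
    ]}

def read_resource(uri):
    """Shape of a resources/read result for one URI."""
    r = RESOURCES[uri]  # KeyError here; a real server would return a JSON-RPC error
    return {"contents": [{"uri": uri, "mimeType": r["mimeType"], "text": r["text"]}]}
```

Keeping listing and reading separate lets a client show what context is available without pulling every document into the model’s context window.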

Prompts are reusable prompt templates that a server exposes so that users, typically through the client application, can invoke them to standardise common interactions. They are less fundamental than tools and resources for enterprise use cases, but they provide useful consistency for complex multi-step workflows.
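A minimal sketch of what a `prompts/get` handler might return: the server fills in a named template with the caller’s arguments and hands back ready-to-send messages. The message shape (a `role` plus a text `content` item) follows the MCP specification as we understand it. The `account_briefing` prompt and its wording are hypothetical.

```python
from string import Template

# Hypothetical prompt template exposed by a server.
PROMPTS = {
    "account_briefing": {
        "description": "Draft a briefing for an account manager.",
        "arguments": [{"name": "account", "required": True}],
        "template": Template("Summarise open opportunities and renewal risks for $account."),
    },
}

def get_prompt(name, arguments):
    """Shape of a prompts/get result: filled-in messages ready for the model."""
    p = PROMPTS[name]
    # Reject calls that omit a required template argument.
    missing = [a["name"] for a in p["arguments"]
               if a.get("required") and a["name"] not in arguments]
    if missing:
        raise ValueError(f"missing required arguments: {missing}")
    text = p["template"].substitute(arguments)
    return {
        "description": p["description"],
        "messages": [{"role": "user", "content": {"type": "text", "text": text}}],
    }
```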

In practice, most enterprise AI agent implementations rely primarily on tools and resources. The tools give the agent the ability to act; the resources give it the context to act intelligently.

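Under the hood, MCP messages use JSON-RPC 2.0 and travel over one of two standard transports. The first is stdio, for a server running as a local process alongside the agent. The second is HTTP, for remote, cloud-deployed servers. The original HTTP transport was built on Server-Sent Events (SSE). The March 2025 revision of the specification replaced it with Streamable HTTP, which can still use SSE to stream responses. This transport flexibility is what makes MCP viable across both local development environments and production enterprise deployments.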
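On the stdio transport, each JSON-RPC message is written as a single line of JSON to the server’s stdin or read from its stdout. Here is a stdlib-only sketch of that framing, with `io.StringIO` standing in for a real subprocess pipe. The HTTP transport is not shown.

```python
import io
import json

def write_message(stream, msg):
    """Write one JSON-RPC message as a single newline-terminated line."""
    # json.dumps without indent emits no raw newlines: any newline inside a
    # string value is escaped (backslash-n), so one message is one line.
    stream.write(json.dumps(msg, separators=(",", ":")) + "\n")

def read_messages(stream):
    """Yield JSON-RPC messages from a newline-delimited stream."""
    for line in stream:
        line = line.strip()
        if line:  # skip blank lines
            yield json.loads(line)

if __name__ == "__main__":
    pipe = io.StringIO()
    write_message(pipe, {"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
    pipe.seek(0)
    print(list(read_messages(pipe)))
```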

MCP vs. Traditional Function Calling: What Is Different

If you are familiar with how LLMs use function calling or tool use, you might be wondering how MCP is different from what was already possible. It is a fair question, and the distinction matters.

Traditional function calling (the tool-use APIs offered by OpenAI, Anthropic, Google, and others) lets developers define a set of functions the model can call during a conversation. The model decides when to call a function, passes the appropriate parameters, receives the result, and incorporates it into its response. This works, and it is powerful. But it has three limitations that matter at enterprise scale.

First, the tool definitions are tightly coupled to the specific LLM provider’s format. OpenAI’s function calling schema is different from Anthropic’s tool use schema, which is different from Google’s. Building agents that can run on multiple LLM providers requires maintaining separate tool definitions for each.

Second, the integrations are not shareable across agents or teams. A function that connects to your CRM, written for one agent, cannot be easily discovered or reused by another agent built by a different team. Every agent starts with its own bespoke set of integrations.

Third, there is no standardised server-side component. The integration logic lives entirely on the client side in the agent code, meaning every agent has to manage its own connection logic, authentication, error handling, and API versioning for every tool it uses.

MCP addresses all three limitations. Because MCP is a protocol rather than a provider-specific API, a tool implemented as an MCP server works with any MCP-compatible LLM client regardless of provider. Because MCP servers are standalone services, they can be built once and shared across multiple agents and teams. And because the server-side architecture is standardised, the connection logic, authentication, and error handling are handled at the server level rather than duplicated in every agent.
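One way to see the decoupling is to follow a single tool definition. The server publishes the tool once, with a JSON Schema `inputSchema`, and the client translates it into whichever format its model provider expects. The sketch below assumes the tool-definition shapes of OpenAI’s Chat Completions API and Anthropic’s Messages API as publicly documented, and the tool itself is hypothetical. In practice, an agent framework with MCP support usually handles this translation for you.

```python
def to_openai(tool):
    """MCP tool definition -> OpenAI Chat Completions `tools` entry."""
    return {"type": "function",
            "function": {"name": tool["name"],
                         "description": tool.get("description", ""),
                         "parameters": tool["inputSchema"]}}

def to_anthropic(tool):
    """MCP tool definition -> Anthropic Messages API `tools` entry."""
    return {"name": tool["name"],
            "description": tool.get("description", ""),
            "input_schema": tool["inputSchema"]}

# One definition, maintained once on the MCP server (hypothetical tool).
mcp_tool = {
    "name": "create_ticket",
    "description": "Open a support ticket.",
    "inputSchema": {
        "type": "object",
        "properties": {"title": {"type": "string"}, "priority": {"type": "string"}},
        "required": ["title"],
    },
}
```

The schema is written once. Only the thin provider-specific wrapper differs, and that wrapper lives in the client, not in every integration.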

The practical result is that MCP shifts tool development from a per-agent, per-provider effort into a shared infrastructure layer that the whole organisation can build on.

Why This Is Specifically Important for Enterprise Environments

The benefits of MCP become more significant at enterprise scale, and for specific reasons that matter to organisations with complex existing tool ecosystems.

Legacy system integration. Large enterprises typically have a mix of modern SaaS tools, legacy on-premises systems, and custom internal applications. Getting an AI agent to work across all of these previously meant writing custom integrations for each, with all the maintenance overhead that implies. MCP-compatible wrappers can be built around legacy systems once, and any future AI agent can then access those systems without additional integration work. This dramatically reduces the technical debt associated with scaling agentic AI across an organisation.

Multi-agent architectures. As organisations move from single agents to networks of specialised agents working together (an orchestrating agent that delegates to a research agent, a writing agent, a data retrieval agent, and an approval agent, for example), the connections between agents and tools multiply rapidly. Without a standardised protocol, this quickly becomes unmanageable. MCP provides a consistent interface that all agents in a network can use to access shared tools and resources, making multi-agent systems significantly easier to build and maintain.

Governance and access control. In enterprise environments, tool access needs to be controlled. Not every agent should have access to every system. MCP’s server-based architecture makes it easier to implement centralised access control: permissions are managed at the MCP server level, and the agent client receives only the tools and resources it is authorised to use. This is cleaner and more auditable than access control scattered across bespoke integration code.
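A minimal sketch of server-side, scope-based filtering. Each tool requires a scope, and each agent holds a set of granted scopes. The server shows an agent only the tools it may use and re-checks every call. The scope names, agent IDs and tools are hypothetical, and the sketch assumes the agent has already been authenticated.

```python
# Hypothetical mapping of tool name -> scope required to use it.
TOOL_SCOPES = {
    "search_contacts": "crm:read",
    "update_contact": "crm:write",
    "create_ticket": "tickets:write",
}

# Hypothetical per-agent grants, e.g. resolved from an authenticated token.
AGENT_GRANTS = {
    "sales-briefing-agent": {"crm:read"},
    "support-agent": {"crm:read", "tickets:write"},
}

def visible_tools(agent_id):
    """tools/list filtered to what this agent is authorised to use."""
    grants = AGENT_GRANTS.get(agent_id, set())
    return sorted(name for name, scope in TOOL_SCOPES.items() if scope in grants)

def authorise_call(agent_id, tool_name):
    """Re-check on every tools/call; never rely on the client having filtered."""
    scope = TOOL_SCOPES.get(tool_name)
    if scope is None or scope not in AGENT_GRANTS.get(agent_id, set()):
        raise PermissionError(f"{agent_id} may not call {tool_name}")
```

Checking again at call time matters because a misbehaving or compromised client could request a tool it was never shown.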

Vendor independence. One of the strategic risks in enterprise AI adoption is lock-in to a single LLM provider. If your agent infrastructure is built on provider-specific function calling APIs, switching providers requires rebuilding all your tool integrations. MCP-based integrations are provider-agnostic: the same MCP servers work with any MCP-compatible model. This preserves optionality as the LLM market continues to evolve.

MCP in Practice: What Enterprise AI Agents Can Do With It

The difference MCP makes becomes clearest when you look at what enterprise AI agents can realistically do once MCP servers are in place for the key systems they need to access.

Consider a sales intelligence agent that a mid-market enterprise deploys to support its account management team. Without MCP, building this agent requires custom integrations for the CRM (Salesforce), the communication tools (email, calendar), the internal knowledge base, the product database, and the contract repository. Each integration is a separate engineering effort.

With MCP servers in place for each of these systems, the agent can:

- Query the CRM in natural language to surface account history, open opportunities, and relationship context for a specific client

- Search the internal knowledge base for relevant case studies, pricing precedents, and competitive intelligence

- Pull recent email and meeting activity related to the account from the communication tools

- Check contract status and renewal dates from the contract repository

- Draft a tailored account briefing document and save it to the shared drive

All of this happens within a single agent interaction, using standardised tool calls through MCP rather than a tangle of bespoke API integrations. And when a new agent is needed for a different use case, the same CRM MCP server, knowledge base MCP server, and communication MCP server are already available for it to use.
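On the client side, a single agent can hold connections to several MCP servers at once. The sketch below shows how a client might merge their tool lists into one registry and route each call back to the right server. It assumes hypothetical servers named `crm`, `kb` and `contracts` that mirror the example above. The double-underscore prefix is also an assumed naming convention, chosen because some providers restrict which characters a tool name may contain.

```python
# Hypothetical catalogue: tools each connected server reported via tools/list.
SERVER_TOOLS = {
    "crm": ["search_contacts", "get_opportunities"],
    "kb": ["search_articles"],
    "contracts": ["get_renewal_date"],
}

def build_registry(server_tools):
    """Map 'server__tool' -> (server, tool) so names never collide across servers."""
    registry = {}
    for server, tools in server_tools.items():
        for tool in tools:
            registry[f"{server}__{tool}"] = (server, tool)
    return registry

def route(registry, qualified_name, arguments, next_id):
    """Turn the model's chosen tool into (target server, tools/call request)."""
    server, tool = registry[qualified_name]
    return server, {"jsonrpc": "2.0", "id": next_id, "method": "tools/call",
                    "params": {"name": tool, "arguments": arguments}}
```

A second agent can reuse the same server connections and simply build its registry from a different subset of servers.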

This is the compounding value of MCP as an infrastructure layer: the first agent is still expensive to build (because you are building the MCP servers), but each subsequent agent becomes significantly cheaper because it inherits a growing library of reusable, maintained MCP server connections.

MCP and RAG: How They Work Together

MCP and RAG address different problems but are highly complementary in enterprise AI architectures, and it is worth being clear about what each does.

RAG gives an AI agent the ability to retrieve relevant content from a document corpus at query time. It is the memory layer. MCP gives an AI agent the ability to connect to live tools and services to take actions or retrieve real-time structured data. It is the action layer.

A well-designed enterprise AI agent typically needs both. The RAG system handles queries that require a nuanced understanding of documents and unstructured content. MCP handles operations that require structured data retrieval from live systems or execution of actions in external tools.

For example, a procurement agent might use RAG to search the contract knowledge base and understand the terms of a specific supplier agreement. It could then use MCP tool calls to query the ERP system for current inventory levels, check the supplier’s performance scorecard in the CRM, and create a purchase order in the procurement system, all within a single automated workflow. For more on how RAG architecture works and when it is the right choice, see our guides on what RAG is and when enterprises should use it, and on RAG development for enterprise knowledge systems.
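Here is a deliberately small sketch of that division of labour. A toy retriever stands in for the RAG step: it uses word-overlap scoring as a stand-in for embedding search over a vector store. The MCP step is shown as structured `tools/call` requests to live systems. The contract clauses and the tool names `get_inventory_level` and `create_purchase_order` are hypothetical.

```python
import re

# Hypothetical contract clauses; a real system would embed and index documents.
CLAUSES = [
    "Supplier must deliver within 14 days of a purchase order.",
    "Prices are fixed until 31 December unless both parties agree otherwise.",
    "Either party may terminate with 90 days written notice.",
]

def tokens(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query, k=1):
    """RAG step (toy): rank clauses by word overlap with the query."""
    q = tokens(query)
    return sorted(CLAUSES, key=lambda c: len(q & tokens(c)), reverse=True)[:k]

def plan_tool_calls(sku, quantity):
    """MCP step: structured requests to live systems (hypothetical tool names)."""
    return [
        {"jsonrpc": "2.0", "id": 1, "method": "tools/call",
         "params": {"name": "get_inventory_level", "arguments": {"sku": sku}}},
        {"jsonrpc": "2.0", "id": 2, "method": "tools/call",
         "params": {"name": "create_purchase_order",
                    "arguments": {"sku": sku, "quantity": quantity}}},
    ]
```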

What Enterprise Teams Should Know Before Implementing MCP

MCP is a significant architectural improvement, but it is not a silver bullet. Here are the practical considerations that enterprise teams should factor into their planning.

MCP servers need to be built and maintained. The protocol is open, but MCP servers for your specific enterprise systems need to be implemented by your engineering team or an implementation partner. An MCP server for Salesforce is available as an open-source community project, and so are servers for popular tools like GitHub, Slack, Google Drive, and Jira. For custom internal systems or legacy applications, you will need to build and maintain your own MCP servers. This is a one-time investment per system, but it is still an investment.

Security needs careful design. Because MCP gives AI agents the ability to take actions in real systems (not just retrieve information), the security implications are more significant than for a read-only knowledge retrieval system. Your MCP server design must include authentication, scope-limited permissions for each agent, audit logging of all tool calls, and rate limiting to prevent runaway agent behaviour. Implementing MCP without these controls creates real operational risk.
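Audit logging and rate limiting can both be enforced in one place on the server, in front of every tool. The minimal sketch below assumes an in-memory log and a sliding-window limit per agent. Both are illustrative choices: production systems would write to durable, tamper-evident storage and would usually enforce limits in shared infrastructure. The `GuardedToolRunner` class is hypothetical, not part of any MCP SDK.

```python
import time
from collections import deque

class GuardedToolRunner:
    """Wrap tool execution with an audit log and a per-agent rate limit."""

    def __init__(self, tools, max_calls, window_seconds, clock=time.monotonic):
        self.tools = tools              # tool name -> callable(**arguments)
        self.max_calls = max_calls
        self.window = window_seconds
        self.clock = clock
        self.calls = {}                 # agent_id -> deque of recent call times
        self.audit_log = []             # who called what, with what, and the outcome

    def call(self, agent_id, tool_name, arguments):
        now = self.clock()
        recent = self.calls.setdefault(agent_id, deque())
        # Sliding window: forget calls that are older than the window.
        while recent and now - recent[0] >= self.window:
            recent.popleft()
        entry = {"ts": now, "agent": agent_id, "tool": tool_name, "arguments": arguments}
        if len(recent) >= self.max_calls:
            entry["outcome"] = "rate_limited"
            self.audit_log.append(entry)
            raise RuntimeError(f"rate limit exceeded for {agent_id}")
        recent.append(now)
        try:
            result = self.tools[tool_name](**arguments)
            entry["outcome"] = "ok"
            entry["result"] = result
            return result
        except Exception as exc:
            entry["outcome"] = f"error: {exc}"
            raise
        finally:
            # Successful and failed calls are both recorded.
            self.audit_log.append(entry)
```

The injectable `clock` parameter makes the limiter easy to test without waiting in real time.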

Ecosystem maturity. The ecosystem has matured rapidly. Enterprise-grade MCP servers are now available from Microsoft, IBM, Salesforce, and others, and MCP appeared on the Thoughtworks Technology Radar in the Trial category as of late 2025. Even so, your internal or legacy systems will still require custom MCP servers.

It works best as part of a broader agentic architecture. MCP solves the tool integration problem, but a well-functioning enterprise AI agent also needs a sound orchestration layer, a well-designed system prompt, appropriate guardrails, and a human-in-the-loop process for high-stakes actions. MCP is necessary but not sufficient. Moweb’s AI Agents & Intelligent Automation practice designs and builds enterprise agent architectures that incorporate MCP as a core component of the integration layer, alongside governance frameworks and orchestration design.

To help enterprise teams assess where they stand and what to build toward, Moweb defines three stages of MCP readiness:

- Pre-MCP: AI agents connect to tools via bespoke, provider-specific function calling integrations. Each agent has its own integration layer. No reuse across agents or teams. High maintenance overhead.

- MCP-Enabled: Core enterprise systems (CRM, ticketing, data stores) have MCP servers in place. New agents inherit this integration layer. Tool access is standardised and auditable. Teams are actively building MCP server coverage.

- MCP-Native: All enterprise tooling is MCP-accessible. Multi-agent architectures run on a shared MCP infrastructure. Security, governance, and observability are built into the MCP layer. Agent development time is dominated by orchestration and reasoning design, not integration work.

Most enterprises beginning their agentic AI journey in 2025–2026 are at the Pre-MCP or early MCP-Enabled stage. The path to MCP-Native is measured in months, not years, for teams with clear integration prioritisation and the right implementation support.

Frequently Asked Questions About MCP and Enterprise AI Agents

What does MCP stand for, and who created it? MCP stands for Model Context Protocol. It was introduced by Anthropic in November 2024 as an open standard for connecting AI models and agents to external tools, data sources, and services. The specification is open-source and has since been adopted by a wide range of AI development tools and platforms beyond Anthropic’s own products.

Is MCP only for Anthropic’s Claude models? No. MCP is an open protocol that any AI model or agent framework can implement. It was introduced by Anthropic, but it has been adopted across the broader AI development ecosystem. It works with any agent, application, or framework that implements the MCP client specification, whichever LLM sits behind it. This provider-agnostic nature is one of its key advantages for enterprise environments.

How is MCP different from an API integration? A standard API integration is a bespoke, point-to-point connection between your agent code and a specific service. Every system needs its own integration, and those integrations are typically tied to a specific LLM provider’s tool calling format. MCP is a standardised protocol where tools and data sources are exposed through MCP servers that any MCP-compatible agent can use without additional integration work. The difference is similar to the difference between a proprietary connector and a USB port.

What enterprise systems have MCP servers available? The MCP ecosystem includes open-source community servers for a wide range of commonly used enterprise tools, including Salesforce, GitHub, Jira, Slack, Google Drive, Notion, Postgres, and many others. For systems not already covered, custom MCP servers can be built by your engineering team or an implementation partner. The list of available servers is growing rapidly.

Do we need MCP if we are only building a document-answering chatbot? Not necessarily. If your AI use case involves only reading from a document knowledge base and generating responses (a classic RAG application), MCP is not required. MCP becomes relevant when your AI agent needs to take actions or retrieve real-time structured data from live enterprise systems, not just answer from static documents. If you expect your AI system to evolve from a read-only knowledge tool into an agent that can take actions, designing for MCP from the beginning saves significant rework later.

What are the security risks of MCP in enterprise environments? The primary risks are:

- Unauthorised tool access: an agent using an MCP server it should not have access to.
- Privilege escalation: an agent performing actions beyond its permitted scope.
- Audit gaps: no record of which agent called which tool, with what parameters, and what the result was.

Additional risks include tool poisoning, where MCP server tool descriptions are manipulated to redirect agent behaviour, and prompt injection via malicious tool outputs. Both require server-side validation and regular security audits of your MCP server registry. All of these risks are manageable with proper MCP server design: authentication between agent and server, scope-limited permissions per agent, comprehensive audit logging, and rate limiting. Treating MCP server security as an afterthought is the most common mistake in early enterprise implementations.
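One mitigation for tool poisoning is to pin tool definitions. You hash each tool’s name, description and input schema when a human reviews it, then flag any tool whose definition later changes or that appears without review. Here is a stdlib-only sketch of that check. It is an assumed client- or gateway-side control, not something the MCP specification mandates. It also does not address prompt injection in tool outputs, which needs separate handling.

```python
import hashlib
import json

def fingerprint(tool):
    """Stable SHA-256 hash of the parts of a tool definition the model reads."""
    canonical = json.dumps(
        {k: tool.get(k) for k in ("name", "description", "inputSchema")},
        sort_keys=True, separators=(",", ":"),
    )
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

def pin(tools):
    """Record fingerprints after a human has reviewed the server's tools."""
    return {t["name"]: fingerprint(t) for t in tools}

def changed_tools(pinned, tools):
    """Names of tools that are new, or whose definition changed since review."""
    return sorted(t["name"] for t in tools if pinned.get(t["name"]) != fingerprint(t))
```

A non-empty result from `changed_tools` would be a reason to stop exposing those tools to agents until someone re-reviews them.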

How does MCP relate to AI agent orchestration frameworks like LangChain or LangGraph? MCP is a communication protocol, not an orchestration framework. LangChain and LangGraph define how agents plan, reason, and sequence their actions. MCP defines how agents communicate with external tools and data sources. The two are complementary: LangGraph (or another orchestration framework) manages the agent’s decision-making and workflow, while MCP handles the standardised connections to the tools and systems the agent needs to use. Most production enterprise agent architectures use both.

How does MCP relate to Google’s Agent-to-Agent (A2A) protocol? A2A, introduced in April 2025, is designed to complement MCP rather than replace it. MCP standardises how agents connect to tools and data sources. A2A standardises how independent AI agents discover each other, establish secure communication, and coordinate tasks. In enterprise architectures deploying multiple specialised agents, MCP handles the agent-to-tool layer and A2A handles the agent-to-agent layer.

Conclusion: MCP Is the Infrastructure Layer Enterprise AI Has Been Waiting For

The gap between what AI agents promise and what most enterprise deployments have delivered comes down, in large part, to the difficulty and fragility of tool integrations. MCP does not solve every problem in enterprise agentic AI, but it solves a foundational one: the connection layer between AI models and the enterprise systems they need to be genuinely useful.

For organisations that are serious about building AI agents that can operate across their full tool ecosystem rather than a curated handful of manually integrated services, MCP is not an optional nice-to-have. It is the architectural foundation that those agents need to be maintainable, scalable, and provider-independent over time.

If your organisation is planning an AI agent implementation or evaluating an existing architecture, the question of whether MCP is part of the design is worth asking early. Moweb’s AI Agents & Intelligent Automation team builds enterprise agentic systems with MCP as a standard component of the integration architecture. Talk to us about what that looks like for your use case.
