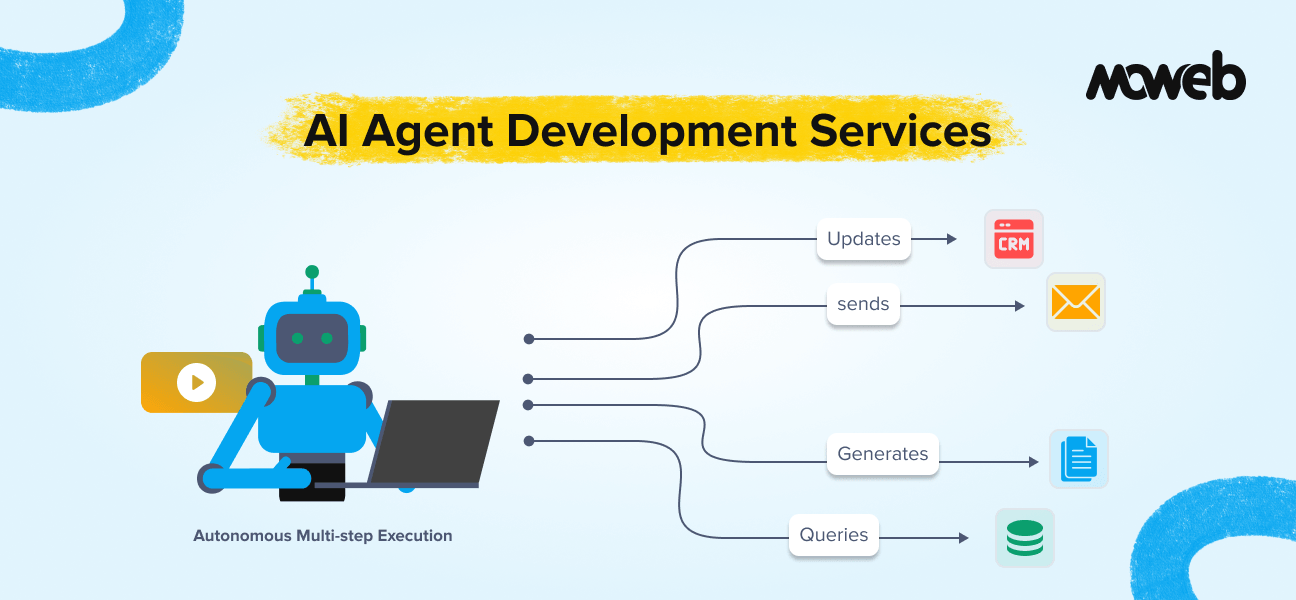

What are AI agent development services? AI agent development services involve designing, building, testing, and deploying autonomous AI systems that can perceive context, make decisions, execute multi-step tasks, and interact with external tools and enterprise systems without requiring step-by-step human instruction for each action. According to Gartner, 40% of enterprise applications will be integrated with task-specific AI agents by the end of 2026, up from less than 5% in 2025. These services typically cover use case definition, architecture design, tool integration (including MCP-based connections), orchestration framework setup, testing, governance controls, and post-deployment monitoring.

What can enterprise AI agents actually do? Enterprise AI agents can autonomously execute workflows that previously required significant human coordination: researching and summarising intelligence from multiple sources, processing and routing documents through approval pipelines, querying CRMs and databases to surface account context, generating and sending structured communications, triaging and escalating support tickets, monitoring data pipelines and flagging anomalies, and coordinating across multiple specialised sub-agents to complete complex multi-step tasks. The key distinction from earlier automation tools is that agents can adapt their behaviour based on context and handle situations that were not explicitly programmed. A LangChain survey of 1,300+ practitioners (December 2025) found 57% of organisations already have AI agents in production, with quality of outputs and governance infrastructure identified as the primary barriers to broader deployment.

There is a meaningful difference between AI that answers questions and AI that gets things done.

The first category, broadly, is what most enterprise AI deployments look like today: chatbots, search assistants, document summarisers, and decision-support tools that produce outputs a human then acts on. Useful, but still fundamentally a tool that requires a person to complete the loop.

The second category is what AI agents make possible. An agent does not just produce an output and wait. It takes the output, decides what to do with it, executes the next step, assesses the result, and continues until the task is complete – or until it encounters a situation that requires human judgment, at which point it escalates appropriately.

This shift from AI-as-tool to AI-as-actor is the most significant transition in enterprise AI right now. It is also one of the most frequently misunderstood, overpromised, and under-engineered. This guide covers what enterprise AI agent development actually involves, what agents can genuinely do well, where the real risks are, and how to approach implementation in a way that produces reliable outcomes rather than impressive demos. Gartner predicts that 40% of enterprise applications will integrate task-specific AI agents by the end of 2026, up from less than 5% in 2025, making the window to build and deploy production-grade agentic systems now, not later.

What Makes Something an AI Agent (and What Does Not)

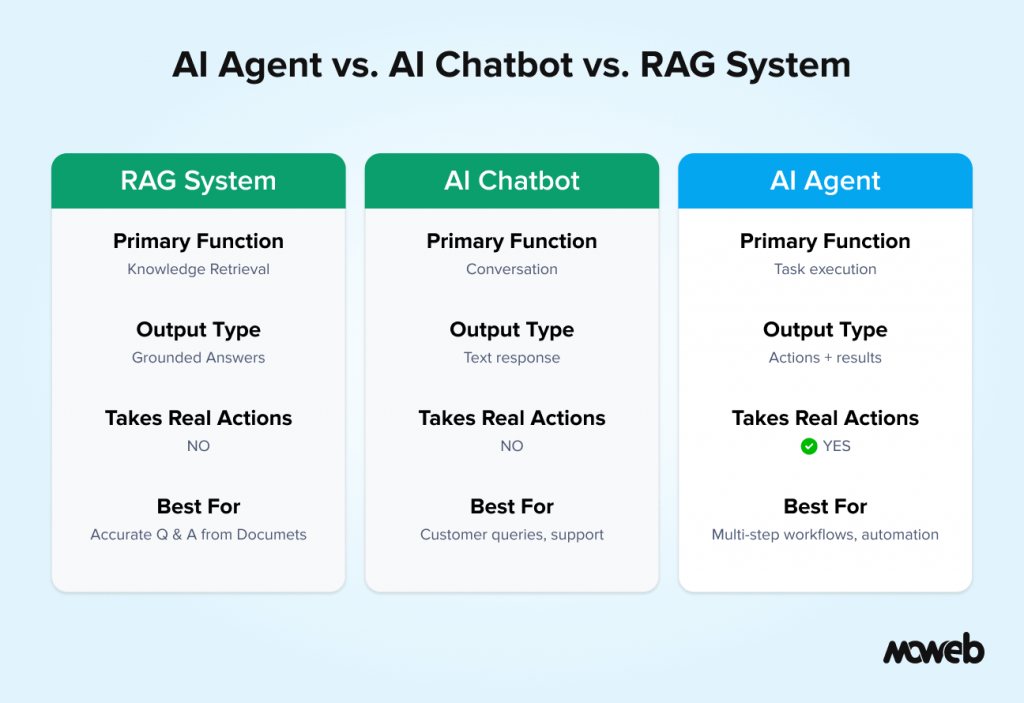

Before getting into use cases and implementation, it is worth being precise about what an AI agent actually is, because the term is being applied to everything from a simple chatbot with a few tool calls to a genuinely sophisticated autonomous system.

An AI agent has three characteristics that distinguish it from a standard LLM application:

Autonomy over multi-step processes. An agent can decompose a goal into sub-tasks, execute those sub-tasks sequentially or in parallel, and adapt based on intermediate results. It does not require a human to specify each step. A chatbot that answers a question is not an agent. A system that receives a brief, searches multiple sources, synthesises findings, identifies gaps, requests additional information where needed, and produces a final structured report – that is an agent.

Tool use and external action. An agent connects to external systems and takes real actions: querying databases, creating records, sending communications, triggering workflows, and calling APIs. An LLM that only produces text is not an agent. An LLM that can query your CRM, update a ticket, and send an email based on the result is operating agentically.

Goal-directed behaviour with adaptive decision-making. An agent works toward an objective and makes decisions about how to achieve it based on context. When it encounters an obstacle, it tries an alternative approach rather than stopping. It has a form of operational judgment within the scope it has been given.

These three characteristics together define what separates a genuine AI agent from a well-prompted chatbot. Understanding this distinction helps you evaluate what vendors are actually building and whether it matches what you need.

Enterprise AI Agent Use Cases: Where They Create Real Value

The most reliable way to evaluate whether AI agents are right for a specific use case is to ask: Does this task require multi-step execution, tool access, and adaptive decision-making? If yes, agents are a good fit. If the task is essentially a single-step question-and-answer interaction, a well-built RAG system is probably more appropriate.

Here are the enterprise use cases where AI agents are producing demonstrable value in 2026:According to a 2026 mid-year report, 80% of organisations now report measurable economic impact from AI agents, and 57% already have agentic AI running in production (G2, 2025). The AI agents market reached $7.6 billion in 2025 and is projected to exceed $47 billion by 2030, driven largely by enterprise adoption of the use cases below.

Sales Intelligence and Account Research

A sales intelligence agent receives a brief (company name, meeting context, deal stage) and autonomously executes a research workflow: querying the CRM for account history and contact relationships, searching the internal knowledge base for relevant case studies and pricing precedents, pulling recent news and public financial information about the account, checking email and calendar for previous interactions, and producing a structured account briefing document within minutes of the request.

The value is not that an agent can do this instead of a human. A skilled sales analyst could do it too. The value is that an agent can do it in two minutes instead of two hours, for every account, every time, with a consistent structure. Across a sales team of thirty people, this is hundreds of hours per week returned to higher-value work.

Document Processing and Routing

In legal, compliance, finance, and operations functions, large volumes of documents arrive continuously and require classification, data extraction, routing to the right team or workflow, and in some cases initial assessment. An agent can read an incoming document, classify it by type and priority, extract key data fields, check against existing records or policies, route it to the appropriate queue or approver with a structured summary, and flag anything that requires immediate human attention.

This is particularly high-value in environments where document volumes make human processing a bottleneck: insurance claims, supplier onboarding, contract review intake, regulatory filings, and grant applications are common deployment contexts.

IT Operations and Incident Management

An IT operations agent monitors system health metrics, log streams, and alerting systems continuously. When it detects an anomaly, it does not just page an engineer with a raw alert. It investigates: correlating the anomaly with recent deployment history, checking dependency services, searching incident history for similar patterns, running automated diagnostic checks, and producing a structured incident summary that includes probable cause, affected services, and recommended remediation steps. The on-call engineer receives context, not noise.

More advanced implementations allow the agent to execute certain remediation actions autonomously (restarting services, scaling resources, rolling back deployments) within predefined safe boundaries, escalating to humans only when the situation exceeds its authorised scope.

Customer Support Tier-1 and Escalation Triage

A support triage agent handles incoming support requests by reading the customer’s message, querying the customer record in the CRM, searching the knowledge base for relevant resolution content, checking for open tickets from the same customer, and either resolving the issue directly (if a clear resolution exists in the knowledge base), providing a detailed response with referenced sources, or preparing a structured escalation package for a human agent that includes full context, relevant history, and suggested resolution steps.

The agent does not replace human support. It eliminates the information-gathering overhead that consumes the majority of handling time for tier-1 issues, so human agents spend their time on genuine judgment calls rather than searching for context.

Financial and Operational Reporting

A reporting agent can execute a defined reporting workflow autonomously: pulling data from multiple source systems, running standard calculations and variance analysis, generating narrative commentary based on the numbers, formatting the output against a standard template, and distributing the report to defined recipients. For weekly operational reports, monthly management packs, and exception-based monitoring reports that follow a consistent structure, this replaces hours of manual work with a reliable automated process.

Procurement and Vendor Management

A procurement agent can process purchase requests by checking policy compliance, searching for approved vendors, comparing pricing against historical records, generating a compliant purchase order, routing for approval based on value thresholds, and updating the relevant systems once approval is received. For routine procurement below a certain value threshold, this can operate with minimal human involvement. For higher-value or non-standard procurement, the agent handles the information gathering and routing, with humans retaining decision authority.

The Real Risks of Enterprise AI Agent Deployment

The risk profile of AI agents is meaningfully different from traditional software and from simpler AI applications. Understanding these risks before building is the difference between a system that creates value and one that creates liability.

Cascading Errors in Autonomous Workflows

In a standard application, a bug produces an incorrect output. In an autonomous agent, a mistake at step three of a twelve-step workflow can propagate through subsequent steps, each building on the flawed output, until the final result is significantly wrong in ways that are difficult to trace. Unlike a chatbot that produces a bad answer a user can simply ignore, an agent that has taken real actions in real systems (updated records, sent communications, triggered payments) has created consequences that require active remediation.

The mitigation is deliberate design of checkpoints and human-in-the-loop gates at high-stakes decision points, combined with comprehensive logging of every action taken and every intermediate output produced.

Scope Creep and Unintended Tool Use

AI agents are instructed through natural language, and natural language is imprecise. An agent given broad tool access and an ambitious goal will sometimes take actions that are technically within its permitted scope but were not intended. An agent with write access to a CRM might update records it was only supposed to read. An agent with email send permissions might send a message to an unintended recipient.

The mitigation is the principle of least privilege: agents should have access only to the tools and data they specifically need for their defined tasks, with explicit permission boundaries rather than broad access grants. Every action an agent can take should be explicitly enumerated and authorised, not inferred from broad access.

Prompt Injection Through External Data

An agent that reads external content (web pages, emails, documents, database records) as part of its workflow is vulnerable to prompt injection: malicious instructions embedded in that external content that attempt to override the agent’s instructions or cause it to take unintended actions. This is not a theoretical risk. It is an active exploitation pattern that has been demonstrated against real deployed systems.

The mitigation requires treating all externally retrieved content as untrusted input, implementing input sanitisation at the retrieval layer, and designing agent prompts to be resistant to instruction overrides from content in the context window.

Data Exfiltration Through Agent Actions

An agent with broad data access and external communication capabilities (email, API calls, file exports) could, if compromised or manipulated, become a mechanism for exfiltrating sensitive data. This risk is particularly acute for agents that handle confidential commercial or personal data.

The mitigation requires audit logging of all external data transfers, rate limiting on communication actions, anomaly detection on agent behaviour patterns, and regular security review of agent tool permissions as the system evolves.

Governance and Accountability Gaps

When an agent takes an action that causes a problem, who is responsible? The engineer who built it? The business owner who approved the use case? The vendor who implemented it? In enterprise environments with regulatory obligations, unclear accountability for AI agent actions is a genuine compliance risk.

The mitigation requires explicit governance documentation: a defined owner for every agent deployment, a documented scope of authorised actions, a clear escalation path for out-of-scope situations, and an audit trail that allows reconstruction of every decision and action the agent took. For a complete governance framework covering LLMs and agent deployments, including role-based access control, explainability requirements, and regulatory compliance, see our guide to AI governance for LLMs and enterprise agents.

Model Hallucination and Stale Context

Unlike a chatbot, where a hallucinated response is visible and easily dismissed, an agent that fabricates a data point, a policy number, a pricing figure, a compliance threshold, and then acts on it can trigger a chain of real-world consequences before anyone notices the error. The risk compounds when agents operate on stale context: information retrieved from enterprise systems that has since been updated, leading the agent to make decisions based on an outdated state.

The mitigation requires grounding agents in live data sources rather than cached snapshots, implementing retrieval validation steps that confirm data freshness before high-stakes actions, and designing agent prompts to explicitly flag uncertainty rather than confabulate when information is missing or ambiguous.

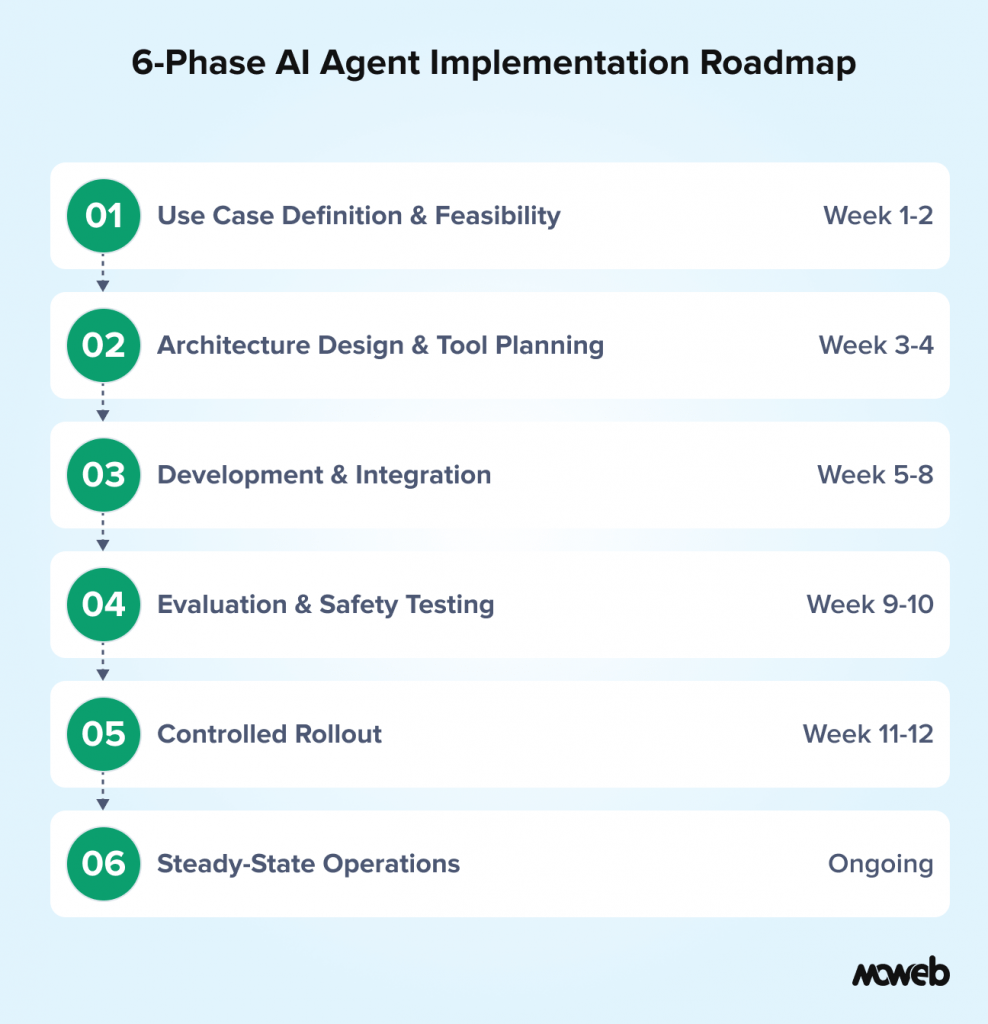

Implementation Roadmap: Six Phases to Production

Building enterprise AI agents reliably requires a structured approach. Here is the implementation roadmap Moweb uses across agent engagements, adapted to different use cases and organisational contexts.

Phase 1: Use Case Definition and Feasibility Assessment (Weeks 1 to 2)

Before any engineering begins, the use case needs to be precisely defined. This means documenting: the specific task the agent will perform, the tools and systems it needs access to, the decision boundaries within which it operates autonomously versus escalating to humans, the success criteria and how they will be measured, and the data and system access required.

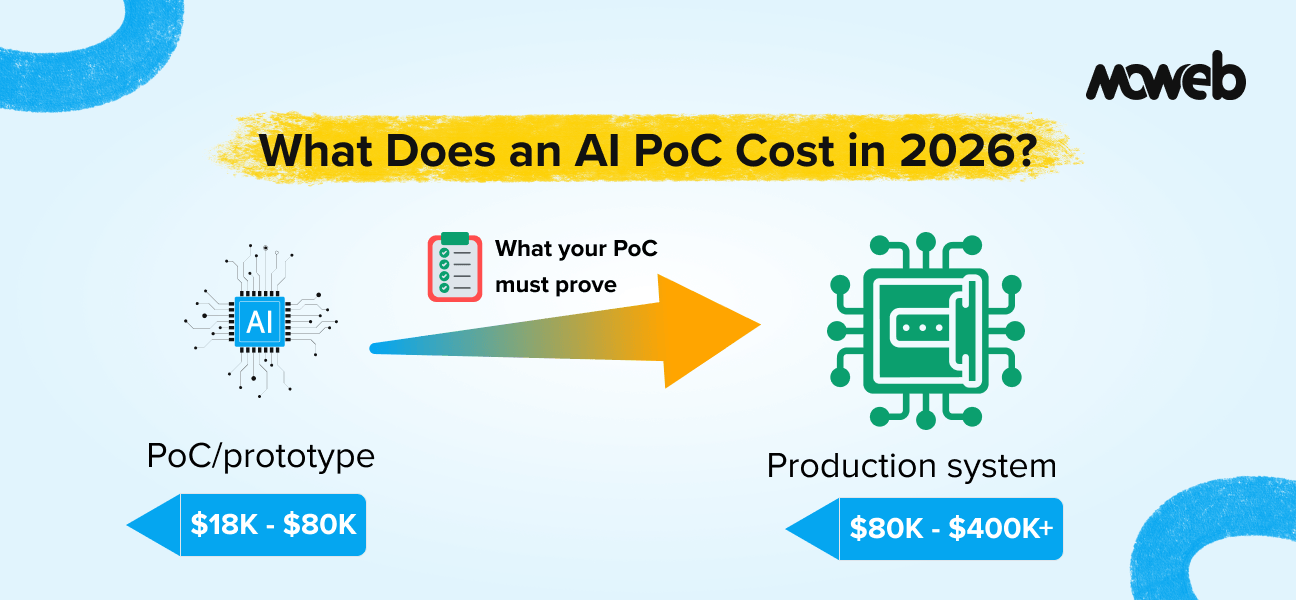

At this phase, a feasibility assessment identifies the technical dependencies (API availability, data quality, integration complexity) and the governance requirements (access controls, audit logging, approval workflows) that the implementation must address. For a breakdown of what an AI proof-of-concept typically costs in 2026, including tooling, engineering, and evaluation overhead, see our guide to AI PoC costs for enterprises.

Phase 2: Architecture Design and Tool Integration Planning (Weeks 2 to 4)

With the use case defined, the architecture layer covers: orchestration framework selection (LangGraph, AutoGen, CrewAI, or custom), tool integration design (MCP-based connections where applicable, custom integrations where not), memory and state management approach, and the human-in-the-loop design (where checkpoints exist, who reviews, what triggers escalation).

Tool integration planning at this stage identifies which MCP servers exist for the required enterprise systems and which need to be built. As covered in our guide on what MCP is and why it matters for enterprise AI agents, MCP significantly reduces the integration engineering burden for agents that need to connect to multiple enterprise systems.

Phase 3: Development and Integration (Weeks 4 to 10)

The development phase builds the agent system iteratively: starting with the core workflow on clean test data, adding tool integrations progressively, implementing error handling and fallback behaviours, building the logging and audit infrastructure, and adding access controls at the tool layer.

Testing during this phase includes adversarial testing for prompt injection and unintended tool use, not just functional testing of the happy path. A system that works correctly on clean inputs but breaks on adversarial ones is not production-ready.

Phase 4: Evaluation and Safety Testing (Weeks 10 to 12)

Before any production deployment, the agent system undergoes a structured evaluation:

- Functional accuracy testing against a representative set of real task examples, with human review of outputs

- Boundary testing: what happens when the agent encounters input it was not designed for?

- Adversarial testing: can the agent be manipulated through prompt injection in retrieved content?

- Performance testing: latency, throughput, and cost per task at expected production volumes

- Access control audit: Does the agent access only what it is permitted to access?

Phase 4 produces a documented evaluation report that becomes part of the governance record for the deployment.

Phase 5: Controlled Rollout (Weeks 12 to 16)

Production deployment begins with a controlled rollout: a limited set of real tasks, with human review of all agent outputs before they take effect. Shadow mode operation, running the agent in parallel with existing human workflows without taking binding actions, is the recommended starting position before full controlled rollout. It validates real-world behaviour, edge case handling, and tool call patterns against production data before any autonomous actions are taken. This is not a pilot — it is production with enhanced oversight…

The purpose is to identify failure modes and edge cases that evaluation did not surface, before those failures have operational consequences. The controlled rollout period continues until the agent’s performance on real tasks meets the defined acceptance criteria.

Phase 6: Steady-State Operations and Monitoring (Ongoing)

Once the agent reaches steady-state operations, ongoing monitoring covers: output quality metrics, tool call patterns (anomaly detection for unexpected behaviour), task completion rates, escalation frequency and patterns, and cost per task. Monitoring results feed back into regular review cycles where the agent’s scope, instructions, and tool access are assessed and updated as the operational context evolves.

How to Evaluate an AI Agent Development Partner

Not every company that offers AI agent development services has genuine production deployment experience. Here are the specific questions to ask when evaluating a development partner for an agent implementation:

- “Can you describe an AI agent you have deployed to production? What orchestration framework did you use, what tools did the agent access, and what monitoring is in place?”

- “How do you handle prompt injection risk in agents that access external content?”

- “What does your access control design look like for agents with write permissions to enterprise systems?”

- “What is your approach to human-in-the-loop design? Where do you recommend checkpoints in multi-step workflows?”

- “What does a typical evaluation phase look like before production deployment?”

A vendor who has not operated agents in production will struggle to give specific, confident answers to these questions. One who has will answer them naturally and in detail. Moweb’s AI Agents & Intelligent Automation practice builds enterprise agent systems across the full six-phase roadmap described here, with security, governance, and MCP-based integrations as standard components. For a broader guide on evaluating AI development partners, our post on how to choose an AI development company for enterprise projects covers that decision in detail.

Frequently Asked Questions About AI Agent Development Services

What is the difference between an AI agent and an AI chatbot? A chatbot is designed for conversation: a user asks a question, the system responds, and the interaction ends. An AI agent is designed for task execution: given a goal, it plans a sequence of actions, uses tools to carry them out, adapts based on intermediate results, and completes a workflow that may span many steps and multiple systems. A chatbot produces outputs for humans to act on. An agent acts on them directly.

What are the most common enterprise AI agent use cases in 2026? The highest-adoption use cases in enterprise environments currently include sales intelligence and account research, document processing and routing, IT operations monitoring and incident triage, customer support triage and escalation, financial and operational reporting automation, and procurement workflow automation. The common thread is tasks that are repetitive, multi-step, tool-dependent, and currently consume significant human time without requiring genuine human judgment for the majority of cases.

What orchestration frameworks are used to build enterprise AI agents? The most widely deployed frameworks in enterprise production environments are LangGraph (from LangChain), which is suited to complex stateful workflows; AutoGen (from Microsoft), which is designed for multi-agent collaboration patterns; and CrewAI, which is well-suited to role-based multi-agent systems. For simpler single-agent implementations, LangChain’s agent abstractions or custom implementations using direct LLM APIs are also common. The right choice depends on the complexity of the workflow, the need for state management, and the team’s existing familiarity.

How long does it take to build and deploy an enterprise AI agent? A focused single-agent deployment for a well-defined use case with clear tool integrations typically takes 8 to 14 weeks from use case definition to production, using the six-phase roadmap. More complex multi-agent systems with custom tool integrations, sophisticated governance requirements, or enterprise-wide deployment scope typically take 16 to 30 weeks. Timelines are heavily influenced by the availability and quality of the tool integrations (APIs, MCP servers) and the organisation’s internal decision-making speed.

What is the biggest risk in deploying AI agents in enterprise environments? Based on production experience, the most consistently underestimated risk is cascading errors in autonomous workflows. When an agent makes a mistake in an early step of a multi-step process, subsequent steps build on that mistake. The final output can be significantly wrong in ways that are difficult to trace, and if the agent has taken real actions along the way (updating records, sending communications), those actions require active remediation. Robust checkpointing, comprehensive logging, and human-in-the-loop gates at high-stakes decision points are the primary mitigations.

Do enterprise AI agents need a human in the loop? Yes, for any action with significant business consequences. The design principle is that autonomous execution should be reserved for actions that are reversible, low-risk, and well within the agent’s tested capability. Irreversible actions (sent communications, financial transactions, record deletions) or high-stakes (customer-facing responses to sensitive situations, compliance-related decisions) should require human approval at a defined checkpoint. The right human-in-the-loop design is specific to each use case and should be explicitly documented as part of the governance framework.

How does MCP improve enterprise AI agent development? MCP (Model Context Protocol) provides a standardised communication layer between AI agents and external tools and data sources. Without MCP, each tool connection requires a bespoke integration specific to the agent’s LLM provider. With MCP, tools are exposed through standardised servers that any MCP-compatible agent can use without additional integration work. For enterprises building multiple agents that need access to the same enterprise systems (CRM, ticketing, databases, file stores), MCP significantly reduces total integration engineering cost and makes individual agent implementations more maintainable. See our guide on what MCP is and why it matters for a fuller explanation.

What is the difference between an AI agent and RPA (Robotic Process Automation)? RPA automates rule-based, deterministic processes that follow a fixed script — it cannot adapt if the process changes or if an unexpected input is encountered. An AI agent can handle variation, interpret unstructured content, make context-dependent decisions, and adapt its approach when a step does not go as expected. RPA is appropriate for highly structured, stable processes. AI agents are appropriate for workflows that require judgment, handling of variable inputs, or multi-step coordination across systems that do not have a fixed script.

What is memory poisoning in AI agents, and how is it mitigated? Memory poisoning occurs when malicious or corrupted data is stored in an agent’s long-term memory either through a compromised data source the agent processes or through a deliberate prompt injection attack that causes the agent to write adversarial instructions to its own memory store. Unlike session-level prompt injection, memory poisoning persists across sessions and can corrupt agent behaviour until the tainted memory is identified and removed. Mitigation requires treating agent memory stores as sensitive infrastructure: regular audits of stored memory content, access controls on memory write operations, and sanitisation pipelines for content before it is stored.

Conclusion: Build for Reliability, Not Just Capability

The most capable AI agents deployed carelessly create more problems than they solve. The most carefully built AI agents, deployed on the right use cases with proper governance, create genuine and compounding operational value.

The distinction comes down to how seriously you take the engineering rigour required: precise use case definition, deliberate access control design, adversarial testing before production, comprehensive logging, and human oversight at the decision points that matter.

If your organisation is evaluating AI agent development as a capability to invest in, the questions in this guide give you a framework for assessing whether you have the right use case, the right risk posture, and the right delivery partner to build something that works reliably at enterprise scale.

Moweb’s AI Agents & Intelligent Automation team builds enterprise agent systems with production reliability as the design constraint, not the afterthought. Talk to us about your use case.

Found this post insightful? Don’t forget to share it with your network!