What is AI used for in fintech in 2026? AI in fintech is applied across six primary use cases: real-time fraud detection (blocking suspicious transactions in under 50 milliseconds using behavioural and network pattern analysis), credit risk scoring and underwriting automation (processing loan applications in minutes using 1,600+ variables, including alternative data), AML and KYC automation (reducing false-positive rates in transaction monitoring from 90%+ to under 20%), regulatory compliance monitoring (tracking regulatory change and flagging breaches before they occur), personalised financial products (tailoring offers and pricing to individual risk profiles and behaviour), and AI-powered customer service (handling 60-80% of service interactions without human agents). In 2026, a seventh dimension is emerging: autonomous AI agents for financial crime investigation, systems that do not just flag suspicious activity but execute multi-step investigative workflows, draft SAR submissions, and coordinate cross-channel fraud responses without human prompting. McKinsey estimates AI’s annual value potential in banking alone at $200 to $340 billion.

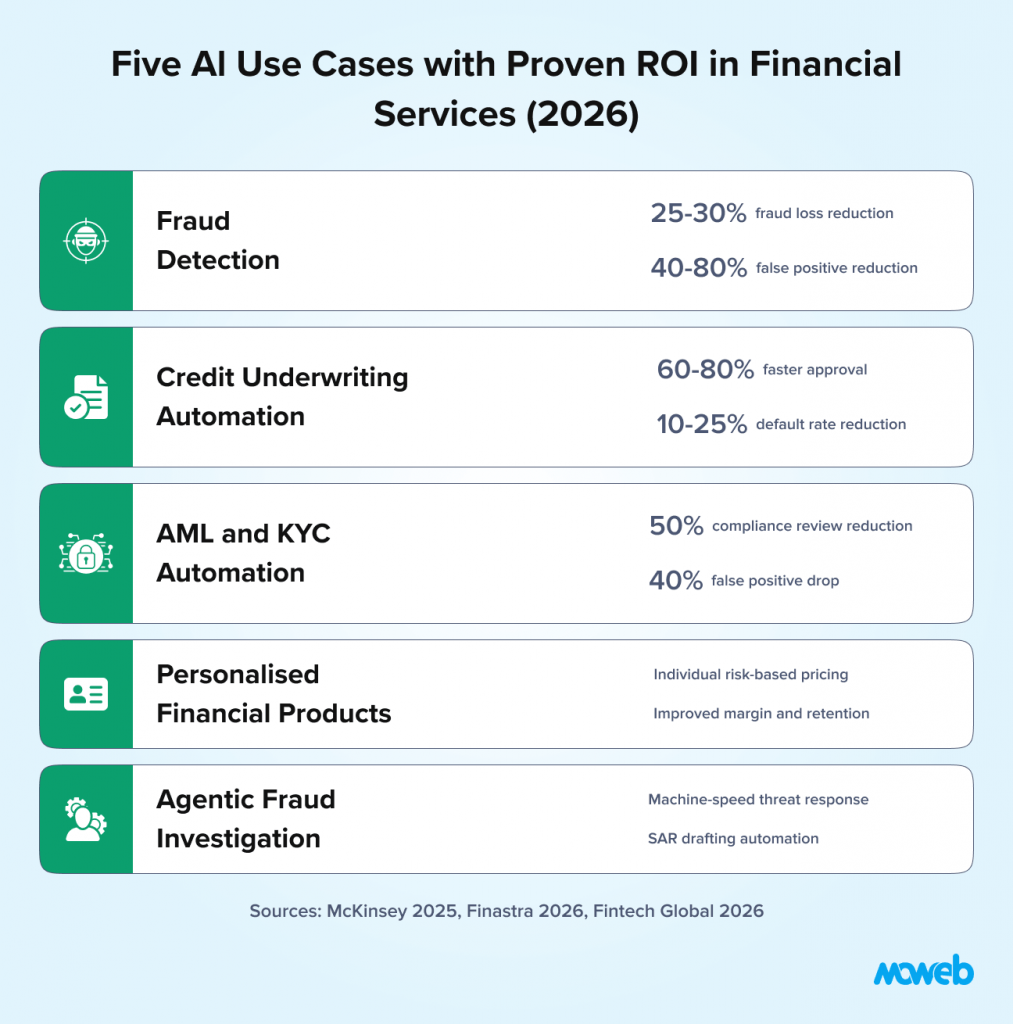

What is the ROI of AI in financial services? Financial institutions deploying AI in fraud detection typically see a 25-30% reduction in fraud losses within 12-18 months. Independent data shows AI-based fraud detection reduced financial losses by 40% for major platforms in 2025 (Wezom/Finastra, 2026). Compliance automation generates 30-40% cost savings in AML and KYC operations. Loan processing automation reduces approval times by 60-80%, compressing multi-day underwriting to minutes. The global AI in finance market stood at $17.7 billion in 2025 and is projected to reach $73.9 billion by 2033 at a 19.5% CAGR (Acropolium / market research, 2026). Gartner’s 2026 data shows 59% of finance functions now use AI, but the returns are concentrated: institutions that tie AI to specific revenue outcomes and build governance before scaling consistently outperform those that deploy broadly without measurement frameworks.

Financial services is the sector where AI’s potential is largest and where getting it wrong carries the most severe consequences.

The value opportunity is significant. McKinsey estimates AI’s annual value potential in banking at $200 to $340 billion, from operational efficiency, fraud reduction, better credit decisions, and personalised products. The global AI in finance market stood at $17.7 billion in 2025 and is projected to reach $73.9 billion by 2033 at a 19.5% CAGR (Acropolium / market research, 2026). According to Finastra’s 2026 research, 65% of US financial institutions are actively implementing AI, compared to a 61% global average, with credit underwriting (35%), document processing (41%), and data analysis (47%) as the top current applications.

But fintech is also the sector with the most demanding regulatory environment for AI deployment. Model decisions must be explainable to regulators and to customers. Bias in credit scoring models violates the Equal Credit Opportunity Act and its international equivalents. AML systems must meet specific detection thresholds or invite regulatory sanction. Data handling requires compliance with GDPR, CCPA, and sector-specific frameworks depending on jurisdiction. An AI system that works technically but fails these compliance tests is not deployable, regardless of its performance metrics.

This combination of high ROI potential and high regulatory complexity means fintech AI implementation requires a fundamentally different approach from AI in less regulated sectors. This guide covers the four highest-ROI fintech AI use cases and an emerging fifth (agentic fraud investigation), their specific compliance requirements, and a realistic implementation sequence that builds regulatory confidence alongside technical capability.

Why Fintech AI Is Fundamentally Different from General Enterprise AI

Before examining specific use cases, it is worth understanding why fintech AI compliance requirements materially shape architecture decisions rather than merely adding documentation.

For US-based financial institutions evaluating AI partners, our guide to what American buyers expect from an enterprise AI partner covers the compliance credential requirements in detail.

Model decisions have legal consequences. When an AI model denies a loan application, the denial must be explained in terms the applicant can understand and that satisfy adverse action notice requirements under ECOA (US), the Consumer Credit Act (UK), and equivalent frameworks globally. “The model’s score was insufficient” is not a compliant explanation; “Your application score was primarily affected by a high debt-to-income ratio and limited credit history” is the kind of specific explanation required. This explainability requirement shapes which model architectures are viable for credit decisions: it is not a reporting add-on that can be retrofitted after deployment.

Bias in models is a regulatory violation, not just an ethical concern. A credit scoring model that produces systematically lower scores for protected demographic groups – even if those groups are not used as model inputs – may violate fair lending law if the proxy variables used (zip code, certain types of employment history, social connections) correlate with protected characteristics. This means pre-deployment bias testing is a compliance requirement, not an optional quality check, for any model influencing credit, insurance, or employment decisions.

AML obligations create specific detection requirements. Financial institutions are legally required to maintain AML programmes that detect specific patterns of suspicious activity. An AI system that reduces AML false positives but also reduces true positive detection rates below regulatory thresholds exposes the institution to enforcement action. The goal is not optimising a single metric – it is maintaining all required detection thresholds while improving the signal-to-noise ratio of alerts requiring human review.

Data handling requirements are more stringent. Financial data is among the most sensitive data classifications in most jurisdictions. The data governance requirements for training a credit model on customer transaction histories – consent frameworks, data minimisation, retention limits, subject access rights – are materially more demanding than for training a model on anonymised operational data.

These four realities do not make fintech AI impossible. They make design decisions more consequential and the implementation sequence more important.

Use Case 1: Real-Time Fraud Detection

Fraud detection is the most widely deployed and best-evidenced fintech AI use case. It also has the clearest ROI model: fraud losses prevented minus system cost equals net value. The math is straightforward, the baseline is measurable, and the improvement is attributable.

The evolution from rule-based to AI-driven fraud detection has been dramatic. Rule-based systems – “flag any transaction over $5,000 from a new merchant in a foreign country” – are transparent and auditable but inherently static. Fraudsters learn the rules and design around them. They are also binary: a transaction either triggers a rule or it does not, with no ability to weigh the combination of many small signals that together indicate suspicious behaviour.

AI fraud detection systems operate differently. They learn the normal transaction behaviour for each individual account holder and flag deviations from that baseline rather than from a population-wide rule. They analyse hundreds of signals simultaneously: transaction amount, merchant category, geolocation relative to home address, time of day, device fingerprint, velocity of recent transactions, and network connections to accounts with known fraud history. And they adapt continuously as fraud patterns evolve, updating their detection models on recent fraud signals rather than waiting for a human to identify a new pattern and write a new rule.
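To make the contrast with static rules concrete, here is a minimal Python sketch, assuming one account’s recent transaction amounts are available as a list. The rule, the z-score baseline, and the hand-set weights in `account_risk` are hypothetical illustrations; a production model would learn its weights from labelled fraud outcomes and use far more signals.

```python
from statistics import mean, stdev

# Hypothetical static rule, mirroring the example rule quoted earlier.
RULE_AMOUNT_LIMIT = 5_000.0


def rule_flag(amount: float, new_merchant: bool, foreign: bool) -> bool:
    """Binary rule: fires only when every condition holds at once."""
    return amount > RULE_AMOUNT_LIMIT and new_merchant and foreign


def baseline_zscore(history: list[float], amount: float) -> float:
    """Distance of this amount from the account's own history, in standard deviations.

    Needs at least two past transactions (a statistics.stdev requirement).
    """
    mu = mean(history)
    sigma = stdev(history) or 1.0  # guard against a perfectly flat history
    return (amount - mu) / sigma


def account_risk(history: list[float], amount: float, new_merchant: bool,
                 foreign: bool,
                 weights: tuple[float, float, float] = (1.0, 1.5, 1.5)) -> float:
    """Blend several weak signals into one score instead of a yes/no rule.

    The weights are hand-set for illustration only.
    """
    w_amount, w_merchant, w_foreign = weights
    z = max(0.0, baseline_zscore(history, amount))  # only unusually high spend adds risk
    return w_amount * z + w_merchant * float(new_merchant) + w_foreign * float(foreign)
```

A $900 purchase at a new foreign merchant, on an account that normally spends around $40, passes the $5,000 rule untouched but scores very high against the account’s own baseline.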

In 2026, however, AI fraud detection faces a materially more sophisticated threat landscape than in prior years. The fraud vectors that legacy and even first-generation AI systems were designed for (stolen credentials, card-present skimming, and basic account takeover) have been eclipsed by AI-driven attacks that require AI-powered defences to counter:

- Synthetic identity fraud: criminal networks use AI agents to construct synthetic identities combining real and fabricated data, then programmatically build credit history over 6–18 months before executing coordinated credit line drawdowns. Synthetic identity fraud costs businesses an estimated $20–40 billion globally each year (Fintech Global, 2026)

- Deepfake-driven KYC bypass: fraudsters use real-time voice cloning and video manipulation to bypass biometric liveness checks. Deepfake fraud jumped 1,100% in the US between 2025 and 2026 (Fintech Wrap Up, March 2026)

- Agentic fraud attacks: autonomous AI fraud agents probe defences, test synthetic identities, and adapt tactics at machine speed, scaling successful attack methods across thousands of targets simultaneously. Rule-based controls and human review cycles cannot match this pace

Effective AI fraud detection in 2026 must match the sophistication of AI-powered attacks. This means graph-based network analysis for synthetic identity detection, multimodal liveness verification that accounts for deepfake techniques, and, for institutions at higher risk, agentic oversight frameworks that investigate and respond to coordinated fraud campaigns at the same speed they are executed.
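Graph-based synthetic identity detection starts by linking applications that share identifiers a genuine individual would rarely share with strangers. The minimal Python sketch below uses union-find to group applications connected, directly or through a chain, by a shared phone number, device fingerprint, or other identifier. The field names and the cluster-size cut-off are hypothetical; production systems typically add weighted edges, time decay, and graph features fed into a model.

```python
from collections import defaultdict


def identity_clusters(applications: dict[str, dict[str, str]],
                      min_size: int = 3) -> list[set[str]]:
    """Group applications linked directly or transitively by a shared identifier."""
    parent = {app_id: app_id for app_id in applications}

    def find(node: str) -> str:
        while parent[node] != node:
            parent[node] = parent[parent[node]]  # path halving keeps trees shallow
            node = parent[node]
        return node

    def union(a: str, b: str) -> None:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    # Link every application to the first one seen with the same (field, value) pair.
    first_seen: dict[tuple[str, str], str] = {}
    for app_id, identifiers in applications.items():
        for pair in identifiers.items():
            if pair in first_seen:
                union(first_seen[pair], app_id)
            else:
                first_seen[pair] = app_id

    clusters: dict[str, set[str]] = defaultdict(set)
    for app_id in applications:
        clusters[find(app_id)].add(app_id)
    # Large clusters of "different" people sharing phones or devices merit review.
    return [members for members in clusters.values() if len(members) >= min_size]
```

In this design, two applications never need to share an identifier directly: A1 and A3 can land in the same cluster because each shares something with A2.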

The measurable outcomes from AI fraud detection in production are consistent: financial institutions deploying AI in fraud detection typically see a 25-30% reduction in fraud losses within 12-18 months. AI systems block suspicious transactions in under 50 milliseconds, fast enough to operate within real-time card authorisation workflows where the total window for intervention is 200 to 300 milliseconds.

Equally important is the false positive reduction. Traditional rule-based fraud systems generate enormous false positive volumes: in some cases, more than 90% of alerts prove non-suspicious on manual review. This is not just inefficient; declining legitimate transactions damages the customer experience, and reviewing the alerts creates massive analyst overhead. AI-driven systems consistently reduce false positive rates by 40% to 60% while maintaining or improving true positive detection rates.

The compliance consideration for AI fraud detection is primarily model explainability for disputed decisions. When a customer disputes a declined transaction or a fraud flag, the institution must be able to explain the basis for the decision in terms that the customer and regulators can evaluate. Building an explanation layer – which signals triggered the alert, which factors were most influential – is a deployment requirement, not an optional feature.

Use Case 2: Credit Risk Scoring and Underwriting Automation

Credit underwriting is where AI’s capability advantage over traditional approaches is most dramatic, and where the regulatory requirements are most demanding.

Traditional credit scoring uses a small number of variables (payment history, credit utilisation, length of credit history, credit mix, new credit inquiries) assembled into a score that was designed for interpretability and auditability at the cost of predictive accuracy. The FICO model is effective, but it was built for an era when computational power and data access were limited. It leaves significant predictive signal on the table.

AI credit models can use 1,600+ variables, including alternative data sources that traditional scoring ignores: bank transaction patterns (income stability, spending behaviour, bill payment timing), device and digital behaviour signals, employment verification data, and in some markets, rental payment history and utility payment records. These additional signals are particularly valuable for assessing borrowers with thin or no traditional credit files, an important market that conventional scoring systematically excludes.

The performance improvement from AI underwriting is substantial. Loan processing automation reduces approval times by 60-80% – compressing multi-day or multi-week underwriting processes to minutes for standard applications. Default prediction accuracy improves meaningfully over traditional models, with documented implementations showing 15% to 25% reduction in default rates at equivalent approval volumes. McKinsey’s 2024 credit decisioning research documents a 10–25% approval rate lift at constant default rates for thin-file segments using AI cash-flow underwriting models.

The regulatory requirements for AI credit models are, however, the most complex of any fintech AI use case:

Adverse action notice compliance requires that any declined application receive a notice explaining the specific reasons for the decision in terms the applicant can understand and act on. This is legally required under ECOA, FCRA, and equivalent international frameworks. AI models using hundreds of variables must be able to produce meaningful, specific adverse action reasons – not generic statements – for every declined decision.

Fair lending compliance requires pre-deployment testing and ongoing monitoring for disparate impact across protected demographic groups. A model that produces systematically different outcomes for applicants of different races, genders, or national origins – even using facially neutral variables – may violate fair lending law. The disparity testing methodology, documentation, and remediation process must be auditable. The EU AI Act (Annex III) classifies AI-based credit scoring as a high-risk AI use case requiring mandatory conformity assessment, human oversight, and detailed technical documentation. For US financial institutions with EU operations or EU customer exposure, this creates cross-jurisdictional compliance obligations for AI credit models.

Model validation requirements under SR 11-7 (US banking) and equivalent frameworks require independent validation of credit models before production deployment and ongoing performance monitoring post-deployment. For a fuller treatment of these requirements, our guide to MLOps best practices for regulated industries covers the validation framework in detail.
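SR 11-7 does not prescribe a specific drift metric, but the population stability index (PSI) is a widely used way to check whether a credit model’s production score distribution has shifted away from its development sample. A minimal Python sketch, assuming scores have already been bucketed into the same bins for both periods; the 0.10 and 0.25 bands are an industry rule of thumb, not a regulatory requirement.

```python
import math


def population_stability_index(expected_counts: list[float],
                               actual_counts: list[float],
                               eps: float = 1e-6) -> float:
    """PSI = sum((a_i - e_i) * ln(a_i / e_i)) over score bins, using bin proportions."""
    if len(expected_counts) != len(actual_counts):
        raise ValueError("both distributions must use the same bins")
    e_total, a_total = sum(expected_counts), sum(actual_counts)
    psi = 0.0
    for e_count, a_count in zip(expected_counts, actual_counts):
        e = max(e_count / e_total, eps)  # floor empty bins so the log stays defined
        a = max(a_count / a_total, eps)
        psi += (a - e) * math.log(a / e)
    return psi


def drift_status(psi: float) -> str:
    """Rule-of-thumb bands; each institution sets its own alert thresholds."""
    if psi < 0.10:
        return "stable"
    if psi < 0.25:
        return "monitor"
    return "investigate"
```

A development sample spread evenly across four score bands, compared with a production month skewed 10/20/30/40, gives a PSI of roughly 0.23, which falls in the "monitor" band.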

Use Case 3: AML, KYC, and Regulatory Compliance Automation

Anti-money laundering and know-your-customer obligations represent the single largest compliance cost centre for most fintechs and digital banks. Adoption reflects the scale of the problem: US firms alone deployed over 1,200 regulatory AI models in 2024, the majority concentrated in AML, KYC, fraud detection, and transaction screening.

The core problem with traditional AML systems is the false positive rate. Rule-based transaction monitoring systems generate enormous volumes of alerts, the majority of which prove non-suspicious on manual review. In some institutions, more than 90% of AML alerts require no action after review. This means compliance analysts spend the majority of their time processing alerts that will not lead to suspicious activity reports – an enormously expensive use of skilled human resources that also reduces focus on the genuine suspicious activity buried in the noise.

AI-driven AML systems address this in two ways. First, they use machine learning models trained on confirmed suspicious activity patterns to score transactions and customer behaviour more accurately, reducing the volume of alerts that reach human reviewers while maintaining detection rates for genuine suspicious activity. Second, they use network analysis to detect patterns that rule-based systems miss: structuring transactions across multiple accounts to stay below reporting thresholds, layering transactions through shell entities, and coordination patterns between accounts that individually appear normal but collectively exhibit suspicious network behaviour.
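To illustrate the structuring pattern described above, here is a deliberately simple Python sketch that links cash deposits across a customer’s accounts. It assumes deposits arrive as `(customer_id, account_id, date, amount)` tuples; the three-day window and three-deposit minimum are hypothetical parameters, and the output is a list of candidates for analyst review, not a determination. An ML-based system would learn such patterns rather than hard-code them.

```python
from collections import defaultdict
from datetime import date

# In the US, cash transactions above $10,000 trigger a Currency Transaction Report.
REPORTING_THRESHOLD = 10_000.00


def structuring_candidates(deposits: list[tuple[str, str, date, float]],
                           window_days: int = 3,
                           min_deposits: int = 3) -> dict[str, list[str]]:
    """Flag customers whose at-or-below-threshold cash deposits, spread across
    their accounts within a short window, together exceed the threshold.

    Returns {customer_id: [account_ids involved]} as candidates for review.
    """
    by_customer: dict[str, list[tuple[date, float, str]]] = defaultdict(list)
    for customer, account, day, amount in deposits:
        if amount <= REPORTING_THRESHOLD:  # deposits that individually avoid a report
            by_customer[customer].append((day, amount, account))

    flagged: dict[str, list[str]] = {}
    for customer, rows in by_customer.items():
        rows.sort()  # chronological order
        start = 0
        for end in range(len(rows)):
            # Advance the window start until it covers at most window_days calendar days.
            while (rows[end][0] - rows[start][0]).days >= window_days:
                start += 1
            window = rows[start:end + 1]
            total = sum(amount for _, amount, _ in window)
            if len(window) >= min_deposits and total > REPORTING_THRESHOLD:
                flagged[customer] = sorted({account for _, _, account in window})
                break
    return flagged
```

Grouping by customer rather than by account is the point of the design: each account on its own looks unremarkable.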

The measurable outcomes are significant. A leading global bank piloted an AI-based regulatory engine in 2025 and reduced compliance review time by 50%, cutting manual analyst workload by 60%. A Singaporean institution combined NLP and anomaly detection to achieve a 40% drop in transaction monitoring false positives while maintaining AML detection rates required by their regulatory programme.

Beyond AML, AI is transforming two other compliance workflows with documented ROI:

KYC and customer onboarding has been a significant operational bottleneck for digital financial services. Traditional KYC requires manual document review, database checks, and analyst judgment on edge cases. AI-powered KYC automation combines computer vision for document authentication, NLP for extracting and verifying information, and risk-scoring models that assess onboarding risk in real time. Over 40% of banks initiated pilots of AI-driven compliance automation in customer onboarding during 2024 alone. Automated KYC reduces onboarding time from days to minutes for standard customers while routing genuinely complex cases to human reviewers with pre-populated analysis. In 2026, deepfake-resistant liveness detection is a mandatory component of any KYC system: standard document-verification AI is no longer sufficient, given the 1,100%+ increase in deepfake-driven identity fraud documented in the US market.

Regulatory change management uses NLP models to monitor regulatory publications, track changes in requirements, and flag the internal policies and procedures that require updating in response to regulatory changes. For institutions operating across multiple jurisdictions – where regulatory changes in 30 or 40 markets need to be tracked simultaneously – this is a genuinely transformative capability. AI systems can now predict compliance breaches weeks before they occur by monitoring the gap between current practices and incoming regulatory requirements. In 2026, the EU’s PSD3 and PSR introduced stricter fraud prevention, customer authentication, and incident reporting requirements that NLP-based regulatory monitoring systems are now specifically tracking for affected institutions.

Use Case 4: Personalised Financial Products and Pricing

The fourth high-value fintech AI use case operates at the intersection of customer experience and revenue optimisation: using AI to tailor financial products, pricing, and offers to individual customer risk profiles, behaviour, and needs.

Traditional financial product pricing uses population-level risk segmentation: customers in a risk tier receive the same rate, regardless of individual signals that would justify differentiation. This leaves money on the table at both ends – pricing too high for the lower-risk customers within a tier (who switch to competitors) and too low for the higher-risk customers (who generate disproportionate losses).

AI-driven personalisation replaces population segmentation with individual risk models. Every customer’s rate, limit, and product recommendation reflects their specific risk profile, income stability indicators, and behavioural signals. The commercial outcome is improved margin from better risk-based pricing and improved retention from products that more closely match individual customer needs.
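A simplified way to see how individual risk feeds into price is the expected-loss build-up used in risk-based pricing: annual rate ≈ cost of funds + expected loss rate (probability of default × loss given default) + operating cost + target margin. The Python sketch below assumes all inputs are annual fractions; the figures are hypothetical, and real pricing also reflects competition, capital charges, and regulatory caps.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PricingInputs:
    cost_of_funds: float   # annual funding cost, e.g. 0.045 for 4.5%
    operating_cost: float  # annual servicing cost as a share of the balance
    target_margin: float   # annual return the lender requires


def risk_based_rate(prob_default: float, loss_given_default: float,
                    inputs: PricingInputs) -> float:
    """Simplified annual rate: funding + expected loss + costs + margin."""
    for name, value in (("prob_default", prob_default),
                        ("loss_given_default", loss_given_default)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    expected_loss_rate = prob_default * loss_given_default  # expected loss per unit of exposure
    return (inputs.cost_of_funds + expected_loss_rate
            + inputs.operating_cost + inputs.target_margin)
```

Under these hypothetical inputs (4.5% funding, 1% operating cost, 2% margin), two customers in the same traditional tier with one-year default probabilities of 1% and 4%, and 60% loss given default, would be priced at about 8.1% and 9.9% respectively.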

The regulatory consideration in AI-driven personalisation is the same fair lending and fair insurance compliance framework that applies to underwriting: differential pricing must not produce discriminatory outcomes across protected demographic groups. The individual risk model must demonstrate that pricing differences reflect genuine risk differences, not proxies for protected characteristics.

For financial services deploying AI-powered customer service as part of their personalisation layer, our guide to building secure enterprise chatbots with audit trails and compliance covers the security and compliance architecture.

Use Case 5: Agentic Fraud Investigation

The four use cases above describe AI systems that detect, score, flag, and price. The fifth describes AI systems that act: autonomous agents that do not wait for a human analyst to begin investigating suspicious activity, but execute the full investigative workflow themselves at machine speed, across every channel simultaneously.

This distinction defines 2026’s most consequential shift in fintech AI. Generative AI summarises data. Analytical AI identifies patterns. Agentic AI plans, executes, adapts, and files. It is the difference between a tool that writes a summary and a digital worker that investigates the case end-to-end.

The scale of the problem this addresses is structural. In traditional AML operations, up to 95% of alerts are false positives, and building a single Suspicious Activity Report can take four or more days. In 2026, fraud volumes are growing faster than analyst headcount can scale, with scams growing at 19.3%, nearly twice the rate of traditional bank fraud, so institutions face a capacity problem that adding human reviewers cannot solve. Agentic investigation addresses what better detection alone cannot: compressing the time each genuine case requires from hours to minutes, without reducing investigative quality or regulatory defensibility.

When a triggering alert fires, an agentic system does not create a queue entry. It begins working: pulling transaction history across linked accounts, cross-referencing against known fraud network graphs, querying sanctions and watchlist databases, correlating identity signals across channels, and assembling the evidence base required for regulatory submission. For clear false positives, the agent clears the transaction automatically. For complex cases, it assembles the full evidentiary trail before routing to a human investigator with a pre-built case file. Throughout, actions are logged, decisions are traceable, and policy guardrails remain intact.
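The workflow above can be sketched as a loop over evidence-gathering tools with a logged trace and a human hand-off. This is a minimal, hypothetical Python sketch: the tool names, the scoring function, and the `clear_below` cut-off are illustrative assumptions, not any vendor’s agent framework, and a real agent would plan which tools to call rather than run a fixed list.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class CaseFile:
    alert_id: str
    evidence: dict[str, Any] = field(default_factory=dict)
    actions: list[str] = field(default_factory=list)  # ordered trace of agent steps
    disposition: str = "open"


def investigate(alert_id: str,
                tools: dict[str, Callable[[str], Any]],
                score_fn: Callable[[dict[str, Any]], float],
                clear_below: float = 0.1) -> CaseFile:
    """Gather evidence, score it, then auto-clear or route to a human analyst."""
    case = CaseFile(alert_id)
    for name, tool in tools.items():
        case.evidence[name] = tool(alert_id)  # e.g. linked accounts, watchlist hits
        case.actions.append(f"queried:{name}")
    risk = score_fn(case.evidence)
    case.actions.append(f"scored:{risk:.2f}")
    if risk < clear_below:
        case.disposition = "auto_cleared"  # clear false positive
    else:
        # The agent prepares the case file; a human decides on SARs,
        # account restrictions, and law enforcement escalation.
        case.disposition = "routed_to_human"
    case.actions.append(f"disposition:{case.disposition}")
    return case
```

Because every step appends to `actions`, the case file doubles as the record of how the agent reached its disposition.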

SAR drafting automation is the highest-value output. Agents compile audit-ready documentation and draft regulator-ready SAR submissions from the assembled evidence; human analysts then review and approve these drafts rather than producing them from scratch. This design pattern preserves compliance while compressing analyst time per case.

The production outcomes are consistent: agentic AI reduces total investigation time by up to 60%, automating data gathering, transaction analysis, and case summarisation. Major institutions, including HSBC, Capital One, and DBS, report a 39% reduction in false positives using agentic fraud monitoring across 90% of daily transactions. McKinsey estimates agentic AI could reduce bank operational workloads by 50–60% across functions.

The compliance architecture for agentic systems requires particular attention to two requirements. Human-in-the-loop oversight for consequential decisions is non-negotiable: agentic systems prepare and recommend, while human analysts retain final authority on SAR submissions, account restrictions, and law enforcement escalations. And every agent action (every data source queried, every sub-analysis run, every draft produced) must generate an immutable record available for regulatory examination. An agentic system that cannot reconstruct its investigative chain is not deployable in a regulated financial services context, regardless of its detection accuracy.

For institutions earlier in their fintech AI programme, agentic fraud investigation belongs in the Month 12 and beyond phase, built on governance infrastructure established through fraud detection, AML, and credit underwriting deployments. For those who have built that foundation, it is the highest-leverage next step: compressing investigation time, improving SAR quality and consistency, and matching the operational tempo of the AI-powered threats that now define financial crime.

The Compliance Architecture That Makes Fintech AI Deployable

A fintech AI system that performs well technically but fails regulatory requirements is not deployable – and retrofitting compliance controls onto a system that was not designed for them is significantly more expensive and less effective than building them in from the start.

The compliance architecture that needs to be in place before production deployment of fintech AI:

Model explainability framework: For every model that makes or influences a decision about a customer, a mechanism must exist to produce a specific, meaningful explanation of that decision. SHAP values are the most widely accepted methodology for credit models, producing feature-level attribution that can be translated into plain-language adverse action notices.
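For a linear model (or a logistic model on the log-odds scale) with independent features, SHAP values have a closed form: the contribution of feature i is `w_i * (x_i - mean_i)`. The Python sketch below uses that special case to turn attributions into ranked adverse action reasons. The feature names, weights, population means, and reason wording are hypothetical; for tree ensembles or neural networks, an explainer from the open-source `shap` package would take the place of `linear_shap`.

```python
def linear_shap(weights: dict[str, float], x: dict[str, float],
                baseline: dict[str, float]) -> dict[str, float]:
    """Exact SHAP values for a linear model with independent features:
    contribution_i = w_i * (x_i - E[x_i])."""
    return {f: weights[f] * (x[f] - baseline[f]) for f in weights}


# Hypothetical mapping from model feature to plain-language notice text.
REASON_TEXT = {
    "debt_to_income": "High debt-to-income ratio",
    "credit_history_months": "Limited credit history",
    "recent_inquiries": "Number of recent credit inquiries",
    "revolving_utilisation": "High use of available revolving credit",
}


def adverse_action_reasons(weights: dict[str, float], x: dict[str, float],
                           baseline: dict[str, float], top_k: int = 2) -> list[str]:
    """Principal reasons: features that pushed predicted default risk up the most.

    Assumes the model outputs log-odds of default, so a positive contribution
    means the feature made this applicant look riskier than average.
    """
    contributions = linear_shap(weights, x, baseline)
    adverse = [(f, c) for f, c in contributions.items() if c > 0]
    adverse.sort(key=lambda item: item[1], reverse=True)
    return [REASON_TEXT[f] for f, _ in adverse[:top_k]]
```

Only positive contributions become reasons: a feature that made the applicant look safer than average should never appear on an adverse action notice.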

Bias testing and monitoring: Pre-deployment disparate impact testing across all protected demographic groups is required before production launch. Post-deployment monitoring on a defined cadence (quarterly minimum for high-risk models) tracks whether bias metrics remain within acceptable bounds as the model encounters real-world data distributions.
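A common first screen for disparate impact is the adverse impact ratio: the approval rate for a protected group divided by the rate for a reference group. The 0.8 cut-off below comes from the "four-fifths rule" in US employment-selection guidance and is often borrowed as a screening benchmark in fair lending analysis; it is not a legal safe harbour, and a full analysis would add statistical significance testing and controls for legitimate credit factors. Group labels and counts are hypothetical in this minimal Python sketch.

```python
def approval_rate(decisions: list[bool]) -> float:
    """Share of applications approved (True) in one group."""
    if not decisions:
        raise ValueError("group has no decisions")
    return sum(decisions) / len(decisions)


def adverse_impact_ratio(protected: list[bool], reference: list[bool]) -> float:
    """Protected-group approval rate divided by the reference-group approval rate."""
    reference_rate = approval_rate(reference)
    if reference_rate == 0:
        raise ValueError("reference group has no approvals; ratio undefined")
    return approval_rate(protected) / reference_rate


def passes_four_fifths(protected: list[bool], reference: list[bool],
                       threshold: float = 0.8) -> bool:
    """Screening check only: a failing ratio triggers deeper fair lending review."""
    return adverse_impact_ratio(protected, reference) >= threshold
```

Running the same check on each quarterly monitoring cut, not just once before launch, is what turns it into the post-deployment control described above.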

Model validation documentation: Independent validation of model performance, assumptions, and limitations must be completed and documented before production. The validation record must be retained and available to regulators on request.

Data lineage and governance: Every training dataset must be documented: what data was used, from what time period, with what preprocessing applied. This documentation supports both reproducibility (can you reproduce the model if asked?) and audit (was the training data appropriate and handled in compliance with consent and retention requirements?).
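One lightweight way to make the reproducibility question answerable is to store a content fingerprint of the exact training rows alongside the period and preprocessing steps. The Python sketch below assumes the training data fits in memory as JSON-serialisable rows; the `DatasetLineage` fields and the `consent_basis` label are hypothetical, and large datasets would normally be hashed file by file or handled by a data versioning tool.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DatasetLineage:
    dataset_name: str
    sha256: str                     # fingerprint of the exact rows used for training
    period_start: str               # ISO date of the earliest record
    period_end: str                 # ISO date of the latest record
    preprocessing: tuple[str, ...]  # ordered preprocessing steps applied
    consent_basis: str              # hypothetical label for the legal basis relied on


def fingerprint(rows: list[dict]) -> str:
    """Deterministic SHA-256 over JSON rows; key order within a row is normalised,
    row order is not."""
    canonical = json.dumps(rows, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def lineage_record(name: str, rows: list[dict], period: tuple[str, str],
                   steps: list[str], consent_basis: str) -> str:
    """Serialise a lineage entry suitable for storing next to the model artefact."""
    record = DatasetLineage(name, fingerprint(rows), period[0], period[1],
                            tuple(steps), consent_basis)
    return json.dumps(asdict(record), sort_keys=True)
```

If a regulator later asks whether a retrained model used the same data, recomputing the fingerprint gives a yes-or-no answer.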

Audit trail for decisions: Every model-influenced decision must generate an immutable record: what data was available to the model, what the model produced, what the human review outcome was (if applicable), and what the final decision was. This audit trail is the evidence base for regulatory examination.
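A common pattern for tamper-evident decision records is a hash chain, where each entry commits to the hash of the one before it. The Python sketch below is illustrative: the record fields mirror the four items just listed, but the class and field names are hypothetical, and a hash chain only makes edits detectable; genuine immutability still depends on write-once storage and access controls.

```python
import hashlib
import json
from typing import Any

GENESIS_HASH = "0" * 64


class DecisionAuditLog:
    """Append-only, hash-chained log: editing any past entry breaks verification."""

    def __init__(self) -> None:
        self._entries: list[dict[str, Any]] = []

    @staticmethod
    def _hash(prev_hash: str, body: str) -> str:
        return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()

    def append(self, record: dict[str, Any]) -> str:
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS_HASH
        body = json.dumps(record, sort_keys=True)
        entry_hash = self._hash(prev_hash, body)
        # Store a decoded copy so later changes to the caller's dict cannot leak in.
        self._entries.append({"record": json.loads(body),
                              "prev_hash": prev_hash, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        prev_hash = GENESIS_HASH
        for entry in self._entries:
            body = json.dumps(entry["record"], sort_keys=True)
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._hash(prev_hash, body):
                return False
            prev_hash = entry["hash"]
        return True
```

A typical entry would hold the model inputs, the model output, the human review outcome (or `None`), and the final decision.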

Deepfake-resistant identity verification layer: For any model involved in customer onboarding or identity-linked decisions, the verification layer must now be designed to detect AI-generated synthetic identities and deepfake document or biometric submissions. Standard liveness checks are no longer sufficient. Multimodal verification combining behavioural biometrics, document forensics, and network-level identity consistency checks is the 2026 standard for KYC-grade identity verification.

For the broader governance framework that sits above these technical controls, our guide to AI governance for LLMs and enterprise agents covers the policy, accountability, and oversight structures that regulated AI deployments require.

Implementation Sequence: Where to Start in Fintech AI

The correct implementation sequence for fintech AI programmes is not determined by which use case is most strategically interesting. It is determined by which use case has the most accessible data, the clearest ROI model, and the lowest regulatory complexity – which almost always means building confidence and governance discipline before tackling the most demanding use cases.

Months 1 to 3: Fraud detection pilot. Fraud detection and AML monitoring deliver the fastest time-to-value in fintech AI – they run on structured transaction data, have clear success metrics (fraud loss reduction, false positive rate reduction), and address an existing cost centre with a straightforward ROI calculation. Start with a contained fraud detection pilot on a defined transaction type or customer segment. Establish the explainability layer and the audit trail as part of the initial deployment, not as additions later. Ensure the fraud model’s detection architecture accounts for synthetic identity and deepfake-driven attacks, not only historical fraud patterns. For realistic cost expectations before committing to a fintech AI pilot, see our breakdown of what an AI proof of concept costs in 2026.

Months 3 to 6: AML/KYC automation. With the fraud detection infrastructure and governance discipline in place, AML automation is the natural second step. The data infrastructure overlaps significantly, and the compliance documentation approach established in the fraud detection phase provides the template. Target the highest-volume, most routine AML review workflows first: transaction monitoring alert triage before complex network analysis. Include deepfake-resistant liveness verification in the KYC automation component if this was not addressed in the Months 1 to 3 phase.

Months 6 to 12: Credit underwriting pilot. Credit underwriting has the highest ROI potential but the most demanding regulatory requirements. By Month 6, the governance framework established in fraud detection and AML phases provides a tested foundation for the more complex model validation, bias testing, and adverse action notice requirements of credit AI. Launch with a contained underwriting automation pilot on a well-defined loan product with clean historical data.

Month 12 and beyond: Personalisation and advanced capabilities. With governance discipline, model validation processes, and compliance architecture established across three deployed systems, the organisation has the infrastructure to expand into more complex applications: personalised pricing, alternative data credit models, agentic compliance investigation workflows, LLM-assisted SAR drafting, autonomous transaction monitoring escalation, and advanced behavioural analytics.

Frequently Asked Questions About AI in Fintech

What is the difference between AI fraud detection and rule-based fraud detection? Rule-based fraud detection applies fixed thresholds and conditions to every transaction – if a transaction meets defined criteria, it is flagged; if not, it passes. Rules are transparent but static: fraudsters learn and circumvent them. AI fraud detection learns the normal behaviour pattern for each individual account and flags deviations from that baseline, analysing hundreds of simultaneous signals, including behavioural and network patterns that rules cannot capture. AI detection also adapts continuously as fraud patterns evolve, without requiring manual rule updates. In 2026, AI fraud detection must also counter AI-powered attacks: synthetic identity fraud built by automated agents, deepfake-driven KYC bypass, and coordinated cross-channel fraud executed at machine speed. These attack vectors require graph-based identity analysis and multimodal verification capabilities that are distinct from standard transactional anomaly detection.

What does explainable AI mean in the context of credit decisions? Explainable AI in credit decisioning means the model can produce a specific, meaningful explanation of why a particular application received a particular decision – not just a score. Under ECOA and equivalent fair lending laws, declined applicants must receive adverse action notices stating the principal reasons for the decision in terms they can understand and act on. SHAP values are the most widely used technical approach for producing these explanations from complex ML models, attributing each decision to the contribution of specific input features.

How does AI reduce AML false positives without reducing detection rates? AI AML systems reduce false positives by replacing binary rule matching with probabilistic risk scoring. Rather than flagging every transaction that meets a rule threshold, the model scores each transaction or customer behaviour pattern against learned indicators of genuine suspicious activity. Alerts are generated only above a defined risk score threshold, with the threshold calibrated to maintain required true positive detection rates while reducing the volume of low-probability alerts requiring human review. Network analysis layers add detection of coordinated suspicious activity patterns that individual transaction rules miss entirely.
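The calibration step can be sketched as a search over candidate thresholds on labelled historical alerts: keep raising the threshold while recall on confirmed suspicious cases stays at or above the required level. The Python below is a minimal sketch; the `min_recall` target stands in for whatever detection standard the institution’s AML programme sets, and recall measured on past cases is only an estimate of future detection.

```python
def calibrate_threshold(scores: list[float], labels: list[bool],
                        min_recall: float) -> float:
    """Highest alert threshold whose recall on labelled history meets min_recall."""
    positives = sum(labels)
    if positives == 0:
        raise ValueError("need confirmed suspicious cases to measure recall")
    best = min(scores)  # the lowest threshold alerts on everything, so recall is 1.0
    for threshold in sorted(set(scores)):
        caught = sum(1 for s, y in zip(scores, labels) if y and s >= threshold)
        if caught / positives >= min_recall:
            best = threshold  # recall only falls as the threshold rises, so keep going
        else:
            break
    return best


def alert_volume(scores: list[float], threshold: float) -> int:
    """Number of cases that would reach a human reviewer at this threshold."""
    return sum(1 for s in scores if s >= threshold)
```

On ten scored historical cases with four confirmed suspicious ones, requiring full recall lets the threshold rise to the lowest-scoring true case, cutting reviewed alerts from ten to six without missing any known suspicious activity.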

What fair lending compliance requirements apply to AI credit models? AI credit models must meet the same fair lending requirements as traditional models: no disparate treatment of applicants based on protected characteristics, and no disparate impact producing systematically different outcomes for protected demographic groups without business necessity justification. For AI models, this requires pre-deployment disparate impact testing across all protected groups, documentation of the business necessity justification for any variables producing proxy effects, adverse action notice capability for declined decisions, and ongoing post-deployment monitoring of demographic outcome distributions. For financial institutions with EU market exposure, the EU AI Act’s Annex III classification of AI credit scoring as high-risk creates additional mandatory conformity assessment, human oversight, and technical documentation requirements.

What is RegTech, and how does AI fit into it? RegTech (Regulatory Technology) refers to the use of technology to automate and improve regulatory compliance processes. AI in RegTech covers automated transaction monitoring for AML and sanctions screening, AI-powered KYC and customer onboarding, NLP-based regulatory change monitoring, model risk management automation, and reporting automation for regulatory submissions. The common thread is using machine learning to process the data volumes and pattern recognition requirements of financial compliance at a scale and speed that manual processes cannot match cost-effectively.

How do fintech companies manage model risk for AI systems? Model risk management for fintech AI follows frameworks like SR 11-7 (US Federal Reserve) and equivalent international guidance. Core requirements include independent model validation (a function separate from the development team that validates performance, assumptions, and limitations before deployment), ongoing performance monitoring with defined alert thresholds for degradation, formal change management processes for material model updates, and documentation of the full model lifecycle from development through retirement. See our guide to MLOps best practices for regulated industries for the technical implementation of these requirements.
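Ongoing performance monitoring needs a quantitative drift signal with defined alert thresholds. One metric widely used for credit and risk scores is the Population Stability Index (PSI). It compares the distribution of current production scores with the validation-time baseline across fixed score bands. The sketch below assumes illustrative band edges and uses the commonly quoted rule-of-thumb cut-offs of 0.10 (investigate) and 0.25 (significant shift). These are industry conventions, not values set by SR 11-7, and each institution would calibrate its own.

```python
import math
from bisect import bisect_right


def bin_proportions(values, edges, floor=1e-6):
    """Share of values in each band defined by sorted interior edges (len(edges) + 1 bands)."""
    counts = [0] * (len(edges) + 1)
    for value in values:
        counts[bisect_right(edges, value)] += 1
    # Floor empty bands so the log term below stays finite.
    return [max(count / len(values), floor) for count in counts]


def population_stability_index(baseline, current, edges):
    """PSI = sum over bands of (current% - baseline%) * ln(current% / baseline%)."""
    expected = bin_proportions(baseline, edges)
    actual = bin_proportions(current, edges)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


def drift_status(psi, warn_at=0.10, alert_at=0.25):
    """Map PSI to a monitoring status using rule-of-thumb cut-offs (assumed, tune per model)."""
    if psi >= alert_at:
        return "alert"
    if psi >= warn_at:
        return "warn"
    return "stable"


# Hypothetical score bands and samples.
edges = [0.2, 0.4, 0.6, 0.8]                # score-band boundaries
baseline = [0.1, 0.3, 0.5, 0.7, 0.9] * 20  # validation-time score sample
shifted = [0.7, 0.9] * 50                   # production sample skewed to high scores
print(drift_status(population_stability_index(baseline, baseline, edges)))
print(drift_status(population_stability_index(baseline, shifted, edges)))
```

PSI detects shifts in the scored population only. It does not replace outcome-based monitoring, such as tracking realised default or fraud rates against predictions once labels mature.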

What is the threat posed by AI-powered fraud attacks in fintech, and how should institutions respond? In 2026, financial institutions face a qualitatively different fraud threat: AI-driven attacks that operate at machine speed and scale. These include synthetic identity fraud (AI agents that build realistic credit profiles over months before coordinated drawdown), deepfake-driven identity verification bypass (voice cloning and video manipulation that defeats standard liveness checks), and autonomous fraud agents that probe and adapt to defences faster than human review cycles can respond. Deepfake fraud grew 1,100% in the US between 2025 and 2026 (Fintech Wrap Up, 2026). Defending against these threats requires graph-based identity network analysis, multimodal liveness verification with deepfake detection, and, for high-risk institutions, agentic oversight frameworks that can investigate and respond to coordinated attacks at machine speed.
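At its simplest, graph-based identity network analysis links applications that share identifiers (phone number, device fingerprint, address) and looks for unusually large connected clusters. That pattern is often associated with synthetic identity rings that reuse a small pool of attributes across many fabricated profiles. The sketch below finds those clusters with a union-find (disjoint-set) structure. Field names, sample records, and the minimum cluster size are hypothetical, and the sketch uses exact matching only. Production systems typically add fuzzy entity resolution, weighted edges, and time windows. Shared attributes alone are a lead for investigation rather than proof of fraud, since households legitimately share addresses.

```python
from collections import defaultdict


class DisjointSet:
    """Union-find over application ids."""

    def __init__(self):
        self.parent = {}

    def find(self, item):
        self.parent.setdefault(item, item)
        while self.parent[item] != item:
            self.parent[item] = self.parent[self.parent[item]]  # path halving
            item = self.parent[item]
        return item

    def union(self, a, b):
        root_a, root_b = self.find(a), self.find(b)
        if root_a != root_b:
            self.parent[root_b] = root_a


def identity_clusters(applications, linking_fields, min_size=3):
    """Group applications that share any identifier value; return clusters of min_size or more."""
    links = DisjointSet()
    first_seen = {}  # (field, value) -> first application id carrying that value
    for app in applications:
        links.find(app["id"])  # register every application, even if it links to nothing
        for field in linking_fields:
            value = app.get(field)
            if not value:
                continue
            key = (field, value)
            if key in first_seen:
                links.union(first_seen[key], app["id"])
            else:
                first_seen[key] = app["id"]
    clusters = defaultdict(list)
    for app in applications:
        clusters[links.find(app["id"])].append(app["id"])
    large = [sorted(members) for members in clusters.values() if len(members) >= min_size]
    return sorted(large, key=len, reverse=True)


# Hypothetical application records.
applications = [
    {"id": "a1", "phone": "555-0101", "device": "d1", "address": "1 High St"},
    {"id": "a2", "phone": "555-0101", "device": "d2"},
    {"id": "a3", "device": "d2", "address": "9 Elm Rd"},
    {"id": "a4", "phone": "555-0199", "device": "d9", "address": "4 Oak Ave"},
    {"id": "a5", "device": "d5", "address": "9 Elm Rd"},
]
print(identity_clusters(applications, linking_fields=("phone", "device", "address")))
```

No single pair of records a1, a3, and a5 shares an identifier directly, yet they land in one cluster through chained links (phone, then device, then address). Per-transaction rules that compare records two at a time tend to miss exactly this kind of structure.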

Conclusion: Fintech AI Rewards Those Who Build Compliance In, Not On

The fintech organisations extracting the most value from AI in 2026 are not the ones that deployed fastest. They are the ones who built regulatory compliance into the architecture from the first deployment – and are now able to deploy additional AI capabilities on a proven governance foundation rather than rebuilding compliance controls for each new system.

The compliance architecture described in this guide is not a constraint on AI adoption. It is the infrastructure that makes AI adoption sustainable in regulated financial services. A fraud detection system that the compliance team trusts is worth far more than one they cannot explain to regulators. A credit model that satisfies fair lending requirements is the only kind that can scale to full commercial deployment.

In 2026, the urgency of this compliance-first approach is amplified by the AI-powered threat landscape. The institutions that built robust, explainable, governance-aligned AI systems in 2023 and 2024 are now extending those systems with deepfake detection, synthetic identity graph analysis, and agentic investigation workflows, building on a proven compliance foundation. Those who did not are rebuilding from scratch while the threat landscape accelerates around them.

Moweb’s AI & ML development and AI Security & Governance practices work with fintech companies, banks, and financial services organisations to build AI systems with compliance architecture as a first-class design requirement. Our teams have production experience with explainability frameworks, bias testing, model validation documentation, and regulatory-aligned audit trail design. Talk to us about your fintech AI programme.
