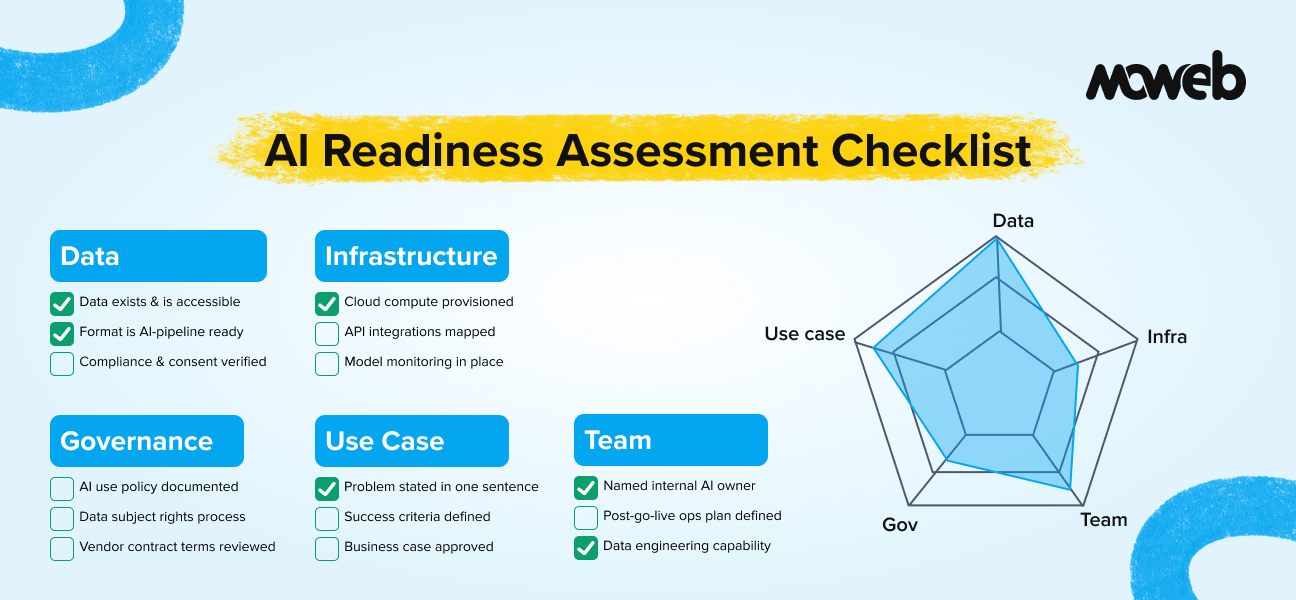

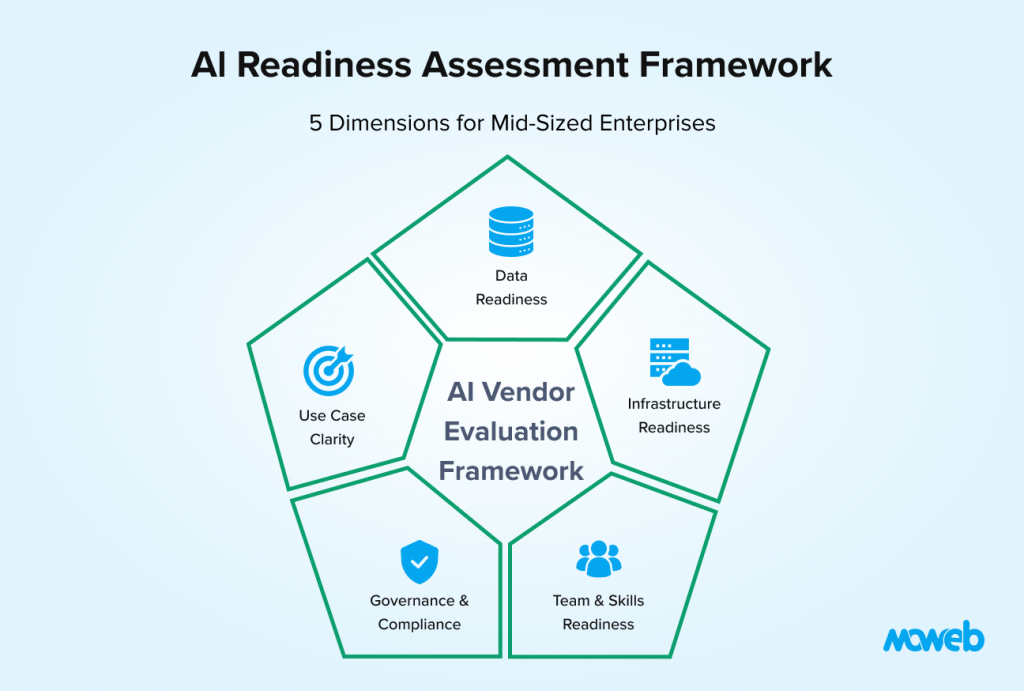

What is an AI readiness assessment? An AI readiness assessment is a structured evaluation of an organisation’s current state across the five dimensions that determine whether an AI initiative will succeed: data quality and availability, technology infrastructure, team capability, governance and compliance frameworks, and use case definition clarity. The output is an honest picture of where the organisation is ready to move forward, where gaps need to be addressed first, and what sequence of investments makes sense given the current state.

What does an AI readiness checklist cover for enterprises? A comprehensive AI readiness checklist for enterprises covers: whether usable, accessible, sufficiently clean data exists for the intended use case; whether the current technology infrastructure can support AI workloads; whether internal teams have the skills to implement, operate, or oversee AI systems; whether governance policies for data handling, model accountability, and compliance are in place; and whether the specific AI use case is defined clearly enough to build and evaluate against meaningful success criteria.

Running a structured AI readiness assessment before committing budget is the single most reliable predictor of whether a mid-sized enterprise’s AI initiative succeeds or stalls. Most mid-sized enterprises are no longer asking whether to adopt AI. The question now is: are we actually ready to do it properly?

That distinction matters more than it might seem. The organisations that are getting genuine value from AI in 2026 are not necessarily the ones that moved fastest. They are the ones that moved thoughtfully – that understood where their data was usable and where it was not, that had clear use cases before they started building, and that had enough internal governance to ensure AI outputs were trusted rather than ignored.

The scale of failure is significant: according to RAND Corporation’s 2025 analysis, 80.3% of AI projects fail to deliver intended business value, and Deloitte’s 2026 State of AI in the Enterprise report found that 42% of companies abandoned at least one AI initiative in 2025, with an average sunk cost of $7.2 million per abandoned project. The organisations that struggle share a common pattern: they hired a vendor, pointed them at a general goal like “improve customer experience with AI,” and discovered six months later that the data was messier than expected, the use case was too vague to evaluate against, and nobody internally had been empowered to make decisions about quality standards or failure modes.

This checklist is designed to help you avoid that second path. It covers the five dimensions of AI readiness that determine whether an initiative succeeds or stalls, with specific questions you can answer honestly before committing budget.

Work through this with your technical lead, your data or analytics team lead, and at minimum one business stakeholder who understands the target use case. If you cannot answer a large proportion of these questions, that itself is important information about what needs to happen before an AI project starts.

How to Use This Checklist

For each item, assess your current state honestly using one of three ratings:

- Ready: This is in place and would not be a constraint on an AI project right now.

- Partial: This exists but has significant gaps that would need to be addressed. Note what the gap is.

- Not Ready: This does not exist or is too immature to support an AI initiative right now.

At the end of each dimension, count your Ready, Partial, and Not Ready ratings. The pattern across all five dimensions gives you a composite readiness picture and a prioritised list of what to address before starting.

A score of mostly Ready across all five dimensions means you are well positioned to begin. A score of mostly Partial means you can likely proceed on a focused use case while addressing gaps in parallel. A score of mostly Not Ready in two or more dimensions means the most valuable investment right now is not an AI project – it is fixing the foundations that will determine whether any AI project succeeds.
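If you record your ratings in a spreadsheet or script, the tally can be automated. The following is a minimal sketch rather than a formal scoring model: the dimension names, sample ratings, and function names are hypothetical, and treating any mix of Ready and Partial dimensions as "proceed on a focused use case" is one interpretation of the rules above, not a fixed standard.

```python
from collections import Counter

# Ordered from least to most ready so that ties resolve to the more cautious rating.
RATINGS = ["Not Ready", "Partial", "Ready"]

# Hypothetical ratings: one entry per checklist item in each dimension.
assessment = {
    "Data": ["Partial", "Partial", "Not Ready", "Ready"],
    "Infrastructure": ["Ready", "Ready", "Partial"],
    "Team and skills": ["Ready", "Partial", "Partial"],
    "Governance": ["Partial", "Not Ready", "Partial"],
    "Use case clarity": ["Ready", "Ready", "Ready"],
}

def dominant_rating(items):
    """Return the most frequent rating; on a tie, the less ready one wins."""
    counts = Counter(items)
    return max(RATINGS, key=lambda rating: counts[rating])

def summarise(assessment):
    """Map each dimension to its rating counts and dominant rating."""
    return {
        dimension: {"counts": dict(Counter(items)), "dominant": dominant_rating(items)}
        for dimension, items in assessment.items()
    }

def recommend(summary):
    """Apply the interpretation rules from the text to the dominant ratings."""
    dominants = [entry["dominant"] for entry in summary.values()]
    if dominants.count("Not Ready") >= 2:
        return "Fix the foundations before starting an AI project"
    if all(rating == "Ready" for rating in dominants):
        return "Well positioned to begin"
    if "Not Ready" not in dominants:
        # Assumption: any mix of Ready and Partial is treated like "mostly Partial".
        return "Proceed on a focused use case while addressing gaps in parallel"
    return "Mixed picture: review the Not Ready dimension before deciding"

summary = summarise(assessment)
for dimension, entry in summary.items():
    print(f"{dimension}: {entry['dominant']} {entry['counts']}")
print(recommend(summary))
```

The tally is only a starting point for discussion. The notes you record against each Partial rating usually matter more than the counts themselves.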

Dimension 1: Data Readiness

Data is the single most common reason enterprise AI projects underperform or fail outright. Most organisations significantly overestimate how usable their data is for AI. This dimension helps you assess the gap between where your data actually is and where it needs to be.

Data availability

- The data needed for the target AI use case exists within the organisation (it is not hypothetical or aspirational)

- That data is accessible from a technical standpoint (it is not locked in a legacy system with no export capability or API)

- You know where the data lives and who is responsible for maintaining it

- The data is available in a format that can be ingested by an AI pipeline (structured database tables, accessible documents, clean exports)

Data quality

- The data has been audited for completeness – you know what percentage of records have missing values in critical fields

- The data is reasonably consistent in format and labelling across sources and time periods

- Duplicate records, outdated entries, and known corruptions have been identified (even if not yet cleaned)

- You understand the provenance of the data well enough to assess whether it is representative of the problem you are trying to solve
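A first-pass data quality audit does not need specialist tooling. The sketch below assumes your data can be exported as a list of records; the field names, sample rows, and helper functions are hypothetical and only illustrate the three checks above (missing values in critical fields, duplicates, and inconsistent labelling).

```python
from collections import Counter

# Hypothetical export from a source system: one dict per record.
records = [
    {"customer_id": "C001", "email": "a@example.com", "segment": "SMB"},
    {"customer_id": "C002", "email": "", "segment": "smb"},
    {"customer_id": "C003", "email": None, "segment": "Enterprise"},
    {"customer_id": "C001", "email": "a@example.com", "segment": "SMB"},
]

def is_missing(value):
    """Treat None and blank strings as missing."""
    return value is None or (isinstance(value, str) and not value.strip())

def missing_rate(records, field):
    """Percentage of records with a missing value in the given field."""
    missing = sum(1 for record in records if is_missing(record.get(field)))
    return 100.0 * missing / len(records)

def duplicate_keys(records, key):
    """Key values that appear on more than one record."""
    counts = Counter(record.get(key) for record in records)
    return sorted(k for k, n in counts.items() if n > 1 and k is not None)

def label_variants(records, field):
    """Labels that differ only by case or whitespace, grouped by normalised form."""
    groups = {}
    for record in records:
        value = record.get(field)
        if not is_missing(value):
            groups.setdefault(value.strip().lower(), set()).add(value)
    return {norm: sorted(variants) for norm, variants in groups.items() if len(variants) > 1}

for field in ("customer_id", "email", "segment"):
    print(f"{field}: {missing_rate(records, field):.1f}% missing")
print("Duplicate customer_id values:", duplicate_keys(records, "customer_id"))
print("Inconsistent segment labels:", label_variants(records, "segment"))
```

The output of a check like this is the evidence behind a Ready, Partial, or Not Ready rating. It identifies the problems without attempting to clean them.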

Data volume

- There is sufficient historical data to train or evaluate an AI model (the minimum varies by use case: ML classification typically needs thousands of labelled examples; RAG systems need a meaningful document corpus; LLM fine-tuning needs domain-specific text in volume)

- The data is recent enough to be relevant (data that was accurate three years ago may not represent current patterns)

Data governance

- You know which data can legally and contractually be used for an AI project (customer data with consent limitations, third-party licensed data with usage restrictions, and employee data with privacy obligations are common constraints)

- There is a defined process for requesting data access and tracking who has accessed what

- Sensitive data has been identified and classified (PII, financial data, health data, commercially sensitive data)

Honest assessment prompt: If you asked your data team right now to extract a clean, representative dataset for a specific AI use case and deliver it within two weeks, could they do it? If the honest answer is “probably not without significant effort,” data readiness is your first constraint.

Dimension 2: Infrastructure Readiness

AI workloads have different infrastructure requirements from traditional software. This dimension assesses whether your current technology environment can support the AI capabilities you are considering.

Compute and cloud

- You have access to cloud compute resources sufficient for AI workloads (GPU instances for model training if required, scalable CPU instances for inference)

- You have an established cloud provider relationship (AWS, Azure, or Google Cloud) or a credible plan to establish one

- Your current infrastructure team has experience provisioning and managing cloud AI services or is willing to upskill

Data infrastructure

- Your data is stored in systems that can be accessed programmatically (APIs, database connections, or structured export processes exist)

- You have or can quickly establish a data warehouse or data lake where processed data can be centralised for AI use

- ETL or data pipeline tooling exists (or can be procured) to move data from source systems to AI infrastructure

Integration capability

- The enterprise systems the AI project needs to connect to (CRM, ERP, ticketing, document stores, email) have APIs or integration points that can be used

- Your IT team understands the authentication and access control requirements for those integrations

- There is no active moratorium on new system integrations in your IT governance process that would block the project

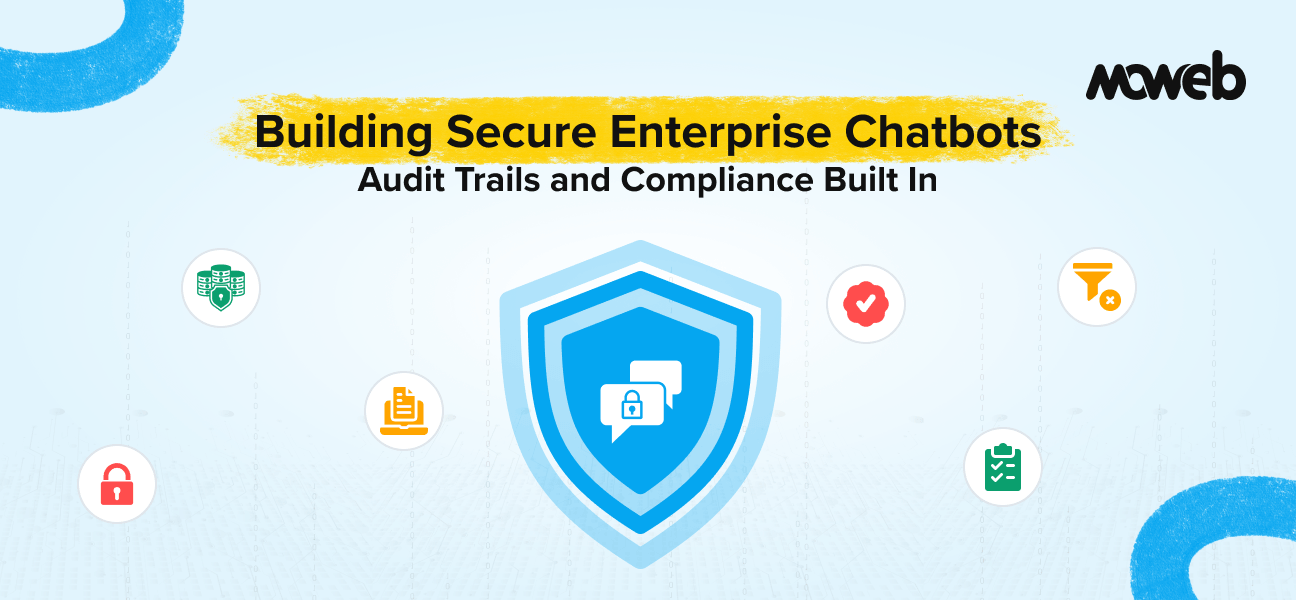

Security and network

- Your network security policies permit data to be processed in the cloud environment required (this is often a constraint for highly regulated industries or government-adjacent organisations)

- Encryption at rest and in transit is standard practice for your data infrastructure

- You have experience with managing access controls and audit logging in your current infrastructure

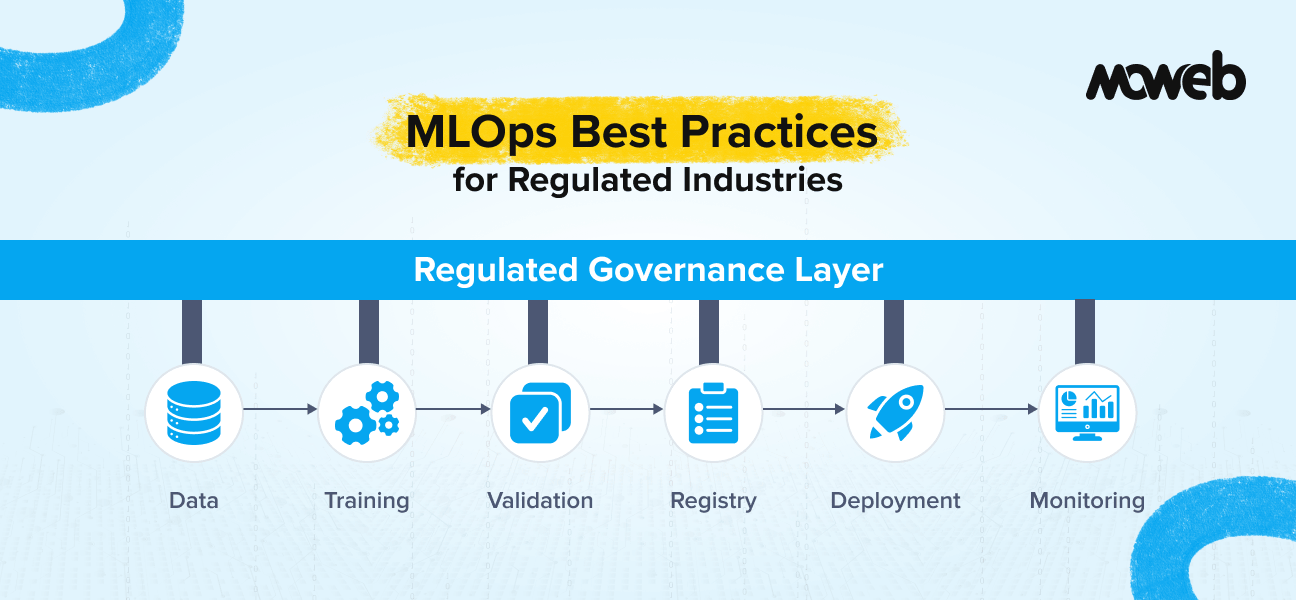

MLOps and model lifecycle

- A model versioning and deployment pipeline can be established for your AI project (this may use platforms such as MLflow, SageMaker Pipelines, Azure ML Pipelines, or Vertex AI Pipelines, or a simpler deployment pipeline appropriate to the scale of the use case)

- Model monitoring infrastructure exists or can be provisioned to track performance degradation and data drift post-deployment (AI models degrade as real-world data patterns shift, and unmonitored models are a common source of silent production failures)

- A rollback mechanism for deployed models is defined: if a model update introduces a quality regression, you have a tested process for reverting to a prior version without extended service disruption
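To make "data drift" concrete, one widely used check is the population stability index (PSI), which compares the distribution of a feature at training time with its distribution in production. The sketch below is a plain-Python illustration, not a substitute for a monitoring platform: the function name, the equal-width binning, and the sample data are assumptions, and the commonly quoted alert thresholds (around 0.1 for moderate drift and 0.25 for significant drift) are a rule of thumb that should be tuned per use case.

```python
import math

def population_stability_index(baseline, current, bins=10, eps=1e-6):
    """PSI between two numeric samples, using equal-width bins over the baseline range."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # avoid a zero width when all baseline values are equal

    def proportions(values):
        counts = [0] * bins
        for value in values:
            index = int((value - lo) / width)
            index = min(max(index, 0), bins - 1)  # clamp out-of-range values into the edge bins
            counts[index] += 1
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(count / len(values), eps) for count in counts]

    expected = proportions(baseline)
    actual = proportions(current)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))

# Hypothetical feature values: training-time baseline versus a shifted production sample.
baseline = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]

print(f"PSI, identical data: {population_stability_index(baseline, baseline):.3f}")
print(f"PSI, shifted data:   {population_stability_index(baseline, shifted):.3f}")
```

A scheduled job that computes a metric like this for each important input feature, and alerts when it crosses an agreed threshold, is often enough monitoring for a first production deployment.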

Honest assessment prompt: Have you successfully integrated a new cloud-based service into your enterprise data environment in the last twelve months? If so, you have the infrastructure baseline. If not, infrastructure readiness may need attention before an AI project starts.

Dimension 3: Team and Skills Readiness

AI projects fail when organisations do not have the right internal capability to commission, oversee, and eventually operate the systems being built. This does not mean you need a full internal AI team. It does mean you need specific roles covered.

Internal ownership

- There is a named internal owner for the AI initiative – someone with the authority to make decisions about scope, quality standards, and go/no-go milestones

- That person has sufficient technical fluency to engage meaningfully with a delivery team on architecture and quality decisions (they do not need to be an engineer, but they need to understand enough to ask the right questions)

- There is a named business stakeholder who has committed to participating in use case definition, evaluation, and acceptance decisions

Data and engineering capability

- You have internal data engineering or analytics capability (even one person) who understands your data well enough to support a vendor in accessing and preparing it

- You have internal engineering capability who can review vendor code and architecture decisions, manage system access and credentials, and handle basic troubleshooting after deployment

- If you are planning to build internal AI capability over time, there is a budget and intent to develop it (not just a vague aspiration)

Operational readiness

- Your team understands that AI systems need ongoing monitoring and maintenance after go-live, and there is a plan (or willingness to plan) for who handles that

- There is an established process for internal teams to report quality issues with AI outputs and have them investigated and resolved

- Change management has been considered – the teams whose workflows will change when AI is deployed have been identified and will be involved in the implementation

Honest assessment prompt: If your AI vendor went dark tomorrow and you had to operate the deployed system yourself for thirty days, could you? If the honest answer is no, operational readiness is a gap to address in the project design.

Dimension 4: Governance and Compliance Readiness

Governance gaps are the most common cause of AI deployment delay in regulated industries and enterprise environments. Addressing them after a system is built is far more expensive than addressing them during design.

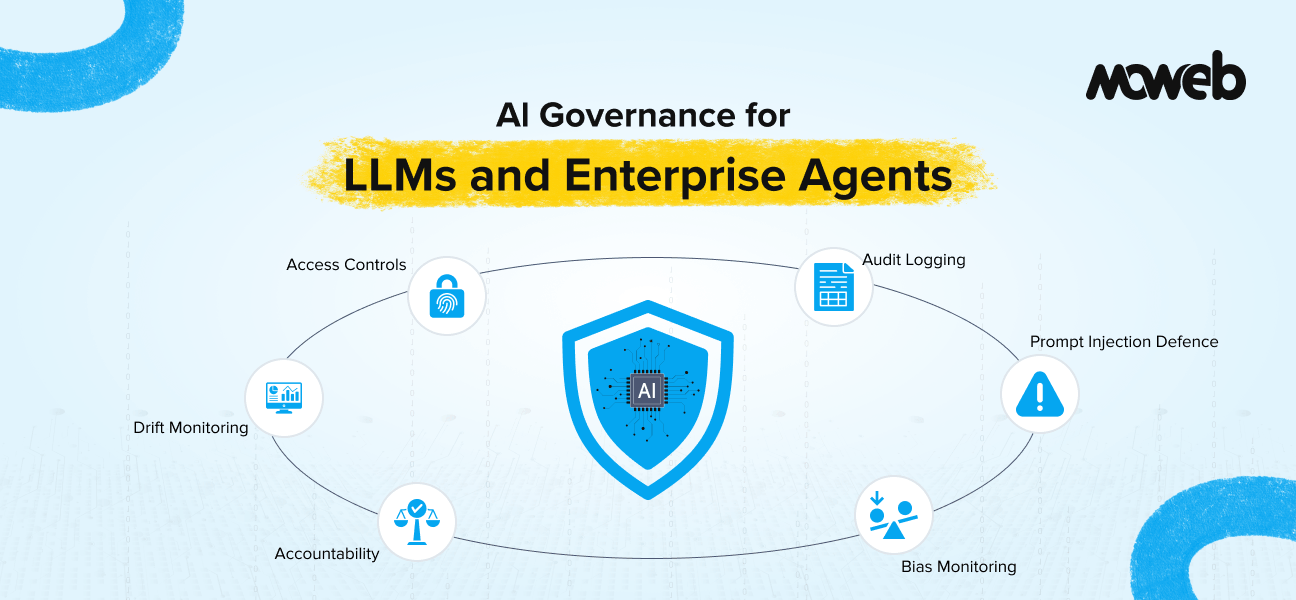

AI governance policy

- Your organisation has or is actively developing a policy on acceptable AI use (what it can and cannot be used for, what oversight is required)

- There is a defined process for approving new AI use cases before development begins

- Senior leadership has visibility of and accountability for AI initiatives underway

Data compliance

- You have assessed which data protection regulations apply to the AI use case you are considering (GDPR for EU-scope data, CCPA for California-scope data, HIPAA for US healthcare data, POPIA for South Africa-scope data)

- You understand whether the AI use case involves personal data and have assessed the legal basis for processing it

- You have a mechanism for handling data subject requests (right of access, right to deletion) for data that may be included in AI training or retrieval systems

Model accountability

- You have thought through who is responsible if the AI system produces an incorrect output that leads to a harmful business decision

- There is a process for human review of AI outputs in high-stakes decisions (credit decisions, medical information, legal guidance, performance evaluations)

- You have considered whether the AI system’s decisions or outputs need to be explainable to regulators, customers, or internal audit

Vendor management

- Your procurement and legal teams are prepared to review AI-specific contract terms (IP ownership, data handling, liability for model outputs)

- You have a process for assessing the security credentials of AI vendors before giving them access to enterprise data

- There is a clear exit process in your vendor contracts – you own the code, models, and data produced during an engagement, and you can operate independently at contract end

Honest assessment prompt: Could you explain your AI governance approach to your compliance team or an external auditor today? If the honest answer is “we would need several weeks to prepare for that conversation,” governance readiness needs attention before a production AI deployment.

Dimension 5: Use Case Clarity

Vague use cases are the most avoidable cause of AI project failure. A team that starts building without a precise definition of what they are building and how they will know when it works will discover, weeks or months in, that different stakeholders had different things in mind. This dimension ensures your use case is defined clearly enough to build against.

Problem definition

- You can state the problem the AI system will solve in a single, specific sentence (not “improve customer experience” but “reduce time for tier-1 support agents to find relevant policy answers from an average of 8 minutes to under 90 seconds”)

- You understand what the current state looks like (how the task is done today, by whom, how long it takes, what the error rate or quality issue is)

- You have identified who the primary users of the AI system will be and have spoken to at least some of them about their actual pain points

Success criteria

- You have defined specific, measurable success criteria before building begins (not “it should be accurate” but “answers must be accurate on 85% of test queries drawn from real support tickets”)

- You know what a bad outcome looks like and have thought through what happens if the system does not meet the success criteria

- There is agreement among stakeholders on the success criteria – it is not just the technical team’s definition
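A success criterion such as "accurate on 85% of test queries" can be checked mechanically once a test set exists. The sketch below is illustrative: the function names and the sample result (90 correct out of 100) are hypothetical. It also reports a Wilson score lower bound, a standard way to show how much uncertainty a small test set leaves around the measured accuracy.

```python
import math

def wilson_lower_bound(correct, total, z=1.96):
    """Lower end of the Wilson score interval for a proportion (z=1.96 is roughly 95%)."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = correct / total
    denominator = 1 + z**2 / total
    centre = (p + z**2 / (2 * total)) / denominator
    margin = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denominator
    return centre - margin

def evaluate(correct, total, threshold=0.85):
    """Compare measured accuracy on a test set with the agreed success threshold."""
    lower_bound = wilson_lower_bound(correct, total)  # also validates total
    accuracy = correct / total
    return {
        "accuracy": accuracy,
        "meets_threshold": accuracy >= threshold,
        "lower_bound": lower_bound,
    }

# Hypothetical PoC result: 90 of 100 test queries answered correctly.
result = evaluate(correct=90, total=100)
print(f"Accuracy: {result['accuracy']:.1%}")
print(f"Meets 85% threshold: {result['meets_threshold']}")
print(f"95% lower bound: {result['lower_bound']:.1%}")
```

In this example the measured accuracy clears the threshold, but the lower bound falls below it. Stakeholders should agree in advance whether the criterion applies to the point estimate or to the lower bound, and how many test queries are needed for the result to be trusted.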

Scope boundaries

- You have defined what the AI system will and will not do (the explicit boundaries of the use case)

- You have identified the edge cases that are most likely to cause problems and have a plan for how they will be handled (graceful fallback, human escalation, or explicit out-of-scope messaging)

- The use case is narrow enough to be delivered and evaluated in a reasonable timeframe (6 to 12 weeks for a PoC, 3 to 6 months for a first production deployment)

Business case

- You have estimated the business value of the use case in concrete terms (hours saved per week, error reduction percentage, cost avoidance, revenue impact)

- That business case has been reviewed and accepted by a budget holder who can approve the investment

- You have thought through the adoption pathway – what would need to change in workflows, incentives, or training for the AI system to actually be used once it is deployed?
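The business value estimate is usually simple arithmetic once the problem statement is specific. The sketch below reuses the support example from the problem definition above (8 minutes reduced to 90 seconds per lookup). The agent count, lookups per day, and working days are hypothetical placeholders to replace with your own figures.

```python
def weekly_hours_saved(before_seconds, after_seconds, lookups_per_week):
    """Hours saved per week if each lookup drops from before_seconds to after_seconds."""
    return (before_seconds - after_seconds) * lookups_per_week / 3600

# Figures from the example problem statement: 8 minutes down to 90 seconds per lookup.
before_seconds = 8 * 60
after_seconds = 90

# Hypothetical volumes: replace with measured values from your own operation.
agents = 20
lookups_per_agent_per_day = 15
working_days_per_week = 5
lookups_per_week = agents * lookups_per_agent_per_day * working_days_per_week

hours = weekly_hours_saved(before_seconds, after_seconds, lookups_per_week)
print(f"Lookups per week: {lookups_per_week}")
print(f"Estimated hours saved per week: {hours:.1f}")
```

An estimate like this assumes full adoption and that the target time is actually achieved, so it is an upper bound. Discounting it for realistic adoption gives a more defensible figure for the budget holder.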

Honest assessment prompt: Could you write a one-page brief for a vendor that describes exactly what you need built, how you will evaluate it, and what success looks like? If not, use case clarity work is needed before an AI project engagement begins.

Interpreting Your Results: What to Do With Your Assessment

Once you have worked through the checklist, you should have a clear picture of where you are. Here is how to interpret common patterns:

Strong across all five dimensions: You are well positioned to move forward on a focused AI project. Prioritise use case scope and vendor selection. A proof-of-concept is a reasonable first step. See our guide on what an AI proof of concept costs in 2026 for realistic budget expectations.

Strong on data and use case, gaps on infrastructure and governance: This is a common profile for mid-sized enterprises with good data practices but less mature cloud and compliance infrastructure. The right approach is usually to run a contained PoC on existing infrastructure while building the infrastructure and governance foundations in parallel, rather than waiting until everything is perfect before starting.

Gaps on data quality: This is the most common constraint and the hardest to shortcut. Data quality work needs to happen before AI development starts, not during it. An AI strategy engagement focused on data readiness – auditing what you have, identifying gaps, and designing the remediation – is often the right first investment.

Gaps in use case clarity: The fastest and cheapest fix. Run a facilitated use case definition workshop with technical and business stakeholders before engaging a vendor. A half-day workshop with the right people can take a vague idea to a well-defined brief.

Gaps in team capability: Consider an embedded delivery model where the vendor works alongside your team and explicitly includes knowledge transfer, rather than a traditional project delivery model where you receive a system you then have to operate without context.

Gaps on governance: Engage your legal, compliance, and IT security stakeholders early. Frame the governance work as enabling the AI initiative, not blocking it. A governance framework developed during a PoC is much easier to build than one retrofitted onto a production system.

Moweb’s AI Strategy & Consulting practice includes a structured readiness assessment as a standard first phase of enterprise AI engagements. If you would like to work through your organisation’s readiness with an experienced team, get in touch to start that conversation.

Frequently Asked Questions About AI Readiness for Enterprises

How long does a proper AI readiness assessment take? A structured internal assessment using a checklist like this one typically takes two to four weeks for a mid-sized enterprise, depending on how much cross-functional alignment is needed and how accessible the relevant information is. A formal external AI readiness assessment delivered by a specialist firm typically takes three to six weeks and includes data quality auditing, stakeholder interviews, infrastructure review, and a written recommendations report. The internal self-assessment is a valuable starting point; an external assessment adds independent validation and is particularly useful before a significant AI investment.

What is the minimum data requirement to start an AI project? There is no universal minimum, but rough benchmarks by use case type are: for a RAG knowledge retrieval system, a corpus of at least 200 to 500 documents that are representative of the queries you expect; for a supervised ML classification model, at least 1,000 to 5,000 labelled examples per class (more for complex or imbalanced problems); for LLM fine-tuning, typically thousands to tens of thousands of high-quality domain-specific examples. If your data falls well below these thresholds, a data collection and preparation phase before model development is needed. Trying to build on insufficient data produces a system that appears to work in testing and fails in production.

Can we start an AI project while still addressing data quality gaps? Sometimes. The right answer depends on the severity of the gaps. Minor quality issues (some missing values, inconsistent capitalisation, minor formatting inconsistencies) can usually be addressed as part of the data preparation phase of an AI project without significant delay. Fundamental quality issues (widespread incorrect labels, major gaps in coverage, data that does not actually reflect the real-world phenomenon you are trying to model) cannot be addressed mid-project without rebuilding from scratch. An honest data assessment at the start of a project surfaces which category your data falls into.

What is the most common AI readiness gap in mid-sized enterprises? Based on patterns across enterprise AI projects, the three most common gaps in mid-sized enterprises are: data that is less clean and accessible than initially assumed; governance frameworks that exist in policy documents but are not operationalised; and use cases that are too broad to evaluate against specific success criteria. Interestingly, technical infrastructure and team skills are less commonly the binding constraint in mid-sized enterprises than the above three, because cloud infrastructure is accessible and skills can be supplemented through vendor partnerships.

How do we prioritise which AI use case to start with? The best first AI use case for a mid-sized enterprise has three characteristics: the data for it already exists and is reasonably clean; the problem it solves is clearly defined and measurable; and the risk of a suboptimal output is low (meaning a human can review and correct AI outputs without significant business consequence). Avoid starting with a use case that involves customer-facing outputs, safety-critical decisions, or heavily regulated data on your first AI project. Build confidence and internal capability on a lower-stakes use case first.

Should we hire an internal AI team or work with an external AI development company? For most mid-sized enterprises, the right answer for initial AI projects is to work with an external AI development company while building limited internal AI expertise in parallel. A fully internal AI team requires 12 to 18 months to recruit and reach delivery maturity, and the AI tooling landscape is moving fast enough that even experienced teams need ongoing learning investment. An experienced external partner can deliver a first production AI system within the quarter while your internal team learns through participation. As AI becomes more central to your operations, gradually shifting toward more internal ownership makes sense – but it is rarely the right starting point for a mid-sized enterprise.

How do we know when we are ready to move from PoC to production? You are ready to move from PoC to production when: the PoC has demonstrated output quality that meets your pre-defined success criteria on representative real data; the production architecture has been documented and reviewed; a governance framework for the production system has been defined (ownership, monitoring, escalation paths, access controls); internal stakeholders have been trained to use and oversee the system; and budget for the production build and ongoing operations has been approved. Moving to production before all of these conditions are met is a reliable path to a production system that nobody trusts.

Conclusion: Readiness Is Not About Perfection – It Is About Honest Assessment

No mid-sized enterprise is perfectly ready for AI across all five dimensions before their first project. That is not the standard you are working toward.

The standard is honest self-knowledge: understanding where you are strong enough to proceed, where you have manageable gaps to address in parallel, and where you have fundamental constraints that need to be resolved before any AI investment makes sense.

The organisations that get lasting value from AI are the ones that answered these questions honestly before they started spending. They chose use cases that matched their data reality. They fixed data quality before building models. They defined success criteria before writing code. They built governance alongside the system, not after it.

The checklist in this guide will not tell you what AI to build. But it will tell you whether you are ready to build it well.

If you would like help working through your organisation’s AI readiness in a structured way, Moweb’s AI Strategy & Consulting team facilitates readiness assessments as a first step in enterprise AI engagements globally, from the USA and UK to Tanzania and Australia. Talk to us here.
