How are US mid-market companies adopting AI in operations in 2026? US mid-market companies in 2026 are primarily adopting AI in three operational areas: internal knowledge management and productivity (using RAG-based assistants to reduce information search time), customer support and service operations (using AI triage and chatbots to reduce handling time and increase first-contact resolution), and process automation in finance, HR, and supply chain operations (using AI agents to automate repetitive workflows). The pattern across successful deployments is consistent: they start with a single, clearly defined use case on clean data, demonstrate measurable ROI within the first quarter, and use that success to build internal confidence for broader adoption.

What is stopping US mid-market companies from getting value from AI? The three most common barriers to AI value at US mid-market companies are: pilot purgatory (the state where AI experiments show promising results but never reach production because the conditions for real deployment were not designed in from the start), data quality gaps that surface only after development begins, and governance and accountability structures that are absent when compliance or quality questions arise. According to the 2026 WRITER Enterprise AI survey, 79% of organisations face challenges in adopting AI – a double-digit increase from 2025 – despite the majority investing over $1 million annually in AI technology.

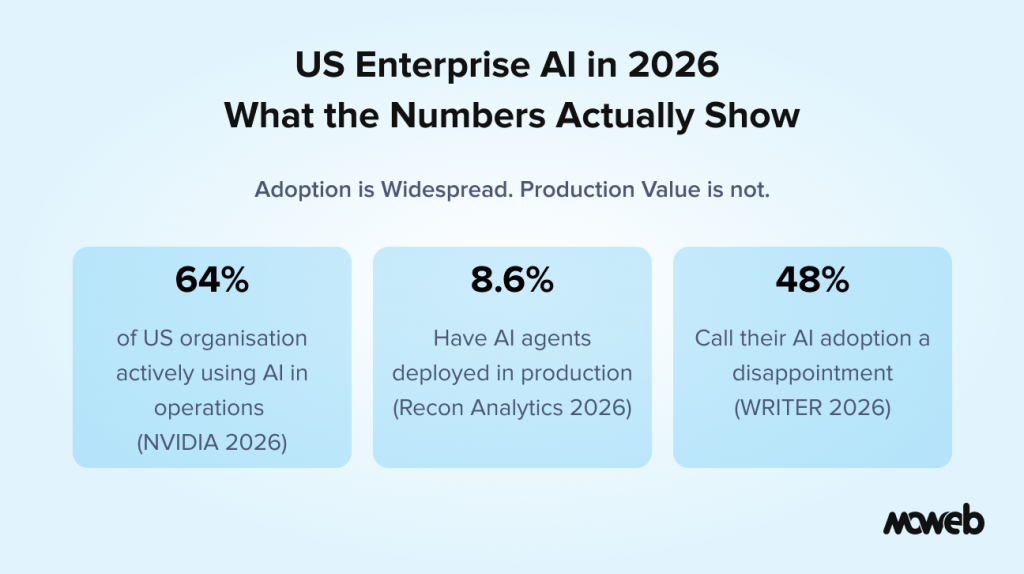

The headline numbers on enterprise AI in 2026 are striking. According to Deloitte’s State of AI in the Enterprise report, worker access to AI rose 50% in 2025 alone. NVIDIA’s 2026 State of AI report found that 64% of organisations are now actively using AI in their operations. Two-thirds of organisations report improved productivity and efficiency as a direct result.

But headline adoption numbers mask a more complicated story, especially for US mid-market companies – organisations with 200 to 2,000 employees, substantial operations, and real AI ambitions, but without the AI-dedicated infrastructure budgets of the Fortune 500 or the agility of a ten-person startup.

The reality in the US mid-market is one of significant momentum paired with significant friction. Many companies have deployed AI tools. Fewer have deployed AI systems that are producing reliable, measurable operational value. And a meaningful portion are stuck in what the industry now calls “pilot purgatory” – running controlled experiments that never make it to production because the foundational conditions for a real deployment were not in place when the project started.

This piece looks honestly at what is working, what is not, and what separates the US mid-market companies that are extracting genuine operational value from AI from those still waiting for their pilots to graduate.

US Mid-Market AI Adoption in 2026: What the Data Actually Shows

It is worth being specific about where the US mid-market is in the adoption cycle, because the picture varies significantly by company size, sector, and operational maturity.

According to data from the Federal Reserve’s monitoring of AI adoption in the US economy, adoption rates are meaningfully higher at larger firms – but the gap between mid-market and enterprise is closing faster than many expected. The most important trend is not whether companies are using AI but whether they are using it in ways that actually change operational outcomes.

The WRITER Enterprise AI survey from early 2026 puts the challenge sharply: nearly half (48%) of organisations call their AI adoption a massive disappointment, up from 34% the previous year. Few report significant ROI from generative AI (29%) or AI agents (23%). For context on what successful deployments can achieve, a Forrester Total Economic Impact study commissioned by WRITER found that enterprises actively using their platform saw an average 333% ROI with a six-month payback period, illustrating the gap between average adoption results and what well-executed deployments deliver.

What the same data shows, however, is that the minority of organisations that have successfully operationalised AI – moved from individual productivity tools to enterprise-wide systems that change how work gets done – are compounding their advantage at a rate that competitors cannot easily replicate. The gap between AI leaders and AI laggards in the US mid-market is widening, and 2026 appears to be the year that gap becomes commercially significant.

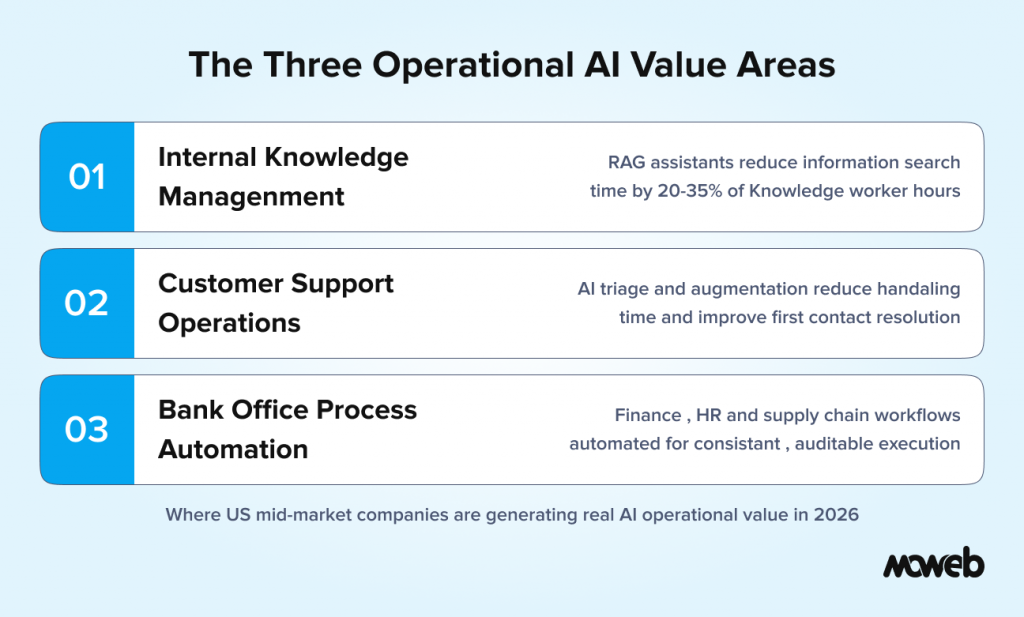

The Three Operational Areas Where US Mid-Market Companies Are Getting Real AI Value

Across the US mid-market, three operational areas are seeing disproportionate AI value creation in 2026. These are not theoretical use cases – they are the patterns that appear consistently when you talk to operations leaders at companies that have moved past pilots.

Internal Knowledge Management and Productivity

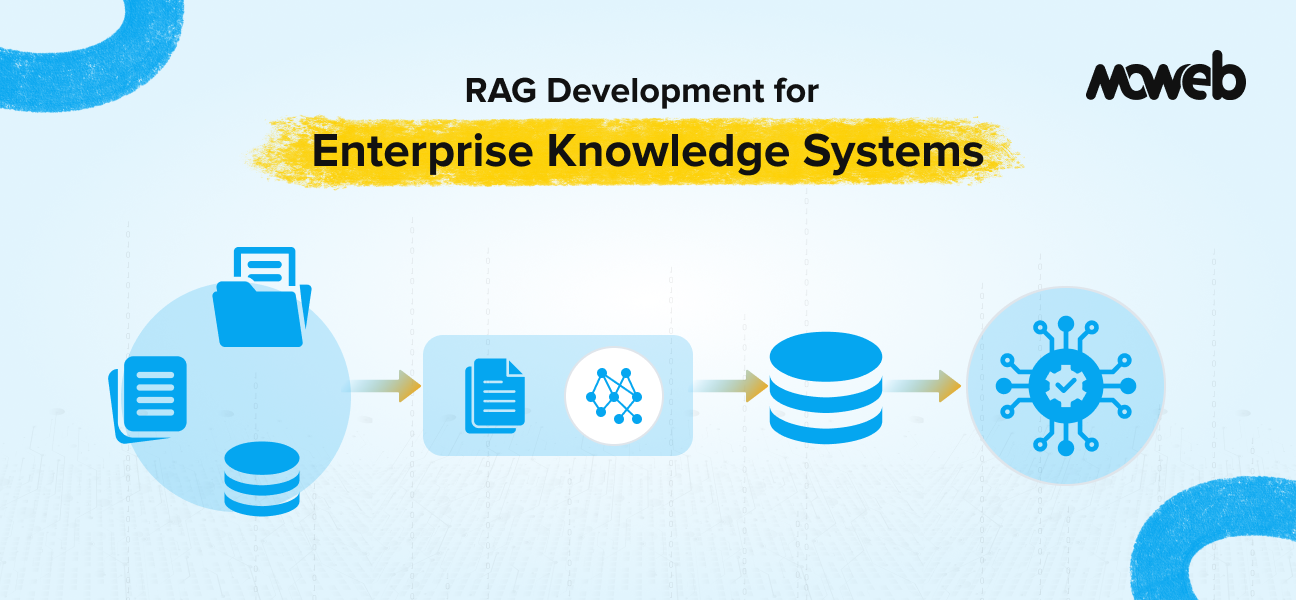

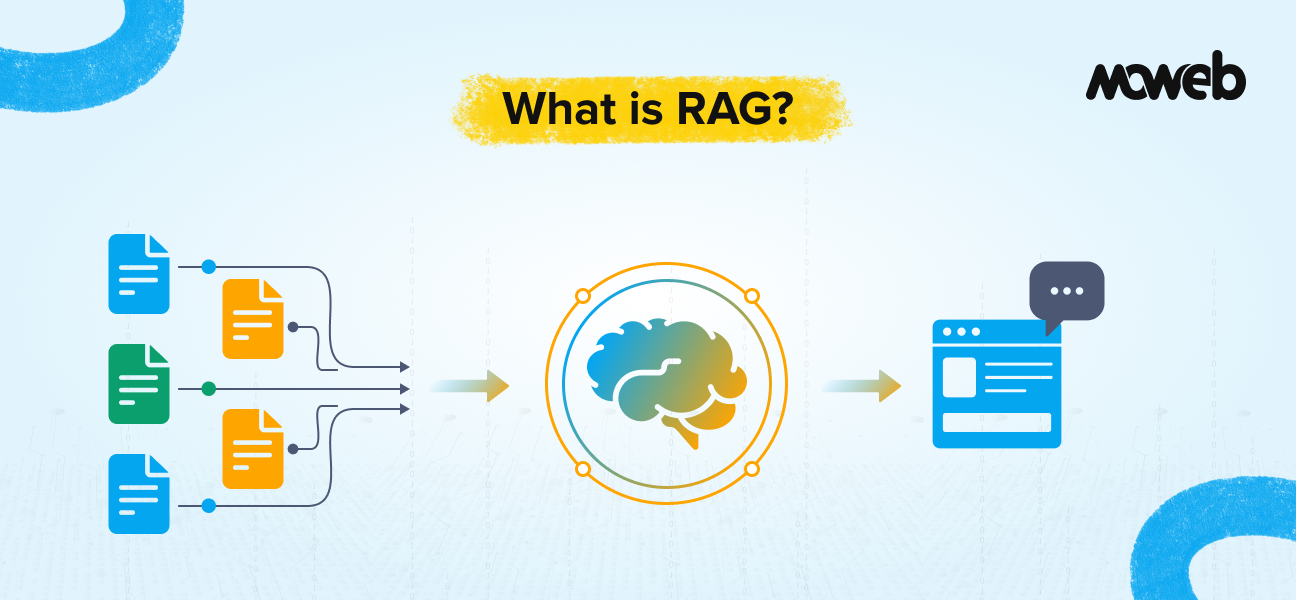

The single most widely deployed AI capability at US mid-market companies is some form of internal knowledge assistant: a system that allows employees to ask questions in natural language and receive accurate, sourced answers from the company’s internal documents, policies, procedures, and knowledge base.

The operational value is straightforward. Most knowledge workers spend between 20% and 35% of their working week searching for information – finding the right policy, locating the correct procedure, tracking down a previous decision or document. A well-built RAG-based knowledge assistant compresses this search time significantly. The productivity gains are immediate, visible, and easy to measure.

The critical success factor in this use case is that the knowledge corpus – the documents the AI system answers from – must be accurate, accessible, and maintained. Companies that deploy a knowledge assistant on top of an outdated or inconsistent document library quickly discover that the AI is amplifying the problem rather than solving it. Data quality work before deployment is not optional.
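To make the dependence on corpus quality concrete, here is a minimal sketch of the retrieval step in such an assistant. It is an illustration under stated assumptions, not a production design: word overlap stands in for an embedding model, and the `Document` fields, the `max_age_days` cutoff, and the sample file names are all hypothetical.

```python
import re
from dataclasses import dataclass
from datetime import date


@dataclass
class Document:
    source: str          # where the passage came from, shown alongside the answer
    text: str
    last_reviewed: date  # used to keep stale content out of answers


def tokenize(text: str) -> set[str]:
    """Lowercase word set; a simple stand-in for a real embedding model."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, corpus: list[Document], today: date,
             max_age_days: int = 365, top_k: int = 2) -> list[Document]:
    """Return up to top_k recently reviewed documents ranked by word overlap."""
    query_terms = tokenize(query)
    scored = []
    for doc in corpus:
        if (today - doc.last_reviewed).days > max_age_days:
            continue  # skip documents nobody has reviewed recently
        overlap = len(query_terms & tokenize(doc.text))
        if overlap > 0:
            scored.append((overlap, doc))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


# Hypothetical corpus: two conflicting expense policies, one of them outdated
corpus = [
    Document("hr/expenses-2025.md", "Expense claims must be submitted within 30 days.", date(2025, 11, 1)),
    Document("hr/expenses-2019.md", "Expense claims must be submitted within 90 days.", date(2019, 3, 1)),
    Document("it/vpn.md", "Connect to the VPN before opening internal tools.", date(2025, 9, 15)),
]

hits = retrieve("When must expense claims be submitted?", corpus, today=date(2026, 1, 15))
for doc in hits:
    print(f"{doc.source}: {doc.text}")
```

Without the review-date filter, the 2019 policy would rank equally with the current one, and the assistant would have no basis for preferring the correct answer. That is the amplification problem in miniature.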

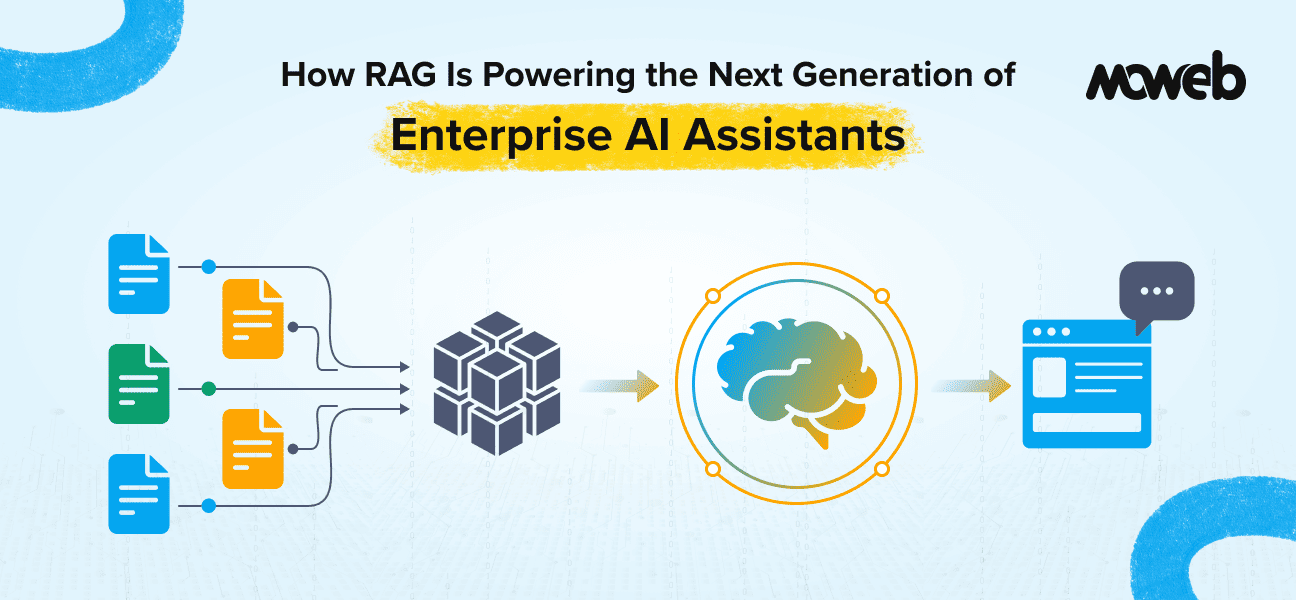

Customer Support and Service Operations

The second high-value operational area is AI-augmented customer support. US mid-market companies in B2B services, financial services, healthcare-adjacent businesses, and technology are deploying AI in their support operations to reduce average handling time, increase first-contact resolution rates, and manage volume spikes without proportional headcount increases.

The most effective deployments are not replacing support agents. They are augmenting them – providing agents with real-time context from CRM records and knowledge base retrieval, drafting suggested responses for agent review, triaging incoming tickets by urgency and category, and handling fully automated resolution of the most common, most clearly defined issue types.

The distinction between augmentation and replacement matters operationally as well as culturally. Companies that frame AI deployment as a replacement typically face resistance that slows implementation. Companies that frame it as giving agents better tools achieve faster adoption and better outcomes on both efficiency and quality metrics.
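The triage and automated-resolution steps described above can be sketched as a small routing function. This is a hedged illustration only: the keyword sets, category names, and route labels are hypothetical, and a real deployment would more likely use a trained classifier or a language model than keyword rules.

```python
import re

# Hypothetical rules; real systems would learn these from labelled tickets
CATEGORY_KEYWORDS = {
    "billing": {"invoice", "charge", "refund"},
    "access": {"password", "login", "locked"},
}
URGENT_KEYWORDS = {"outage", "down", "urgent"}
AUTO_RESOLVABLE = {"access"}  # clearly defined issue types safe to automate


def triage(ticket_text: str) -> dict:
    """Assign a category, an urgency flag, and a route to a support ticket."""
    words = set(re.findall(r"[a-z]+", ticket_text.lower()))
    category = next(
        (name for name, keywords in CATEGORY_KEYWORDS.items() if words & keywords),
        "general",
    )
    urgent = bool(words & URGENT_KEYWORDS)
    if urgent:
        route = "agent_priority"   # urgent tickets always go to a person
    elif category in AUTO_RESOLVABLE:
        route = "auto_resolve"
    else:
        route = "agent_queue"      # agent handles it, with AI-drafted context
    return {"category": category, "urgent": urgent, "route": route}


print(triage("I forgot my password and need a reset"))
print(triage("Urgent: our invoice portal is down"))
```

Note the ordering of the checks: urgency overrides automation, so only routine, well-understood tickets are resolved without an agent.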

Finance, HR, and Supply Chain Process Automation

The third area is back-office process automation: using AI agents and intelligent workflows to handle the repetitive, rule-governed tasks that consume significant time in finance, HR, and supply chain operations without requiring genuine human judgment for most cases.

Common examples in the US mid-market include: automated invoice processing and exception flagging, AI-assisted contract review for standard clause identification, automated payroll query handling and benefits information retrieval, demand forecasting in procurement and supply chain, and automated onboarding document processing and routing.

These use cases share a common characteristic: they are multi-step processes that previously required human coordination but follow defined logic that AI can learn and execute reliably. The ROI is measured in hours per week returned to higher-value work, error rate reduction, and processing time compression.
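As a rough illustration of how "hours per week returned" translates into a payback estimate, the arithmetic can be sketched as follows. Every input below is a hypothetical placeholder rather than a benchmark; substitute your own measured figures.

```python
def payback_weeks(hours_saved_per_week: float, loaded_hourly_cost: float,
                  implementation_cost: float, weekly_run_cost: float) -> float:
    """Weeks until cumulative net savings cover the implementation cost."""
    net_weekly_saving = hours_saved_per_week * loaded_hourly_cost - weekly_run_cost
    if net_weekly_saving <= 0:
        return float("inf")  # the project never pays back at these inputs
    return implementation_cost / net_weekly_saving


# Hypothetical inputs: 40 hours/week saved at $50/hour, $500/week to run,
# $18,000 to implement -> net saving of $1,500/week
weeks = payback_weeks(hours_saved_per_week=40, loaded_hourly_cost=50,
                      implementation_cost=18_000, weekly_run_cost=500)
print(f"Payback in {weeks:.0f} weeks")
```

Including the running cost matters: a workflow that saves time but costs more to operate than the time is worth never reaches payback, however good the pilot looked.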

Pilot Purgatory: Why US Mid-Market AI Projects Stall Before Production

Understanding the failure patterns is as important as understanding the success patterns, because the same failure points appear repeatedly across US mid-market AI deployments.

Pilot purgatory is the most common failure mode. A contained AI experiment is run successfully in a controlled environment. The results are positive. Then nothing happens. The project does not scale because the conditions that made the pilot work – clean data, a dedicated team, a patient timeline – do not replicate easily across the broader organisation. According to data from Recon Analytics cited in TechRepublic’s 2026 enterprise AI adoption report, only 8.6% of companies have AI agents deployed in production. The vast majority are still in pilot or have no formalised initiative at all.

The exit from pilot purgatory requires a different kind of planning than the pilot itself: real data (not curated test data), real system integrations (not mocked connections), and real governance (not informal review). Companies that plan for production from day one of the pilot avoid the most common scaling failures.

Data quality problems surface late and disrupt timelines. The pattern is consistent: a company starts an AI project assuming its data is in better shape than it is. Three to six weeks into development, the actual data quality becomes apparent. The project either stops for data remediation or continues on inadequate data, producing a system that fails to meet quality expectations in production.

The fix is a data quality assessment before the project begins, not during it. This is not glamorous work, but it is the single highest-leverage investment a US mid-market company can make before an AI project starts.
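A first-pass assessment does not need specialised tooling. The sketch below, assuming records are available as plain dictionaries, reports missing-value rates and duplicate keys; the field names and sample invoices are hypothetical, and a real assessment would also cover freshness, format consistency, and access permissions.

```python
from collections import Counter


def assess_quality(records: list[dict], required_fields: list[str], key_field: str) -> dict:
    """Summarise missing values and duplicate keys in a list of records."""
    total = len(records)
    missing = {field: 0 for field in required_fields}
    for record in records:
        for field in required_fields:
            value = record.get(field)
            if value is None or str(value).strip() == "":
                missing[field] += 1
    key_counts = Counter(record.get(key_field) for record in records)
    duplicate_keys = sorted(k for k, n in key_counts.items() if k is not None and n > 1)
    return {
        "total_records": total,
        "missing_rate": {f: (missing[f] / total if total else 0.0) for f in required_fields},
        "duplicate_keys": duplicate_keys,
    }


# Hypothetical sample: one blank vendor, one missing amount, one duplicated ID
invoices = [
    {"invoice_id": "A-1", "vendor": "Acme", "amount": 1200.0},
    {"invoice_id": "A-2", "vendor": "", "amount": 310.5},
    {"invoice_id": "A-2", "vendor": "Globex", "amount": None},
    {"invoice_id": "A-3", "vendor": "Initech", "amount": 95.0},
]
report = assess_quality(invoices, ["invoice_id", "vendor", "amount"], "invoice_id")
print(report)
```

Running a check like this on a real extract before scoping the project turns "we think our data is fine" into numbers that can be planned around.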

Governance is treated as a post-deployment problem. US mid-market companies, unlike large enterprises, typically do not have dedicated model risk management or AI governance functions. When a deployed AI system produces an unexpected output, or when a compliance question arises about how the system is using customer data, there is often no defined owner, no escalation path, and no documented decision trail.

Building governance – named owners, defined quality thresholds, escalation procedures, audit logging – into the system design rather than retrofitting it after deployment is the difference between a system that survives its first compliance review and one that gets quietly withdrawn.
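One way to picture governance built into the design is a thin wrapper that attaches an owner, a quality threshold, and an audit trail to every output. The sketch below is an assumption-laden illustration: the class, its field names, the confidence score, and the example contact address are all hypothetical, and a real system would write to durable, access-controlled storage rather than an in-memory list.

```python
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class GovernedSystem:
    name: str
    owner: str                # named person accountable for the system
    escalation_contact: str   # who is notified when the threshold is breached
    min_confidence: float     # quality threshold below which outputs are escalated
    audit_log: list[str] = field(default_factory=list)

    def record(self, request_id: str, output: str, confidence: float) -> str:
        """Log every decision and return 'released' or 'escalated'."""
        decision = "released" if confidence >= self.min_confidence else "escalated"
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "system": self.name,
            "owner": self.owner,
            "request_id": request_id,
            "output": output,
            "confidence": confidence,
            "decision": decision,
            "notified": self.escalation_contact if decision == "escalated" else None,
        }
        self.audit_log.append(json.dumps(entry))  # one JSON line per decision
        return decision


system = GovernedSystem("invoice-triage", "finance-ops-lead", "compliance@example.com", 0.8)
print(system.record("req-001", "approve", 0.93))
print(system.record("req-002", "approve", 0.55))
```

When a compliance question arrives, the log answers the three questions that are otherwise hard to reconstruct: who owns the system, what it decided, and who was told when it fell below its threshold.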

What US Mid-Market AI Leaders Are Doing Differently

The companies in the US mid-market that are producing consistent operational value from AI share a pattern of behaviours that distinguishes them from the majority.

They define success criteria before they build. The question is not “can we build a chatbot?” but “can we reduce average handling time by 30% on our top 10 issue categories within 90 days?” Specific, measurable, time-bound criteria make the decision to proceed to production straightforward rather than political.

They treat their first AI deployment as infrastructure, not a one-off project. The data pipelines, access controls, monitoring frameworks, and governance structures built for the first deployment become the foundation for the second and third. Each subsequent deployment is faster and cheaper because the infrastructure is already in place.

They involve their compliance and legal teams early. For US mid-market companies in regulated sectors (financial services, healthcare-adjacent businesses, insurance), involving compliance before deployment rather than after it avoids the delays and rework that compliance-as-afterthought invariably produces.

They choose an implementation partner with genuine production experience, not just AI enthusiasm. The US mid-market is served by hundreds of vendors who can build an impressive demo. Fewer have the experience to build a system that performs reliably under real production conditions, survives a compliance review, and can be operated and maintained by an internal team after the engagement ends.
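The first of these behaviours, defining success criteria before building, lends itself to a simple check that removes ambiguity from the go/no-go decision. This is a minimal sketch; the class name, the handling-time metric, and the sample measurements are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SuccessCriterion:
    metric: str
    baseline: float           # value measured before the project started
    target_reduction: float   # e.g. 0.30 for a 30% reduction
    deadline_days: int

    def is_met(self, current: float, days_elapsed: int) -> bool:
        """True if the reduction target was reached within the deadline."""
        if self.baseline <= 0:
            raise ValueError("baseline must be positive")
        reduction = (self.baseline - current) / self.baseline
        return days_elapsed <= self.deadline_days and reduction >= self.target_reduction


# Hypothetical pilot: handling time measured at 20 minutes before the project
criterion = SuccessCriterion("average handling time (minutes)", baseline=20.0,
                             target_reduction=0.30, deadline_days=90)
print(criterion.is_met(current=13.0, days_elapsed=85))   # 35% reduction in time
print(criterion.is_met(current=15.0, days_elapsed=85))   # only a 25% reduction
```

Writing the criterion down in this form before the pilot starts also forces a baseline measurement, which many stalled pilots never took.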

What American Buyers Should Ask an AI Implementation Partner

If you are a US mid-market company evaluating an AI implementation partner for an operations project, these are the questions that separate vendors with genuine production experience from those with impressive slides:

- Can you describe a production deployment – not a pilot – at a company of similar size and sector? What were the accuracy metrics at launch, and what are they now?

- How do you handle data quality issues discovered during the project? What is your process, and what does it cost?

- What does your governance framework look like? Specifically, how do you implement audit logging, access controls, and model monitoring for a production system?

- Who from your team will be on this project day-to-day, and can we speak with them before we sign?

- What does the transition look like at engagement end? Can our team operate and maintain the system independently?

A vendor with genuine production experience will answer all five questions specifically and confidently. One without it will deflect, generalise, or redirect to the demo.

Moweb has delivered AI implementations for enterprises and mid-market companies across the USA, UK, India, and East Africa. Our US office is based in Secaucus, New Jersey, and our team works across Eastern, Central, and Pacific time zones. If you are a US mid-market company planning an AI operations project and want an honest conversation about what it takes to do it right, schedule a conversation with our team.

Frequently Asked Questions About US Mid-Market AI Adoption

What percentage of US mid-market companies are using AI in operations in 2026? Adoption rates vary significantly by how “use” is defined and by company size within the mid-market. Across US enterprises broadly, 64% report actively using AI in operations according to NVIDIA’s 2026 State of AI report. However, production deployment of AI systems that materially change operational outcomes is considerably less common – Recon Analytics data from early 2026 shows only 8.6% of companies have AI agents deployed in production, while the majority are still in pilot or assessment stages.

What is the ROI of AI in operations for US mid-market companies? ROI varies widely by use case and quality of implementation. Deloitte’s 2026 AI report found that two-thirds of organisations report improved productivity and efficiency from AI adoption. A Forrester Total Economic Impact study commissioned by WRITER found that enterprises actively using its platform saw an average 333% ROI with a payback period of six months. However, the WRITER Enterprise AI survey found that nearly half of organisations (48%) call their AI adoption a massive disappointment – highlighting the gap between well-executed and poorly executed deployments. ROI is heavily dependent on use case definition, data quality, and implementation rigour, not on the AI technology itself.

What are the most common AI use cases in US mid-market operations? The highest-adoption operational use cases in the US mid-market are internal knowledge management and productivity (RAG-based assistants for information retrieval), customer support augmentation (AI triage, response drafting, automated resolution of common issues), and back-office process automation in finance, HR, and supply chain. These use cases share common characteristics: they have clear, measurable success criteria; they operate on data that already exists within the organisation; and they augment human workers rather than replacing them wholesale.

How long does it take a US mid-market company to see ROI from an AI operations project? For well-scoped, well-executed projects, initial ROI is typically visible within the first quarter of production deployment. The caveat is “well-scoped” and “well-executed” – projects that start with vague use cases, inadequate data, or no governance framework frequently do not reach a production state where ROI can be measured. The path from project start to production value typically runs 12 to 20 weeks for a focused first deployment with a qualified implementation partner.

What is the biggest mistake US mid-market companies make with AI? Pilot purgatory – running AI experiments that never make it to production because they were not designed to scale. The second most common mistake is starting AI projects without an honest assessment of data quality. Companies consistently overestimate how clean and accessible their data is, and this gap surfaces as timeline and cost overruns mid-project. The third most common mistake is treating governance as a post-deployment problem, which creates compliance exposure and erodes organisational trust in AI when issues arise.

Do US mid-market companies need a US-based AI implementation partner? Not necessarily, but there are practical considerations. Time zone alignment matters for communication-intensive early-stage engagements. Understanding of the US regulatory context (HIPAA, SOC 2, CCPA, sector-specific requirements) is necessary for regulated use cases. And for engagements that involve onsite workshops or stakeholder alignment sessions, geographic proximity matters. The best outcome is typically an implementation partner with genuine US market presence and understanding, combined with the engineering depth that offshore delivery enables at lower cost.

Conclusion: The US Mid-Market AI Window Is Open – but Not Indefinitely

The data from 2026 makes one thing clear: US mid-market companies that operationalise AI effectively are building competitive advantages that compound over time. The gap between AI leaders and AI laggards in the mid-market is real, measurable, and widening.

The window to establish that advantage is open right now. The companies that will look back on 2026 as the year they got serious about AI operations are the ones making deliberate, well-governed investments today rather than waiting for the technology or the market to decide for them.

The path is not complicated: a clearly defined use case, honest data quality assessment, a qualified implementation partner with production experience, and governance built in from the start. Every US mid-market company that follows that path has a credible route to operational AI value within a single financial quarter.

Moweb’s AI Strategy & Consulting team works with US mid-market companies to define that path, assess AI readiness, and deliver production systems that create measurable operational value. Our US presence in New Jersey means we work in your time zones and understand your regulatory context. Start the conversation here.
