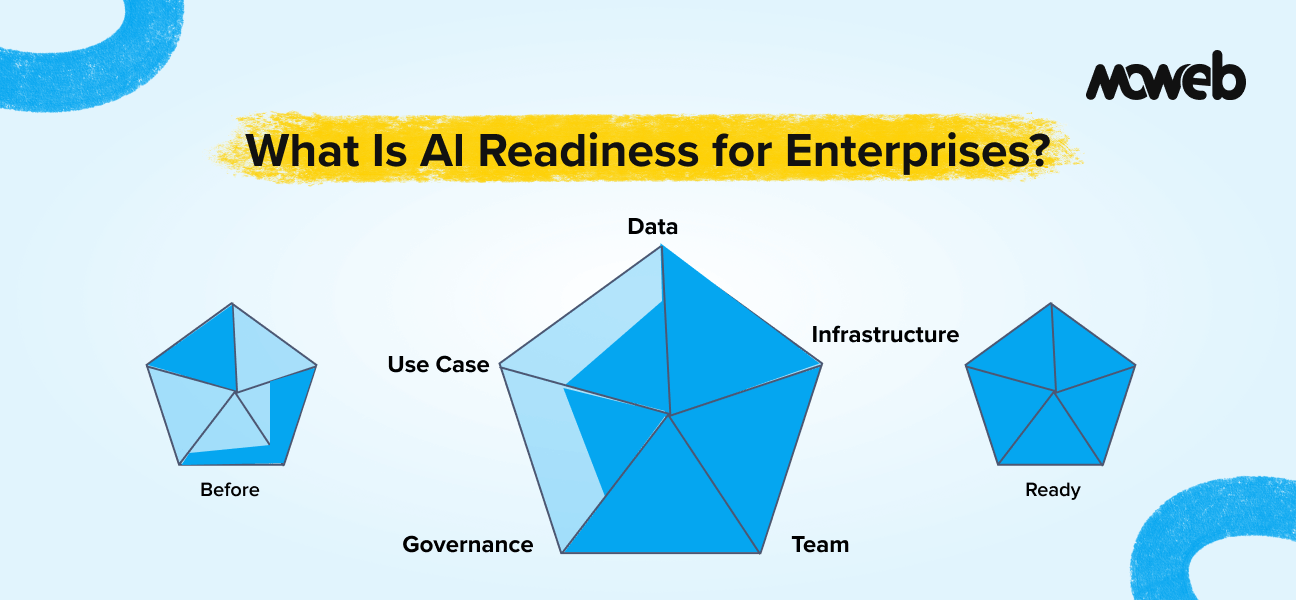

What is AI readiness for enterprises? AI readiness for enterprises is the degree to which an organisation has the data quality, technology infrastructure, team capability, governance frameworks, and use case clarity required to implement and sustain AI systems that produce reliable, accurate, and compliant outputs in a production environment. An enterprise that is AI-ready is not necessarily one that has already deployed AI – it is one whose current state across these five dimensions means that an AI project is unlikely to fail due to avoidable foundational gaps.

Why does AI readiness matter? Most enterprise AI failures are not caused by limitations of the AI technology itself. They are caused by foundational gaps that existed before the project started: data that was less clean or accessible than assumed, use cases too vague to build and evaluate against, governance frameworks absent when compliance questions arose, or teams without the capability to oversee and maintain the system after deployment. AI readiness assessment is the process of identifying and addressing those gaps before they become the reason a project fails.

Every enterprise AI project starts with optimism. A use case looks promising, a vendor demo is compelling, and a board approves the budget. What separates the minority that succeeds from the 80% that does not is rarely the AI model. It is whether the organisation was genuinely ready before the project began.

There is also a familiar failure story, and it accounts for far too many enterprise AI projects. The use case starts vague and gets vaguer during the project. The data turns out to be less clean than anyone admitted. The vendor builds something that works in demos but degrades in production. Governance questions arise after launch and create compliance anxiety. The system gets quietly shelved six months later.

The difference between these two outcomes is not the technology. In 2026, the underlying AI, including large language models, retrieval-augmented generation (RAG) pipelines, and agentic AI workflows, is mature enough to support a wide range of enterprise use cases reliably. The difference is almost always organisational: whether the organisation had the data quality, team capability, governance, and use case clarity in place before the first line of code was written.

AI readiness is the concept that captures this difference. This guide defines what it means precisely, explains the framework for assessing it, and provides concrete examples of what ready and not-ready look like in practice.

Defining AI Readiness: What It Actually Means

AI readiness is not a binary state. An organisation is not simply “AI ready” or “not AI ready.” It is a spectrum across multiple dimensions, and an organisation can be strong on some dimensions and weak on others.

The most useful definition is this: AI readiness is the degree to which an organisation’s current state across five dimensions – data, infrastructure, team capability, governance, and use case clarity – is sufficient to implement and sustain an AI system that produces reliable, accurate, and compliant outputs without requiring fundamental remediation mid-project.

Several things in this definition are worth unpacking:

“Current state” emphasises that readiness is an assessment of where you are now, not where you aspire to be. An organisation that plans to improve its data quality next year is not currently data-ready, regardless of its intentions.

“Sufficient to implement and sustain” emphasises that readiness covers the full lifecycle, not just the build phase. An organisation that can build an AI system but cannot operate and maintain it reliably after deployment is not fully ready, even if the initial build succeeds.

“Without fundamental remediation mid-project” is the practical test. The question is not whether everything is perfect – it rarely is – but whether any gaps are serious enough to require a project to stop and fix foundational issues before continuing. Mid-project remediation is significantly more expensive and disruptive than pre-project remediation.

One more critical framing: AI readiness is always use-case-specific, not a global organisational attribute. The data required for a customer support AI assistant is different from the data required for a predictive maintenance model. Assessing readiness in the abstract produces findings that cannot be acted on.

Why AI Readiness Is Not the Same as Digital Maturity

A common misconception is that digital maturity and AI readiness are the same thing. An organisation that has successfully digitised its operations, moved to the cloud, and adopted modern SaaS tools is often assumed to be ready for AI. This is sometimes true but often not.

Digital maturity describes how effectively an organisation uses existing digital tools. AI readiness describes whether the organisation can introduce a new category of system – one that learns from data, produces probabilistic outputs, and requires a different kind of oversight than deterministic software.

An organisation can be highly digitally mature and still have significant AI readiness gaps. A company that runs all of its operations on well-configured cloud software may still have data scattered across systems in incompatible formats, no internal team with AI engineering or data science skills, no governance policy for AI model accountability, and no clearly defined AI use case beyond “we should do something with AI.”

Conversely, an organisation can have relatively modest digital infrastructure but strong AI readiness on the dimensions that matter most for its first use case: clean, accessible data, a clear problem definition, and a willing team.

This distinction matters for budget allocation. Investments in digital transformation, cloud migration, SaaS consolidation, and ERP upgrades do not automatically move the needle on AI readiness. Organisations that conflate the two tend to underinvest in the dimensions where AI readiness gaps are most likely to cause project failure: data quality and governance.

The Five Dimensions of AI Readiness

The AI readiness framework that produces the most actionable assessments in enterprise contexts covers five dimensions. Each dimension has a different weight depending on the specific AI use case, but all five need to be at least minimally sufficient for any AI project to succeed.

Dimension 1: Data Readiness

Data readiness is consistently the dimension where enterprises most overestimate their current state. It is also the dimension that causes the most mid-project disruptions when the gap is not identified upfront.

Data readiness covers four sub-dimensions: availability (does the data that the AI system needs actually exist and can it be accessed?), quality (is it clean, consistent, complete, and representative enough to train or evaluate an AI system?), volume (is there enough of it for the intended AI approach?), and governance (do you know what data you can legally use and have you classified its sensitivity?).

In 2026, a fifth sub-dimension is increasingly critical for organisations deploying generative AI or RAG-based systems: lineage and auditability, the ability to trace which data sources contributed to which model outputs. Regulatory frameworks, including the EU AI Act, now require this for high-risk AI deployments.
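As a rough illustration of what lineage and auditability mean for a RAG-based system, the sketch below records which source documents were retrieved for each generated answer and supports lookup in both directions. The class and field names (`LineageLog`, `output_id`, `source_ids`) and the sample identifiers are hypothetical, and a real deployment would persist this log rather than hold it in memory.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class LineageLog:
    """In-memory record of which sources contributed to which outputs (illustrative only)."""
    sources_by_output: dict = field(default_factory=dict)
    outputs_by_source: dict = field(default_factory=lambda: defaultdict(set))

    def record(self, output_id: str, source_ids: list[str]) -> None:
        # Store the retrieved sources for one generated output.
        self.sources_by_output[output_id] = list(source_ids)
        for source_id in source_ids:
            self.outputs_by_source[source_id].add(output_id)

    def sources_for(self, output_id: str) -> list[str]:
        # Audit question: what did this answer draw on?
        return self.sources_by_output.get(output_id, [])

    def outputs_for(self, source_id: str) -> set[str]:
        # Audit question: which answers are affected if this source is wrong or withdrawn?
        return set(self.outputs_by_source.get(source_id, set()))


log = LineageLog()
log.record("answer-001", ["policy.pdf#p3", "faq.md#returns"])
log.record("answer-002", ["faq.md#returns"])
```

The reverse lookup (`outputs_for`) is the part that tends to matter in an audit: it identifies every output that relied on a source later found to be wrong.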

What ready looks like: A financial services firm wanting to build a document classification system has five years of labelled transaction documents stored in a central document management system accessible via a documented API, with data quality audits run quarterly and a clear data classification policy covering which documents contain PII.

What not ready looks like: A manufacturing company wanting to build a predictive maintenance model has sensor data distributed across three different legacy systems in incompatible formats, with no documented data dictionary, two years of known labelling errors in historical maintenance records, and no clear owner responsible for data governance.

Moweb’s Data Engineering & Foundations practice works with enterprises to assess and address data readiness gaps as a precursor to AI project development.

Dimension 2: Technology Infrastructure Readiness

Infrastructure readiness is usually the easiest dimension to address, because cloud infrastructure can be provisioned relatively quickly compared to fixing data quality or building team skills. But it is worth assessing honestly because infrastructure gaps can create project delays even when everything else is in order.

Infrastructure readiness covers compute access (can you run AI workloads?), data infrastructure (can data be moved to where it needs to be for AI processing?), integration capability (can the AI system connect to the enterprise tools it needs to work with?), and security configuration (does your infrastructure meet the security requirements for the data the AI system will handle?).

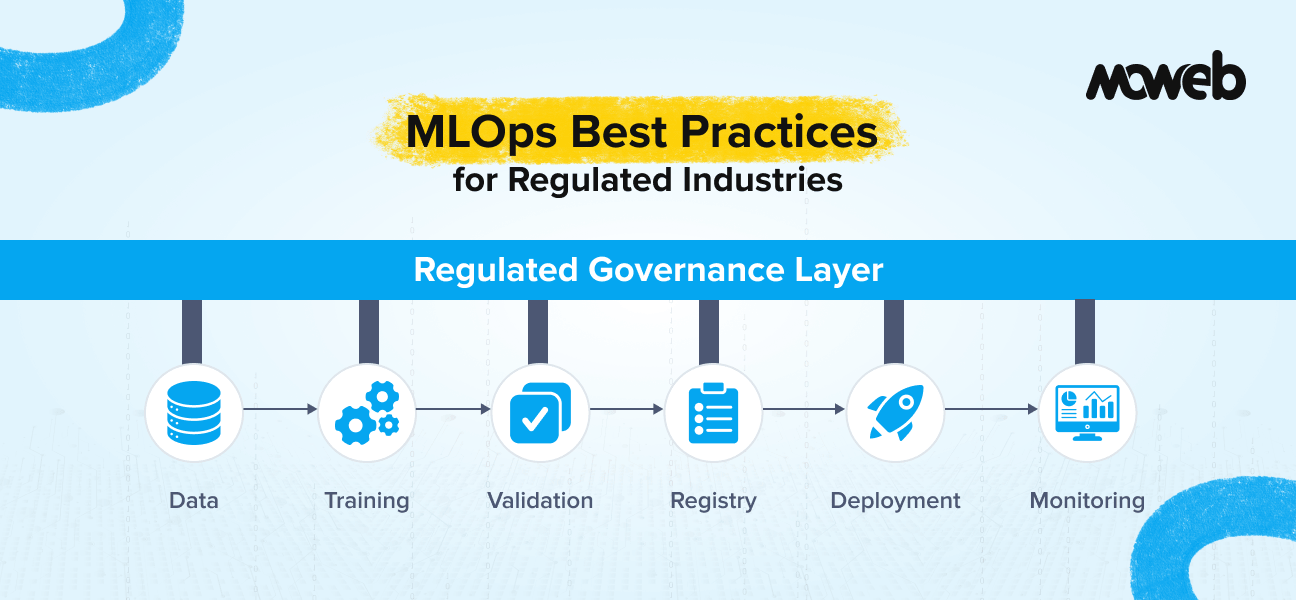

In 2026, infrastructure readiness must also account for MLOps maturity: the pipelines, monitoring tools, and deployment automation required to maintain AI systems in production. Organisations without MLOps foundations can build AI but cannot reliably sustain it, which makes production readiness a separate question from build readiness. Additionally, 85% of enterprises now use multi-cloud strategies (OvalEdge, 2025), meaning infrastructure assessment must account for cross-cloud data movement, latency, and governance across cloud providers.

What ready looks like: A retail company wanting to build a customer support AI assistant has an established AWS account with appropriate compute resources, a Snowflake data warehouse where customer and order data is already centralised, REST APIs available for its CRM and order management system, and standard security controls (encryption at rest and in transit, VPC configuration, IAM roles) already in place.

What not ready looks like: A government-adjacent organisation wanting to build an AI system for document processing has no cloud presence (all infrastructure is on-premises), no established data pipeline tooling, strict network security policies that have never been evaluated for cloud AI workloads, and no existing API layer for its document management system.

Dimension 3: Team and Skills Readiness

Team readiness is the dimension that most organisations underestimate – not because they lack talented people, but because they underestimate how different the skills required for AI systems are from those required for traditional software.

Deloitte’s 2026 State of AI in the Enterprise report identifies the AI skills gap as the single biggest barrier to AI integration, with 52% of organisations lacking sufficient AI talent. Critically, the report found that education, not role redesign, was the primary talent response, which means most organisations are upskilling existing teams rather than hiring. This makes the internal ownership question more important: who leads and governs the AI project when no dedicated AI team exists?

What ready looks like: A logistics company wanting to build a route optimisation AI system has a Head of Technology who has led two previous data projects and understands model evaluation, a data engineering team that manages the company’s existing BI infrastructure, and 0.5 FTE already allocated for ongoing system monitoring and maintenance.

What not ready looks like: A professional services firm wanting to build an AI knowledge assistant has no internal technical stakeholder above developer level, a small IT team focused entirely on infrastructure support with no data or AI experience, and no plan for who will own the system after the vendor delivers it.

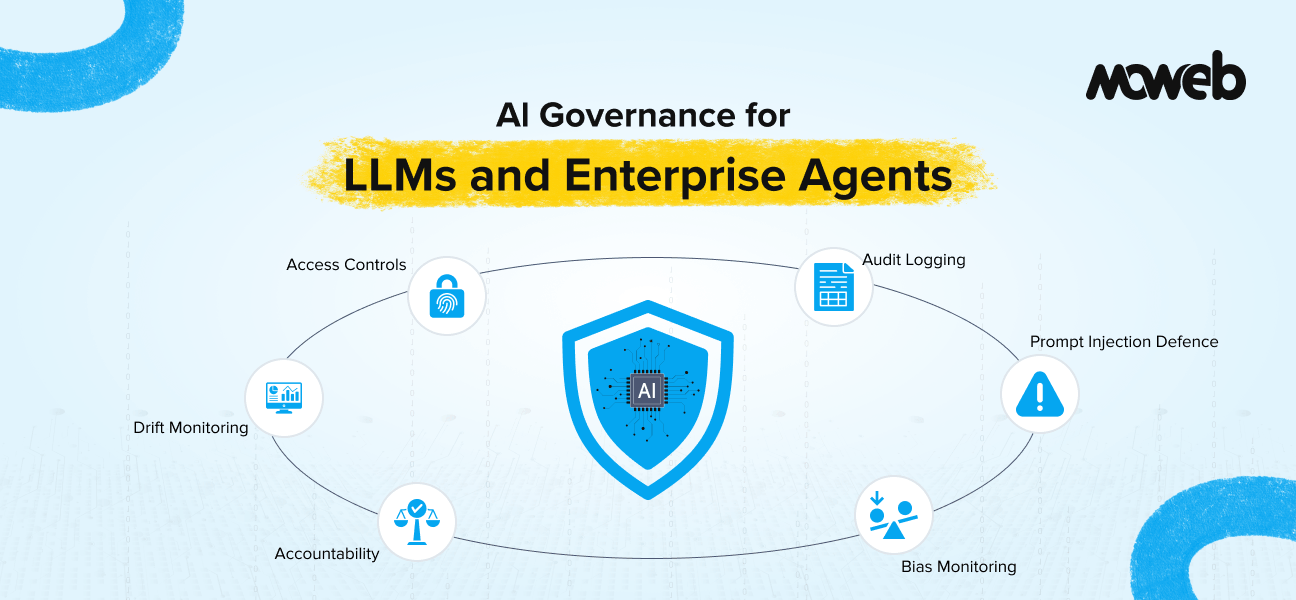

Dimension 4: Governance and Compliance Readiness

Governance readiness is the dimension most frequently underinvested in until a problem forces the issue. An AI system deployed without adequate governance is not production-ready, regardless of its technical quality, because the moment a compliance question arises, a data subject request comes in, or an incorrect high-stakes output occurs, the organisation has no framework within which to respond.

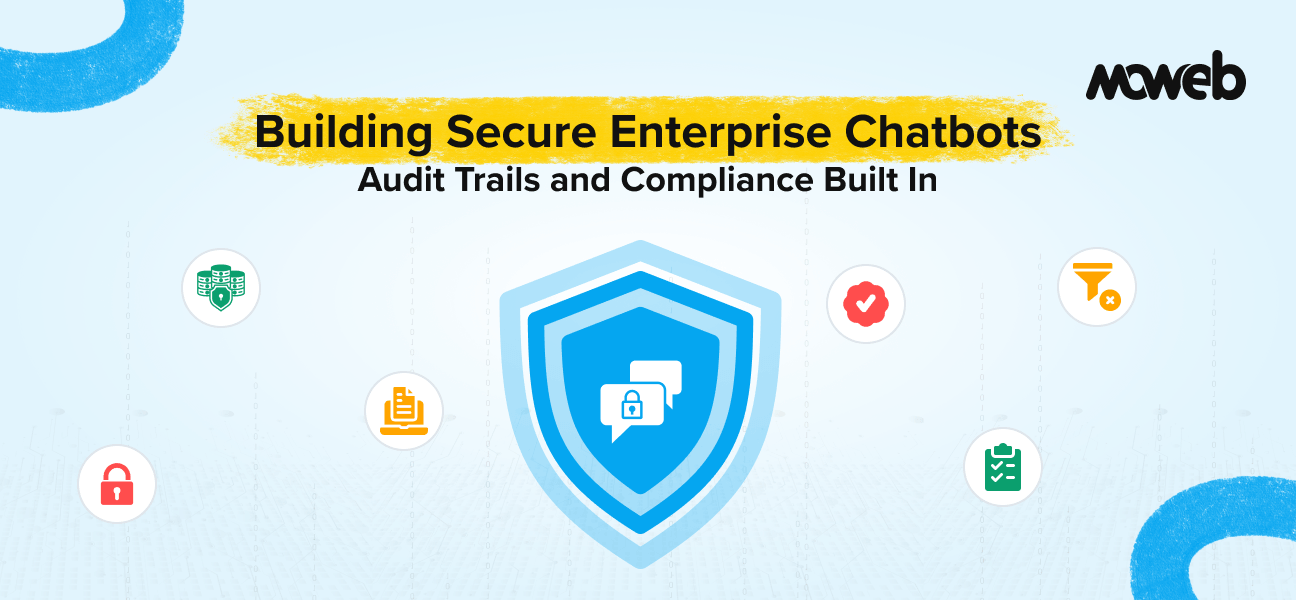

Governance readiness covers AI policy (are there defined rules for what AI can and cannot be used for, and what oversight is required?), data compliance (has the legal basis for using relevant data in an AI system been assessed?), model accountability (is it clear who is responsible when an AI system produces an incorrect or harmful output?), and vendor management (are contracts structured to protect the organisation’s data and intellectual property?).

The governance landscape has changed materially in 2025–2026. The EU AI Act is now in full enforcement for high-risk AI systems, NIST’s AI Risk Management Framework has expanded its operational guidance, and the OECD AI Policy Observatory tracks over 900 AI policy initiatives across 80+ jurisdictions. For enterprises operating across multiple markets, governance readiness now requires jurisdiction-specific assessment, not a single global policy. Regulated industries, such as financial services, healthcare, pharma, and the public sector, face higher baseline requirements, including explainability requirements, audit trail capabilities, and, in some cases, mandatory human oversight for AI-assisted decisions.

What ready looks like: A healthcare organisation wanting to deploy an AI system for patient intake documentation has reviewed HIPAA requirements with its compliance team, established a data processing agreement with the AI vendor, and defined a clinical review requirement for any AI output used in patient care. It also has an existing AI ethics policy approved by its board.

What not ready looks like: An e-commerce company wanting to deploy a personalisation AI system has never conducted a GDPR or CCPA assessment of its customer data practices, has no AI-specific policy, and its standard vendor contract template has no clauses covering AI model training on customer data or liability for AI-generated outputs.

Moweb’s AI Security & Governance practice helps enterprises operationalise AI governance frameworks as part of project delivery, not as a separate workstream.

Dimension 5: Use Case Clarity

Use case clarity is the most straightforwardly fixable AI readiness gap – it requires alignment work rather than technical investment – but it is also the gap that causes the most waste when it remains unaddressed. Vague use cases produce misaligned systems, which produce stakeholder disappointment, which produces projects that are quietly shelved.

Use case clarity covers problem definition (can you state exactly what problem the AI system will solve, for whom, and how you will measure whether it solved it?), success criteria (are there specific, measurable targets defined before development begins?), and scope boundaries (is it clear what the system will and will not do, including what happens when it encounters something outside its scope?).

The data on use case clarity is stark: 73% of AI projects that fail had no agreed definition of success before the project started (MIT Sloan, 2025). Projects with quantified success metrics defined upfront achieve a 54% success rate. Those without them achieve just 12%. Use case clarity is not a soft “nice to have”; it is the single highest-leverage readiness investment available to any organisation.

What ready looks like: An insurance company wanting to build an AI claims triage system has defined: the system will classify incoming claims by type and priority within 30 seconds of receipt, route them to the correct team queue, and flag fraud indicators for human review. Success means 92% classification accuracy on a holdout test set of 500 labelled claims and a false positive fraud flag rate below 5%. Out-of-scope situations (claims in languages other than English, claims with missing mandatory fields) will route to a manual review queue with an explanatory flag.
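Success criteria written this precisely can be checked mechanically. The sketch below is one way to do that, assuming hold-out results are available as one record per claim. The `ClaimResult` fields are hypothetical, and "false positive fraud flag rate" is interpreted here as the share of legitimate claims that were wrongly flagged, which is an assumption because the example above does not define the denominator.

```python
from dataclasses import dataclass


@dataclass
class ClaimResult:
    true_type: str
    predicted_type: str
    is_fraud: bool        # ground-truth label
    flagged_fraud: bool   # what the system flagged


def evaluate(results, min_accuracy=0.92, max_false_positive_rate=0.05):
    """Return measured metrics and whether both targets are met."""
    if not results:
        raise ValueError("no hold-out results to evaluate")

    correct = sum(r.true_type == r.predicted_type for r in results)
    accuracy = correct / len(results)

    legitimate = [r for r in results if not r.is_fraud]
    false_flags = sum(r.flagged_fraud for r in legitimate)
    # Assumption: rate = wrongly flagged legitimate claims / all legitimate claims.
    fp_rate = false_flags / len(legitimate) if legitimate else 0.0

    passed = accuracy >= min_accuracy and fp_rate < max_false_positive_rate
    return {"accuracy": accuracy, "false_positive_rate": fp_rate, "passed": passed}
```

Running `evaluate` on the 500 labelled hold-out claims gives a single pass/fail answer that business and technical stakeholders can agree on before development starts.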

What not ready looks like: A media company wanting to “use AI to improve content operations” has not defined which content operations to improve, who the primary users would be, what improvement looks like quantitatively, or what an acceptable error rate is. The use case is an aspiration rather than a specification.

AI Readiness vs. AI Maturity: An Important Distinction

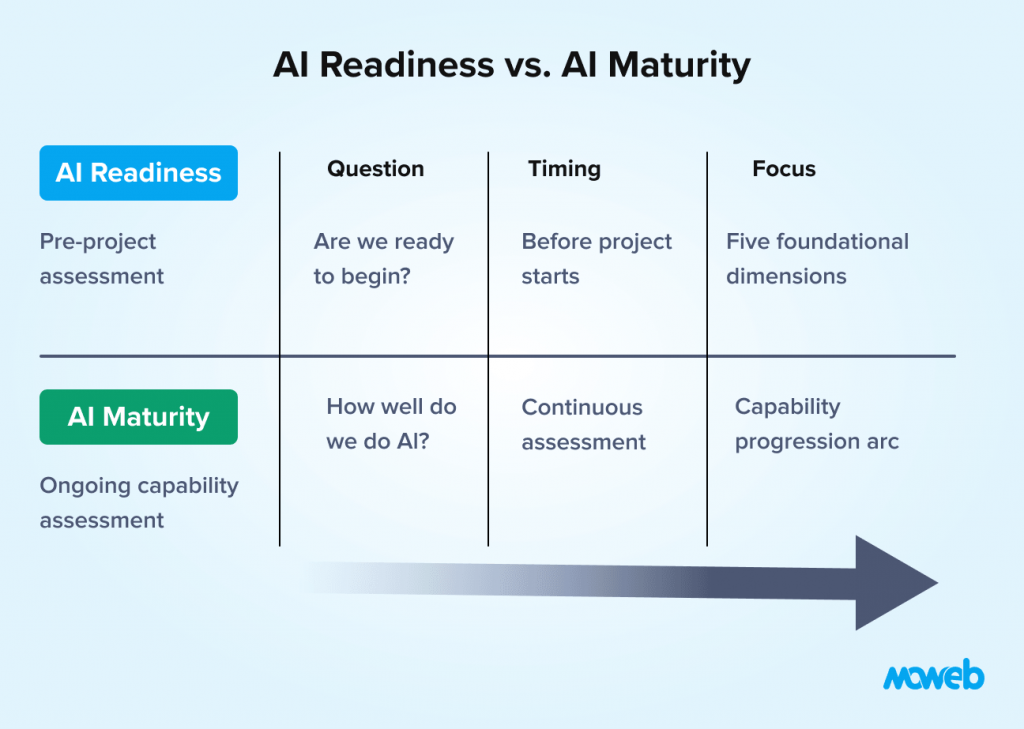

AI readiness and AI maturity are related but distinct concepts, and confusing them leads to misaligned expectations.

AI readiness is a pre-project assessment: are we ready to begin? It focuses on whether the foundational conditions for a successful first or next AI project exist.

AI maturity is an ongoing organisational capability assessment: how well do we do AI as an organisation? It covers the full spectrum from initial experimentation through to AI as a core strategic capability embedded in products and operations.

An organisation can be ready for its first AI project (AI readiness) while being at an early stage of AI maturity. Readiness is the gateway question. Maturity describes the journey after that gateway is crossed.

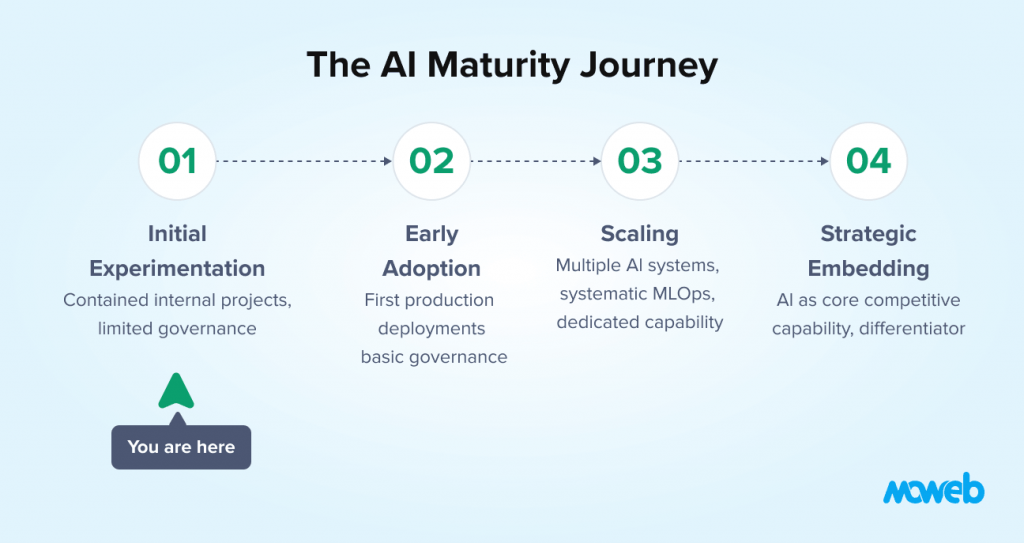

The AI maturity journey for most enterprises follows a recognisable arc: initial experimentation (one or two contained projects, primarily internal tooling), early adoption (first customer-facing or revenue-impacting deployments, basic governance in place), scaling (multiple AI systems in production, dedicated AI capability, systematic governance), and strategic embedding (AI as a core component of product and operational strategy, measurable competitive differentiation from AI capability).

Readiness assessment tells you whether you can take the next step on this journey. Maturity assessment tells you where you are on it. Notably, Deloitte’s 2026 research found that only 34% of enterprises are truly reimagining their business through AI; the majority are achieving efficiency gains but not strategic transformation. This reinforces the importance of setting realistic expectations at each maturity stage.

What Makes an Organisation Not Ready: The Five Most Common Gaps

Understanding the most common AI readiness gaps helps organisations prioritise where to focus remediation efforts.

Gap 1: Data that looks clean but is not. The most common and most underestimated gap. Data that looks complete and well-structured in a database view is often inconsistent in ways that only surface during AI-specific processing: category labels that changed meaning over time, free-text fields with wildly inconsistent formats, timestamp errors, duplicate records with conflicting values, and training data that does not reflect current operational reality. This gap almost always requires a dedicated data quality audit before AI development begins. Informatica’s 2025 CDO Insights survey found that only 12% of organisations report data of sufficient quality and accessibility for AI applications, meaning 88% face some version of this gap.
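Two of the issues named here, duplicate records with conflicting values and timestamp errors, can be surfaced with a short script. The following is a minimal sketch, assuming records are plain dictionaries with hypothetical `id` and `serviced_at` fields and ISO 8601 timestamps. A real audit would cover far more checks than this.

```python
from collections import defaultdict
from datetime import datetime


def audit(records, key="id", timestamp_field="serviced_at"):
    """Report conflicting duplicates and unparseable timestamps."""
    by_key = defaultdict(list)
    bad_timestamps = []

    for record in records:
        by_key[record[key]].append(record)
        try:
            # A missing field yields None, which raises TypeError.
            datetime.fromisoformat(record.get(timestamp_field))
        except (TypeError, ValueError):
            bad_timestamps.append(record[key])

    # A key is "conflicting" when its copies do not all carry identical values.
    conflicting = sorted(
        k for k, copies in by_key.items()
        if any(copy != copies[0] for copy in copies[1:])
    )
    return {"conflicting_duplicates": conflicting, "bad_timestamps": bad_timestamps}


records = [
    {"id": "M-1", "serviced_at": "2024-03-01", "status": "ok"},
    {"id": "M-1", "serviced_at": "2024-03-01", "status": "failed"},
    {"id": "M-2", "serviced_at": "2024-13-40", "status": "ok"},
    {"id": "M-3", "serviced_at": "2024-05-02T10:00:00", "status": "ok"},
]
report = audit(records)
```

In this sample, `M-1` appears twice with different `status` values and `M-2` carries an impossible date, which is the kind of defect that a database view rarely reveals.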

Gap 2: Use cases defined at the wrong level of abstraction. “Improve customer experience,” “make operations more efficient,” and “use AI for better insights” are goals, not use cases. An AI system can only be built, evaluated, and trusted when the problem it solves is specific enough to write acceptance criteria for. This gap is fixed through facilitated use case definition workshops with technical and business stakeholders, not through technical work.

Gap 3: Governance that is aspirational rather than operational. Many organisations have AI or data governance policies that were written to satisfy a board or regulatory requirement and were never implemented as actual processes. An AI project needs operational governance – named owners, defined review processes, documented accountability – not a policy document on a server somewhere. This gap requires cross-functional engagement between technical, legal, compliance, and business leadership teams. In 2026, this gap has heightened urgency: the EU AI Act’s enforcement creates legal liability for organisations that cannot demonstrate operational governance of high-risk AI systems, not just paper policies.

Gap 4: No plan for the system after it is delivered. The most consistently overlooked gap in mid-sized enterprise AI planning. An AI system that is built but not monitored degrades. Models drift as data distributions change. Retrieval quality decreases as document corpora grow inconsistently. Security vulnerabilities emerge as the threat landscape evolves. An organisation that has no plan for who operates the system, how quality is monitored, and what triggers remediation is not ready to sustain an AI system, even if it is ready to build one.
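One way to make "how quality is monitored" concrete is a drift metric computed on a schedule. The sketch below uses the Population Stability Index (PSI), a common choice for comparing a feature's distribution at training time with its distribution in production. PSI is offered here as an illustration rather than as a method this guide prescribes, and the bin edges and any alerting threshold are assumptions each team would need to set for itself.

```python
import math


def psi(expected, actual, edges, eps=1e-6):
    """Population Stability Index between two samples over shared bin edges."""
    def proportions(values):
        counts = [0] * (len(edges) - 1)
        for v in values:
            for i in range(len(counts)):
                last = i == len(counts) - 1
                # Bins are [lo, hi), except the last bin, which also includes hi.
                # Values outside the outer edges are ignored.
                if edges[i] <= v < edges[i + 1] or (last and v == edges[i + 1]):
                    counts[i] += 1
                    break
        total = sum(counts)
        if total == 0:
            raise ValueError("no values fall inside the bin edges")
        # eps avoids log(0) and division by zero for empty bins.
        return [max(c / total, eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


training = [1, 2, 3, 4, 5, 6, 7, 8]
production = [5, 6, 7, 8, 5, 6, 7, 8]
score = psi(training, production, edges=[0, 4, 8])
```

A PSI of zero means the two distributions match across the chosen bins, and larger values indicate a larger shift. What counts as large enough to trigger remediation is a decision for the system's named owner.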

Gap 5: Shadow AI displacing sanctioned AI programmes. An emerging and underappreciated gap in 2025–2026: employees at over 90% of enterprises are already using personal AI tools for work tasks, while only 40% of enterprises have official LLM subscriptions (MIT NANDA, 2025). Organisations without structured AI readiness frameworks often find their AI programmes competing with ad hoc shadow AI that employees find more responsive and useful. Readiness assessment must account for this reality and channel existing employee AI adoption into governed, production-grade systems.

A Simple Framework for Using AI Readiness in Strategic Planning

AI readiness assessment is most useful not as a one-time pass/fail test but as a strategic planning tool used repeatedly as an organisation develops its AI capability.

Used well, a readiness assessment at the start of an AI initiative answers three questions:

What can we start now? Use cases where readiness is strong across all five dimensions can be initiated immediately, typically beginning with a proof of concept. For most mid-sized enterprises, there is at least one use case that fits this description.

What do we need to fix first? Use cases where readiness gaps are significant enough to require remediation before the project can start need a pre-project investment phase. Identifying this upfront prevents the more expensive scenario of discovering the gap mid-project. Research from Pertama Partners (2026) found that organisations investing in data foundations before development pay 2.8x less in remediation costs than those that skip this step.

What is premature? Use cases where multiple fundamental readiness gaps exist should be deferred until the organisation has addressed the foundational constraints. Investing in AI development before the foundations are in place reliably produces wasted budget and eroded internal confidence.

This framing – start now, fix first, defer – converts a readiness assessment from a discouraging list of gaps into an actionable sequence of investments. If you want to work through a structured assessment of your organisation’s current AI readiness across all five dimensions, our detailed AI readiness assessment checklist for mid-sized enterprises provides 40+ specific evaluation criteria across each dimension with a scoring guide and interpretation framework.
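The start now / fix first / defer triage can be sketched as a small function over per-dimension ratings for a single use case. The Ready / Partial / Not Ready scale is the one this guide uses for measuring readiness. The cut-off rules are illustrative assumptions: "Not Ready" is treated as a fundamental gap, and two or more of them are treated as "multiple".

```python
from enum import Enum

DIMENSIONS = ("data", "infrastructure", "team", "governance", "use_case")


class Rating(Enum):
    READY = "Ready"
    PARTIAL = "Partial"
    NOT_READY = "Not Ready"


def triage(ratings: dict) -> str:
    """Map per-dimension ratings for one use case to a planning bucket."""
    missing = [d for d in DIMENSIONS if d not in ratings]
    if missing:
        raise ValueError(f"unrated dimensions: {missing}")

    fundamental_gaps = sum(ratings[d] is Rating.NOT_READY for d in DIMENSIONS)
    if fundamental_gaps >= 2:      # assumption: two or more counts as "multiple"
        return "defer"
    if all(ratings[d] is Rating.READY for d in DIMENSIONS):
        return "start now"
    return "fix first"             # any Partial, or a single Not Ready


claims_triage = {d: Rating.READY for d in DIMENSIONS}
content_ops = {**claims_triage, "data": Rating.NOT_READY, "use_case": Rating.NOT_READY}
```

Because readiness is use-case-specific, the function is meant to be run once per candidate use case, so the same organisation can land in different buckets for different projects.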

Frequently Asked Questions About AI Readiness for Enterprises

What is the difference between AI readiness and digital transformation readiness? Digital transformation readiness assesses whether an organisation can adopt and use digital tools effectively. AI readiness is more specific: it assesses whether the organisation has the data quality, infrastructure, team skills, governance frameworks, and use case clarity required for AI systems specifically. An organisation can be digitally transformed and still have significant AI readiness gaps, particularly in data quality, model governance, and use case definition.

How do you measure AI readiness? AI readiness is measured through a structured assessment across five dimensions: data, infrastructure, team capability, governance, and use case clarity. Each dimension is evaluated against specific criteria rated as Ready, Partial, or Not Ready. The result is a composite picture of organisational readiness that identifies specific gaps, prioritises remediation, and provides an actionable sequence for moving forward. See our AI readiness assessment checklist for a full evaluation framework.

Can a small or mid-sized company be AI-ready? Yes. AI readiness does not require large-scale infrastructure, a dedicated AI team, or enterprise-grade data warehousing. A small company can be AI-ready for a specific, well-scoped use case if it has clean, accessible data for that use case, basic cloud infrastructure, a technically fluent internal owner, appropriate data handling practices, and a clearly defined problem to solve. AI readiness is use-case-specific, not a global organisational attribute.

What is the first step in an AI readiness assessment? The first step is defining the specific AI use case you are assessing readiness for. Readiness is always relative to a particular use case – the data required for a document classification system is different from the data required for a predictive maintenance model. Once the use case is defined, each of the five dimensions can be assessed against its specific requirements. Starting a readiness assessment without a defined use case produces generic findings that are difficult to act on.

How long does it take to become AI-ready? It depends entirely on the nature and severity of the gaps identified. Use case clarity gaps can be resolved in days to weeks through facilitated workshops. Infrastructure gaps typically take two to six weeks to resolve. Team skills gaps that require recruiting new capability take three to twelve months. Data quality gaps vary enormously: minor cleaning and formatting issues can be resolved in a few weeks; fundamental structural data quality problems can take six to eighteen months. Governance gaps typically take four to eight weeks to operationalise if leadership is aligned and engaged. Most mid-sized enterprises can reach sufficient readiness for a first contained AI use case within three to six months if gaps are addressed in parallel rather than sequentially.

What is the relationship between AI readiness and AI governance? AI governance is one of the five dimensions of AI readiness, but it is also a broader ongoing organisational capability. As an AI readiness dimension, governance covers whether the foundational policies and processes needed to deploy and operate an AI system responsibly are in place before the project begins. As an ongoing capability, AI governance covers the full lifecycle of AI systems: how use cases are approved, how models are monitored, how incidents are handled, and how accountability is maintained as AI becomes more embedded in business operations.

How does AI readiness differ for regulated industries? Regulated industries (financial services, healthcare, pharmaceuticals, government) have higher baseline requirements in the governance dimension. They need to demonstrate not just that AI governance policies exist, but that they are aligned with specific regulatory requirements (GDPR, HIPAA, FCA guidelines, NHS Digital standards, and, for any organisation deploying AI in EU markets, the EU AI Act’s risk classification and conformity assessment requirements). They also tend to face higher data compliance complexity and require stronger model explainability and audit trail capabilities. The readiness framework applies equally, but the bar for “ready” in the governance dimension is higher.

What is the difference between AI readiness and AI maturity? AI readiness is a pre-project assessment focused on whether the foundational conditions for a specific AI project exist right now. AI maturity is an ongoing organisational capability assessment covering how well the organisation does AI across its entire portfolio of initiatives. An organisation can be ready for its first AI project while still being at an early maturity stage. Improving maturity is the longer journey that begins once readiness for a first project is established.

Conclusion: Readiness Is a Choice, Not a Circumstance

No organisation becomes AI-ready by accident, and in 2026, the cost of not being ready has never been clearer. Becoming ready requires deliberate effort across five specific dimensions, honest assessment of where gaps exist, and disciplined prioritisation of what to address before starting an AI project rather than during it.

The good news is that most mid-sized enterprises are closer to AI-ready than they believe. The most common gaps are use case clarity (fixed through facilitated workshops, not technical investment), data quality for a specific contained use case (often better than feared once a specific dataset is audited rather than assessed in the abstract), and governance (addressable in weeks with leadership alignment). Infrastructure and team skills are often strong enough to proceed with external support.

The barriers to AI readiness are mostly organisational and leadership challenges, not technical ones. Which means they are within every organisation’s power to address.

The organisations that will generate real AI ROI in 2026 and beyond are not the ones that moved fastest. They are the ones that moved with the right foundations in place. If you would like to understand where your organisation stands across the five dimensions with the support of an experienced AI strategy team, Moweb’s AI Strategy & Consulting practice facilitates readiness assessments as the standard first step in enterprise AI engagements. Talk to us about starting yours.
