What is AI in manufacturing used for? AI in manufacturing is used across the full operational chain: predictive maintenance (forecasting equipment failures before they occur to reduce unplanned downtime), quality inspection (computer vision systems detecting defects at speeds and accuracy rates humans cannot match), demand forecasting and production scheduling (optimising inventory and capacity against real-time signals), energy optimisation (reducing per-unit energy consumption through intelligent load management), and supply chain intelligence (predicting disruptions and optimising supplier decisions). Increasingly in 2026, agentic AI systems are orchestrating these functions autonomously, scheduling maintenance, adjusting production parameters, and coordinating across the supply chain with minimal human intervention. The highest-ROI manufacturing AI use cases share a common characteristic: they operate on quantifiable production baselines, which means ROI is measurable, not estimated.

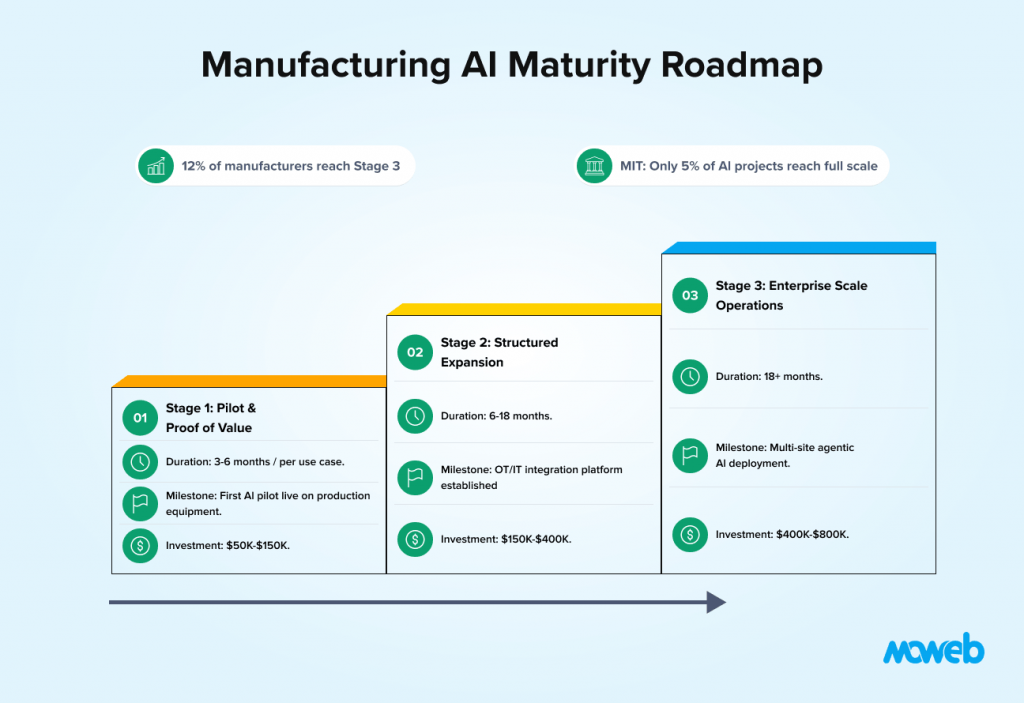

What is the average ROI of AI in manufacturing? Manufacturing delivers an average 200% ROI on AI investments, the highest of any sector, according to Capgemini’s Smart Factories Report, because factory operations provide quantifiable baselines and direct cost-to-savings mappings that knowledge work environments cannot match. Specifically, AI-driven predictive maintenance reduces equipment downtime by 45% and maintenance costs by 25%. The challenge is that only 12% of manufacturers have moved beyond single-use-case deployments to enterprise-scale AI operations, and a separate MIT study found that only 5% of AI projects (including agentic pilots) reach full scale across industries, meaning the majority of the sector’s ROI opportunity remains untapped.

Manufacturing is the sector where AI ROI is most measurable, most immediate, and most consistently underrealised.

The numbers tell the story clearly. Manufacturing AI delivers an average 200% ROI, the highest of any sector, because factory operations provide quantifiable baselines and direct cost-to-savings mappings. 77% of manufacturers now use AI, up from 70% in 2024. Yet only 12% have moved beyond single-use-case deployments to enterprise-scale AI operations. A separate MIT study found that only 5% of GenAI and agentic AI projects reach full scale across industries, a figure that underscores how difficult the pilot-to-production transition truly is.

The gap between those data points is the central challenge of manufacturing AI in 2026. The technology works. The ROI is real. But most manufacturers are stuck at the pilot stage, running one or two successful experiments that never scale to the plant floor, let alone across multiple sites. Gartner predicts that by 2030, semi-autonomous AI agents will orchestrate 10% of key production, quality, and maintenance decisions, up from just 2% today. The manufacturers who begin building the governance and infrastructure foundations now will be the ones reaching that benchmark first.

This guide is a practical roadmap for manufacturing organisations that want to move from isolated AI pilots to reliable, plant-wide AI deployment. It covers the highest-value use cases in sequence, the OT/IT integration challenges that block most scaling efforts, the governance framework that regulated and safety-critical manufacturing requires, and the realistic timeline from first pilot to enterprise-scale operations.

Why Manufacturing Is AI’s Highest-ROI Sector

The reason manufacturing outperforms every other sector on AI ROI is structural, not accidental. Three characteristics of manufacturing environments create natural conditions for high-confidence AI value measurement.

First, manufacturing has quantifiable operational baselines. A production line runs at a known throughput rate, with a known defect rate, consuming a known amount of energy per unit. When AI changes any of these numbers, the improvement is directly measurable in units, percentages, and currency. There is no ambiguity about whether the system worked. This is fundamentally different from knowledge-work AI use cases, where productivity gains are estimated from time surveys.

Second, manufacturing has continuous, sensor-rich data streams. Modern production equipment generates enormous volumes of structured data: temperature readings, vibration signatures, pressure measurements, cycle times, and energy consumption. This data already exists. The AI application layer connects to data that is already being collected, rather than having to create a data collection infrastructure from scratch.

Third, manufacturing has high-value failure modes. An unplanned line stoppage at a mid-sized manufacturer costs $50,000 to $150,000 per hour in lost production, emergency labour, and supply chain disruption. A quality defect that reaches a customer costs multiples of that in warranty claims, returns, and brand damage. The financial stakes of the problems AI solves in manufacturing are high enough that even a partial solution generates meaningful ROI.

These three characteristics explain why manufacturing AI projects, when they are properly scoped and executed, almost always demonstrate ROI. BCG research further confirms this: manufacturers investing in shop-floor digital upskilling alongside AI deployment achieve 2.3× faster AI time-to-value than those focusing on technology alone. The challenge is execution, not business case.

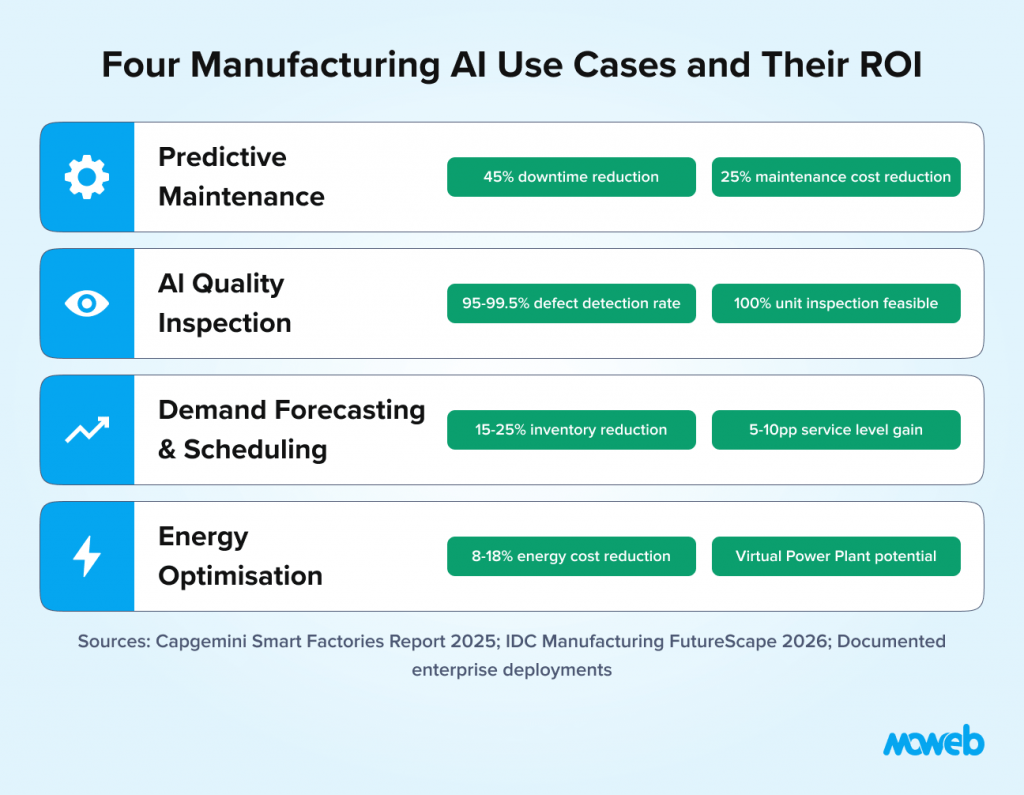

The Four Manufacturing AI Use Cases with Proven ROI

Not all manufacturing AI use cases are equal. Some are mature with extensive production evidence. Others are promising but still emerging. The following four have consistent, well-documented ROI across the manufacturing sector.

1. Predictive Maintenance

Predictive maintenance is the most widely deployed and best-evidenced manufacturing AI use case. The system continuously monitors sensor data from production equipment – vibration signatures, temperature gradients, acoustic emissions, current draw – and detects the patterns that precede specific failure modes before the failure occurs.

The business case is straightforward. AI-driven predictive maintenance reduces equipment downtime by 45% and maintenance costs by 25% in manufacturing settings. For a manufacturer with significant capital equipment and high unplanned downtime costs, these are transformative numbers.
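As a rough illustration of how these percentages translate into money, the sketch below applies the 45% and 25% reductions to a hypothetical plant. The function names, the 40 hours of unplanned downtime, the $1,000,000 maintenance budget, and the $400,000 programme cost are assumptions made up for the example, not figures from any cited report. The $50,000 hourly cost is the low end of the range quoted earlier.

```python
def annual_savings(downtime_hours, cost_per_hour, maintenance_spend,
                   downtime_reduction=0.45, maintenance_reduction=0.25):
    """Estimate yearly savings from predictive maintenance.

    The default reductions are the 45% / 25% figures quoted above.
    Every other input must come from the plant's own measured baseline.
    """
    avoided_downtime = downtime_hours * downtime_reduction * cost_per_hour
    avoided_maintenance = maintenance_spend * maintenance_reduction
    return avoided_downtime + avoided_maintenance


def roi_percent(annual_benefit, annual_cost):
    """Simple ROI: net benefit divided by cost, as a percentage."""
    return (annual_benefit - annual_cost) / annual_cost * 100


# Hypothetical plant: 40 h/year of unplanned downtime at $50,000/h,
# $1,000,000/year maintenance spend, $400,000/year programme cost.
savings = annual_savings(40, 50_000, 1_000_000)
roi = roi_percent(savings, 400_000)
print(f"savings: ${savings:,.0f}, ROI: {roi:.1f}%")
```

The useful part is less the arithmetic than the inputs: each argument is a number the plant already tracks, which is why the result can be audited after the fact.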

The technical requirements are accessible for most manufacturers. The sensor infrastructure is often already in place (modern PLCs and SCADA systems collect the data). The AI layer connects to the historian or time-series database, learns the normal operating signatures for each equipment class, and flags anomalous patterns for investigation. The implementation challenge is data quality and labelling – having enough historical records of past failures labelled to train the model.
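The "learn the normal signature, then flag deviations" idea can be sketched with a simple z-score check. This is a deliberately minimal stand-in, assuming a single sensor channel and a fixed threshold; production systems typically use richer models over many channels. The function names, the threshold of 3 standard deviations, and the vibration values are hypothetical.

```python
from statistics import mean, stdev


def fit_baseline(normal_readings):
    """Learn the normal operating signature as (mean, standard deviation)."""
    return mean(normal_readings), stdev(normal_readings)


def flag_anomalies(readings, baseline, threshold=3.0):
    """Return indices of readings further than `threshold` sigmas from normal."""
    mu, sigma = baseline
    return [i for i, x in enumerate(readings) if abs(x - mu) > threshold * sigma]


# Hypothetical vibration readings (mm/s RMS) from a healthy period.
healthy = [2.0, 2.1, 1.9, 2.0, 2.2, 1.8, 2.0, 2.1, 1.9, 2.0]
baseline = fit_baseline(healthy)

# New readings pulled from the historian; the third one drifts well outside normal.
latest = [2.0, 2.3, 3.1, 1.9]
print(flag_anomalies(latest, baseline))
```

A check like this needs no labelled failures, which is why it is a common starting point when failure history is thin. Labelled failure records become necessary once the goal is to predict a specific failure mode and its lead time.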

A well-implemented predictive maintenance system produces not just failure alerts but root cause indicators: which specific sensor pattern is anomalous, which component is likely responsible, and how much lead time is available before failure. This transforms maintenance from reactive (fix it after it breaks) through preventive (replace it on a schedule) to truly predictive (intervene exactly when needed, based on evidence). In 2026, leading deployments are adding prescriptive AI agents that go further: rather than only forecasting failure, they autonomously generate the work order, reserve the parts, and schedule the technician. One example is Infinite Uptime’s prescriptive maintenance agents, which have been reported to save clients over 125,000 hours of downtime.

For realistic cost expectations before committing to a manufacturing AI pilot, see our breakdown of what an AI proof of concept costs in 2026.

2. AI-Powered Quality Inspection

Computer vision-based quality inspection is the second major manufacturing AI use case and the one where AI most clearly outperforms human capability rather than augmenting it.

Human visual inspection is reliable at around 80% defect detection under optimal conditions and degrades with fatigue, lighting variation, and the monotony of high-volume inspection. Computer vision systems operating on modern inference hardware achieve 95% to 99.5% detection rates depending on defect type, run continuously without degradation, and can inspect at production line speeds that make 100% inspection economically feasible rather than a sample-only process.
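To make the difference in detection rates concrete, the sketch below estimates how many defective units escape inspection at each rate. The batch size and the 1% true defect rate are hypothetical, and the calculation assumes every unit is inspected in all three cases.

```python
def escaped_defects(units, defect_rate, detection_rate):
    """Expected number of defective units that pass inspection undetected."""
    return units * defect_rate * (1.0 - detection_rate)


batch = 100_000      # hypothetical units produced
defect_rate = 0.01   # hypothetical 1% true defect rate

for label, detection in [("human, 80%", 0.80),
                         ("vision, 95%", 0.95),
                         ("vision, 99.5%", 0.995)]:
    escapes = escaped_defects(batch, defect_rate, detection)
    print(f"{label}: about {escapes:.0f} escaped defects")
```

Under these assumptions the gap between 80% and 99.5% detection is the difference between roughly 200 and roughly 5 defective units reaching the next stage per 100,000 produced.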

The practical scope of AI quality inspection has expanded significantly since 2023. Early systems were primarily effective on surface defects with high visual contrast. Current systems handle multi-modal defect types – dimensional deviations, surface texture anomalies, assembly errors, internal voids (using X-ray or CT integration) – and can be trained from relatively small defect image libraries using synthetic data augmentation techniques.

For manufacturers in regulated industries (automotive, aerospace, medical devices, food and beverage), AI quality inspection also generates the digital quality record per unit that traceability requirements demand. Each inspection generates a timestamped, archived record of the inspection result, the confidence score, and the images inspected – a compliance artifact that paper-based inspection cannot provide. In 2026, causal AI capabilities are also being applied to quality inspection: rather than simply flagging a defect, these systems trace the anomaly back to a specific upstream production variable (a motor vibration deviation, a temperature excursion, or a material batch variance), shifting quality control from after-the-fact defect detection towards zero-defect manufacturing.

3. Demand Forecasting and Production Scheduling

Production scheduling based on accurate demand forecasts is the third high-value manufacturing AI use case. It sits at the intersection of the production floor and the supply chain, and its impact compounds across the organisation.

Traditional demand forecasting in manufacturing relied on historical averages, seasonal indices, and manual sales input. The results were consistently too slow to adapt to market changes and too aggregated to capture the SKU-level signals that production planning actually needs.

AI-driven demand forecasting integrates historical sales data, current order book, market signals, promotional calendars, and in some cases external data (weather, economic indicators, competitor activity) to produce rolling probabilistic forecasts at the SKU and location level. These forecasts feed directly into production scheduling systems, driving materials requisition, capacity planning, and workforce scheduling with a responsiveness that manual methods cannot match.
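A probabilistic forecast reports a range rather than a single number. The sketch below shows the shape of such an output using empirical deciles of past demand, which is a naive stand-in for a trained forecasting model, not a description of any vendor's method. The SKU, location, and sales figures are hypothetical.

```python
from statistics import quantiles


def quantile_forecast(history):
    """Return P10/P50/P90 for next-period demand from past observations.

    Uses the empirical deciles of the history as a naive probabilistic
    forecast; a real system would condition on orders, promotions, etc.
    """
    deciles = quantiles(history, n=10)  # nine cut points: P10 ... P90
    return {"p10": deciles[0], "p50": deciles[4], "p90": deciles[8]}


# Hypothetical weekly unit sales keyed by (SKU, location).
sales = {
    ("SKU-1001", "plant-a"): [120, 135, 128, 150, 142, 138,
                              160, 155, 149, 131, 144, 152],
}

forecasts = {key: quantile_forecast(history) for key, history in sales.items()}
for key, forecast in forecasts.items():
    print(key, forecast)
```

Scheduling systems can then plan materials against the P50 while sizing safety stock against the P90, instead of treating a single point estimate as certain.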

IDC reports that over 40% of manufacturers with a production scheduling system in place are upgrading it with AI-driven capabilities to enable autonomous scheduling processes incorporating real-time machine status, workforce availability, and supply chain variability signals. Inventory reductions of 15% to 25% and service level improvements of 5 to 10 percentage points are the typical range of outcomes in documented deployments.

For manufacturers operating in the current volatile trade environment, AI-driven demand forecasting provides a critical buffer against supply chain disruption, one of the three macro pressures (alongside workforce demographic shifts and the agent-to-AI transition) that Dataiku’s 2026 manufacturing outlook identifies as defining the sector’s competitive landscape.

4. Energy Optimisation

Energy is one of the highest controllable costs in manufacturing, and AI-driven energy optimisation is delivering measurable reductions across process industries, discrete manufacturing, and assembly operations.

The core application is AI-controlled load scheduling: shifting energy-intensive processes (furnaces, compressors, large motors, HVAC) to off-peak tariff periods without compromising production throughput. The AI system learns the production schedule, the thermal and process constraints of each system, and the energy tariff structure, then optimises the operating sequence to minimise peak demand charges and make the most of off-peak rates.
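The scheduling logic can be sketched as a greedy assignment: place each shiftable load into the cheapest hours that keep total demand under a peak limit. This is a simplified illustration under stated assumptions, not an optimal solver; it ignores thermal constraints, contiguity of run hours, and production dependencies. The tariff values, load names, and peak limit are hypothetical.

```python
def schedule_loads(tariff_by_hour, loads, peak_limit_kw):
    """Greedy off-peak scheduler.

    tariff_by_hour: price per kWh for each hour slot.
    loads: list of (name, kw, hours_needed) for shiftable equipment.
    Returns (plan, usage): hours assigned per load, and total kW per hour.
    """
    usage = [0.0] * len(tariff_by_hour)
    cheapest_first = sorted(range(len(tariff_by_hour)),
                            key=lambda h: tariff_by_hour[h])
    plan = {}
    # Place the largest loads first; they are hardest to fit under the limit.
    for name, kw, hours_needed in sorted(loads, key=lambda load: -load[1]):
        chosen = [h for h in cheapest_first
                  if usage[h] + kw <= peak_limit_kw][:hours_needed]
        if len(chosen) < hours_needed:
            raise ValueError(f"cannot fit {name} under the peak limit")
        for h in chosen:
            usage[h] += kw
        plan[name] = sorted(chosen)
    return plan, usage


def energy_cost(tariff_by_hour, usage):
    """Total cost of the schedule (one-hour slots, so kW equals kWh)."""
    return sum(price * kw for price, kw in zip(tariff_by_hour, usage))


tariff = [0.30, 0.10, 0.12, 0.25]                      # hypothetical $/kWh
loads = [("furnace", 500, 2), ("compressor", 300, 1)]  # hypothetical loads
plan, usage = schedule_loads(tariff, loads, peak_limit_kw=600)
print(plan, energy_cost(tariff, usage))
```

In this example the furnace takes the two cheapest hours, and the compressor is pushed to a more expensive hour because running it alongside the furnace would breach the peak limit. That trade-off between tariff and peak demand is the one a real optimiser resolves across hundreds of loads.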

More advanced implementations extend to per-unit energy consumption prediction: forecasting how much energy a specific product run will consume based on parameters (material composition, machine settings, ambient conditions) and identifying production runs where consumption is anomalously high, flagging equipment inefficiency or process drift before it compounds. Frontier implementations in 2026 are deploying Energy Digital Twins, plant-scale simulations that model energy flexibility in real time. When multiple factories connect these twins, they can form a Virtual Power Plant (VPP) capable of selling excess capacity back to the grid or shifting production to the cheapest energy windows, turning the factory energy system into a potential revenue source rather than purely a cost centre.

For manufacturers in energy-intensive processes (steel, cement, glass, chemicals), energy optimisation AI is a strategic priority rather than a productivity enhancement. Documented implementations are delivering 8% to 18% reductions in energy cost per unit, which at an industrial scale represents multi-million dollar annual savings.

The OT/IT Integration Challenge: Why Most Manufacturing AI Pilots Get Stuck

The most common reason manufacturing AI pilots do not scale is not the AI. It is the integration layer between operational technology (OT) and information technology (IT).

Manufacturing environments contain equipment from multiple vendors, installed across decades, running a mix of industrial control protocols (Modbus, OPC-UA, PROFINET, EtherNet/IP), most of which were never designed to expose data to an AI platform. The PLC running a 2009-vintage injection moulding machine speaks a different protocol than the SCADA system managing the production line, which in turn speaks a different language than the ERP system where production orders live.

A pilot project can work around this by building bespoke integrations for the specific equipment in its scope. Scaling across a plant or across multiple plants requires a systematic OT/IT integration architecture, not point-to-point workarounds.

Before investing in OT/IT integration architecture, a structured AI readiness assessment helps manufacturing organisations identify where their data, infrastructure, and governance gaps are most significant.

The architectural components that enable scalable manufacturing AI integration:

An industrial data platform or historian that consolidates time-series data from OT systems into a queryable data layer accessible to AI applications. OSIsoft PI System (now AVEVA PI System), Aveva Historian, and Ignition by Inductive Automation are among the most widely deployed options. In 2026, the emerging architectural standard is the Unified Namespace (UNS), a central data hub typically built on MQTT and OPC UA that allows all machines, systems, and applications to communicate through a single, real-time data fabric rather than point-to-point integrations.

Standardised data modelling using industrial standards like OPC-UA information models or ISA-95/ISA-88 that define how equipment data is structured and labelled consistently across assets and sites.

Edge computing infrastructure for AI applications that require low-latency inference at the machine level (real-time quality inspection, closed-loop process control) rather than cloud-based batch processing. IDC projects that by 2027, 40% of all operational data will be integrated across applications and platforms, autonomously driven by AI agents purpose-built for specific OT/IT data translation tasks.

A data pipeline from OT to the AI platform that handles the translation, normalisation, and routing of sensor data to the AI applications that need it, without requiring manual data extraction for each use case.
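The translation and normalisation step can be sketched as a mapping from vendor-specific tag names to one consistent record format. This is a minimal illustration, assuming a hand-maintained tag map; the tag names, the hierarchy-style topic paths, and the `Reading` type are all hypothetical and do not correspond to any particular historian or broker API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Reading:
    topic: str       # site/area/line/asset/measurement style path
    value: float
    unit: str
    timestamp: str   # ISO 8601 string, passed through unchanged


# Hypothetical map from raw source tags to (normalised topic, source unit).
TAG_MAP = {
    "PLC7.DB12.TEMP_F": ("plant1/moulding/line3/press07/temperature", "degF"),
    "SCADA/L3/VIB_RMS": ("plant1/moulding/line3/press07/vibration_rms", "mm/s"),
}


def to_celsius(value_f):
    return (value_f - 32.0) * 5.0 / 9.0


def normalise(source_tag, raw_value, timestamp):
    """Translate one raw sensor value into the shared record format."""
    topic, unit = TAG_MAP[source_tag]   # KeyError exposes unmapped tags early
    value = float(raw_value)            # sources often deliver strings
    if unit == "degF":
        value, unit = to_celsius(value), "degC"
    return Reading(topic, value, unit, timestamp)


print(normalise("PLC7.DB12.TEMP_F", "212", "2026-01-01T00:00:00Z"))
```

Once every source is mapped this way, a new AI use case subscribes to normalised topics instead of learning each vendor's naming and units, which is what removes the per-use-case extraction work.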

Building this integration architecture is the most significant engineering investment in a manufacturing AI programme and the one that most directly determines whether subsequent AI use cases can be deployed in weeks rather than months.

The Manufacturing AI Maturity Roadmap: Three Stages

As of 2026, 42% of manufacturers have deployed AI in some form, but only 12% have moved beyond single-use-case deployments to enterprise-scale AI operations. The typical manufacturer is at Stage 2 (Structured Expansion) with at least one validated AI pilot, but facing the integration and governance gaps that prevent scaling. A separate McKinsey Q4 2025 analysis found that 65% of discrete manufacturers are piloting IoT-AI integrations, reinforcing the evidence that the bottleneck is scaling, not starting.

Understanding where your organisation sits on the maturity curve shapes which investments will have the highest impact right now.

Stage 1: Pilot and Proof of Value (Duration: 3 to 6 months per use case)

The pilot stage validates that an AI application works on your specific equipment, with your specific data, in your specific operational context. A well-run manufacturing AI pilot:

- Selects one high-value use case (predictive maintenance on a critical asset class is usually the best first choice) with an existing data baseline

- Establishes a quantified baseline against which improvement will be measured (mean time between failures, defect rate, energy cost per unit)

- Runs on production data with production constraints, not cleaned data in a controlled environment

- Measures ROI over at least 90 days of operation before claiming success

- Documents the lessons from the pilot in a deployment playbook that can be used for the next asset class or site

The common failure mode at this stage: treating a positive demo as a successful pilot. A demo on curated data is not a pilot. A pilot requires production data, production conditions, and a measurement period long enough to validate that the system performs reliably rather than just impressively in a single session.
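The baseline-and-measurement-period discipline can be expressed as a small check. The sketch below is an illustration only: the function name, the return labels, and the example metrics are hypothetical, while the 90-day minimum comes from the criteria above.

```python
def pilot_verdict(baseline, observed, days_observed,
                  lower_is_better=True, min_days=90):
    """Compare a pilot metric with its pre-pilot baseline.

    Returns (status, percent_change). No verdict is given until the
    pilot has run on production data for at least `min_days`.
    """
    if days_observed < min_days:
        return ("insufficient data", None)
    change_pct = (observed - baseline) / baseline * 100
    improved = change_pct < 0 if lower_is_better else change_pct > 0
    return ("improved" if improved else "not improved", change_pct)


# Hypothetical defect rate (%): 2.0 before the pilot, 1.5 after 120 days.
print(pilot_verdict(2.0, 1.5, days_observed=120))

# Same numbers after only 30 days: too early to claim success.
print(pilot_verdict(2.0, 1.5, days_observed=30))

# Mean time between failures (hours) should go up, not down.
print(pilot_verdict(400, 520, days_observed=95, lower_is_better=False))
```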

Stage 2: Structured Expansion (Duration: 6 to 18 months)

At Stage 2, the pilot ROI has been validated and the organisation is expanding the proven use case to additional assets, lines, or sites while launching pilots for a second use case.

The critical investment at this stage is the OT/IT integration infrastructure. Without it, each expansion is a bespoke engineering project. With it, each expansion follows a repeatable deployment playbook that reduces the time and cost of each subsequent rollout.

Stage 2 also requires building the governance infrastructure: who owns each AI system, what are the quality thresholds that trigger investigation, how are model updates approved and deployed, and how is the safety case maintained for AI applications that influence equipment operation?

Bosch reports spending 30% of AI project budgets on shop-floor training and change management at this stage, a figure that surprises technology-focused teams but reflects the operational reality that a predictive maintenance system that operators do not trust will not change maintenance behaviour, regardless of how accurate its predictions are. BCG’s Smart Factory Workforce Report 2025 reinforces this: manufacturers that invest in structured shop-floor digital upskilling achieve 2.3× faster AI time-to-value than those that focus exclusively on the technology layer.

Stage 3: Enterprise-Scale Operations (Duration: 18 months and beyond)

At Stage 3, multiple AI use cases are operating reliably across multiple production lines and sites, with centralised monitoring, a shared integration platform, and a governance framework that manages the full portfolio.

The characteristics of Stage 3 manufacturing AI operations:

- A centralised AI operations centre (or AI function within operations excellence) with visibility across all deployed systems

- Standardised performance dashboards that track AI system health alongside production KPIs

- A systematic use case pipeline: a defined process for identifying, evaluating, and approving new AI use cases

- An OT/IT integration platform that new use cases can connect to without bespoke engineering for each one

- An enterprise data governance framework that manages data quality, access controls, and lineage across the AI portfolio, increasingly incorporating agentic AI workflows where AI systems autonomously coordinate across functions, with human oversight reserved for exception handling and critical approvals

IDC predicts that by the end of 2026, 45% of G2000 OEMs and aftermarket firms will use AI to connect field and engineering data, improving product and service quality, while Gartner’s 2026 Manufacturing Predicts report forecasts that by 2030, semi-autonomous AI agents will orchestrate 10% of key production, quality, and maintenance operations, up from 2% today. The manufacturers who reach Stage 3 will be positioned as the operational standard-setters in their sectors over the next five years.

Governance in Manufacturing AI: What Safety-Critical Environments Require

Manufacturing AI governance has requirements that generic enterprise AI governance frameworks do not fully address. Two manufacturing-specific governance requirements stand out.

Safety validation for AI applications in equipment control. When AI is used to control or influence equipment operation (closed-loop process control, autonomous scheduling of safety-critical equipment, AI-guided robotic systems), the safety case must be independently validated before the system operates in production. This is not a bureaucratic requirement – it is the recognition that an AI system that influences physical operations has failure modes with physical consequences. The safety validation process should document the failure modes, the consequences, the detection mechanisms, and the human override procedures for each AI-controlled process. In 2026, Gartner explicitly frames governance not as a roadblock but as an accelerator: manufacturers who institutionalise AI governance, defining levels of agency by asset class and aligning with OT security standards such as IEC 62443, move faster by reducing operational risk and audit friction.
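One way to picture "levels of agency by asset class" is as a policy table that caps what an AI system may do on each kind of equipment. The sketch below is a hypothetical illustration: the three levels, the asset classes, and their assignments are invented for the example and are not taken from Gartner or from any standard.

```python
from enum import IntEnum


class Agency(IntEnum):
    ADVISE = 1       # AI recommends, a human decides and acts
    APPROVE = 2      # AI prepares the action, a human approves it
    AUTONOMOUS = 3   # AI acts, a human can override


# Hypothetical policy: maximum agency permitted per asset class.
POLICY = {
    "hvac": Agency.AUTONOMOUS,
    "conveyor": Agency.APPROVE,
    "furnace": Agency.ADVISE,
}


def effective_agency(asset_class, requested):
    """Cap the requested agency at what the policy allows.

    Asset classes missing from the policy fall back to the most
    restrictive level, so new equipment is never autonomous by default.
    """
    allowed = POLICY.get(asset_class, Agency.ADVISE)
    return min(requested, allowed)


print(effective_agency("hvac", Agency.AUTONOMOUS).name)
print(effective_agency("furnace", Agency.AUTONOMOUS).name)
print(effective_agency("new-robot-cell", Agency.APPROVE).name)
```

Keeping the table explicit gives auditors and safety engineers a single artefact to review, and changing a level becomes a governed decision rather than a code change buried in an agent.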

Change management for operator trust. The most technically sophisticated manufacturing AI system is only as valuable as operators’ willingness to act on its outputs. An operator who does not trust the predictive maintenance alert will not investigate the flagged equipment. An operator who suspects the quality inspection system misses certain defect types will not rely on it. Building operator trust requires a structured change management programme: explaining how the system works in accessible terms, demonstrating accuracy on historical failures the operators remember, creating a feedback mechanism for operators to flag suspected misses or false alarms, and evolving the system based on that feedback.

A third governance dimension is gaining urgency in 2026: institutional knowledge capture. As a generation of senior manufacturing engineers and technicians retires (a shift that Dataiku’s 2026 outlook describes as the ‘silver tsunami’), AI systems that encode operational expertise become a strategic knowledge-retention asset, not just a productivity tool. Manufacturers designing their AI governance frameworks now should include provisions for systematic knowledge elicitation from retiring experts. For a comprehensive governance framework covering AI systems more broadly, our guide to AI governance for LLMs and enterprise agents covers the foundational controls that apply across all enterprise AI deployments.

Selecting an AI Implementation Partner for Manufacturing

Manufacturing AI implementation has requirements that differentiate it from general enterprise AI work. When evaluating implementation partners, manufacturing organisations should assess:

OT/IT integration experience. Has the vendor built integrations with industrial systems (PLCs, SCADA, historians, MES)? Do they understand the protocols (OPC-UA, Modbus, PROFINET) and the data quality challenges of time-series sensor data? A vendor with only IT-side experience will underestimate the integration complexity of the factory floor.

Safety-critical system experience. Has the vendor deployed AI in environments where the safety case must be documented and validated? Do they understand the difference between deploying AI in a back-office application and deploying it in an environment where equipment control is involved?

Domain knowledge in your manufacturing sector. The challenges of discrete automotive assembly are materially different from those of continuous process chemicals manufacturing, which are different again from food and beverage or medical device manufacturing. A vendor who understands your specific sector’s operational patterns, regulatory context, and data characteristics will scope the project more accurately and execute it more reliably.

Agentic AI readiness. As manufacturing AI matures from predictive to prescriptive to agentic architectures, partners need demonstrable experience designing multi-agent systems, defining human-in-the-loop governance boundaries, and integrating agentic workflows with existing MES and ERP platforms. Ask prospective partners for references on agentic deployments, not just predictive model implementations.

Frequently Asked Questions About AI in Manufacturing

What is the most common first AI use case for manufacturers? Predictive maintenance on critical production equipment is the most common and most consistently successful first manufacturing AI use case. It has accessible data requirements (sensor data from existing equipment), a clear ROI model (unplanned downtime cost avoided), and a contained scope that limits the OT/IT integration complexity of the first project. Quality inspection is a close second, particularly for manufacturers where defect rates are a high cost or customer satisfaction driver.

How long does it take to implement AI in a manufacturing environment? A focused predictive maintenance pilot on a defined asset class with existing sensor data typically takes 8 to 14 weeks from project start to first production alerts. Validation of ROI over a 90-day operating period follows. Expanding from a validated pilot to a second asset class or production line typically takes 4 to 8 weeks once the deployment playbook is established. A full plant-wide multi-use-case deployment programme runs 18 to 36 months. The most time-variable factor is OT/IT integration complexity.

What data do manufacturers need before starting an AI project? For predictive maintenance: at least 12 to 24 months of time-series sensor data from the equipment in scope, including records of past failure events. For quality inspection: a library of defect images representative of the defect types the system will detect (typically 500 to 2,000 images minimum per defect class, supplemented with synthetic data where labelled examples are scarce). For demand forecasting: at least 2 to 3 years of sales history at the SKU level, combined with production records and relevant external data.

What is the difference between predictive maintenance and preventive maintenance? Preventive maintenance replaces or services equipment on a fixed schedule (every 1,000 hours, quarterly, annually) regardless of actual equipment condition. It reduces unplanned failures but generates unnecessary maintenance costs when equipment is serviced before it needs it. Predictive maintenance uses sensor data and AI to forecast when specific failure modes are likely to occur and schedule maintenance interventions based on actual equipment condition rather than elapsed time. It reduces both unplanned failures and unnecessary planned maintenance, producing the compounding ROI that makes it the highest-value manufacturing AI use case.

How does AI quality inspection compare to traditional sampling-based inspection? Traditional sampling-based inspection tests a percentage of production (typically 1% to 5%) and infers population quality from the sample. It is cost-effective but allows defective units to pass between sample points. AI computer vision inspection enables 100% inspection at production line speeds, detecting defects in every unit rather than sampling. It also generates a digital quality record per unit, which is increasingly required for traceability in automotive, aerospace, medical device, and food and beverage manufacturing.

What governance is required for AI applications that influence equipment control? AI applications that influence or control equipment operation require a formal safety validation process before production deployment: documented failure mode analysis, tested override procedures, defined thresholds beyond which the AI defers to human judgment, and independent validation of the safety case by a party separate from the development team. The safety governance requirements scale with the severity of potential consequences – a quality inspection system that flags parts for human review has different governance requirements from a closed-loop process control system that adjusts equipment parameters autonomously. In 2026, the ISO/IEC 42001 AI management systems standard and the IEC 62443 OT cybersecurity framework are increasingly referenced as the foundational governance standards for manufacturing AI deployments in regulated and safety-critical environments.

What is agentic AI in manufacturing, and when should manufacturers plan for it? Agentic AI refers to AI systems that take sequences of actions autonomously to achieve defined goals (scheduling maintenance, placing materials orders, adjusting production parameters) rather than simply producing recommendations for human action. Deloitte predicts a fourfold increase in agentic AI adoption in manufacturing by 2026 (from 6% to 24% of deployments). Manufacturers at Stage 2 maturity (Structured Expansion) should begin designing their governance frameworks and OT/IT integration architecture to accommodate agentic workflows, even if full agentic deployment is 12 to 24 months away.

Conclusion: Manufacturing AI Scales When the Foundation Is Right

The manufacturers who are successfully scaling AI from pilots to plant-wide operations in 2026 share a common pattern. They invested in the OT/IT integration infrastructure before trying to scale their second use case. They built governance frameworks – safety validation, operator change management, performance monitoring – as part of their first deployment rather than retrofitting them later. They chose use cases based on data availability and baseline clarity, not on what sounded most impressive.

The result is that their second, third, and fourth AI deployments are faster, cheaper, and more reliable than their first – because each deployment builds on a foundation of shared infrastructure, shared governance, and accumulated operational knowledge.

Manufacturing AI is not a single project. It is a programme. The organisations that treat it as a programme and invest accordingly will be the operational standard-setters in their sectors by 2028.

Moweb’s AI & ML development and Data Engineering & Foundations practices work with manufacturing enterprises across the full programme: from first pilot design and OT/IT integration architecture through to plant-wide deployment and governance. Talk to us about your manufacturing AI roadmap.
